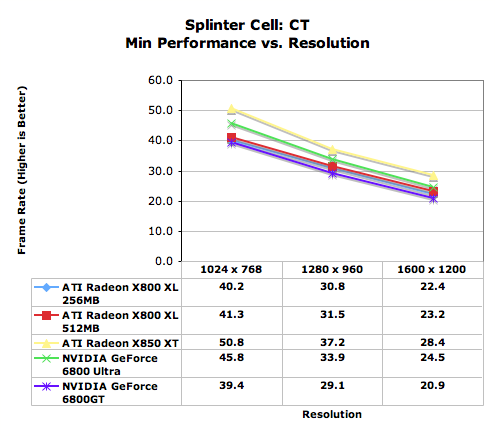

Well, I'd say it depends on how much cost they want to save, and on how the required performance (and with it the cost) scales with resolution (meaning a better GPU). Looking at the graphs posted you'd think it's a linear factor, but I'd say that depends entirely on your shaders.

What I mean is the following: if you take the X360 and make a game that runs at 60fps on a 480p display, you can definitely make it "prettier" than a game that has to run at 60fps at 720p (the added resolution "only" gives you more clarity). You spend the "extra" fillrate on producing nicer-looking pixels instead of computing many more pixels.

Now say you need 3x the performance to get the "same" quality picture and frame rate at 720p. If you only have a 2x performance GPU you can't do that. But you can make your 480p 60fps image "2x nicer" instead.
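To put rough numbers on that, here is a quick back-of-the-envelope sketch. It assumes 480p means 640x480 and 720p means 1280x720, and that rendering cost is purely fillrate-bound and linear in pixel count, which real games are not. The function names and the "budget" unit are made up for illustration.

```python
def pixels(resolution):
    """Number of pixels for a (width, height) pair."""
    width, height = resolution
    return width * height


def relative_pixel_budget(gpu_scale, resolution, base_resolution):
    """Per-pixel shading budget relative to a 1x GPU at base_resolution.

    Idealized assumption: cost scales linearly with pixel count at a
    fixed frame rate, so the budget is GPU speedup divided by pixel ratio.
    """
    return gpu_scale * pixels(base_resolution) / pixels(resolution)


RES_480P = (640, 480)    # assumed 4:3 480p
RES_720P = (1280, 720)

# 921,600 pixels versus 307,200 pixels: exactly 3x as many to shade.
pixel_ratio = pixels(RES_720P) / pixels(RES_480P)

# A 2x GPU at 480p has twice the baseline budget per pixel ("2x nicer").
budget_480p = relative_pixel_budget(2, RES_480P, RES_480P)

# The same 2x GPU at 720p has only about 0.67 of the baseline budget per pixel.
budget_720p = relative_pixel_budget(2, RES_720P, RES_480P)

print(pixel_ratio, budget_480p, round(budget_720p, 2))
```

Under those assumptions the 3x figure is simply the pixel ratio, and a 2x GPU falls short of it at 720p while doubling the per-pixel budget at 480p.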

Now imagine that the cost of GPU performance doesn't scale linearly, i.e. 2x would cost you $50 extra, but 3x $150. You could then save money by going with only 2x and focusing on 480p, while making the picture prettier.
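Spelled out with the made-up prices from above (and assuming the baseline 1x GPU counts as $0 extra), the cost per additional "x" of performance looks something like this. The names are only for illustration.

```python
# Extra cost in dollars for each GPU speedup; these are the hypothetical
# figures from the example, with the 1x baseline assumed to cost $0 extra.
extra_cost = {1: 0, 2: 50, 3: 150}


def marginal_cost(cost_by_speedup, low, high):
    """Extra dollars per additional 1x of performance between two tiers."""
    return (cost_by_speedup[high] - cost_by_speedup[low]) / (high - low)


step_1x_to_2x = marginal_cost(extra_cost, 1, 2)   # $50 for the first extra 1x
step_2x_to_3x = marginal_cost(extra_cost, 2, 3)   # $100 for the second extra 1x

# Money saved per unit by shipping the 2x part and targeting 480p.
saving = extra_cost[3] - extra_cost[2]

print(step_1x_to_2x, step_2x_to_3x, saving)
```

In this toy pricing the last step up to 3x costs twice as much as the first step, which is the whole argument for stopping at 2x.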

Of course, this assumes that you could actually use that extra power at 480p to write distinctly better shaders, ones that make the picture prettier than what you'd gain from the added clarity. For example (and this is totally made up to illustrate the point): at 720p your sub-surface scattering shader might become too expensive to use, so you get "opaque" skin, but with the added resolution you can make more (texture) detail (pores, spots, etc.) visible on the skin surface. At 480p these details could not be made out, but you have enough fillrate to run the expensive shader instead, and you can accurately see the light penetrating your character's fingers, ears, etc.

The question is: which of those would you prefer if you had to choose? (Again, I'm not saying the 360 can't do sub-surface scattering.)