CJ_75

Member

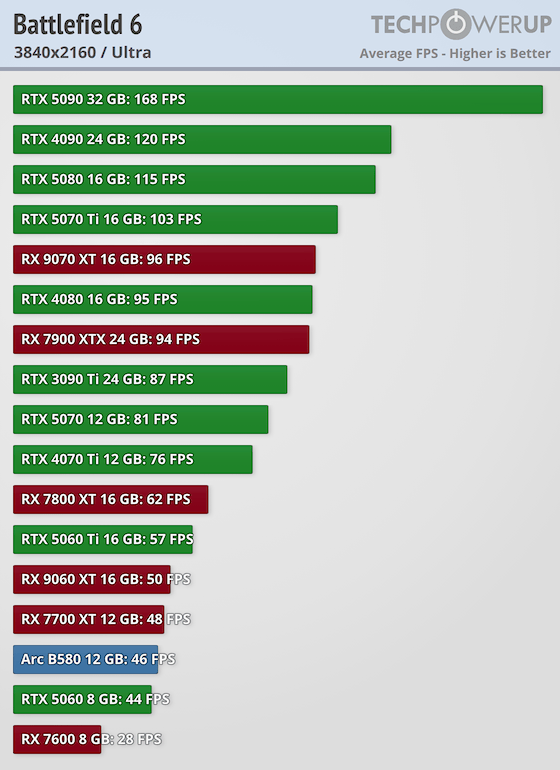

> Looks like it gives you 10 fps more than 5090. Congrats.

So that would mean the performance jump is similar to the leap from the RTX 4090 to the RTX 5090.

> So that would mean the performance jump is similar to the leap from the RTX 4090 to the RTX 5090.

Keep ignoring multi framegen…

> So that would mean the performance jump is similar to the leap from the RTX 4090 to the RTX 5090.

It's definitely much more than that. Even without MFG, there are games where the 5090 is 50% faster than the 4090. For example, Counter-Strike or RDR2 with MSAA.

> It's not a gaming GPU, so why bother comparing it to one?

Could you send me some jelli over?

> You could buy 15 PS5 Pros for that price!

And duct tape them for the ultimate power.

> What really matters is that I used more brain cells crafting my response than you did with your initial post.

And you're back to only using the one.

> Is this person a troll or something?

Dude, chill. Obviously, I was being sarcastic, since the general consensus here was that the RTX 5090 isn't a massive jump over the previous generation, and there was a lot of debate about 'fake frames.' I personally upgraded from a Gigabyte RTX 4090 to an RTX 5090 Founders Edition and I'm loving it. Frame Generation and MFG don't bother me, and input lag isn't an issue for me because I mainly play single‑player games and some racing as well.

A 10 fps gain in what? Wukong, for example? So a jump from 20 fps with path tracing to 30 fps, which is more than a 33% performance gain, is all of a sudden not a performance jump?

Because the jump from the PS5 to the PS5 Pro is way less than that, at $100 shy of double the price of the initial PS5 release, which was $400...

I am confused...
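For reference, the relative gain in the 20 fps to 30 fps example can be worked out with a quick sketch like the one below. The function name is purely illustrative, and the two frame rates are the hypothetical numbers from the post, not benchmark results.

```python
def percent_gain(old_fps: float, new_fps: float) -> float:
    """Relative frame-rate improvement, in percent, going from old_fps to new_fps."""
    return (new_fps - old_fps) / old_fps * 100


# Hypothetical numbers from the post above: 20 fps -> 30 fps.
print(percent_gain(20, 30))  # 50.0
```

So the same 10 fps counts for a lot more at a low baseline than it would at, say, 100 fps.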

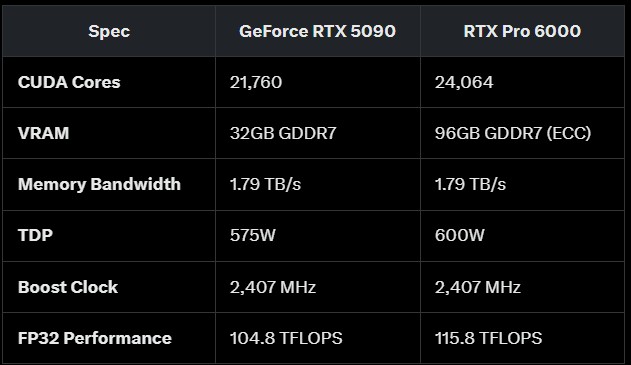

> So, the pro versions are the same as the gaming versions with more VRAM?

Seems like that's the most drastic difference between them.

> I get that it's just benchmarking for the hell of it, but it does just make me annoyed that stuff like the RTX 5090 even exists as a "gaming" card.
>
> Shit is overpriced because it includes crap you're being charged for that is useless for gaming, like 32 GB of VRAM at a time when top-end games are barely using 16 GB, and its actual utility is as a workhorse card for AI, content creation, rendering, etc. It's not even beneficial for future proofing; by the time games use that much VRAM, other specs on the card will be out of date.

> Meh. I'm waiting for the RTX 6000 PRO Super.

I'm waiting for my RTX 6010 Super that could run Crysis 4.

That's not how it works.

The 5090 has a 512-bit bus with rather insane bandwidth, which is beneficial for games today. Simply put, more processing wants more memory bandwidth.

512 bit bus means 16 memory channels though. Which in turn means that 16 memory modules are required. And since the lowest capacity GDDR7 modules are 2GB you end up with at least 32GB to "fill up" that bus.

Also, the memory modules aren't that expensive to begin with. Let's say that 8 modules add $100 to the price.
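The bus-width arithmetic above can be sketched roughly like this. It assumes each memory module takes up 32 bits of the bus (which is what 512 bits across 16 modules implies) and uses the 2GB minimum module size mentioned in the post; the function and constant names are made up for illustration.

```python
BITS_PER_MODULE = 32  # assumption: implied by 512-bit bus -> 16 modules
MIN_MODULE_GB = 2     # smallest GDDR7 module capacity, per the post


def min_vram(bus_width_bits: int,
             bits_per_module: int = BITS_PER_MODULE,
             module_gb: int = MIN_MODULE_GB) -> tuple[int, int]:
    """Return (module count, minimum VRAM in GB) needed to fill a bus of this width."""
    modules = bus_width_bits // bits_per_module
    return modules, modules * module_gb


for bus in (512, 384):
    modules, vram_gb = min_vram(bus)
    print(f"{bus}-bit bus: {modules} modules, at least {vram_gb} GB")
```

Under the same assumptions, a 384-bit bus would need 12 modules and end up with at least 24GB.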

> I understand that, but I don't think it's enough to justify it being twice as much as the 5080 for gaming. You're not getting double the gaming performance, and in most games I've seen it's a 40-50% difference or lower.
>
> If you're not doing rendering, local AI models, or content creation that gets more out of this card... you're wasting money. You'd be better off pocketing that $1000 in savings for the next generation of GPU; it will likely pay for almost all of what an RTX 6080 is going to cost.

> Isn't that a workstation GPU, not for regular PC usage?

You have always been able to play games on Nvidia's workstation GPUs.

You just need to shunt mod your 5090 to draw up to 800W and you can surpass the performance of the Pro 6000, like der8auer did.

https://www.extremetech.com/computing/nvidia-rtx-5090-shunt-mod-gets-it-to-800w-beating-rtx-6000-pro

> Yeah but the framerate would still be low

But at least it will be a true frame rate, not faked with MFG

> iTs NoT a GaMiNg GpU sO wHy BoThEr CoMpArInG iT tO oNe

Hahaha this is why I come to GAF.

> I'm, uh, quite satisfied with my 9070 XT. This seems p. overkill to me.

Same, man. Only beef is that FSR4 isn't everywhere, which sucks because it's legit. Otherwise, the card is all you really need.

Yepp, "highest end" always comes with non-linear added costs. I just pointed out that 32GB isn't that troublesome (with that said, the gaming performance would probably be very similar with a 384 bit bus).

The same goes for 5080 btw, with the 5070Ti offering much better performance/$.

> You have always been able to play games on Nvidia's workstation GPUs.
>
> I'm not saying it's particularly cost effective to do it, but that's been the case since the Quadro days.

And sometimes getting an older Quadro card can make sense for retro gaming as well.

> Idk why you would buy one unless you are a millionaire

Stop being poor.