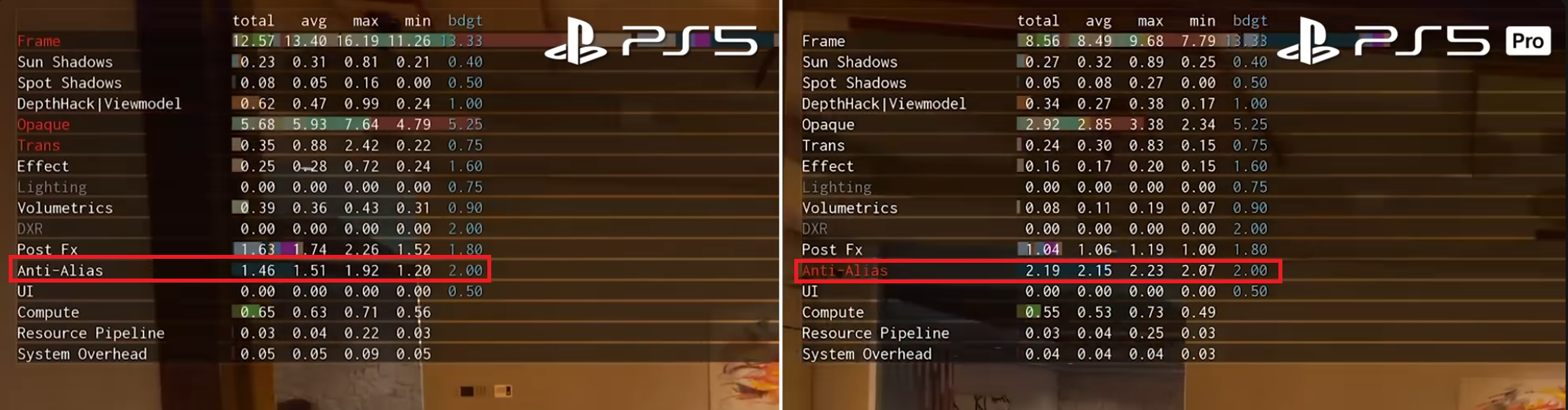

We can see the total frametime spent in the developer image I linked. No speculation, no calculations, no working back from relative framerates. TAAU is about 1.5ms and PSSR is slightly higher at about 2.1ms. That's for reconstructing a 4K image at 60fps. So PSSR is about 0.6ms heavier than TAAU, and the entire solution costs about 2.1ms in total. The comparison holds across the wide spectrum of games I linked: Call of Duty, Alan Wake 2, AC Shadows, GoW: Ragnarok, and one I didn't show a screenshot of, Control. PSSR is about 0.6-1ms heavier than the regular temporal AA/upscalers, and is around 2ms in total. Which isn't odd; DLSS4 and FSR4 are also both heavier than TAAU/FSR2.

So you are right that the computational cost for PSSR alone is under 1ms, but it's also correct to say the end-to-end solution cost is about 2ms.
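As a quick sanity check on those two figures, here is a minimal Python sketch. It uses the rounded readings quoted above and assumes a 60fps target (so a frame budget of 1000/60 ms); the variable names are just for illustration.

```python
# Rounded overlay readings quoted above, in milliseconds.
# Names are illustrative only, not taken from any tool.
TAAU_MS = 1.5
PSSR_MS = 2.1

# Assumption: a 60fps target, i.e. about 16.67 ms per frame.
FRAME_BUDGET_MS = 1000 / 60

# Extra cost of PSSR over TAAU, rounded to avoid float noise.
delta_ms = round(PSSR_MS - TAAU_MS, 2)

# Fraction of the whole frame that the PSSR pass takes up.
pssr_share = PSSR_MS / FRAME_BUDGET_MS

print(f"PSSR over TAAU: {delta_ms} ms")
print(f"PSSR share of a 60fps frame: {pssr_share:.1%}")
```

So both statements fit the same numbers: the extra cost over TAAU is well under 1ms, while the whole pass is about 2ms.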

Just out of interest, where is the accompanying info for this readout? (I have it in my head that it was from a Codemasters game readout.)

Because that table, when converted to CSV by OCR and given a closer look in a spreadsheet, suggests something completely different, especially when you stop and ask: what has anti-aliasing got to do with the FSR upscaling part, or with PSSR's model inferencing? Nothing would be the logical answer. But because the top frame time in each table looks like the sum of the sub-list values below it, we all just assume the upscaling is baked into the AA figure. What if I tell you that on the PS5 table the difference between the frame time and the sum of the sub-values is 1.15ms, a reasonable scaling time for FSR, and on the PS5 Pro table the difference is just 0.65ms, which could easily be PSSR inferencing?

Either way, I can't say why the AA value is so much bigger on PS5 Pro without knowing how many jitter accumulation samples each algorithm used. It does stand to reason, though, that an ML model working from a longer jitter-sample accumulation history will use more ROPs and compute time to do the final AA output, and obviously bigger upscale factors will increase that too.

Please see the tables in text CSV format below.

PS5,,,,,,

Component,total,avg,max,min,bdgt,ocr_confidence

Frame,12.57,13.4,16.19,11.26,13.33,98

Sun Shadows,0.23,0.31,0.81,0.21,0.4,98

Spot Shadows,0.08,0.05,0.16,0,0.5,98

DepthHack|Viewmodel,0.62,0.47,0.99,0.24,1,98

Opaque,5.68,5.93,7.64,4.79,5.25,98

Trans,0.35,0.88,2.42,0.22,0.75,98

Effect,0.25,0.28,0.72,0.24,1.6,98

Lighting,0,0,0,0,0.76,98

Volumetrics,0.39,0.36,0.43,0.31,0.9,98

DXR,0,0,0,0,2,98

Post Fx,1.63,1.74,2.26,1.52,1.8,98

Anti-Alias,1.46,1.51,1.92,1.2,2,98

UI,0,0,0,0,0.5,98

Compute,0.65,0.63,0.71,0.56,,98

Resource Pipeline,0.03,0.04,0.22,0.03,,98

System Overhead,0.05,0.05,0.09,0.05,,98

,,,,,,

Sum of listed costs,11.42,,,,,

FSR before AA,1.15,,,,,

,,,,,,

PS5 Pro,,,,,,

Component,total,avg,max,min,bdgt,ocr_confidence

Frame,8.56,8.49,9.68,7.79,13.33,98

Sun Shadows,0.27,0.32,0.89,0.25,0.4,98

Spot Shadows,0.05,0.08,0.27,0,0.5,98

DepthHack|Viewmodel,0.34,0.27,0.38,0.17,1,98

Opaque,2.92,2.85,3.38,2.34,5.25,98

Trans,0.24,0.3,0.83,0.15,0.75,98

Effect,0.16,0.17,0.2,0.15,1.6,98

Lighting,0,0,0,0,0.75,98

Volumetrics,0.08,0.11,0.19,0.07,0.9,98

DXR,0,0,0,0,2,98

Post Fx,1.04,1.06,1.19,1,1.8,98

Anti-Alias,2.19,2.15,2.23,2.07,2,98

UI,0,0,0,0,0.5,98

Compute,0.55,0.53,0.73,0.49,,98

Resource Pipeline,0.03,0.04,0.25,0.03,,98

System Overhead,0.04,0.04,0.04,0.04,0.03,98

,,,,,,

Sum of listed costs,7.91,,,,,

PSSR cost before AA?,0.65,,,,,
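For anyone who wants to check the two "before AA" figures, here is a minimal Python sketch of the subtraction. It only uses the `total` column as transcribed from the OCR above; the constant and function names (`PS5_TOTALS`, `PS5_PRO_TOTALS`, `unaccounted`) are made up for illustration, and it says nothing about what the leftover time actually is.

```python
import csv
import io
from decimal import Decimal

# "total" column copied from the OCR'd tables above (ms).
PS5_TOTALS = """Component,total
Frame,12.57
Sun Shadows,0.23
Spot Shadows,0.08
DepthHack|Viewmodel,0.62
Opaque,5.68
Trans,0.35
Effect,0.25
Lighting,0
Volumetrics,0.39
DXR,0
Post Fx,1.63
Anti-Alias,1.46
UI,0
Compute,0.65
Resource Pipeline,0.03
System Overhead,0.05
"""

PS5_PRO_TOTALS = """Component,total
Frame,8.56
Sun Shadows,0.27
Spot Shadows,0.05
DepthHack|Viewmodel,0.34
Opaque,2.92
Trans,0.24
Effect,0.16
Lighting,0
Volumetrics,0.08
DXR,0
Post Fx,1.04
Anti-Alias,2.19
UI,0
Compute,0.55
Resource Pipeline,0.03
System Overhead,0.04
"""


def unaccounted(csv_text):
    """Return (sum of listed rows, Frame total minus that sum)."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    # Decimal keeps the two-decimal readings exact (no float drift).
    frame = next(Decimal(r["total"]) for r in rows if r["Component"] == "Frame")
    listed = sum(
        (Decimal(r["total"]) for r in rows if r["Component"] != "Frame"),
        Decimal(0),
    )
    return listed, frame - listed


for name, text in (("PS5", PS5_TOTALS), ("PS5 Pro", PS5_PRO_TOTALS)):
    listed, gap = unaccounted(text)
    print(f"{name}: listed={listed} ms, frame minus listed={gap} ms")
```

This reproduces the 11.42/1.15 and 7.91/0.65 rows in the tables, so the gap itself is arithmetic; attributing it to FSR or PSSR is still my guess.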