Skifi28

Polyphony Digital is developing a new rendering system for Gran Turismo that uses neural networks to determine which objects in a scene need to be drawn, and the early results suggest it could meaningfully improve performance on PlayStation 5. The system, called "NeuralPVS", was detailed in a technical presentation at the Computer Entertainment Developers Conference (CEDEC) last year. The talk was given by two Polyphony graphics engineers: Yu Chengzhong and Hajime Uchimura.

How Rendering Works Now

Every frame Gran Turismo renders contains thousands of objects: buildings, trees, grandstands, barriers, track surfaces, and everything else that makes up a course environment. But, at any given moment, only a fraction of those objects are actually visible to the player. Some are behind the camera, some are off to the side, and some are hidden behind other objects in the scene.

Drawing all of those invisible objects would be a waste of processing power. So the game uses a process called "culling" to figure out which objects can be skipped. The better the culling, the less work the CPU and GPU have to do, which means more stable frame rates and potentially more room for visual detail.

Gran Turismo 7 currently uses a precomputed culling system. Before a course ships, Polyphony's tools render the track from thousands of camera positions along the driving surface, recording which objects are visible from each spot. Those results are stored as visibility lists (internally referred to as "vision lists") that the game looks up at runtime.

To keep the data manageable, the system clusters those thousands of sample points into a smaller set of zones using a mathematical technique called Voronoi partitioning. At runtime, the game figures out which zone the camera is in and uses that zone's visibility list to decide what to draw.
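A zone lookup of this kind can be sketched in a few lines. This is only an illustration, assuming each zone is represented by a single seed point and that the camera belongs to whichever seed is nearest (which is what a Voronoi partition amounts to). The seed coordinates, object names, and function names are made up for the example and are not from the presentation.

```python
import math

# Hypothetical zone seed points (x, y, z) in course coordinates.
ZONE_SEEDS = [
    (0.0, 0.0, 0.0),
    (100.0, 0.0, 0.0),
    (100.0, 0.0, 100.0),
]

# Hypothetical precomputed visibility list for each zone (same order as the seeds).
ZONE_VISIBLE = [
    {"grandstand_a", "tree_01"},
    {"tree_01", "barrier_07"},
    {"barrier_07", "bridge"},
]


def zone_for(camera):
    """Return the index of the zone whose seed is closest to the camera."""
    return min(range(len(ZONE_SEEDS)), key=lambda i: math.dist(camera, ZONE_SEEDS[i]))


def visible_objects(camera):
    """Look up the precomputed visibility list of the camera's zone."""
    return ZONE_VISIBLE[zone_for(camera)]


if __name__ == "__main__":
    # Two cameras 20 cm apart, on either side of the boundary between zones 0 and 1.
    print(sorted(visible_objects((49.9, 0.0, 0.0))))
    print(sorted(visible_objects((50.1, 0.0, 0.0))))
```

In this toy setup the two cameras in the usage example sit almost on top of each other but get different visibility lists, because the lookup only knows which zone the camera is in, not where it is inside that zone.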

Where the Current System Falls Short

This clustering approach works, but it has some inherent limitations.

The boundaries between zones are hard lines, which means visibility can only change in abrupt, discontinuous jumps as the camera crosses from one zone to the next. Those boundaries don't always line up neatly with the actual geometry of the course, either, which can lead to objects popping in or out at moments that don't look natural.

Neural Networks to the Rescue!

NeuralPVS replaces that zone-based lookup with a neural network that learns the relationship between a camera position and which objects should be visible. Instead of snapping to the nearest precomputed zone, the network takes the camera's exact coordinates and outputs a visibility prediction for every object in the scene.
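To make the idea concrete, here is a rough sketch of what "camera position in, per-object visibility out" could look like. Everything about it is an assumption for illustration: the one-hidden-layer shape, the layer sizes, the 0.5 threshold, and the random untrained weights are mine, not details of Polyphony's network. A real system would train the weights against the precomputed visibility data.

```python
import math
import random


def make_layer(n_in, n_out, rng):
    """Create n_out rows of n_in random weights plus a trailing zero bias (untrained)."""
    return [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] + [0.0] for _ in range(n_out)]


def forward(layer, inputs, activation):
    """Apply one fully connected layer followed by an activation function."""
    return [
        activation(sum(w * x for w, x in zip(row[:-1], inputs)) + row[-1])
        for row in layer
    ]


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


def predict_visibility(camera, hidden_layer, output_layer, threshold=0.5):
    """Map a camera position to one visibility score (0..1) and one draw flag per object."""
    hidden = forward(hidden_layer, camera, math.tanh)
    scores = forward(output_layer, hidden, sigmoid)
    return [s >= threshold for s in scores], scores


if __name__ == "__main__":
    rng = random.Random(0)
    hidden_layer = make_layer(3, 8, rng)   # 3 inputs: camera x, y, z (assumed normalized)
    output_layer = make_layer(8, 5, rng)   # 5 outputs: one per object in this toy scene
    flags, scores = predict_visibility((0.2, 0.5, 0.1), hidden_layer, output_layer)
    print(flags)
    print([round(s, 3) for s in scores])
```

The point of the sketch is the shape of the function: the scores vary smoothly with the camera coordinates, so there is no zone boundary baked into the lookup itself.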

The result is a smooth, continuous visibility field rather than a patchwork of discrete zones. Objects transition in and out of visibility gradually as the camera moves, rather than flipping on and off at arbitrary zone boundaries.

PS5 Benchmarks

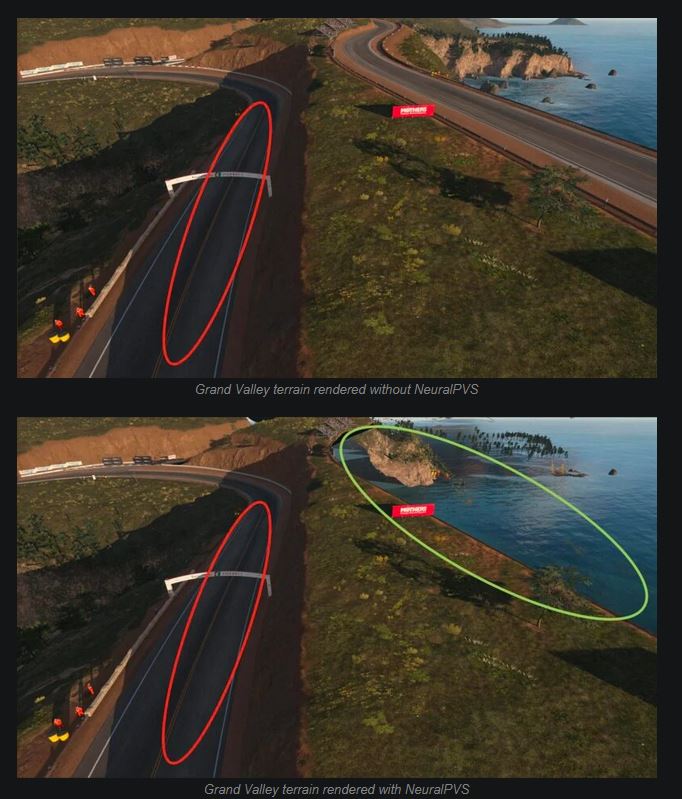

The presentation included benchmark data from PlayStation 5 running on two courses: Eiger Nordwand and Grand Valley.

On Eiger Nordwand, average CPU frame time dropped from 3.944ms to 3.758ms with NeuralPVS enabled, a saving of about 0.19ms. GPU improvements were smaller (averaging 0.026ms), which makes sense given that Eiger is a course with relatively few occluders. The gains come from the network learning to use terrain features that the existing system overlooks.

Grand Valley showed more dramatic results. CPU average dropped from 4.552ms to 4.256ms, and the CPU maximum dropped from 6.378ms to 5.849ms, a reduction of over half a millisecond. GPU load was also more stable across the course, with the maximum GPU time dropping by nearly 0.1ms.
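For a sense of scale, the CPU figures quoted above work out as follows. This is just arithmetic on the numbers in this post; the dictionary layout and function name are my own.

```python
# CPU frame times in milliseconds as quoted above: (before, after NeuralPVS).
BENCHMARKS = {
    ("Eiger Nordwand", "CPU average"): (3.944, 3.758),
    ("Grand Valley", "CPU average"): (4.552, 4.256),
    ("Grand Valley", "CPU maximum"): (6.378, 5.849),
}


def savings(before_ms, after_ms):
    """Return the absolute saving in ms and the saving as a percentage of the original time."""
    delta = before_ms - after_ms
    return delta, 100.0 * delta / before_ms


for (course, metric), (before, after) in BENCHMARKS.items():
    delta, pct = savings(before, after)
    print(f"{course} {metric}: {delta:.3f} ms saved ({pct:.1f}%)")

# Eiger Nordwand CPU average: 0.186 ms saved (4.7%)
# Grand Valley CPU average: 0.296 ms saved (6.5%)
# Grand Valley CPU maximum: 0.529 ms saved (8.3%)
```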

I think this is a pretty interesting solution to an age-old problem, and the gains seem to be there. On a 20km track like the Nürburgring, I imagine they would be even greater. As far as I'm concerned this is how AI should be used in games: to provide engineering solutions to things that can't be easily solved by hand, not to replace artists with slop. I wonder if it'll be deployed in GT7 or if we'll have to wait for the next game.
