How close are current games' graphics to reality? Scale from 1 to 100?

- Thread starter Grildon Tundy

Grildon Tundy

Member

> We're close if you don't zoom in and analyse too much.
>
> Urban environments still way off due to the complexity, but natural environments are very good looking.

Interesting. I'd completely flip that. Mirror's Edge's urban environments back in 2008 looked mind-blowing, and they've only gotten better. I think manmade structures are easier to mimic in a game due to their relatively less-complex nature. TLOU Part II's and RDR2's outdoor scenes are beautiful, but they're nowhere near real, imo. Can't think of a game where the outdoors look better.

Grildon Tundy

Member

> I'm not someone who plays for 'the graphics' but, games now are rendered better than CG in modern movies.

I dunno, man. There are lots of examples of CG in movies people didn't even realize were CG. This is an old article, so I bet there are even better and more recent examples, but if you like movie trivia, it's pretty interesting:

31 Mind-Blowingly Ordinary Scenes You Won't Believe Are CGI

These days we pretty much expect our movies to be almost entirely CGI. Minus a small scene or tw- What's that? Oh those are actually CGI also?

Kilau

Member

> People always say it's really close, but a few years later everyone agrees it really wasn't. Been like that for decades. I remember reading a review for some basketball game on PS1 back in the 90s where the reviewer stated the graphics were so realistic that some of his family members thought they were watching an actual game of basketball on TV.

I mean, can you blame them?!

majorgamer10

Banned

Mortal Kombat was using real life models on the Sega Genesis and Super Nintendo.

Phase

Member

> Framerate importance is incredibly overblown. 60fps games look MORE fake than 30-40 fps. Just like 60 fps movies look like soap opera garbage, so they stopped making them.
>
> The human eye doesn't see 60fps in reality, so when we see it in media our brain thinks "fake".
>
> Lighting and material representation, and that materials interaction with that lighting, is the holy Grail of photo realism. We're 10-15 years away.

Oh, you're one of those people who have shit eyes and can't see the difference in 30/60/144/240fps. It doesn't become more fake. It's actually the opposite. What you're actually seeing is more real, leading to you being able to tell, for example, when actors are on a fake set. If they had shows in 240fps it'd be even more apparent.

Rusty Shackelford

Member

I thought Gran Turismo 2 was the pinnacle of visuals. Man, if I could go back to 1999 with the new FM and GT7...

simpatico

Gold Member

We're up to exactly 52.968 on this scale. We moved forward on lighting in some aspects, but it's crazy how far back we've fallen in physics, compared to as far back as the early 2000s. Which really for me was the thing that made games feel alive. When the world you are playing in is touched by your actions. Now we get games with great textures and lighting, but you can hit a wicker basket with a 40mm grenade and it doesn't even move or show damage.

> I think frames per second is a huge factor that is often overlooked when talking about realism. If a game looks really good but has 30fps it will feel substantially less real than if you had 500fps. Smooth movement in addition to great graphics gets us closer to real life.

Not so sure about that. I'd say the tradeoff is far worse for little gain. If you see a 24fps video recording on TV does it look any less real? The massive amount of graphical settings you would need to lower or forgo to reach something like 500fps for such little gain trying to get realism just isn't worth it. Not even TV considers that worth it.

Grildon Tundy

Member

> I think frames per second is a huge factor that is often overlooked when talking about realism. If a game looks really good but has 30fps it will feel substantially less real than if you had 500fps. Smooth movement in addition to great graphics gets us closer to real life.

I have just the monitor for you:

A PC monitor with a 500 Hz refresh rate is coming from Asus

Upcoming 500 Hz monitor targets PC gamers with beefed-up systems, various skill levels.

arstechnica.com

Phase

Member

> I have just the monitor for you:
>
> A PC monitor with a 500 Hz refresh rate is coming from Asus
>
> Upcoming 500 Hz monitor targets PC gamers with beefed-up systems, various skill levels.
>
> arstechnica.com

TGO

Hype Train conductor. Works harder than it steams.

> I think frames per second is a huge factor that is often overlooked when talking about realism. If a game looks really good but has 30fps it will feel substantially less real than if you had 500fps. Smooth movement in addition to great graphics gets us closer to real life.

You think it's overlooked...........

iALX

Member

If you're asking strictly about how characters and environments look, we're very close to the point of realism in realtime game rendering.

However, to truly reach realism, games would not only have to look realistic, but behave realistically as well, and the latter has been stagnating for a long time.

Graphics have been on a steady rise, as can be expected -- model fidelity, texture resolution and lighting have risen to the level where 4K is needed to enjoy the detail.

Animation, on the other hand, has mostly remained where it was 5-6 years ago. There have been great outliers -- Aloy's facial animations in Horizon Forbidden West's main story and the Hellblade 2 Senua demo using Unreal's MetaHumans, for example.

Another feature not being developed as much as it should be is AI. It's not as easy or obvious a feature to sell as graphics, but with current hardware capabilities, it's a shame AI has not advanced more, on average. Having characters behave more realistically, and having smarter enemies, would add tons to the immersion and contribute to game realism.

> Current? We've reached that point years ago

I'd bang those triplets

I thought we were almost photo realistic around COD2. Obviously that was a joke. But I find that the hyperdetailed environments of today are too distracting. It's hard to spot what I want from a game perspective; it's lost in the mass of shit on screen. Then I play a retro pixel game and it's all sweet. VR is a bit different, somehow that full immersion works. But on a TV or monitor I kinda want a stylized art aesthetic these days versus photorealism. Sim games obviously an exception.

Black_Stride

do not tempt fate do not contrain Wonder Woman's thighs do not do not

I do offline rendering and realtime rendering using Unreal Engine.

Our offline renderers are in a different league even if we inject the exact same assets.

Unreal Engine's Pathtracer is very good, but I don't think you'll be seeing any games using Unreal's Pathtracer, and even then our offline renderer far surpasses it.

The "errors" are almost impossible to notice until you see the ground truth from an unbiased renderer.

If you ask someone who uses unbiased rendering, they'll tell you the image on the left is inaccurate. Maybe they'd go as far as to say it's straight up wrong, even if to the naked eye, without going too deep, it is practically a photo.

The image on the right is much much closer to reality......but still not quite there. (Yes, offline rendering can go deep on what's accurate enough.)

Has any game come even close to the image on the left, let alone the image on the right?

And if we are talking about rendering humans, we are so far away the humans in games might as well be cartoons.

Yes, even Callisto Protocol and Senua 2 are far away. Callisto has a good skin shader, but the detail just isn't there.

You couldn't tell me which of the below images is CGI and which is a photo:

PeteBull

Member

> You couldn't tell me which of the below images is CGI and which is a photo:

Super ez to tell left pic is real, right one is fake/cgi, just look at those dead/doll eyes.

PeteBull

Member

Since the poll is about games I chose 71-80%. CGI can get much closer ofc, depending on its quality; some of it, even from a solid few years back, is super close to or at photorealistic lvl. But we're talking games here, so gameplay in real time, and that's easily 20-25 years away. Let's assume PS6 happens in 2028, PS7 in 2036, PS8 in 2044: so by PS8 at the soonest, likely by PS9, we will reach the top CGI quality we've got now, aka photorealism.

LazyParrot

Member

> I mean, can you blame them?!

No, I have no problem believing that actually happened and that they were serious. I saw it myself when 3D games first started popping up in the 90s. People who weren't into video games and associated the term with shit like Pong and Frogger legit thought they weren't watching games when they saw 3D gameplay, because in their mind that wasn't what games looked like.

Even gamers weren't immune to those delusions. I remember my friend showing up at my house all hyped up after he had played Soul Calibur on the Dreamcast for the first time, raving about how the characters were indistinguishable from real people.

> You couldn't tell me which of the below images is CGI and which is a photo:

My money is on both being CGI lit by different HDRIs. Everything is literally in the exact same place, down to the tiniest hair. That shit doesn't happen in real life.

Black_Stride

do not tempt fate do not contrain Wonder Woman's thighs do not do not

> Super ez to tell left pic is real, right one is fake/cgi, just look at those dead/doll eyes.

Close but no cigar.

Both are CGI

Drizzlehell

Banned

It really depends on several factors, most important of which are: a) what is being rendered, and b) is the developer even going for photo realism?

Human beings and animals are generally the hardest thing to pull off without going into the uncanny valley so in that area I think we're only about 30% of the way there. But when it comes to rendering scenes of still life, I think some games got pretty close. I remember The Vanishing of Ethan Carter pulled off some of the scenes that looked almost real, and that was back in 2014 so you can only imagine what could be done today using similar techniques that were used in that game.

Developers also get pretty damn far when it comes to rendering stuff like water and cars too. Looking at this photo mode shot from Gran Turismo 7 that I took, I honestly wouldn't be able to tell that the car isn't real if I didn't know that it was from the game:

> I wouldn't say offline is miles away. It's gotten pretty freakin' close. But games are far, far from reality.

But the concept isn't different, a couple of tech guys decide what's closer to reality based on the choices they make.

HeWhoWalks

Gold Member

> But the concept isn't different, a couple of tech guys decide what's closer to reality based on the choices they make.

The concept is different, because offline doesn't have the restrictions of a game.

> The concept is different, because offline doesn't have the restrictions of a game.

Then who made these tools?

HeWhoWalks

Gold Member

> Then who made these tools?

"Same tools, different results"

Is this what you're trying to say? And, are you sincerely trying to argue that games/offline rendering are both near this goal at the same pace/rate?

Morrie’s wig shop

Banned

I picked 1-10.

I've been in war and Battlefield looks nothing like it. In fact I wish war looked like Battlefield 'cause it'd be less gory and dirty (nothing like the taste of brain matter in your mouth).

Besides, that picture you used of an Abrams is oversaturated and used for PR (it's from an exercise in Romania), and this shot is from a video.

I'd say the only game where I've ever done a double take is MS Flight Sim, and it was running on a monster rig.

Honestly you can always tell within seconds that it's a game, usually from the lighting or the movement of certain things, but at some point in the future we will get there…… I think.

rabbity-thing

Member

> Close but no cigar.
>
> Both are CGI

They walked right into your carefully laid trap.

I did too. Well played.

64gigabyteram

Reverse groomer.

in screenshots we are basically indistinguishableIt's clear that significant progress has been made in terms of approaching reality with video game graphics, but I'm curious where people think the "upper limit" is, and where we are in relation to it. If realism is one end goal of video game graphics, where do you think we are right now on a scale from 1-100?

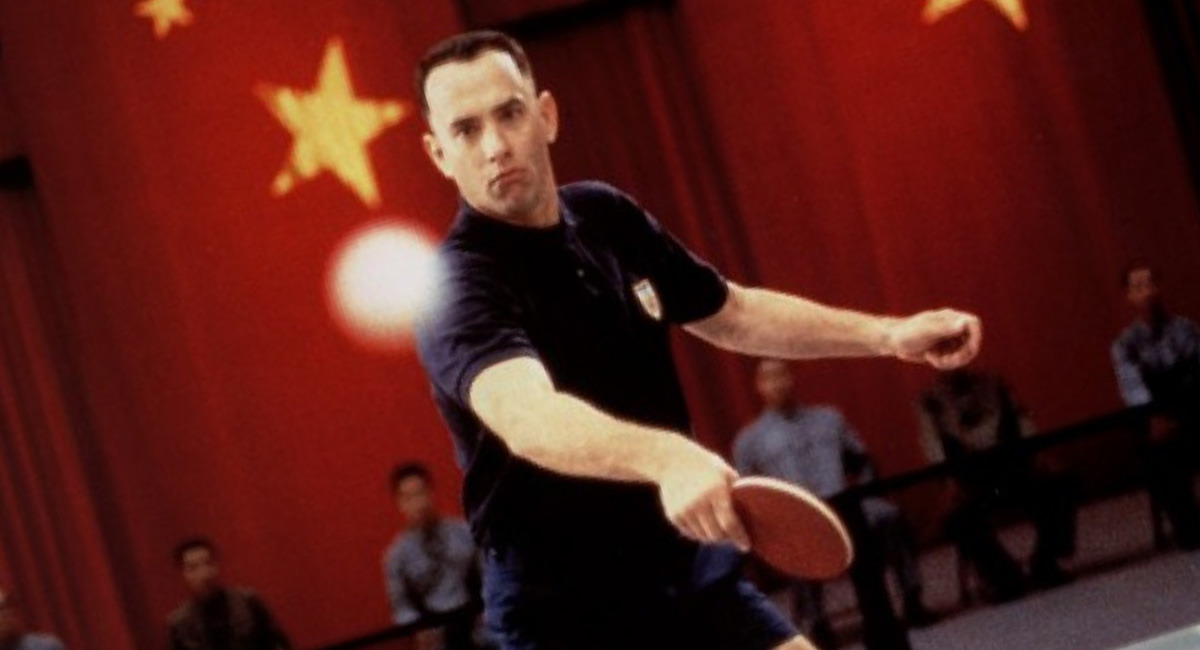

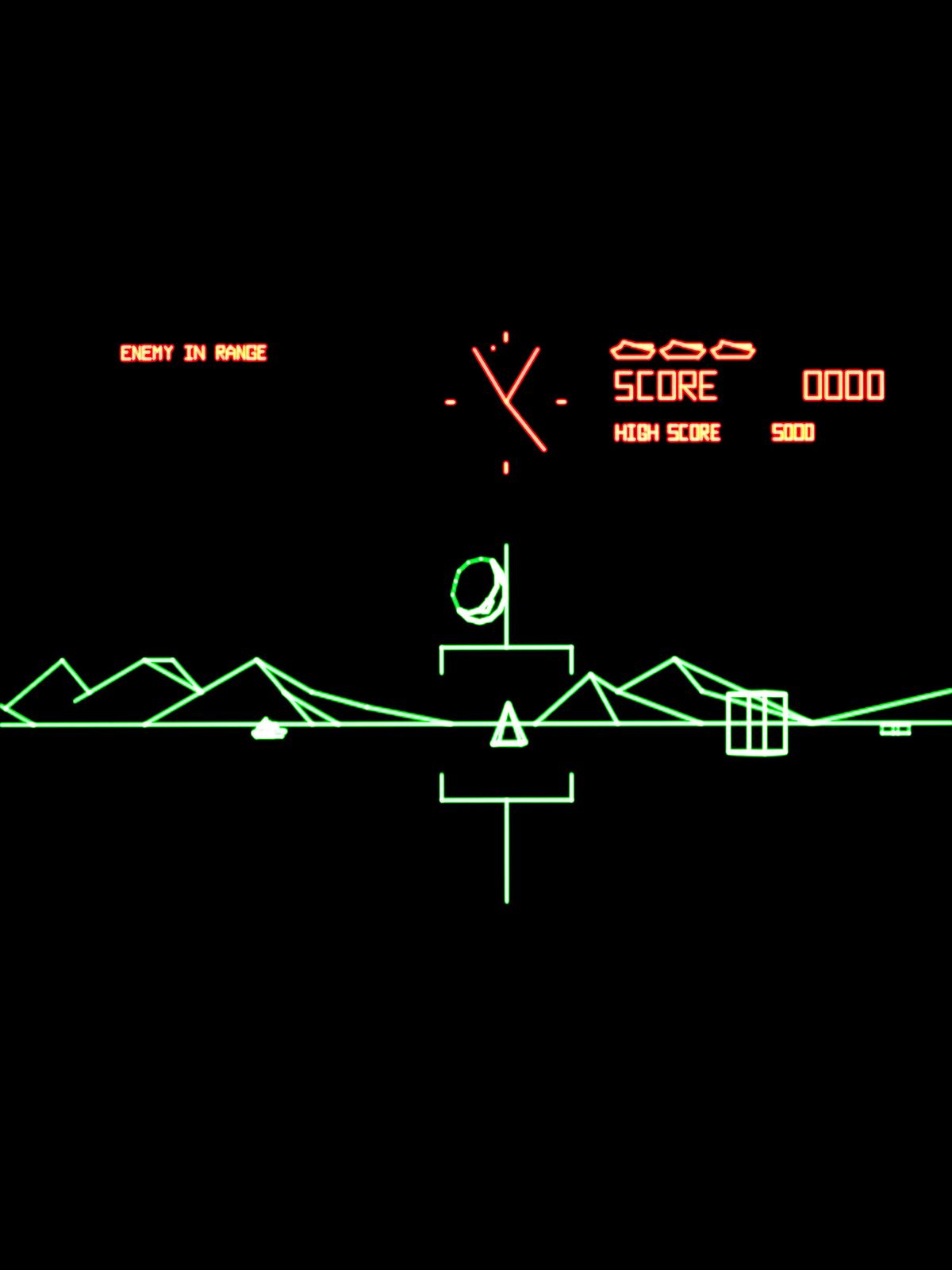

For the sake of conceptualizing and showing the trajectory of graphics towards realism, let's say Battlezone (1980) is the baseline (a "1"):

In keeping with the tank theme, about twenty years later, there was Battletanx in 1998:

And another ~20 years later, there was Battlefield 2042 (2021):

And here's a shot of an actual tank firing:

Yes, a picture of a tank is not reality, but hopefully you see what I'm getting at.

_______________________

I want to say we're at about 85%. But I remember standing in Walmart watching Ocarina of Time on the N64 and saying to the kid next to me something like: "Nothing will ever look better than this." I was proven wrong on how much video game graphics would progress then, and I hope I'm proven wrong again.

in motion with all the video gamey animations and everything... not even close

I'd consider it 50, they have the look part down, not so much the immersion. give us more interesting physics and animations, we'll be closer

64gigabyteram

Reverse groomer.

> 61-70. Most games still have that cartoony look. In terms of reality we only got FS2020 and the Matrix demo.

I wouldn't call Matrix that realistic, the green simulated filter keeps it from looking like real life since real life... doesn't have a green filter over everything.

When I think of a realistic looking game, I think racing games like Gran Turismo and Forza Motorsport are the best candidates to compare, since their art directions usually don't stray far from reality, they're about as realistic as you can get. Horizon looks amazing but it's also not a real universe, Spiderman is too stylized to count, God of War is also not a real universe, etc. Not to mention it's a lot easier to make a car look realistic than a human since cars are machines, they are harder to make look "unnatural" since there's nothing "natural" about them.

Akuji

Member

> Framerate importance is incredibly overblown. 60fps games look MORE fake than 30-40 fps. Just like 60 fps movies look like soap opera garbage, so they stopped making them.
>
> The human eye doesn't see 60fps in reality, so when we see it in media our brain thinks "fake".
>
> Lighting and material representation, and that materials interaction with that lighting, is the holy Grail of photo realism. We're 10-15 years away.

Ur joking, right?

crazyriddler

Member

> The human eye doesn't see 60fps in reality, so when we see it in media our brain thinks "fake".

I didn't think there were still people who use that in a non-ironic way.

And no, people perceive movies at over 24 frames as fake because they are used to 24 frame movies. While video games have always had higher framerates (since input time is important). So it is a mental association, not a physical reality of human vision.

Raven77

Member

> I didn't think there were still people who use that in a non-ironic way.
>
> And no, people perceive movies at over 24 frames as fake because they are used to 24 frame movies. While video games have always had higher framerates (since input time is important). So it is a mental association, not a physical reality of human vision.

Proof of wrongness

64gigabyteram

Reverse groomer.

> That's especially rapid when compared with the accepted 100 milliseconds that appears in earlier studies. Thirteen milliseconds translate into about 75 frames per second.

Seems to me like 60fps in video games is more natural and accurate to what we can see. I think that should be pretty obvious too judging by how real life looks.... exceptionally smooth compared to any image on a screen displayed below 100hz

PeteBull

Member

> Close but no cigar.
>
> Both are CGI

Then it proves my point, cgi already can look photorealistic, now just need to patiently wait 20-25years to achieve it in gameplay/real time xD

Black_Stride

do not tempt fate do not contrain Wonder Woman's thighs do not do not

> Then it proves my point, cgi already can look photorealistic, now just need to patiently wait 20-25years to achieve it in gameplay/real time xD

What was your point exactly in regards to my post?

I was proving that biased offline renderers are still far from real life compared to unbiased renderers.....and games are a million miles away from biased renderers.

So on scale from 1 to 100, we are closer to 1 than we are to 10 let alone 100 with current real time graphics.

Ammogeddon

Member

There are certainly moments in some games now where they look photo real, but once you start moving and interacting with the world the illusion goes.

We're definitely getting there but there are so many things that need to come together.

Thinking back only a few years I'd never have imagined raytracing or 4K would be a thing. I'm sure there will be other essential technological hurdles we need to jump but I'm sure we'll get there.

crazyriddler

Member

Did you even read my comment? The article reaffirms what I said. We can perceive information at over 60fps (16.6ms).

The thing is that in the case of cinema, our eyes and brains are used to the 24 frames per second cadence, this is a fact. TV shows, news and other live content are recorded at 30fps (our brain perceives it as lower quality content because we associate that rate with that "quality"). And video games (usually between 30 and 60) we perceive them as digital or "fake" for the same reason, pure association.

There are thousands of studies on this, and it is the reason (besides the cost of editing) why cinema has remained "loyal" to 24fps, and experiments like "The Hobbit" at 48fps failed and were not liked.
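For reference, the frame-rate figures traded in this exchange follow from one conversion: frame time in milliseconds is 1000 divided by frames per second. A minimal Python sketch of that arithmetic is below. The function names are illustrative only, not from any library, and it says nothing about what the eye can or cannot perceive.

```python
def frame_time_ms(fps: float) -> float:
    """Milliseconds each frame stays on screen at a given frame rate."""
    return 1000.0 / fps


def fps_from_frame_time(ms: float) -> float:
    """Frame rate implied by a given per-frame duration in milliseconds."""
    return 1000.0 / ms


if __name__ == "__main__":
    # Frame rates mentioned in this thread.
    for fps in (24, 30, 48, 60, 144, 240, 500):
        print(f"{fps:>3} fps -> {frame_time_ms(fps):6.2f} ms per frame")
    # The 13 ms figure from the passage quoted earlier in the thread.
    print(f"13 ms per frame -> {fps_from_frame_time(13):.1f} fps")
```

By this conversion 60 fps is about 16.7 ms per frame (the 16.6 above is a truncation), and 13 ms works out to roughly 77 frames per second, slightly above the "about 75" in the quoted passage.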

crazyriddler

Member

> You mean close to something filmed right?
>
> Because to actual real life it's not close at all. In fact it's really far from it.

Yes, the thing is that if you get a movie at 60fps, besides looking somewhat cheap (by cheap, I mean home movie style), any CGI-generated image will look more "digital", but this is purely due to conditioning. If cinema had been traditionally recorded at 60fps, this would not be the case.

Raven77

Member

> Did you even read my comment? The article reaffirms what I said. We can perceive information at over 60fps (16.6ms).
>
> The thing is that in the case of cinema, our eyes and brains are used to the 24 frames per second cadence, this is a fact. TV shows, news and other live content are recorded at 30fps (our brain perceives it as lower quality content because we associate that rate with that "quality"). And video games (usually between 30 and 60) we perceive them as digital or "fake" for the same reason, pure association.
>
> There are thousands of studies on this, and it is the reason (besides the cost of editing) why cinema has remained "loyal" to 24fps, and experiments like "The Hobbit" at 48fps failed and were not liked.

Good points, but a 60 fps N64 game looks like crap. 60 fps will not make a game much more photorealistic. The things I mentioned, like lighting, will have a much bigger impact.

And developers understand this, most of them could care less about getting their games to 60 fps, instead they're pushing things that really matter, like lighting.

64gigabyteram

Reverse groomer.

> Good points, but a 60 fps N64 game looks like crap.

certainly a hell of a lot more playable than the original 20fps version though....

Red-Dead-Ocelot

Gold Member

The Order 1886. Still looks good. Come at me.