Tickle My Tendies

Member

it's true what they say. beauty is in the eye of the beholder.

Only ugly people say that.

Source: Me. I am ugly as fuck.


But doesn't HDR10 require 10 bit color? 8 bit is SDR right?

Yeah, it's pretty much half the resolution, but done in a way that masks it pretty well. I agree it's a big performance saver, and when done right it looks really, really good. They made the right decision building the Pro around this technique, and I expected them to continue for the PS5... not sure if that is the path they are going, though.

Yeah, I guess for a 60 FPS game, 30 of the frames have around 50% of the pixels of 2160p (sometimes more than 50%, or laid out differently, like half the horizontal pixels but all the vertical pixels, so 1920x2160), and then the other 30 frames have the other pixels, so by combining them together it looks like 2160p to the player's eye on screen. I think that's how it all works, from what I read.

But yeah there can be the motion artifacts with fast moving scenes. Not perfect but a solid performance saver.
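To make the "half the pixels per frame, combined over two frames" idea concrete, here's a rough sketch. It's a toy model and not how any particular game or console does it: the grid size, the function names, and the plain "fill the gaps from the other frame" merge are all assumptions for illustration. Real reconstruction also uses motion vectors and neighbouring pixels.

```python
# Toy checkerboard rendering: each frame renders half the pixels,
# and two consecutive frames are merged into one full image.
WIDTH, HEIGHT = 8, 4  # tiny stand-in for 3840x2160


def checkerboard_half(frame, parity):
    """Keep only pixels where (x + y) % 2 == parity; the rest are None."""
    return [
        [px if (x + y) % 2 == parity else None for x, px in enumerate(row)]
        for y, row in enumerate(frame)
    ]


def combine(half_a, half_b):
    """Fill the holes in one half-frame with pixels from the other."""
    return [
        [a if a is not None else b for a, b in zip(row_a, row_b)]
        for row_a, row_b in zip(half_a, half_b)
    ]


# A static "scene": every pixel just stores its own index.
scene = [[y * WIDTH + x for x in range(WIDTH)] for y in range(HEIGHT)]

half_a = checkerboard_half(scene, 0)  # frame N renders one set of pixels
half_b = checkerboard_half(scene, 1)  # frame N+1 renders the other set

rendered_per_frame = sum(px is not None for row in half_a for px in row)
full = combine(half_a, half_b)

print(rendered_per_frame, "of", WIDTH * HEIGHT, "pixels rendered per frame")
print("static scene reconstructed exactly:", full == scene)
print("1920x2160 vs 3840x2160 pixels:", 1920 * 2160, "vs", 3840 * 2160)
```

The merge is only exact here because the scene doesn't change between the two frames. Once things move, the two halves no longer line up, which is where the motion artifacts come from.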

lol. Maybe.

Just made this gif by comparing the 30 fps ps4 version of spiderman with the 60 fps pro version of Infamous. Looks like a massive difference.

That means they are using checkerboarding to render to full 4K (full height anyway) and then not doing another upscale. Checkerboarding, or other methods of reconstruction using local data and temporal information (previous frame data), is a lot more effective than standard upscaling.

I thought 4K checkerboard was a sort of "upscale" way to run 4K but actually at a lower resolution.

If they put 2160p that is true 4k. So it doesn't make sense to me.

I have too many good games in my backlog, I'm never catching up.

In a native PS5 version they could add native 4K, 120fps, HDR, super short loading times, 3D audio, DualSense features.

What would they do for Wipeout: Omega Collection? I thought it was native 4K, locked 60 anyway. I guess they could downsample from above 4K? Maybe LOD tweaks. I haven't played for an extended time in ages though, so maybe there are some glaring issues I've forgotten about. It probably does drop frames during explosions of alpha effects, since everything does, come to think of it.

I would love to see HDR added, but it sounds like too much extra work when the studio doesn't exist anymore, sadly.

very close to completion

Awesome! I was slow to get rolling on this and am curious how far along I am.

I just unlocked the gate to Jotunheim after flipping Tyr's chamber... how far am I?

Any idea how 3D audio works in God of War on PS5?

Will it be just stereo with headphones connected to a controller, or will it use the "3D audio" from the PS5 settings, since it had 3D audio on PS4?

Ok thanks. I honestly just assumed the HDR10 standard required 10-bit color due to the name.

Not really, the colour space is still BT.2020 instead of Rec. 709 (the SDR space), so you get the "extra colours" (if they are even used), but technically the banding will be worse.

You get worse gradients with an 8-bit signal/panel than with a 10-bit one, but if you dither the 8-bit output you get very comparable and often better results, because certain parts of the game's pipeline might have lower bit precision anyway (like a vignette over the graphics). Sending out true 10-bit will show up that banding more, where 8-bit + dithering would mask it.
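For anyone wondering what "8-bit + dithering" means in practice, here's a small sketch. The 4-step threshold pattern and the function names are made up for illustration, and real games use fancier noise patterns, but the principle is the same: neighbouring pixels round differently, so their average lands on the 10-bit level.

```python
def truncate_to_8bit(v10):
    """Plain conversion: drop the two lowest bits of a 10-bit value."""
    return v10 >> 2


def dither_to_8bit(v10, x):
    """Ordered dither: add a position-dependent offset before dropping bits.

    The offset pattern (0, 1, 2, 3 repeating along x) is a hypothetical
    choice for this example.
    """
    threshold = x % 4
    return min(255, (v10 + threshold) >> 2)


v10 = 513  # a 10-bit level that sits between 8-bit levels 128 and 129

plain = [truncate_to_8bit(v10) for x in range(4)]
dithered = [dither_to_8bit(v10, x) for x in range(4)]

print("target 8-bit level:", v10 / 4)
print("truncated:", plain, "average", sum(plain) / 4)
print("dithered: ", dithered, "average", sum(dithered) / 4)
```

Plain truncation puts every pixel at 128, so a whole band of 10-bit levels collapses into one flat step. The dithered version mixes 128 and 129 so the local average matches the target, which is why the gradient looks smoother from a distance.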

Many (most) 4K TVs that are marketed as HDR TVs don't have true 10-bit panels. They have 8-bit panels and use something called FRC, or Frame Rate Control, to rapidly alternate between the neighbouring colour values the panel can show, so the shades in between and the gradient appear smooth to our eyes.

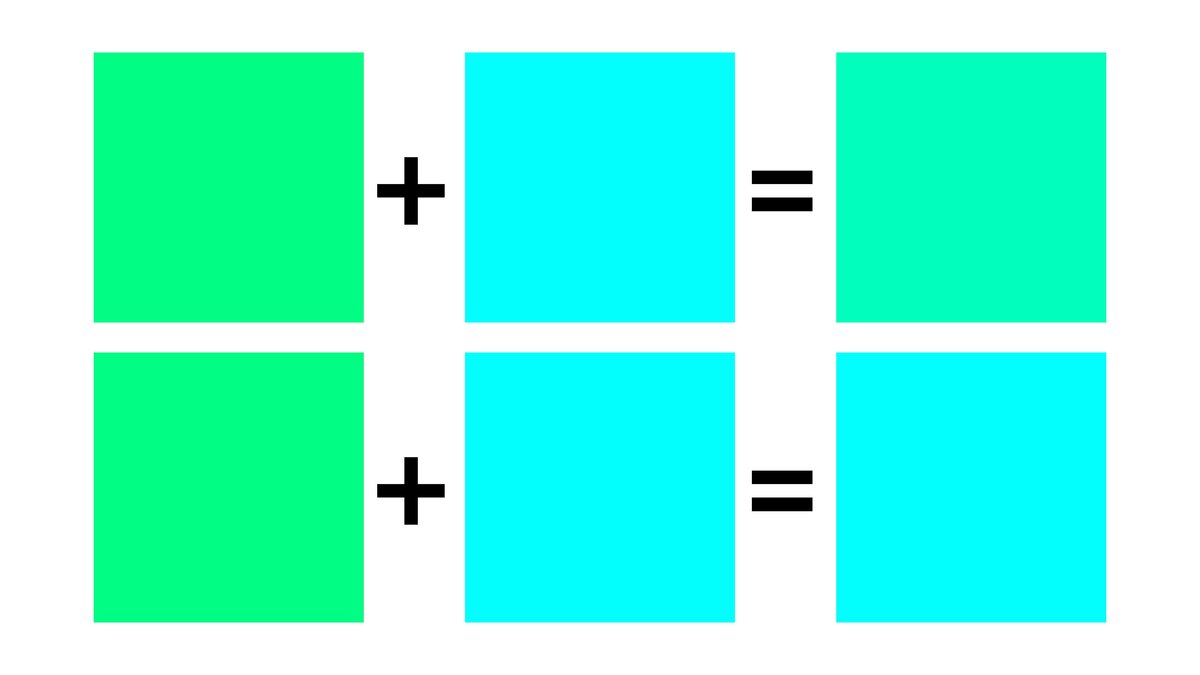

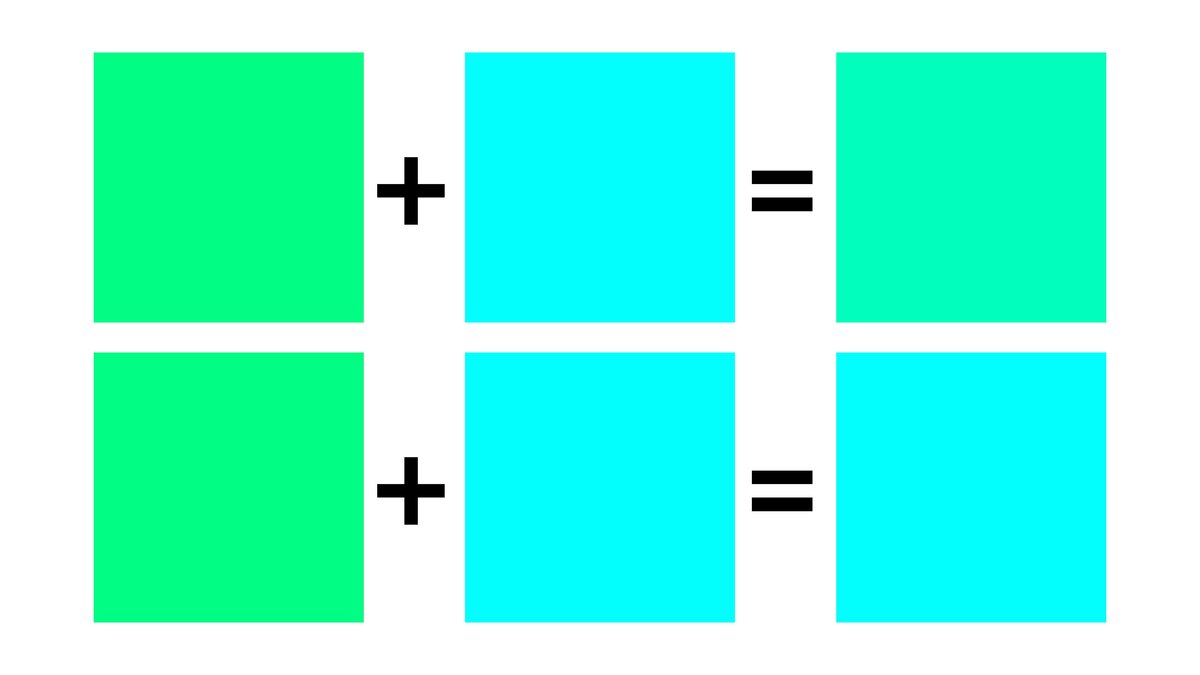

The 8-bit panel can't show as many "shades" of a colour as the 10-bit panel, so it basically flashes between neighbouring colour values across frames, which gives the appearance of a 10-bit panel:

Frame rate control - Wikipedia (en.wikipedia.org)
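Here's a rough sketch of the FRC idea in code. The 4-frame cycle and the function name are assumptions for the example, and actual panels use their own spatial and temporal patterns, so treat this as the principle only.

```python
def frc_frames(v10):
    """Return four 8-bit values whose time average approximates v10 / 4.

    Hypothetical scheme: show the next level up for as many frames as the
    two dropped bits say, and the lower level for the rest of the cycle.
    """
    base, extra = divmod(v10, 4)
    return [min(255, base + 1) if i < extra else base for i in range(4)]


v10 = 513  # 10-bit level between 8-bit levels 128 and 129
frames = frc_frames(v10)

print("8-bit values shown over 4 frames:", frames)
print("average over time:", sum(frames) / 4, "target:", v10 / 4)
```

The panel only ever shows 128 or 129, but because it shows 129 for one frame out of four, the eye averages it to roughly 128.25, which is the 10-bit shade it couldn't display directly.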

edit - found this as well, the "best solution" answer starts out really technical but the 2nd or 3rd paragraph explains it in a simple way: https://forums.tomshardware.com/threads/true-10bit-vs-8bit-frc.2937222/

If you want to know if your TV has a true 10-bit panel then put your model number in the search here and do a control-f for "panel bit depth":

DisplaySpecifications - Specifications and features of desktop monitors and TVs (www.displayspecifications.com)

This is my understanding of it anyway, others please feel free to correct me if you have more knowledge on the subject.

Good to know, I'll leave it on the old school hard drive.

I was hoping for better load time optimization. Installing on the SSD barely changes anything in the current patch.

So it disables the previous patch's?

Lol I straight up harassed him on Twitter.

Fucking DOPE. I tweeted Hermen Hulst about this a few months ago.... must be why he did it (I also asked for a Bloodborne 60FPS patch, you're welcome guys).

Removed it from my mini list... thanks!

UC4 at 60fps would be prime, grade A beef.

inFamous runs at 60 fps and 4k if you unlock FR and select resolution mode in the settings. It is amazing. Plat'd it and Last Light when PS5 dropped. Feels like a new game.

I miss your old avatar smh...

Ok thanks. I honestly just assumed the HDR10 standard required 10-bit color due to the name.

I also kinda assume Sony know what they're doing by choosing to output 4K60 HDR 10-bit YUV 4:2:2 instead of 4K60 HDR 8-bit RGB 4:4:4 when using HDMI 2.0 out. There's gotta be some reason why.
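Some back-of-the-envelope bandwidth numbers might help with the 4:2:2 vs 4:4:4 question. The figures here are not from this thread, they're the commonly cited ones as far as I know: 4K60 uses a 4400x2250 total timing (594 MHz pixel clock), HDMI 2.0 carries 18 Gbit/s raw, which is 14.4 Gbit/s of video data after 8b/10b coding, and HDMI sends YUV 4:2:2 in a 24-bits-per-pixel container that holds up to 12 bits per component. This doesn't say why Sony picked one over the other, only which modes fit.

```python
# 4K60 pixel clock including blanking: 4400 x 2250 total pixels at 60 Hz.
PIXEL_CLOCK_HZ = 4400 * 2250 * 60  # 594 MHz

# HDMI 2.0: 18 Gbit/s on the wire, 8 of every 10 bits are video data.
HDMI20_MAX_DATA_BPS = 18e9 * 8 / 10  # 14.4 Gbit/s


def data_rate_bps(bits_per_pixel):
    """Video data rate for a given number of bits sent per pixel."""
    return PIXEL_CLOCK_HZ * bits_per_pixel


# Bits sent per pixel for each mode (assumed values, see text above).
modes = {
    "RGB 4:4:4 8-bit": 24,
    "RGB 4:4:4 10-bit": 30,
    "YUV 4:2:2 10-bit": 24,  # carried in the 24-bit 4:2:2 container
}

fits = {
    name: data_rate_bps(bpp) <= HDMI20_MAX_DATA_BPS
    for name, bpp in modes.items()
}

for name, bpp in modes.items():
    gbps = data_rate_bps(bpp) / 1e9
    print(f"{name}: {gbps:.3f} Gbit/s, fits in HDMI 2.0: {fits[name]}")
```

So under these assumptions 10-bit RGB 4:4:4 at 4K60 doesn't fit in HDMI 2.0 at all, while 10-bit 4:2:2 costs the same bandwidth as 8-bit RGB. The trade is chroma resolution for bit depth.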

In a native PS5 version they could add native 4K, 120fps, HDR, super short loading times, 3D audio, DualSense features.

Bloodborne

Fuck!!!

I played Jedi Fallen Order on PS5, finished it. One week later - next gen update. Then I decided to finally play God of War, finished it. The week has passed. Update! What the hell?!

What game should I finish next?


IKR, I do look forward to seeing Bloodborne running at 4K/60 with terrible frame pacing.

Cough... Bloodborne next... cough

It's still a PS4 game, so it can be left on a hard drive.

Does the patch apply if the game is running off of an external hard drive, or does it have to be on the SSD?

Because it's running in backwards compatible mode on PS5. All this patch is doing is raising the frame rate cap from 30fps to 60fps for quality mode.

If it's 2160p then how is it also 4K checkerboarded?