Dirk Benedict

Gold Member

240Hz boys eating well.

Is their plan to keep increasing fake frames each generation? Such a weird play

Can keep gamers on old stock and scraps that way. Want more frames? Fake 'em!

They will eventually. You can only spend so much money and time on something before giving up if you don't see results. And AGI anytime soon is still extremely unlikely. The scientific consensus is pretty clear on this. LLM research especially appears more and more likely to be a dead end.

If a decade passes and nobody can come close to an AGI, well, expect countries and companies to lose interest.

Thinking these companies are stuck on LLMs is old news.

It's like the stock market: by the time your uncle talks about a stock at the Christmas party, it's already old news. The same goes for mass media talking about a limit or bottleneck in AI.

These companies hire the best brains in the field and collaborate heavily with the best computer scientists in universities.

There's already a ton of branching models in development, moving away from LLMs towards world/physical models:

Meta's Yann LeCun VL-JEPA

Google's Titan architecture

Amazon's project Prometheus

OpenAI is known to be working on a world model

And of course the others, like Anthropic, and labs in China and other countries

Nobody will slow down until AGI, even if it takes 20 years, but it'll be much faster than that, I guarantee it. Even before AGI, once you have agents that can beat the brightest minds in a field, or can scale up the equivalent of 200,000 expert-level humans on an engineering project and compress development that would take decades into hours or days, that's already a huge national security asset.

It's not humans who will develop AGI, it'll be AI. New models with the wheels off, programming in their own language and trying to create the future model that achieves AGI, will speedrun any attempt made by humans.

Think tanks on another level. It's as fascinating as the potential horror scenarios are scary.

Adding more generated frames each generation is not really a weird play; it's the inevitable future and a smart long-term strategy. There's really no other way to keep scaling "performance" this fast and this affordably, because CPU cores get faster at a much slower rate than GPUs and game engines run into a wall (and nobody needs to buy a new GPU once they're limited by the CPU in everything).

I didn't watch the full video, did they announce when DLSS 4.5 will be released?

DLSS 4.5 today, mfr spring

Is there a new driver coming today?

Already out.

Weird, my Nvidia app says I already have the latest driver. Guess I will do it manually.

Just checked

I think the hotfix 591.67 driver is messing it up. Thanks for checking, I will update manually.

Driver Version: 591.74

Release Date: Mon Jan 05, 2026

File Size: 919.69 MB

When is it coming out? After the announcement or later this year?

Still can't believe a year ago I made this post:

I'm excited to try out 4.5. People scoff at this stuff but it's literally extending the life and performance of Nvidia cards like never before. Last time they improved the model I gained performance by dropping down a tier while keeping the same IQ. I'm hoping to do the same again this time.

And I don't give a damn about MFG, given that I'll be using this 4090 for a while, but every time they improve 4x, and now 6x, it makes the 2x that much better, to the point that it has gone from basically unusable to something I use in multiple games.

It's kinda hilarious how there are still detractors to this stuff when it's clearly the best GPU tech introduced in a long ass time. And as much as I hate that Nvidia is all about AI these days, at least they are giving their gaming customers some tech that is the result of it. AMD didn't show shit that was interesting at their show and mentioned AI 200 times in an hour-long show. It's no wonder the gap hasn't been closed at all.

It's out

Releases · NVIDIA/DLSS (github.com)

Which is DLSS 4.5? Preset L or M?

I installed driver 591.74, but presets M and L don't show up.

PS: Opting into the beta makes the new presets appear.

Do you guys use DLSS Override - Super Resolution mode from Nvidia App? Or just select the DLSS mode from in-game?

They definitely moved past simple chatbots.

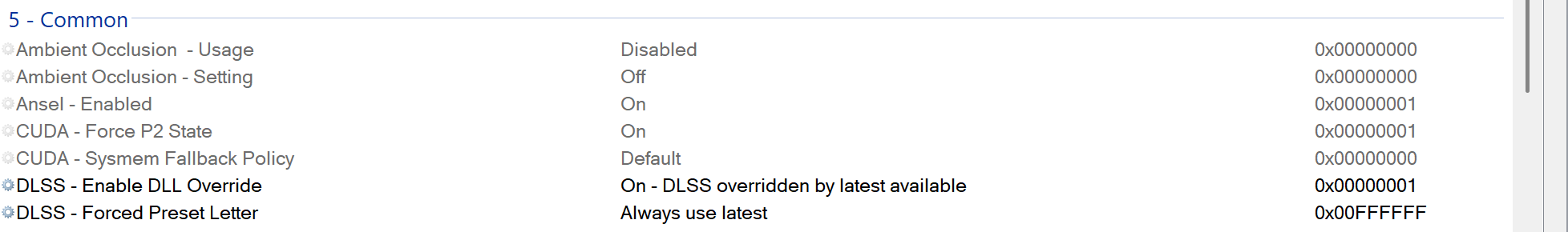

Download the latest Nvidia Profile Inspector

I just use Global-Latest in the Nvidia app. That's usually the default anyway, so there's no need to change it for the most part. Convenient enough for me.

Something that might have had lots of ghosting.

Arc Raiders. Playing in 4K DLAA with 2xFG and the flying particles smear like hell with Transformer 4.0. Really wonder if they fixed this with 4.5.

Yeah, Arc and AC Shadows had those issues. Arc also had issues with foliage. Gonna test both games now.

Actual research in the field, not hype from companies, cannot even give a timeframe. The overwhelming consensus is that it's still decades away, and even that isn't based on any real science: the fact is that most researchers don't even know how, or if, AGI is possible. Currently the entire idea of AGI is built on a ton of hopium.

Do you guys use DLSS Override - Super Resolution mode from Nvidia App? Or just select the DLSS mode from in-game?

I'd like to know too. I don't want to install the Nvidia app; it's just too bloaty, with visuals I dislike.

You can use Inspector and DLSSSwapper to get the latest builds of DLSS in your games.

Yeah, the app is essential for RTX HDR, amongst other things. My only complaint is that it sometimes meddles with my NVPI profiles without permission.

The NVapp is so much better than GeForce Experience, and access to ShadowPlay, Reflex, Filters and Override is worth the "bloat". The app literally doesn't even load on startup on my machine.

I only open it when I need to change presets. Otherwise I set global latest and just leave it alone.

DLSSSwapper gets me the DLSS builds.

MFG is the future. I know some may not like it, but there's not much you or we can do about it.

While 4x often struggled in DLSS 4, that's not really the point. We're simply anticipating the improvements in the new 4.5 models, like we saw when going from DLSS3 to 4.

TL;DR: Perhaps 4x is the new 2x in image quality now, or something

I think it's hilarious that people think AI is some kind of bubble that will pop. Funny shit. It's here to stay and it's only getting better.

The bubble is not about the quality and usefulness of AI, but rather the investments into companies that purport to leverage AI but are nothing burgers. The dot-com bust didn't happen because the Internet was a bad idea, but because companies were overleveraged while promising the world. Looking into the financials of the various layers of AI companies today, something smells. A lot of borrowing from Peter to pay Paul.