Thebonehead

Gold Member

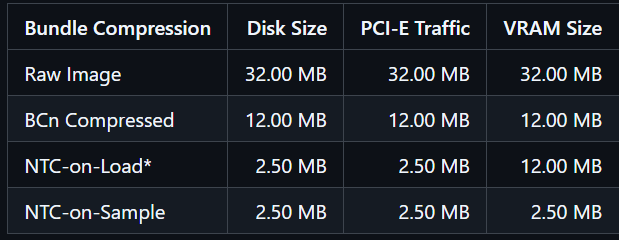

This only concerns the KV-cache quantization.

So let's say we have an LLM that occupies 500GB of RAM+VRAM, or just VRAM. When we load it, we set a certain context window size (like 100k tokens), and the KV cache for that window takes up extra memory on top of the weights. That cache is what this "turbo-mumbo-jumbo" aims to reduce in size. So, if it was 500GB + 50GB initially, with this thing it would get down to 500GB + 20GB or something like that.
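To put rough numbers on that, here is a back-of-the-envelope sketch of the usual KV-cache size estimate (one key and one value vector per layer, per KV head, per token). The model dimensions below are made up for illustration and are not taken from any particular model or from TurboQuant itself; the point is only that the weights term never changes.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, context_tokens, bits_per_value):
    """Approximate KV-cache size in bytes for a given context length."""
    # 2 = one key tensor plus one value tensor stored per layer.
    total_bits = 2 * n_layers * n_kv_heads * head_dim * context_tokens * bits_per_value
    return total_bits // 8


# Hypothetical model shape, chosen only for illustration.
N_LAYERS, N_KV_HEADS, HEAD_DIM = 80, 8, 128
CONTEXT_TOKENS = 100_000
WEIGHTS_GB = 500  # the weights are untouched by KV-cache quantization

for bits in (16, 4):
    cache_gb = kv_cache_bytes(N_LAYERS, N_KV_HEADS, HEAD_DIM, CONTEXT_TOKENS, bits) / 1e9
    print(f"{bits:>2}-bit cache: {WEIGHTS_GB} GB weights + {cache_gb:.1f} GB KV cache")
```

Whatever bit width you pick for the cache, the 500GB of weights stays exactly where it was.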

Long story short, no, this doesn't solve anything unless they find a way to quantize the models themselves aggressively without lobotomizing them so much.

Beat me to it.

It's playing with semantics when they say reduction in model size.

TurboQuant is a compression method that achieves a high reduction in model size with zero accuracy loss, making it ideal for supporting both key-value (KV) cache compression and vector search. It accomplishes this via two key steps:

It just allows for a larger context window in the same amount of memory, which means it can analyse the whole repo instead of a couple of files, for instance.
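Flipping the same arithmetic around shows the "larger context window" effect: with a fixed amount of memory set aside for the cache, fewer bits per cached value means more tokens fit. This is only a sketch; the model shape and the 50GB budget are hypothetical numbers, not measurements.

```python
def max_context_tokens(budget_bytes, n_layers, n_kv_heads, head_dim, bits_per_value):
    """How many tokens of KV cache fit in a fixed memory budget."""
    # Each token stores one key and one value vector per layer and KV head.
    bits_per_token = 2 * n_layers * n_kv_heads * head_dim * bits_per_value
    return (budget_bytes * 8) // bits_per_token


# Hypothetical model shape and cache budget, for illustration only.
BUDGET_BYTES = 50 * 10**9
for bits in (16, 4):
    tokens = max_context_tokens(BUDGET_BYTES, n_layers=80, n_kv_heads=8,
                                head_dim=128, bits_per_value=bits)
    print(f"{bits:>2}-bit cache: about {tokens:,} tokens fit in 50 GB")
```

Same budget, roughly four times the context when going from 16-bit to 4-bit values, and the model itself is no smaller.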