thicc_girls_are_teh_best

Member

This isn't an announcement or scoop or anything at all; it's just me having a really quick idea WRT solutions for RAM shortages. Truth is, this type of shit is probably going to keep happening as time goes on, and with greater severity. For mass-market consumer electronics in particular, the lack of a real long-term solution will kill the industry off sooner or later, because things like RAM and NAND are always going to be in demand from more & more industries, naturally driving up costs.

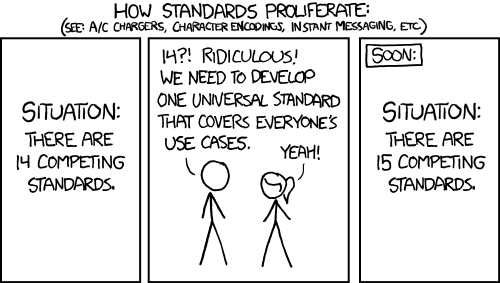

So a thought that came to me is, why doesn't a company develop a modular RAM standard in tandem with the JEDEC specifications? I'm thinking of something like a cross between Dell's CAMM memory, the M.2 NVMe storage standard, and microSD cards. It would build off the decades of memory standardization we've seen going from FPM to EDO to SDRAM to DDR to GDDR to HBM, from bubble memory to ROM to NOR to NAND, you get the idea.

The idea is simple: a memory interface standard (covering the logical level, the physical level, the port connector, and the physical dimensions of the memory device) that scales by using "memory units" of a commonly agreed-upon minimum capacity (e.g. 1 Gbit). Each unit has four data interconnect points, each 8 bits wide, to interface with neighboring memory units and, in some cases, the controller & bus interface. The units are integrated on a "card layer" that can both increase capacity and ensure connectivity between the memory units via a multi-point mesh data interconnect. You still have a row/column setup, and you still use the "column count" to define bus width and the "row count" to define capacity with this type of standard. Sticking with microSD card dimensions, a practical capacity limit is probably around 2 GB (32-bit interface per card, 4x2 arrangement) to 8 GB (64-bit interface per card, 8x8 arrangement).
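To make the column/row arithmetic concrete, here's a rough sketch in Python. `CardLayout` and its field names are made up for illustration, and the 1 Gbit unit density is just the example figure from above, not a spec value.

```python
from dataclasses import dataclass

UNIT_BUS_BITS = 8  # each memory unit contributes an 8-bit data path (per the post)


@dataclass(frozen=True)
class CardLayout:
    """Hypothetical card: a grid of identical memory units."""
    columns: int        # units side by side; sets the bus width
    rows: int           # units stacked per column; adds capacity
    unit_gbit: int = 1  # assumed minimum unit density, in Gbit

    @property
    def bus_width_bits(self) -> int:
        return self.columns * UNIT_BUS_BITS

    @property
    def capacity_gbit(self) -> int:
        return self.columns * self.rows * self.unit_gbit

    @property
    def capacity_gbytes(self) -> float:
        return self.capacity_gbit / 8  # 8 Gbit per GB


small = CardLayout(columns=4, rows=2)
large = CardLayout(columns=8, rows=8)
print(small.bus_width_bits, small.capacity_gbytes)  # 32 1.0
print(large.bus_width_bits, large.capacity_gbytes)  # 64 8.0
```

With 1 Gbit units, a 4x2 grid works out to 1 GB, so the 2 GB figure for the 32-bit card would need 2 Gbit units (or a 4x4 grid). The 8x8 case lands on 8 GB with 1 Gbit units as-is.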

The thing is, you get this memory on devices the size of microSD cards, and certain devices might have slots for just one, or up to two, or four, or eight, etc. Devices would stipulate pairing requirements, e.g. 2x 32-bit 2 GB cards for a 64-bit interface with a 4 GB RAM capacity limit. There's no theoretical limit to the number of slots that can be interfaced, just a practical one based on the type of device and its market, physical footprint, power target (both performance & TDP), etc. I figure you could design this type of memory with replication of different standards in mind, e.g. GDDR for GPUs, HBM for data centers, DDR for CPUs, NAND for storage, etc. Mind, we are not talking about the same physical design as those memories; the actual memory logic for this would be more universal and scalable, but particular features of the memory controller could be adapted to replicate these pre-existing types.
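Here's a minimal sketch of how a device might check its pairing requirements. Everything in it (`Card`, `DeviceSpec`, `check_pairing`) is hypothetical, and it assumes cards are ganged side by side so their bus widths simply add up, which is my reading of the 2x 32-bit example rather than anything a real standard defines.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Card:
    bus_bits: int
    capacity_gb: int


@dataclass(frozen=True)
class DeviceSpec:
    slots: int
    interface_bits: int   # total bus width the device expects
    max_capacity_gb: int  # RAM capacity limit the device supports


def check_pairing(device: DeviceSpec, cards: List[Card]) -> Tuple[bool, str]:
    """Return (ok, reason) for a proposed set of inserted cards."""
    if len(cards) > device.slots:
        return False, "more cards than slots"
    total_bits = sum(c.bus_bits for c in cards)
    if total_bits != device.interface_bits:
        return False, f"bus width {total_bits} != required {device.interface_bits}"
    total_gb = sum(c.capacity_gb for c in cards)
    if total_gb > device.max_capacity_gb:
        return False, f"capacity {total_gb} GB exceeds limit"
    return True, "ok"


handheld = DeviceSpec(slots=2, interface_bits=64, max_capacity_gb=4)
print(check_pairing(handheld, [Card(32, 2), Card(32, 2)]))  # (True, 'ok')
print(check_pairing(handheld, [Card(32, 2)]))
```

The second call fails because a single 32-bit card only fills half of the 64-bit interface.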

In the same way you can, today, take a microSD card from your phone and pop it in your laptop, that's the kind of future I see existing for volatile memory, especially in the consumer electronics space. This way, a person just buys the amount they foresee themselves using, they don't have to worry if it's "compatible" with their devices (they just assume it automatically is), and they can swap it between devices as they see fit. It'd need to be very plug-and-play, and I still expect devices supporting it would need a small block (32 MB - 512 MB) of soldered RAM installed (or maybe some NOR with XIP support) for when the user pulls the cards out. The device would fall back automatically to a low-power, internal RAM/NOR-backed UEFI/BIOS environment while cards are swapped in or out, then auto-prompt the user to initiate the "real" UI space once the minimum RAM amount is inserted & detected.
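The fallback behavior is basically a tiny state machine. A rough sketch, with made-up names (`Mode`, `MemoryManager`) and a made-up 4096 MB minimum; it ignores what happens to data living on a card that gets pulled, which a real design would obviously have to handle.

```python
from enum import Enum, auto


class Mode(Enum):
    FALLBACK = auto()  # running from soldered RAM / NOR-backed firmware
    PROMPT = auto()    # enough RAM detected, waiting for the user to confirm
    FULL_UI = auto()   # the "real" UI space


class MemoryManager:
    def __init__(self, min_ram_mb: int) -> None:
        self.min_ram_mb = min_ram_mb
        self.cards = {}  # slot number -> capacity in MB
        self.mode = Mode.FALLBACK

    def insert(self, slot: int, capacity_mb: int) -> None:
        self.cards[slot] = capacity_mb
        self._reevaluate()

    def remove(self, slot: int) -> None:
        self.cards.pop(slot, None)
        self._reevaluate()

    def confirm(self) -> None:
        """User accepts the prompt to enter the full UI."""
        if self.mode is Mode.PROMPT:
            self.mode = Mode.FULL_UI

    def _reevaluate(self) -> None:
        installed = sum(self.cards.values())
        if installed < self.min_ram_mb:
            self.mode = Mode.FALLBACK  # drop back whenever RAM is short
        elif self.mode is Mode.FALLBACK:
            self.mode = Mode.PROMPT    # minimum reached, ask the user


mgr = MemoryManager(min_ram_mb=4096)
mgr.insert(0, 2048)  # still below the minimum
mgr.insert(1, 2048)  # minimum reached
mgr.confirm()        # user enters the full UI
mgr.remove(0)        # card pulled, back to fallback
print(mgr.mode)      # Mode.FALLBACK
```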

But with modern advances in memory fields (including CXL 3.0 and beyond), I don't feel this type of future development is far-fetched or even far off, and it's going to become an absolute necessity, because the resources to keep making more and more RAM & NAND that's soldered and non-transferable are going up in price while depleting in supply. We're going to have to start thinking of volatile memory in a renewable-resource type of way (paired with smarter logic for upscaling, compute (PNM, PIM), etc.), especially if we want consumer electronics spaces like gaming to exist in a non-niche capacity beyond the next 10-20 years, IMHO.

Anyway, just a brief concept for a potential memory standard & solution. Game consoles would really benefit from this, clearly, considering the frankly stupid price increases we just saw with PS5 today, and will very likely see with Xbox and Nintendo in the near future. Let alone what this means for things like the PS6. It'd be cool if Valve were already developing something like this, considering Steam Machine's specs are somewhat more modest, but I doubt they are. And yes, there are latency issues that'd have to be ironed out with this type of memory concept, clearly, but those could be solved over time. Plus, there are always workarounds (e.g. arranging your data as SoA so you only pay the penalty for the initial access latency), tho granted that requires more effort on the part of the developers.
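For anyone unfamiliar with SoA (structure of arrays) vs AoS (array of structures), here's a small Python sketch of the difference. The record layout (three float64 fields plus an int32 id) is made up for illustration, and Python is only standing in for what you'd really do in C or similar; the point is how many bytes a scan over one field has to walk across.

```python
import struct
from array import array

RECORD_FMT = "<dddi"  # hypothetical packed record: x, y, z (float64) + id (int32)
RECORD_SIZE = struct.calcsize(RECORD_FMT)  # 28 bytes per record


def build_aos(n: int) -> bytearray:
    """AoS: records stored back to back, fields interleaved."""
    buf = bytearray(n * RECORD_SIZE)
    for i in range(n):
        struct.pack_into(RECORD_FMT, buf, i * RECORD_SIZE, float(i), 0.0, 0.0, i)
    return buf


def build_soa(n: int) -> dict:
    """SoA: one contiguous array per field."""
    return {
        "x": array("d", (float(i) for i in range(n))),
        "y": array("d", [0.0] * n),
        "z": array("d", [0.0] * n),
        "id": array("i", range(n)),
    }


def sum_x_aos(buf: bytearray) -> float:
    # Has to step over y, z and id of every record to reach the next x.
    return sum(rec[0] for rec in struct.iter_unpack(RECORD_FMT, buf))


def sum_x_soa(soa: dict) -> float:
    # Reads one contiguous stream of x values and nothing else.
    return sum(soa["x"])


n = 1000
aos, soa = build_aos(n), build_soa(n)
print(sum_x_aos(aos) == sum_x_soa(soa))       # True
print(len(aos), len(soa["x"]) * soa["x"].itemsize)  # 28000 8000
```

Same result either way, but the SoA scan covers 8000 contiguous bytes instead of striding through 28000, so after the first access the reads are one sequential stream.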

Does anyone else around here see potential in this type of memory concept, especially as a solution to what we're seeing today (or part of the solution, anyway)? Any ideas on how to improve it? Alternative memory solutions (that are realistic and relatively practical)?

(*Bolded certain parts for emphasis to act as a kind of TL;DR)
