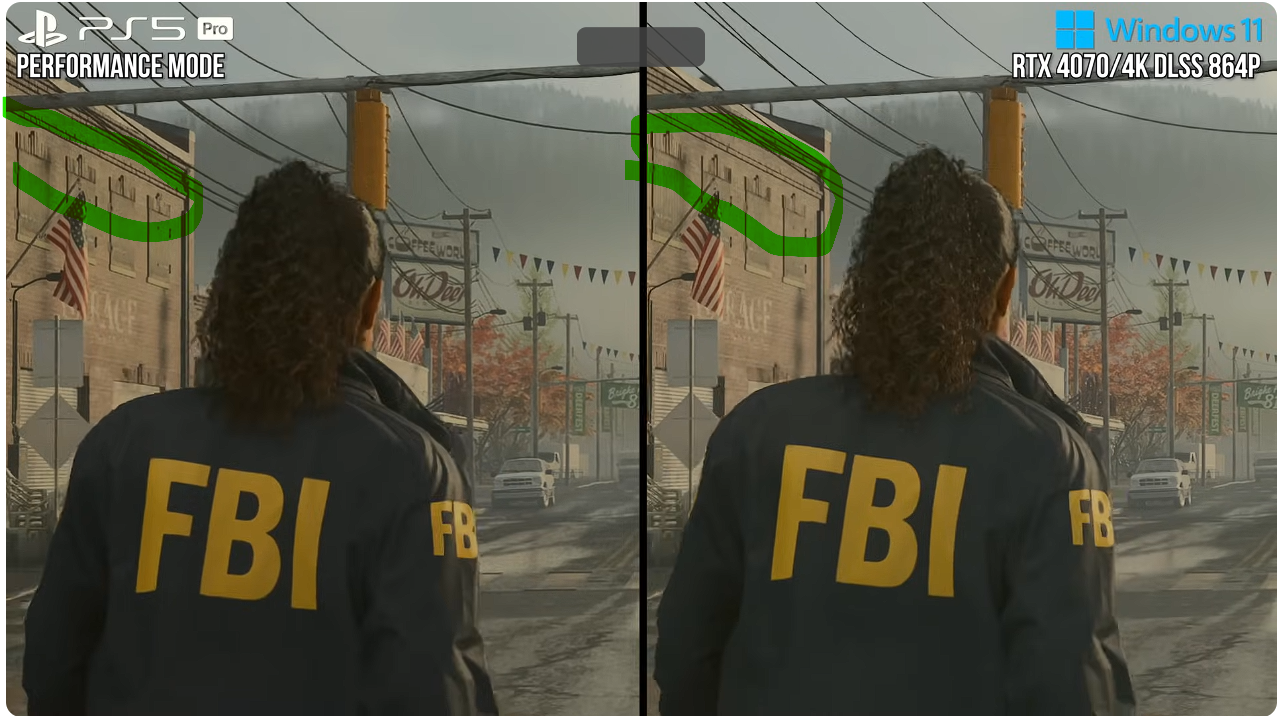

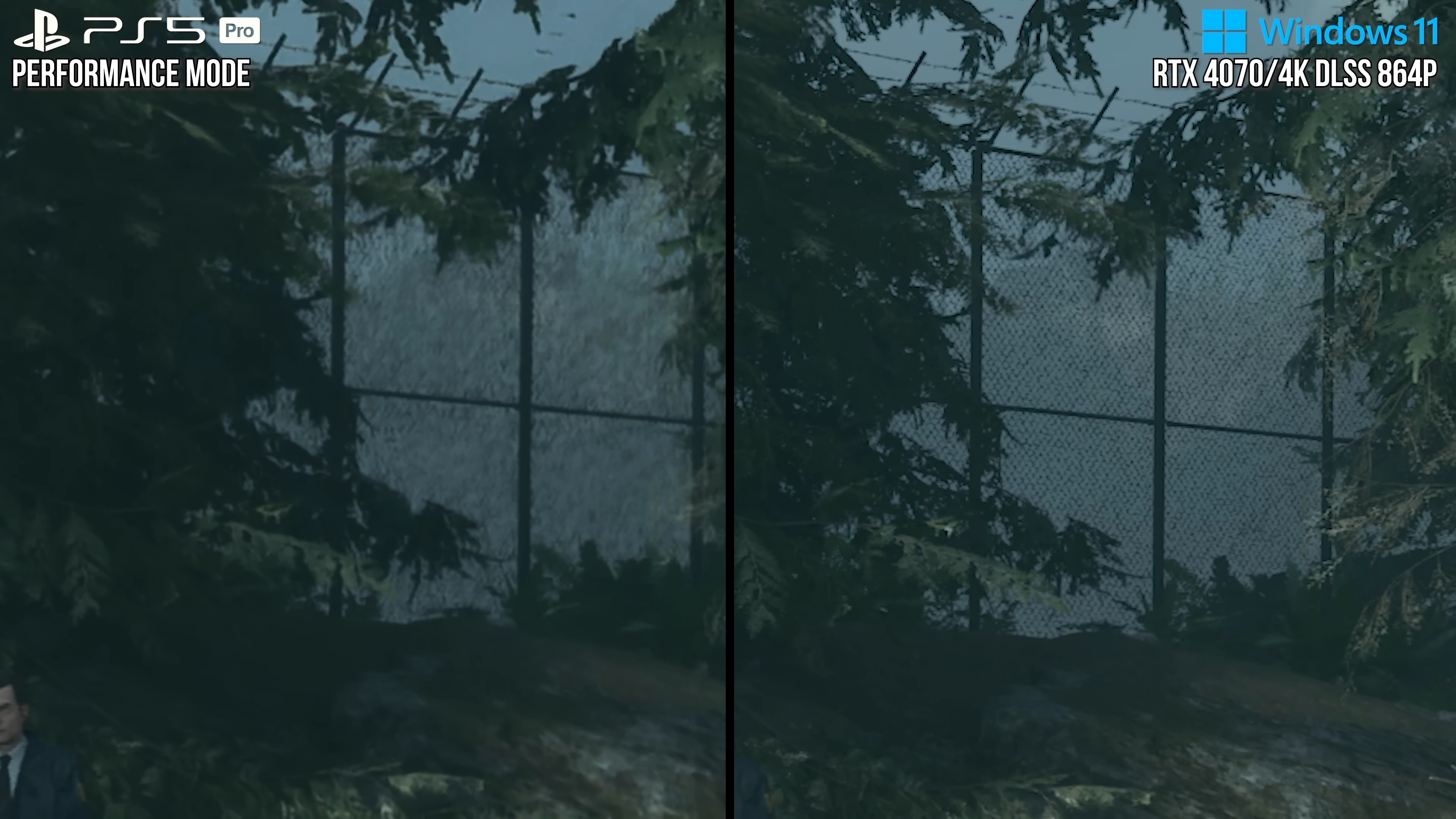

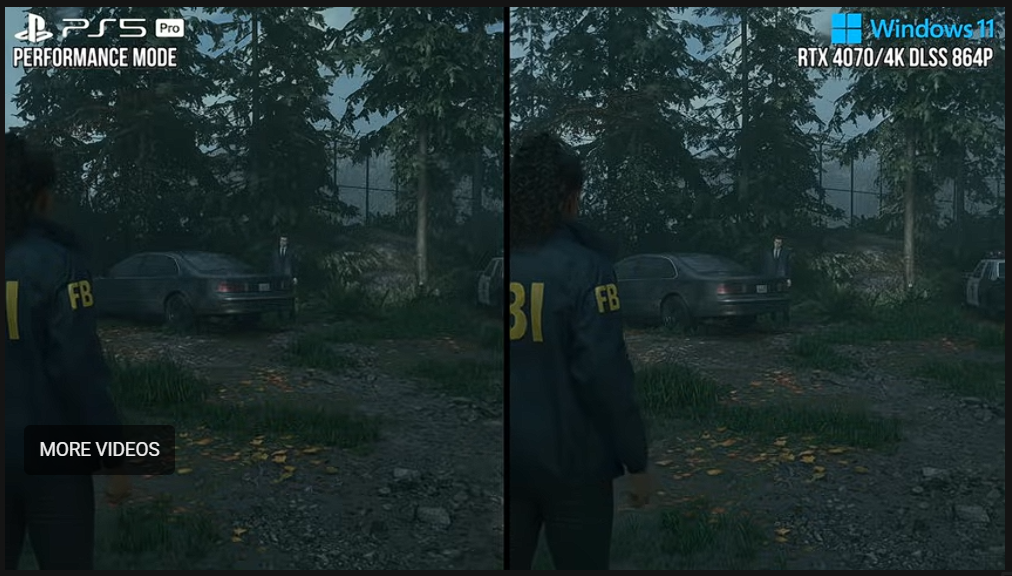

This is not path tracing. This specific issue does not happen with preset E at high settings, but it still happens at high internal resolutions when using preset M. It doesn't happen with TSR either, which I show here.

The latest DLSS 4.5 with "M" preset definitely causes a noise problem around the grass in your video. Your video made me test all the available anti-aliasing (AA) methods in this game and rethink my opinion about DLSS.

Here's my own test. I used path tracing at low settings because it shows noise around vegetation more easily than Lumen does.

-DLSSP (50% resolution scale) using "E" DLSS preset shows noise / white splashes around the vegetation, particularly in dimly lit areas.

-DLSSP (50%) using the latest "M" DLSS 4.5 preset shows even stronger noise.

-TSR (50%) has no noise. I also tested FSR3, and there was no noise either.

For some strange reason, DLSSP (50% resolution scale) introduces noise around the vegetation when path tracing is enabled. The other anti-aliasing (AA) methods use the same internal resolution and number of rays, yet I don't see similar noise with them. TSR and FSR3 have other problems though: the image isn't as sharp and clean, and it breaks up during motion, so DLSS still offers the best results overall. It's also possible to eliminate the DLSS noise around the grass by increasing the internal resolution to 67% (DLSS Q) and maximizing the path tracing settings. This results in a clean, artifact-free image.
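
To put those resolution scales in concrete terms, here is a rough sketch of how the internal render resolution follows from the output resolution. It assumes a 3840x2160 output (the tests further down are at 4K) and treats the scale as a per-axis factor. The function name is made up for illustration, and the game may round the 67% mode differently.

```python
def internal_resolution(out_w, out_h, scale):
    """Return the internal render size for a per-axis resolution scale."""
    return round(out_w * scale), round(out_h * scale)

# Assumed 4K output; the scale values are the ones quoted in the post.
OUT_W, OUT_H = 3840, 2160

for label, scale in [("DLSSP 50%", 0.50), ("DLSS Q 67%", 0.67)]:
    w, h = internal_resolution(OUT_W, OUT_H, scale)
    pixel_share = (w * h) / (OUT_W * OUT_H)
    print(f"{label}: {w}x{h} ({pixel_share:.0%} of output pixels)")
```

So at 4K, 50% means 1080p internally with a quarter of the output pixels, while 67% renders a bit under half of them.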

I also tested Black Myth: Wukong with Lumen and High settings, but I was too lazy to record another video, so I will just describe my observations. I found that the DLSS "E" preset works well with Lumen lighting, so it's just PT in this game that causes problems with that preset. The latest DLSS 4.5 "M" preset caused shimmering / noise even with Lumen lighting. I think the "K" DLSS 4.0 preset offers the best image quality in this game. The image is sharper than with the "E" preset and does not have the noise that can be seen with the "M" preset.

While testing Lumen at high settings, I realized that the game can run at a locked 60 fps even at 4K DLAA 4.0 on my RTX4080S. Black Myth Wukong is extremely demanding with maxed out settings, but it's also very scalable. However, the latest 4.5 DLAA comes at a significant cost.

DLAA 4.0 (100% res scale) "K" preset, high settings preset, lumen lighting - 71 fps

DLAA 4.5 (100%) "M" preset with the same settings. I think the "M" preset is not worth using on my card at 4K 100% resolution; the cost is too high (26% relative difference).
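
A "26% relative difference" can be read two ways, and the text here only gives the "K" result (71 fps), so here is a small sketch of what each reading would imply. These are estimates derived from the 26% figure, not measured numbers, and the helper names are just for illustration.

```python
def fps_if_slower_by(baseline_fps, pct):
    """New fps if the new preset is `pct` percent slower than the baseline."""
    return baseline_fps * (1 - pct / 100)

def fps_if_baseline_faster_by(baseline_fps, pct):
    """New fps if the baseline is `pct` percent faster than the new preset."""
    return baseline_fps / (1 + pct / 100)

K_FPS = 71   # DLAA 4.0 "K" result from the post
COST = 26    # relative difference quoted in the post

# Both values are implied estimates, not benchmark results.
print(f'"M" is 26% slower than "K": about {fps_if_slower_by(K_FPS, COST):.1f} fps')
print(f'"K" is 26% faster than "M": about {fps_if_baseline_faster_by(K_FPS, COST):.1f} fps')
```

Either way the "M" preset would land somewhere in the low-to-mid 50s, below a locked 60 fps.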

With DLSSP (50% res scale) and FGx2 on top of that, the game runs at 178 fps and I think the graphics still look good. I think the PS5 version uses similar settings (1080p internally + FGx2 and high settings), so it appears that my RTX 4080 is roughly three times faster. The PS6 should offer similar GPU power, which gives an idea of how big the difference between the PS5 and PS6 will be.

Using the same settings (50% res scale) with the latest DLSS 4.5 "M" preset, the performance difference compared to 4.0 is just 2%. That's acceptable. It seems that the tensor cores on my RTX4080 are powerful enough to run DLSS 4.5 at 50% resolution scale without worrying about performance.

PT decreases performance significantly, but even at low settings PT improves the lighting quality a lot, and I think a 37% performance cost is justified.

DLSSP K preset, 50% res scale + FGx2, high settings, lumen lighting

Maxed out PT with Epic settings is much more demanding though. My framerate went from 178 fps (Lumen, high settings) to 87 fps. It's still playable with a gamepad, but the maximum settings in this game clearly require a much stronger GPU (RTX 5090), even with DLSS.
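
For reference, here is a quick sketch of what the drop from 178 fps to 87 fps works out to as a percentage and in frame time. Both figures include FGx2, so these are displayed frames, not rendered ones; the helper names are just for illustration.

```python
def percent_drop(before_fps, after_fps):
    """Performance loss as a percentage of the starting frame rate."""
    return (before_fps - after_fps) / before_fps * 100

def frame_time_ms(fps):
    """Average time per displayed frame in milliseconds."""
    return 1000 / fps

lumen_fps, pt_fps = 178, 87  # figures quoted in the post

print(f"Drop: {percent_drop(lumen_fps, pt_fps):.1f}%")
print(f"Frame time: {frame_time_ms(lumen_fps):.1f} ms -> {frame_time_ms(pt_fps):.1f} ms")
```

That is roughly a 51% drop, or about double the time per displayed frame (5.6 ms to 11.5 ms).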