
Pragmata PC Performance Raises Concerns for 8 GB GPU Users, Digital Foundry Reports

- Thread starter seb85

- Start date

Buggy Loop

Member

Where is the Neural Texture Compression Nvidia? We need you.

There's probably a point where most 8GB cards won't have the inference power to do on-sample NTC anyway, unless it's on blackwell I guess, Ada maybe.

kevboard

Member

The Pragmata demo sold me on the game; unfortunately my 1060 made it not worth playing on my current rig. It runs smooth, but the graphics had to be set so low it looked like I was playing a PS3 game at 480p.

should still run better than the Switch 2 version on a 1060 tho.

MayauMiao

Member

should still run better than the Switch 2 version on a 1060 tho.

Sure but I'm not going to play Switch 2 level of graphic on my just updated PC (except GPU) with a 32" monitor.

kevboard

Member

Sure but I'm not going to play Switch 2 level of graphic on my just updated PC (except GPU) with a 32" monitor.

should be Series S level actually.

MayauMiao

Member

should be Series S level actually.

It just looks bad on a 1060. I'll try to get a 5060 Ti with 16GB.

WX3

Member

Lmfao

The article literally doesn't test or even mention performance at the resolution I'm using. It completely dodges the exact scenario I described: RTX 3070 8GB running every modern 2025/2026 release at 1080p, stable 50-60 FPS, no stuttering, no crashes, no texture pop-in. That's what you called 'inadequate for far too long'

Next time link something that actually addresses my setup instead of proving you can't read past the headline.

Weak af

I do the same as you with a 3060ti and at 1080p I have zero issues with performance. I will be upgrading in the future, but I don't really worry about it at all.

blastprocessor

The Amiga Brotherhood

Looking at those RT reflections suggests they use a poor-quality variant; it really shouldn't be that bad.

I'm just comparing that to, say, Cyberpunk 2077 on Pro, which has surprisingly high-resolution RT reflections.

It also doesn't seem to support RT shadows and needs path tracing to actually do decent shadows.

Bojji

Gold Member

Looking at those RT reflections suggests they use a poor-quality variant; it really shouldn't be that bad.

I'm just comparing that to, say, Cyberpunk 2077 on Pro, which has surprisingly high-resolution RT reflections.

It also doesn't seem to support RT shadows and needs path tracing to actually do decent shadows.

Standard RT in RE Engine was always super weak. With Pro in the picture they should upgrade it, both RTGI/RT reflections quality, denoiser and maybe add RT shadows (PT on PC is doing all of this and more).

Hyet

Member

I guess my 10gb card is fine?

let's cope together, brother

Madserb2023

Member

It has been a problem since 2020 when consoles had 16 gigs as they are lowest common denominator. Capcom will be developing their games around having 16 gigs of vram.

Bojji

Gold Member

It has been a problem since 2020 when consoles had 16 gigs as they are lowest common denominator. Capcom will be developing their games around having 16 gigs of vram.

True, but actual usable memory is ~13GB, and you have to subtract "CPU stuff" from this so 2-6GB on average (maybe?). Console memory that can be used as vram is probably 7-11GB range, depending on the game.

The problem with 8GB GPUs is that they are 8GB only in total, actual memory that can be used for games is ~6.7GB.
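The budget arithmetic in the post above can be sketched in a few lines of Python. The figures (about 13 GB usable, 2 to 6 GB for CPU-side data, about 6.7 GB usable on an 8 GB card) are the poster's rough estimates rather than measured values, and the function name is made up for illustration.

```python
def gpu_budget_range(usable_gb, cpu_min_gb, cpu_max_gb):
    """Return (low, high) GB left for GPU data once CPU-side data is subtracted."""
    return usable_gb - cpu_max_gb, usable_gb - cpu_min_gb

# Poster's estimates for a 16 GB console: ~13 GB usable, 2-6 GB taken by CPU-side data.
console_low, console_high = gpu_budget_range(13.0, 2.0, 6.0)

# Poster's estimate of what an 8 GB PC card can actually give a game.
PC_8GB_USABLE_GB = 6.7

print(f"Console GPU budget: {console_low:.1f} to {console_high:.1f} GB")
print(f"8 GB card shortfall vs. the low end: {console_low - PC_8GB_USABLE_GB:.1f} GB")
```

On these numbers an 8 GB card sits just under the low end of the console range, which is the point the post is making; different estimates for the CPU-side share would move the range accordingly.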

Skifi28

Member

another foolish developer that will be deleted sooner or later.

Wat

Bojji

Gold Member

another foolish developer that will be deleted sooner or later.

It doesn't matter if 8GB VRAM is enough or not.

8GB VRAM is all gamers can afford right now.

People that don't know what they are doing. AMD offered cheaper cards with more vram for years, intel as well. Nvidia also "by mistake" released 12GB 3060 in 2021 that is the most popular GPU right now.

YeulEmeralda

Linux User

It has been a problem since 2020 when consoles had 16 gigs as they are lowest common denominator. Capcom will be developing their games around having 16 gigs of vram.

Meh it depends on resolution. Some people are still okay with 1080p.

MCH2024

Member

People that don't know what they are doing. AMD offered cheaper cards with more vram for years, intel as well. Nvidia also "by mistake" released 12GB 3060 in 2021 that is the most popular GPU right now.

They offered substantially inferior cards for slightly less money. They are quite literally half a console gen to a full console gen behind in GPU architecture like every gen. They then put them on maintenance driver support practically 2-4 years in. That's why RDNA2 is double digit better on Linux than Windows. AMD froze the RDNA2 driver for all intents a long time ago. They just fix critical issues and security vulnerabilities.

AMD Cards are what we call "value traps". It's the appearance of value for frugal suckers without actual value.

You buy a worse GPU from a worse brand so you pay a lower price. Real gamers understand this. It's why 9070 XT ends up competing with 9070 / 5070 in the real world.

nkarafo

Member

It has been a problem since 2020 when consoles had 16 gigs as they are lowest common denominator. Capcom will be developing their games around having 16 gigs of vram.

No, on consoles that's the full, shared memory. It also counts as regular RAM.

Around 12GB should be available as VRAM, which should also be the minimum for console settings/resolution on PC GPUs.

Topher

Identifies as young

Meh it depends on resolution. Some people are still okay with 1080p.

51% of steam hardware survey still use 1080p

kevboard

Member

It has been a problem since 2020 when consoles had 16 gigs as they are lowest common denominator. Capcom will be developing their games around having 16 gigs of vram.

they only have about 13GB usable by devs, and you can probably expect around 3 to 4 GB of that being used by the CPU and not as VRAM.

Bojji

Gold Member

They offered substantially inferior cards for slightly less money. They are quite literally half a console gen to a full console gen behind in GPU architecture like every gen. They then put them on maintenance driver support practically 2-4 years in. That's why RDNA2 is double digit better on Linux than Windows. AMD froze the RDNA2 driver for all intents a long time ago. They just fix critical issues and security vulnerabilities.

AMD Cards are what we call "value traps". It's the appearance of value for frugal suckers without actual value.

You buy a worse GPU from a worse brand so you pay a lower price. Real gamers understand this. It's why 9070 XT ends up competing with 9070 / 5070 in the real world.

No matter the inferior upscaling or RT, you still won't be vram limited on AMD cards.

There are raster games that require more than 8GB to function properly on console settings, like FFXVI. And you still have access to XeSS on AMD cards or ability to mod in FSR4 support.

nkarafo

Member

AMD Cards are what we call "value traps". It's the appearance of value for frugal suckers without actual value.

You buy a worse GPU from a worse brand so you pay a lower price. Real gamers understand this. It's why 9070 XT ends up competing with 9070 / 5070 in the real world.

I swore I would never buy an AMD GPU after my x1950pro was on "legacy driver" before I knew it. I could play some newer, lighter games on my older/weaker Nvidia card that wouldn't even launch on the newer AMD one, because the drivers wouldn't support them.

MCH2024

Member

One last thing is that "you need infinite RAM. VRAM is free" is AMD Marketing line since RDNA2 being parroted around by AMD Influencers who constantly communicate with AMD and people at AMD (MLID, KeplerL2, Steve HUB, Vex, etc).

They know that consumers really don't want to touch AMD cards if they can help it. So their marketing strategy is quite literally to disqualify Nvidia cards from consideration even when they are way better and more technologically advanced. And it's a moving goalpost. HUB opened his 5070 review by complaining about the one game that had an issue with 12GB.

It's just a line that's very easy to launder as pro consumerism so it spreads.

The major issue with VRAM is that for PS5 ~9GB VRAM acts as a realistic upper limit on how much can be allocated for just GPU. And moving data from CPU to GPU is a lot more flexible.

PC meanwhile has GPUs that smoke PS5 Pro but have 8GB VRAM. and that amount gets eaten up by System usage and GSP on Linux.

It's just too brutal and is only manageable if you target PC first, or you target PS5 / PC. PS5 => PC is just asking for trouble and you'd need considerable work just to make it work (look at nexus spiderman stuff).

This whole situation is also why Nvidia is strongly considering 9GB 5050 / 5060 / 5060 Ti. Launch TBD. The only hold back is that AMD Influencers and reddit will devour Nvidia with a hate cycle if they do it.

MCH2024

Member

No matter the inferior upscaling or RT, you still won't be vram limited on AMD cards.

Most consumers don't agree. It's a valid view held by a niche subset of real gamers.

There are raster games that require more than 8GB to function properly on console settings, like FFXVI

PS5 rush ports.

Bojji

Gold Member

Most consumers don't agree. It's a valid view held by a niche subset of real gamers.

PS5 rush ports.

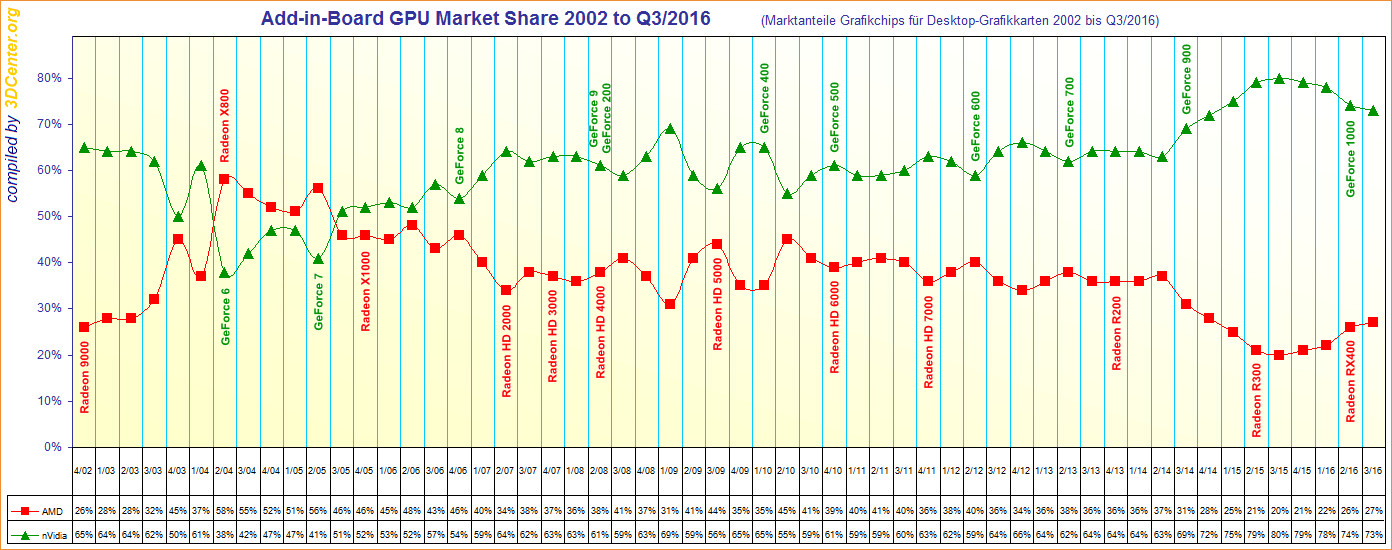

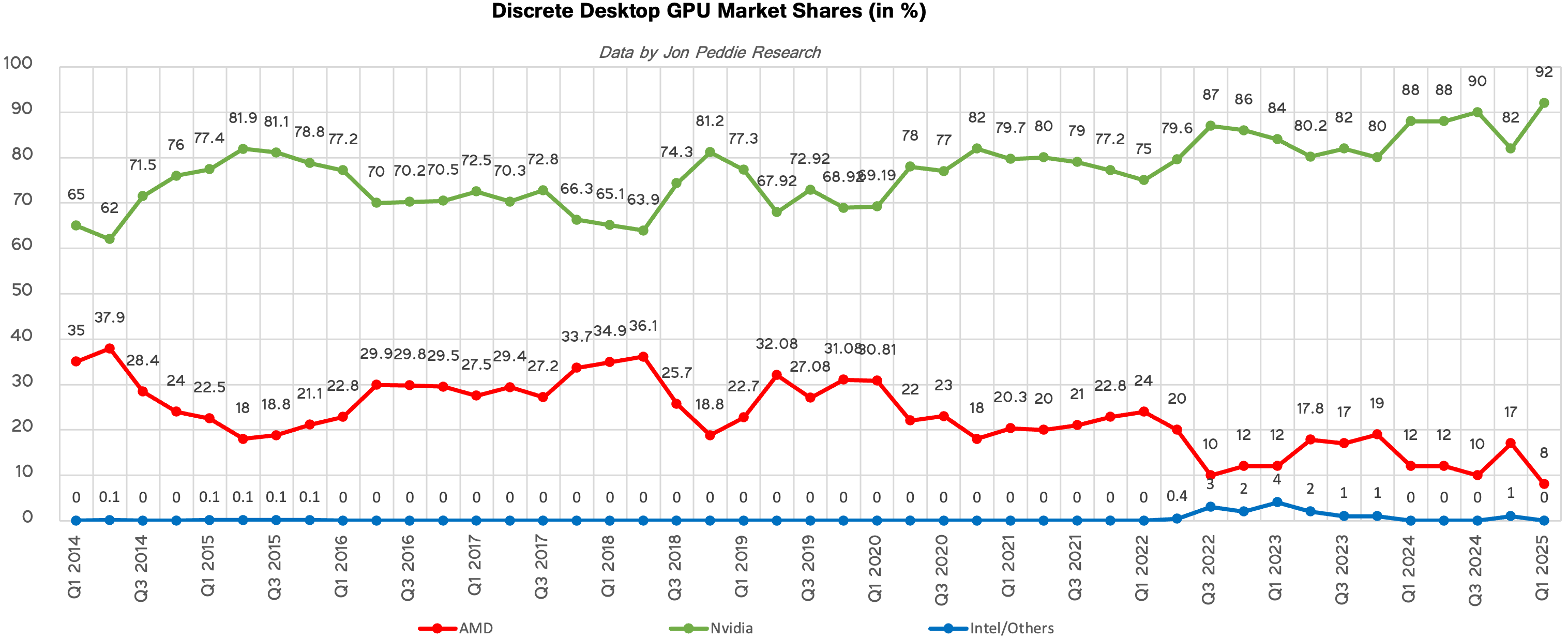

Last time AMD was relevant was ~5000 series when they had around half of the market. Since then, nvidia was dominating and this was long before DLSS or RT

They started getting totally dominated around Maxwell launch, never recovered since then...

And maxwell offered no real advantages over AMD, no RT/ML like stuff - it was just more efficient.

MCH2024

Member

I swore I would never buy an AMD GPU after my x1950pro was on "legacy driver" before I knew it. I could play some newer, lighter games on my older/weaker Nvidia card that wouldn't even launch on the newer AMD one, because the drivers wouldn't support them.

Exactly.

The issue is that no one owns or buys AMD at this point except for a small handful of gamers. And those gamers have extreme brand loyalty from sunk cost fallacy.

This makes it so there is no demand for AMD whining, while Nvidia whining gets millions of views. It's just youtube algorithm dynamics being reflected in social media perception while being mostly divorced from commercial perception.

It's also such a stupid market because most of these people whining about Nvidia still buy Nvidia. Praising AMD to them is just psychological games to get cheaper Nvidia. AMD engineers have complained about this before.

The best example is the RDNA2 maintenance driver episode. RDNA2 has already been on maintenance drivers for like well over 12 months when that came out. An AMD engineer was honest for a sec and it generated a PR fiasco. So AMD is just shipping packages that change as little as possible for RDNA2/3 as a way of minimizing the validation work they have to do.

MCH2024

Member

Last time AMD was relevant was ~5000 series when they had around half of the market. Since then, nvidia was dominating and this was long before DLSS or RT

Equivalence fallacy.

There is a massive difference between the days where AMD had 1/3rd share, heck the days where they have 1/5 to 1/7 share. (RDNA2-3)

To now where it's close to 1/20……..

Madserb2023

Member

they only have about 13GB usable by devs, and you can probably expect around 3 to 4 GB of that being used by the CPU and not as VRAM.

8 gigs is not enough and manufacturers should not be offering it. They will keep selling supposedly mid range GPUs with 8 gigs of ram when the PS6 is out even if that console has 24 gigs or more if people defend it. Consoles should be seen as the minimum target which it is for developers. It's not just about resolution, games will be designed around whatever the next generation has and then Pragmata will be the norm. It's going to happen as it has done before several times. Nothing new. The only difference, people didn't defend GPUs with 512mb when the PS4 was out. That would be the equivalent of defending 8 gigs when the PS6 is out and apparently that's next year.

MCH2024

Member

And maxwell offered no real advantages over AMD, no RT/ML like stuff - it was just more efficient.

Yes.

And a few generations later Turing was the greatest GPU generation leap in decades. There is a lot of shit that Nvidia did on Turing that's

they only have about 13GB usable by devs, and you can probably expect around 3 to 4 GB of that being used by the CPU and not as VRAM.

12.5GB on the dot for PS5. In RE9 you can see PS5 had worse textures than PC 8GB VRAM. Because it was built for PC as the primary platform. This made it so it made sense to use more memory on the CPU.

The major issue with 8GB is the games designed for PS5 first where PS5 is pushing the maximum usage (~9GB VRAM). Then add PC's overheads and 8GB VRAM has issues.

PS5 was designed around a small amount of memory being used by CPU backed up by constant streaming from the SSD. But That only took off in a few games mostly by insomniac.

asdasdasdbb

Member

That would be the equivalent of defending 8 gigs when the PS6 is out and apparently that's next year.

8/9 GB is going to be around for a while... because NV wants to hit price points and still have some margin.

MCH2024

Member

8/9 GB is going to be around for a while... because NV wants to hit price points and still have some margin.

Nvidia margin is law at GeForce.

Means more VRAM => Higher MSRP. Inescapably.

In the past they had to compete with AMD and rely on gaming for profits. But neither of that is the case now. So the margins will not come down.

Anyone who bought 8GB cards knowing consoles had 16 GBs is an idiot and needs to turn in their PC gaming card. I dont want you in my team. You are dumb and you should go buy a switch.

But that's 16gb in total ram. What's the difference between that and a PC with separate vram and system ram?

The demo looked and ran great at 4k (I think with one of the DLSS settings but can't remember) and over 60fps on my 8GB 3070TI. I don't know how much the later parts of the game push things.

Madserb2023

Member

8/9 GB is going to be around for a while... because NV wants to hit price points and still have some margin.

No doubt but it will set a precedent for next generation. I am skeptical but if the next consoles are anywhere near 30 gigs it will 100% cause problems. Not going to bet on an exception. I had a 4870 XT back in the day with 2 gigs and was perfectly fine during the Xbox 360/PS3 but died to death when the PS4 came out. My GeForce 4 became useless the generation previous. One thing I agree with Digital Foundry on was the discussion around Doom: The Dark Ages. People don't accept having to upgrade anymore which was the norm with PCs. Maybe it will be different this time with Steam Deck etc. Still don't agree with Nvidia offering it because they can.

nkarafo

Member

The only difference, people didn't defend GPUs with 512mb when the PS4 was out. That would be the equivalent of defending 8 gigs when the PS6 is out and apparently that's next year.

512MB would be way below minimum requirements of games from that era. The correct equivalent would be 2GB since that amount was barely cutting it.

I got a GTX 950 2GB during those days. Big mistake. Because even though this GPU was much faster than the 750ti equivalent inside the PS4, i still had to lower the resolution in some games like RE7 from 1080p to 720p to be able to run them at otherwise console settings without the massive VRAM limit hiccups.

I quickly replaced it with a GTX 1060 6GB after a year. That was a good decision. And after that a 3060 12GB. And now a 5060ti 16GB. I now always go for more VRAM than i need because i know first hand how bad it is when you hit the limit.

Bottlenecking otherwise capable GPUs with less VRAM than they can easily handle is e-waste.

SlimySnake

Flashless at the Golden Globes

But that's 16gb in total ram. What's the difference between that and a PC with separate vram and system ram?

The demo looked and ran great at 4k (I think with one of the DLSS settings but can't remember) and over 60fps on my 8GB 3070TI. I don't know how much the later parts of the game push things.

The problem is path tracing. That's what Alex was talking about.

kevboard

Member

8 gigs is not enough and manufactures should not be offering it. They will keep selling supposedly mid range GPUs with 8 gigs of ram when the PS6 is out even if that console has 24 gigs or more if people defend it. Consoles should be seen as the minimum target which it is for developers. It's not just about resolution, games will be designed around whatever the next generation has and then Pragmata will be the norm. It's going to happen as it has done before several times. Nothing new. The only difference, people didn't defend GPUs with 512mb when the PS4 was out. That would be the equivalent of defending 8 gigs when the PS6 is out and apparently that's next year.

You're moving the goalposts tho.

the fact is that devs already have to optimise for systems with around 8 to 9 GB of VRAM, the consoles don't have 16GB of VRAM, only realistically 8 to 9, due to a massive portion being taken up by the OS and another sizable chunk by the CPU

asdasdasdbb

Member

You're moving the goalposts tho.

the fact is that devs already have to optimise for systems with around 8 to 9 GB of VRAM, the consoles don't have 16GB of VRAM, only realistically 8 to 9, due to a massive portion being taken up by the OS and another sizable chunk by the CPU

Devs won't optimize for 8/9 GB. People will just have to turn the settings down.

The consoles having 30 GB might not mean much if it only gets used for neural rendering.

Senua

Member

It sucks that most gaming PC will struggle with Pragmata.

Switch 2 master race?

bender

What time is it?

Switch 2 master race?

memoryman3

Member

Nvidia margin is law at GeForce.

Means more VRAM => Higher MSRP. Inescapably.

In the past they had to compete with AMD and rely on gaming for profits. But neither of that is the case now. So the margins will not come down.

Then there's an opening for AMD to undercut and deliver value. GPUs aren't ecosystems like Steam - no one needs most of Nvidia's features. Especially now as RDNA4 and beyond are actually competent at upscaling and RT.

PS6 having 30GB (24GB+ VRAM) will set the standard and Nvidia will have to follow if somehow Sony can moderate prices. Gamers will eventually catch on to Nvidia's gouging and never recommend their cards.

8GB is just useless for driving 4K displays. At least with an "underpowered GPU" you can opt for 4K at 30fps.

MCH2024

Member

Then there's an opening for AMD to undercut and deliver value.

Except that the opening is fighting against a massive deficit in engineering competence. your margin is my opportunity only works when the technical competence is equalized. Furthermore, Tapeout and mask set costs are rising and they add fixed costs that hurt a lot more when you sell a fraction of the volume.

GeForce is just so much better. just compare 9070 XT (N4C, 357 mm², 640 GB/s) and RTX 4080 Super (N5B, 379 mm², 736 GB/s). 4080 Super quite literally wins on all metrics including being half a console generation ahead architecturally.

RDNA2/3 fared even worse than this vs Nvidia.

no one needs most of Nvidia's features

They want Nvidia features because the features are genuinely revolutionary and industry shaking. The idea that for less than 1 ms you can generate 15/16 pixels artificially at native like quality and motion fluidity is absolutely nuts. Same for Nvidia's RTX neural texture compression.
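As a rough sketch of how a figure like 15/16 can come about: if upscaling renders half the output resolution on each axis (a quarter of the pixels) and frame generation displays four frames per rendered frame, only 1/16 of displayed pixels are rendered conventionally. Both settings are assumptions picked for illustration, since the post does not say which modes it has in mind, and the function name is hypothetical.

```python
from fractions import Fraction

def generated_fraction(render_scale_per_axis, frames_per_rendered_frame):
    """Fraction of displayed pixels that are generated rather than rendered.

    render_scale_per_axis: internal resolution / output resolution on each axis
    frames_per_rendered_frame: displayed frames per conventionally rendered frame
    """
    rendered = render_scale_per_axis ** 2 / frames_per_rendered_frame
    return 1 - rendered

# Assumed settings: half resolution per axis, four displayed frames per rendered frame.
print(generated_fraction(Fraction(1, 2), 4))  # 15/16
```

With upscaling alone (one displayed frame per rendered frame) the same function gives 3/4, so the 15/16 figure depends on stacking both techniques.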

The usage rate of practically all Nvidia features is very high from Nvidia telemetry. people really like it when their GPUs have their life extended and their GPUs get better overtime.

RDNA4 and beyond are actually competent at upscaling and RT.

competent yet still architecturally worse than Ada.

The cruel reality is that at MSRPs, Nvidia has a slight consumer perceived value edge in the RTX 50 vs RX 9000 market. That forces AMD into a very low margin position. Which is why AMD is trying and failing to sell closer to Nvidia prices by leveraging their best department: AMD product marketing.

PS6 having 30GB (24GB+ VRAM) will set the standard

it will set the standard for nothing mainstream. it's a dedicated gaming only device with >750$ initial price and likely well over 1500$ in total cost of ownership over its lifetime.

PS6 is a Pro. Playstation is transitioning into a PC like "family of consoles" so they can target multiple price points. This is necessary given inequality makes it so the optimal strategy is upscale customers subsidize lower income ones.

And it will be competing with 18GB dGPUs overall. 5070 Super / PTX 1070 XT. PS6 deliberately compromised performance (160W, cheap cooling, etc) to commodity max as a strategy to differentiate from PC. whether it succeeds depends on developers and market power dynamics that currently are going in favor of PC hard.

8GB is just useless for driving 4K displays.

Nonsense. most older games will work and many games will just have pop in or acceptable slowdowns. 4K gaming is just not that mainstream, it's more 500$ GPU Territory

memoryman3

Member

Nonsense. most older games will work and many games will just have pop in or acceptable slowdowns. 4K gaming is just not that mainstream, it's more 500$ GPU Territory

People aren't on the market for new GPUs/consoles to play old games. New GPUs come with the expectation of better graphics and 1440p/4K TVs and monitors assist with this.

If you say that acceptable slowdown = 10-20FPS with awful stuttering, then okay. The PS5 Pro or even Xbox Series X's "outdated" AMD RDNA GPUs have been besting new Nvidia RTX cards on modern displays with respect to graphics capabilities that actually matter to people. Ray tracing/path tracing are also VRAM intensive.

GeForce is just so much better. just compare 9070 XT (N4C, 357 mm², 640 GB/s) and RTX 4080 Super (N5B, 379 mm², 736 GB/s). 4080 Super quite literally wins on all metrics including being half a console generation ahead architecturally.

Not MSRP. It's a $600 card vs a $1000 card. That is what ultimately matters to consumers. The 9070XT should be compared to Nvidia's 4070 Super or 5070 cards. At 4K with good art and textures, AMD is the easy choice. 30FPS and 60FPS locks make the small Nvidia performance bump irrelevant.

MCH2024

Member

People aren't on the market for new GPUs/consoles to play old games. New GPUs come with the expectation of better graphics and 1440p/4K TVs and monitors assist with this.

8GB is fine. you're acting like it's a problem that a 300$ GPU has compromises of any sort.

8GB issues are disgustingly overstated by AMD influencers who provide cover for PS5 rush ports. look at every game.

The Last of Us, Forspoken, Monster Hunter Wilds, Spider-Man, etc.

All those games were fixed for 8GB months after release after complaints. Some developers just wanted to be able to copy paste PS5 games. Some games were even fixed with unreal engine streaming pool tweaks. it's just lazy developer syndrome + AMDUB misinfo and marketing combo.

If you say that acceptable slowdown = 10-20FPS with awful stuttering,

Not really the norm. I can tell you don't play on PC.

The PS5 Pro or even Xbox Series X's "outdated" AMD RDNA GPUs have been besting new Nvidia RTX cards

They don't. XSX / PS5 are strictly inferior to an RTX 3060 in every way, including VRAM.

You're acting like Console tech isn't on the PC market. you can get a GPU slightly above PS5/XSX (RX 6700) easily.

Guess what? it sucks. No one wants it. better image quality also includes better than native DLSS 4.0 / 4.5, Real RayTracing (Greatest leap in graphics fidelity since the 3d triangle, mark cerny) and path tracing.

Not MSRP. It's a $600 card vs a $1000 card.

Yes, they have similar costs but Nvidia provides substantially more value. that was my point, if one looks at the silicon and the memory, and considers Ada is late 2022 and RDNA4 is early 2025, it's disgraceful that RDNA4 is so much worse than Ada.

Just like AMD occasionally bullies Intel, Nvidia bullies Radeon 24/7. every generation is basically a question if whether or not AMD can close the technical competence gap by enough that they can still make money while undercutting Nvidia.

The possibility of AMD outdesigning Nvidia doesn't really exist. you'd need massive shakeups at either GeForce or Radeon.

The 9070XT should be compared to Nvidia's 4070 Super or 5070 cards

Yes and that's how it is in reality.

the problem is that AMD priced 9070 XT at 699$ for months vs 549$ 5070. closer to 5070 Ti than to a 5070, even though 9070 XT is a 5070 competitor overall.

Roni

Member

51% of steam hardware survey still use 1080p

My 3080Ti is Path Tracing everything thus far precisely because I can live with 1080p. I can't see myself moving on from it. Whatever extra power I get I'll want it in effects.