1) Dynamic HDR metadata. HDR metadata can be sent scene-by-scene or even frame-by-frame along with the picture. With HDR10 today, the metadata is static: it's defined once for the whole movie when playback starts, so every scene is described by the same values. Dolby Vision already has this advantage today, and dynamic metadata is now built directly into the HDMI 2.1 spec.

2) Variable Refresh Rate (VRR). Definitely high on the list for gamers. Having G-Sync/FreeSync-style adaptive sync as part of the standard is a big deal.

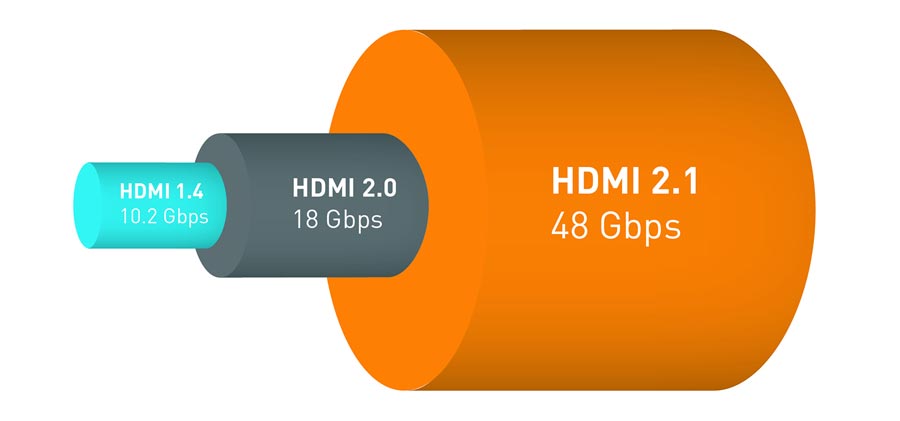

3) Larger pipe = no need to compress. HDMI 2.0 tops out at 18 Gbps, which is only enough for full 4:4:4 color at 4K/60 Hz with 8-bit color depth. If you are on a 10-bit TV, you need chroma subsampling (4:2:2 or 4:2:0, which throws away some color resolution) to get 4K/60. Most people buying decent 4K TVs are getting 10-bit panels or better and want to use that extra color information, so HDMI 2.1 (up to 48 Gbps) matters if you want uncompressed color at 4K/60 Hz. It can also carry 4K/120 Hz at 10-bit 4:4:4 without compression, while 8K/60 Hz fits uncompressed only with 4:2:0 subsampling; full 4:4:4 at 8K/60 needs Display Stream Compression (DSC). Sure, very few sources can drive a TV at 4K/120 Hz today, but the ability to run 4K/60 uncompressed has more immediate benefits. And if you hook up your PC, HDMI 2.1 lets your TV act as a giant uncompressed 4K/120 Hz monitor with G-Sync-style variable refresh rate.
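To see why 10-bit 4:4:4 at 4K/60 doesn't fit through HDMI 2.0, here is a rough back-of-the-envelope calculator. It's a simplified sketch under several assumptions: it uses CTA-861-style total timings (active pixels plus blanking, e.g. 4400 x 2250 for 4K), takes the usable video rate as 18 Gbps x 8/10 for HDMI 2.0 (TMDS 8b/10b coding) and 48 Gbps x 16/18 for HDMI 2.1 (FRL 16b/18b coding), models 4:2:2 and 4:2:0 simply as 2x and 1.5x the bit depth per pixel (real HDMI packs 4:2:2 into a fixed 24-bit container), and ignores audio, packet overhead and DSC. The names `Mode`, `required_gbps` and `fits` are hypothetical helpers for illustration only.

```python
from dataclasses import dataclass

# Usable video data rate after line coding (approximate; ignores audio/packet overhead).
HDMI_2_0_GBPS = 18.0 * 8 / 10   # TMDS with 8b/10b coding -> 14.4 Gbps
HDMI_2_1_GBPS = 48.0 * 16 / 18  # FRL with 16b/18b coding -> ~42.67 Gbps

# Simplified average bits per pixel, as a multiple of per-component bit depth.
CHROMA_FACTOR = {"4:4:4": 3.0, "4:2:2": 2.0, "4:2:0": 1.5}


@dataclass
class Mode:
    name: str
    h_total: int     # active width + horizontal blanking (assumed CTA-861 timing)
    v_total: int     # active height + vertical blanking
    refresh_hz: int

    def pixel_clock_hz(self) -> float:
        # The link carries the blanking intervals too, so use totals, not active size.
        return self.h_total * self.v_total * self.refresh_hz


def required_gbps(mode: Mode, bit_depth: int, chroma: str) -> float:
    """Uncompressed video data rate in Gbps for a mode, bit depth and chroma format."""
    bits_per_pixel = bit_depth * CHROMA_FACTOR[chroma]
    return mode.pixel_clock_hz() * bits_per_pixel / 1e9


def fits(mode: Mode, bit_depth: int, chroma: str, link_gbps: float) -> bool:
    """True if the uncompressed signal fits in the given usable link rate."""
    return required_gbps(mode, bit_depth, chroma) <= link_gbps


UHD_60 = Mode("4K/60", 4400, 2250, 60)     # 594 MHz pixel clock
UHD_120 = Mode("4K/120", 4400, 2250, 120)  # 1188 MHz pixel clock
FUHD_60 = Mode("8K/60", 9000, 4400, 60)    # 2376 MHz pixel clock

if __name__ == "__main__":
    cases = [
        (UHD_60, 8, "4:4:4"), (UHD_60, 10, "4:4:4"), (UHD_60, 10, "4:2:2"),
        (UHD_120, 10, "4:4:4"), (FUHD_60, 8, "4:4:4"), (FUHD_60, 10, "4:2:0"),
    ]
    for mode, depth, chroma in cases:
        need = required_gbps(mode, depth, chroma)
        on_20 = "yes" if fits(mode, depth, chroma, HDMI_2_0_GBPS) else "no"
        on_21 = "yes" if fits(mode, depth, chroma, HDMI_2_1_GBPS) else "no"
        print(f"{mode.name} {depth}-bit {chroma}: {need:6.2f} Gbps  "
              f"HDMI 2.0: {on_20:3}  HDMI 2.1: {on_21}")
```

Under these assumptions, 4K/60 8-bit 4:4:4 needs about 14.26 Gbps and just squeezes into HDMI 2.0, while 10-bit 4:4:4 needs about 17.82 Gbps and doesn't; dropping to 4:2:2 brings it back under the limit, which is exactly the subsampling trade-off described above. On HDMI 2.1, 4K/120 10-bit 4:4:4 (about 35.64 Gbps) fits, but 8K/60 8-bit 4:4:4 (about 57 Gbps) does not without DSC.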

The first two points can potentially be added through software/firmware updates and, for the most part, don't require a new cable. The last point, however, needs every link in the chain (source, any AV receiver in between, the cable, and the display) to support HDMI 2.1, because the higher bandwidth requires new hardware at each step. For the cable, that means a certified Ultra High Speed HDMI cable.