Lol ?

When people went from bilinear/trilinear filtering to 16x anisotropic filtering, what do you think happened?

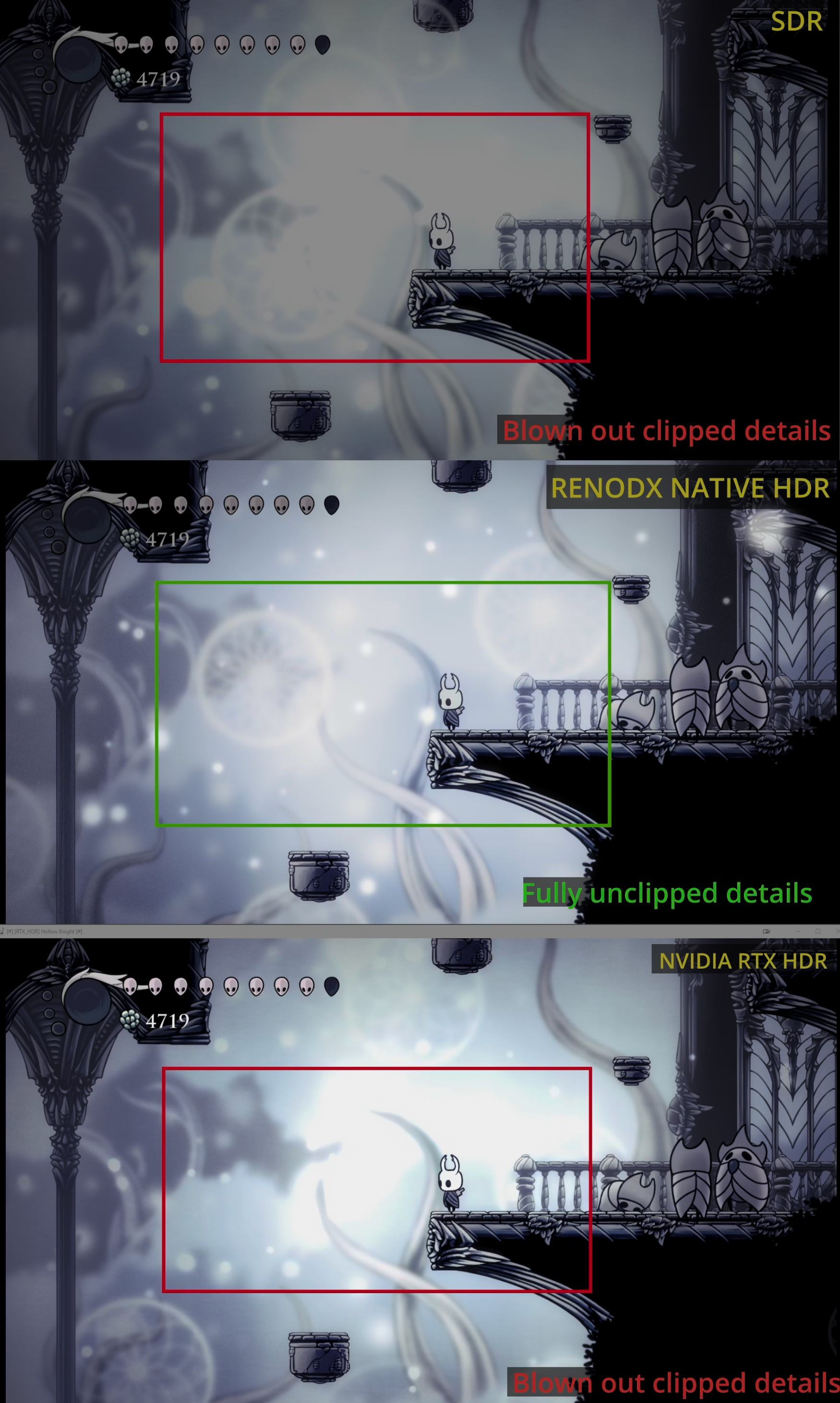

I've owned GPUs since 1996. I've followed every tech change. You never get a feature with better image quality than the previous solution without a performance hit, unless it's a revolutionary method, and most of the time it's just cutting corners for performance while your eyes get fooled. Just like half/quarter-resolution effects in Unreal Engine being hidden by temporal averaging.

Holy shit lol

- MSAA ring any bells? SSAA?

- Programmable vertex/pixel shaders in DirectX 8 & 9, for more sophisticated materials and lighting models, but resulting in more demanding games with performance bottlenecks? Moving away from the fixed-function pipeline came at a cost.

- Tessellation? Helllooo?? Anybody home? The whole fucking TressFX and HairWorks thing kneecapping performance for better visual quality? Call of Pripyat having huge performance issues? The Crysis 2 controversy?

- Volumetric effects that can kneecap the best hardware with 1% visual difference from medium to ultra high?

- Ray tracing? Surely that's recent enough to remember?

- DLAA itself: you run at native resolution to get the best image quality... at the cost of performance. Mind-blowing stuff.

Ah yes, let's doubt Nvidia, who is basically the frontrunner in implementing this tech and has changed the upscaling game since Turing.

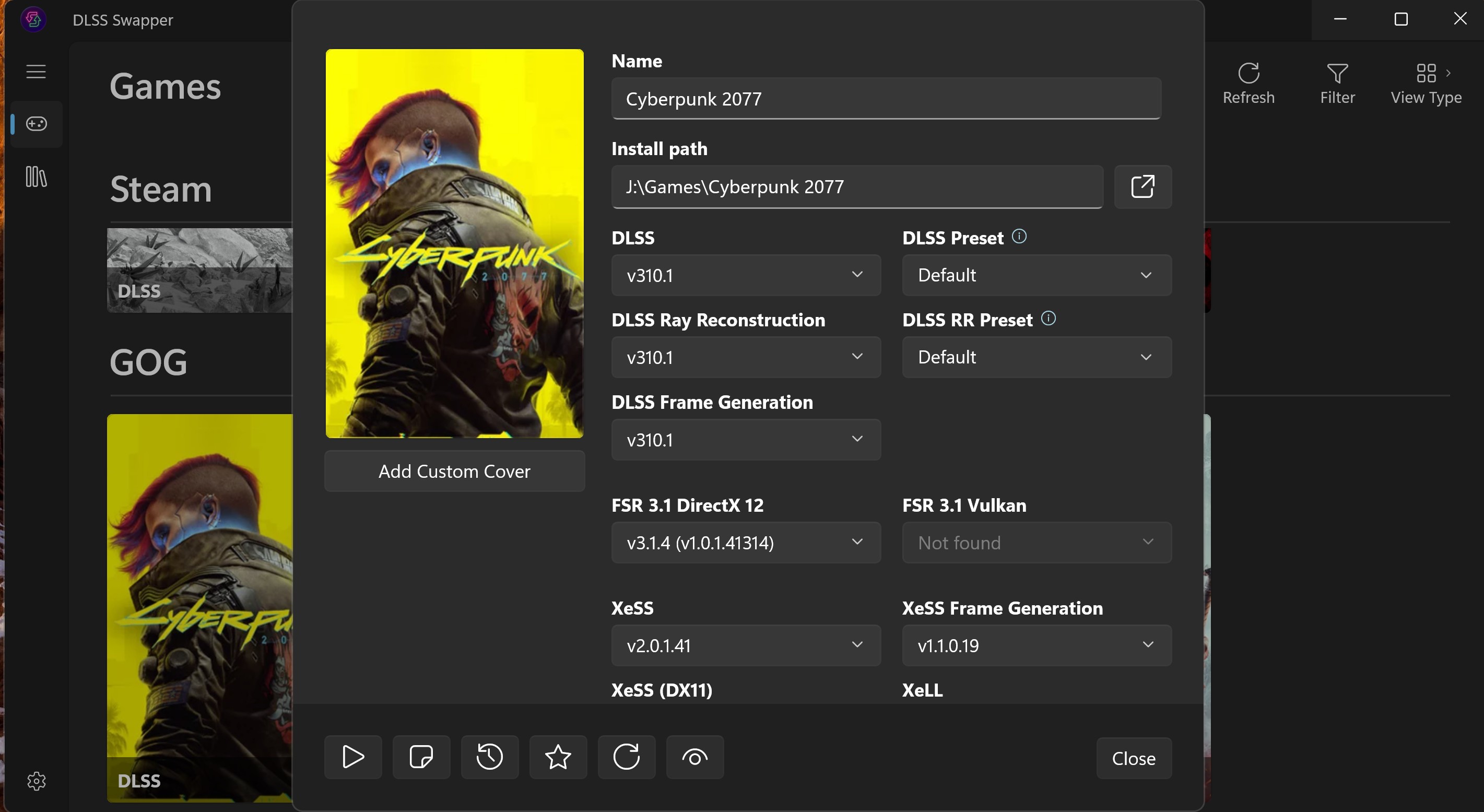

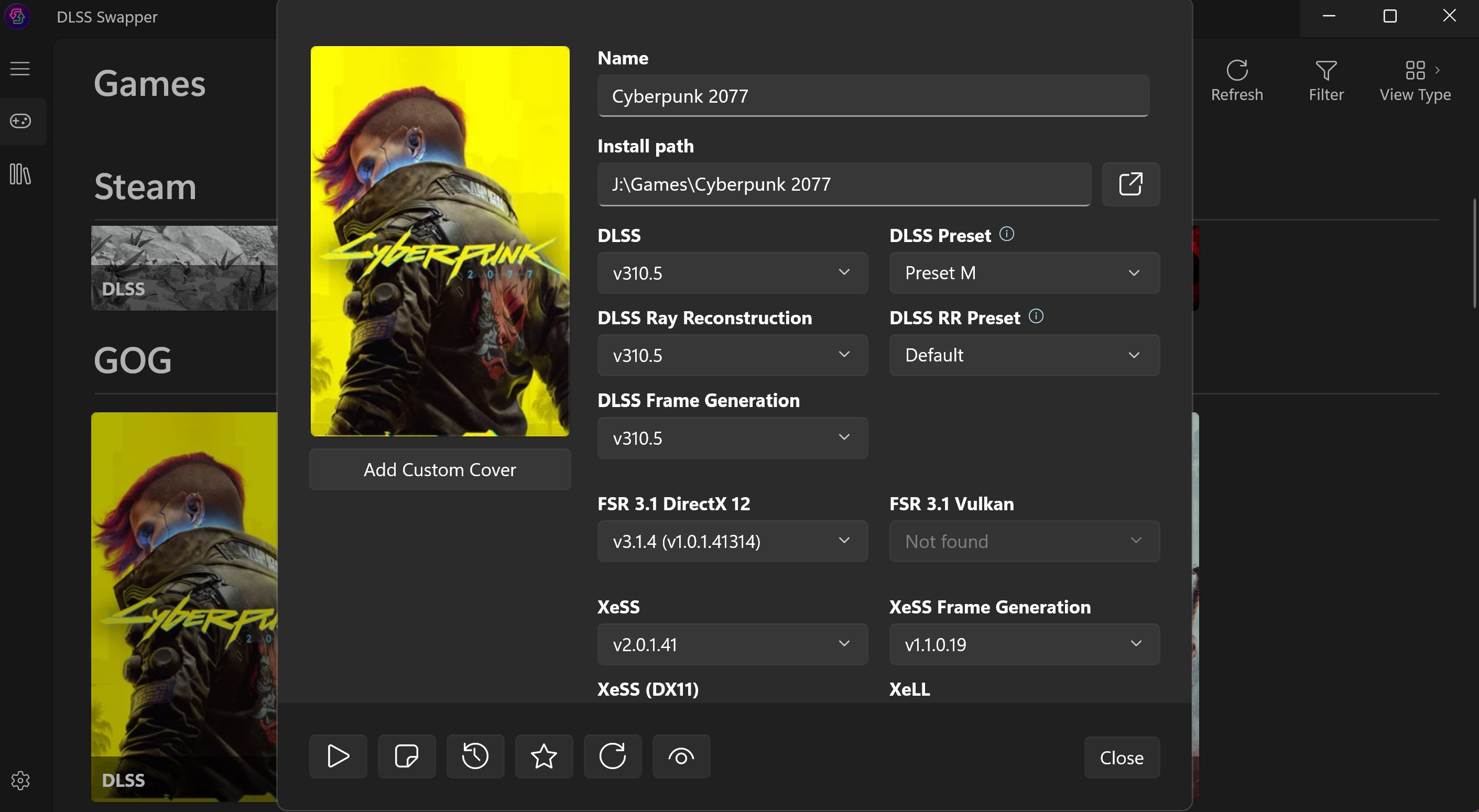

Nvidia is kneecapping DLSS 4, it should have better image quality with more parameters but somehow also be more performant.

Hell, let's go to r/AMD and r/Intel and simply tell them to beat the fuck out of Nvidia with their upscalers, seems easy enough.

Because both series use FP8?

Do you understand how a pipeline works? Clearly not. There's an inherent bottleneck: the frame has to be rendered before the AI can work on it, so no matter how many TOPS you have, DLSS is only a fraction of the frametime. A GPU is never using all its TOPS for DLSS. The 5090 inherently generates frames faster than other cards, and of course its ML blocks can tackle more workload, but the two go hand in hand, and it never uses the full TOPS available.

Even for a 4070 Ti it's a ~40% latency increase to run the M model vs the K model, and that's narrowing it down to just the DLSS pass, not your whole frametime.

On a 5090 it's a 20% penalty, but even the M model on a 5090 is 1.88x faster than the K model on a 4070 Ti.
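To put those three figures side by side, here's a quick sketch that normalizes everything to the 4070 Ti's K model. It only uses the ~40%, 20%, and 1.88x numbers quoted above; the variable names and the `speedup` helper are mine, and no absolute millisecond values are assumed.

```python
# Relative cost of the DLSS pass only, normalized so that
# the K model on a 4070 Ti costs 1.0 (arbitrary unit, not ms).
k_4070ti = 1.0
m_4070ti = k_4070ti * 1.40   # ~40% penalty for the M model on the 4070 Ti
m_5090 = k_4070ti / 1.88     # M on the 5090 is 1.88x faster than K on the 4070 Ti
k_5090 = m_5090 / 1.20       # 20% penalty for the M model on the 5090


def speedup(slow_cost: float, fast_cost: float) -> float:
    """How many times faster the cheaper pass is (hypothetical helper)."""
    return slow_cost / fast_cost


print(f"K vs K, 5090 over 4070 Ti: {speedup(k_4070ti, k_5090):.2f}x")
print(f"M vs M, 5090 over 4070 Ti: {speedup(m_4070ti, m_5090):.2f}x")
```

If the quoted figures hold, that works out to roughly 2.26x like for like on the K model and roughly 2.63x on the M model.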

Nothing you've said so far makes any sense. Of course a ms of difference inside the overall frametime is gonna shrink down to a low % difference in performance, but the data's there, it's much faster on a 5090. That leaves room to breathe for a lot more AI effects, be it neural radiance cache for path tracing, frame gen, or upcoming neural texture compression, neural shaders, etc.
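Rough sketch of why a big % hit on the DLSS pass turns into a small % hit on FPS. The 10 ms frame and 1 ms DLSS cost are made-up illustration numbers, not measurements, and the function name is hypothetical. It also assumes the extra DLSS time simply adds to the frametime, which is a simplification.

```python
def fps_drop_percent(frame_ms: float, dlss_ms: float, penalty: float) -> float:
    """FPS lost (in %) when the DLSS pass gets `penalty` more expensive.

    frame_ms : total frametime including the current DLSS pass (assumed value)
    dlss_ms  : the part of that frametime spent in DLSS (assumed value)
    penalty  : relative cost increase of the DLSS pass, e.g. 0.40 for +40%
    """
    new_frame_ms = frame_ms + dlss_ms * penalty  # only the DLSS slice grows
    return (1.0 - frame_ms / new_frame_ms) * 100.0


# Hypothetical case: 10 ms frame (100 FPS), 1 ms of it is DLSS, +40% DLSS cost.
print(f"{fps_drop_percent(10.0, 1.0, 0.40):.2f}% FPS lost")
```

With those made-up inputs, a 40% more expensive DLSS pass costs under 4% of the frame rate, because the pass is only a tenth of the frame to begin with.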

The same fucking discussion happened during the DLSS 4.0 release, really. I think you even mentioned it.

Now go ask Turing card owners if they're happy to have received DLSS 4 for free years later, letting them run games at Performance/Ultra Performance with never-before-seen image quality at those resolutions and keep playing modern games for longer instead of upgrading. They're fucking happy campers. All the "AMD finewine" bullshit back then, suggesting you buy a 5700 XT over Turing, ended up aging like milk.