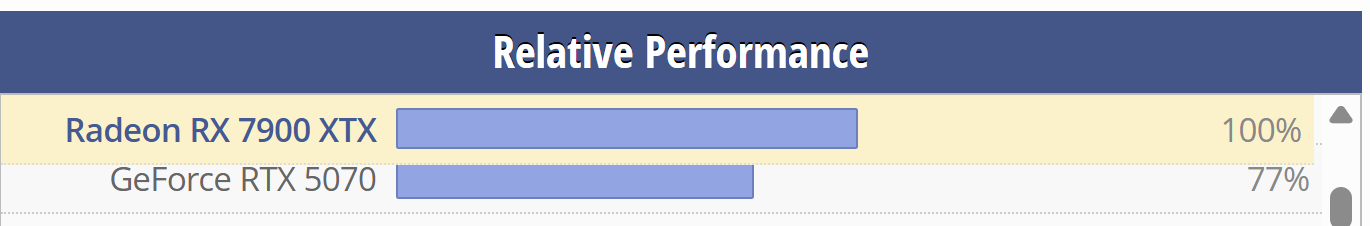

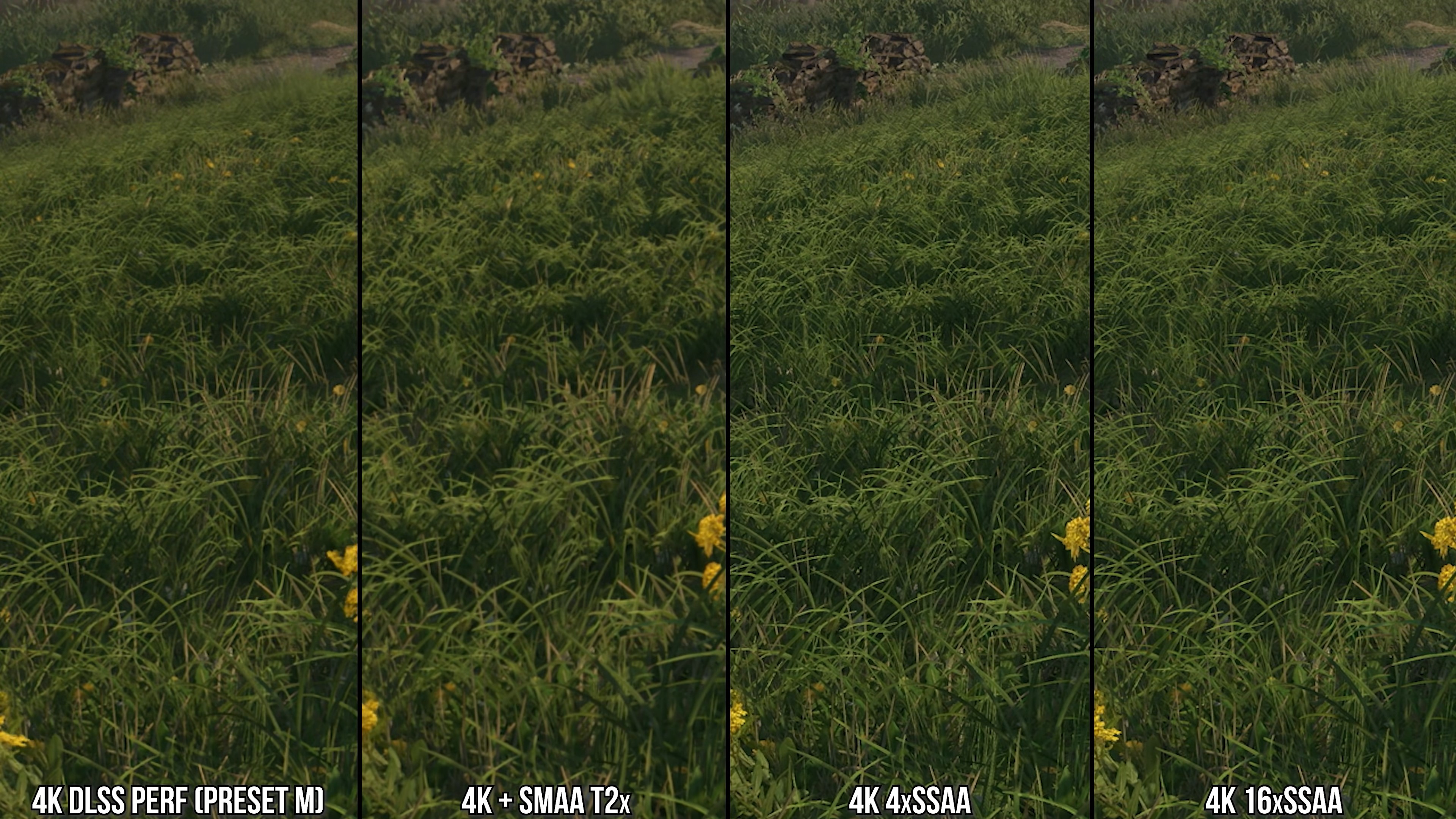

Based on the YouTube videos I've seen, the mighty 5090 is the only card that can run DLSS 4.5 Quality with minimal performance cost. There's a noticeable performance impact on the 5080, 5070 Ti, and 40-series GPUs like my 4080, although DLSS Q is still somewhat usable there (it still gives a noticeable performance boost over native TAA). On the 20/30 series, though, the performance impact is greater, and DLSS Q is often more demanding than native TAA, which makes it effectively unusable.

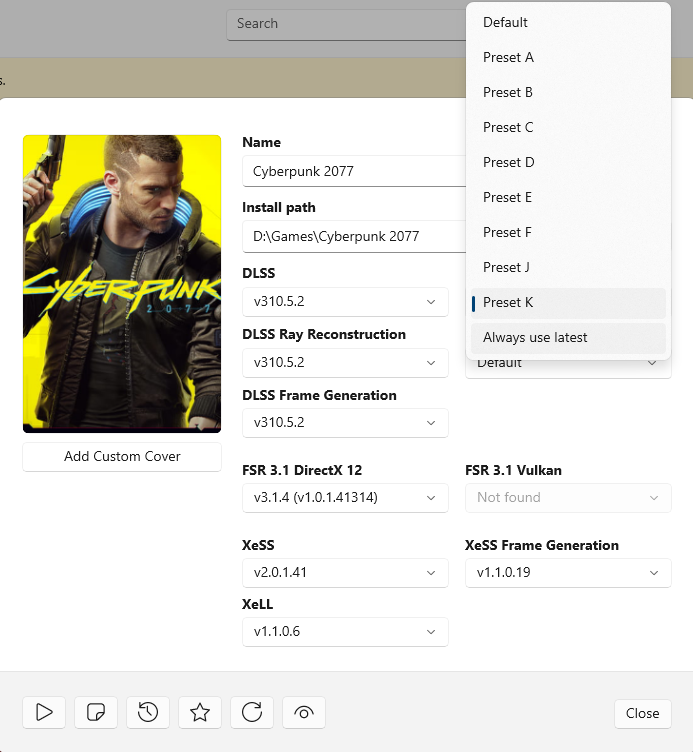

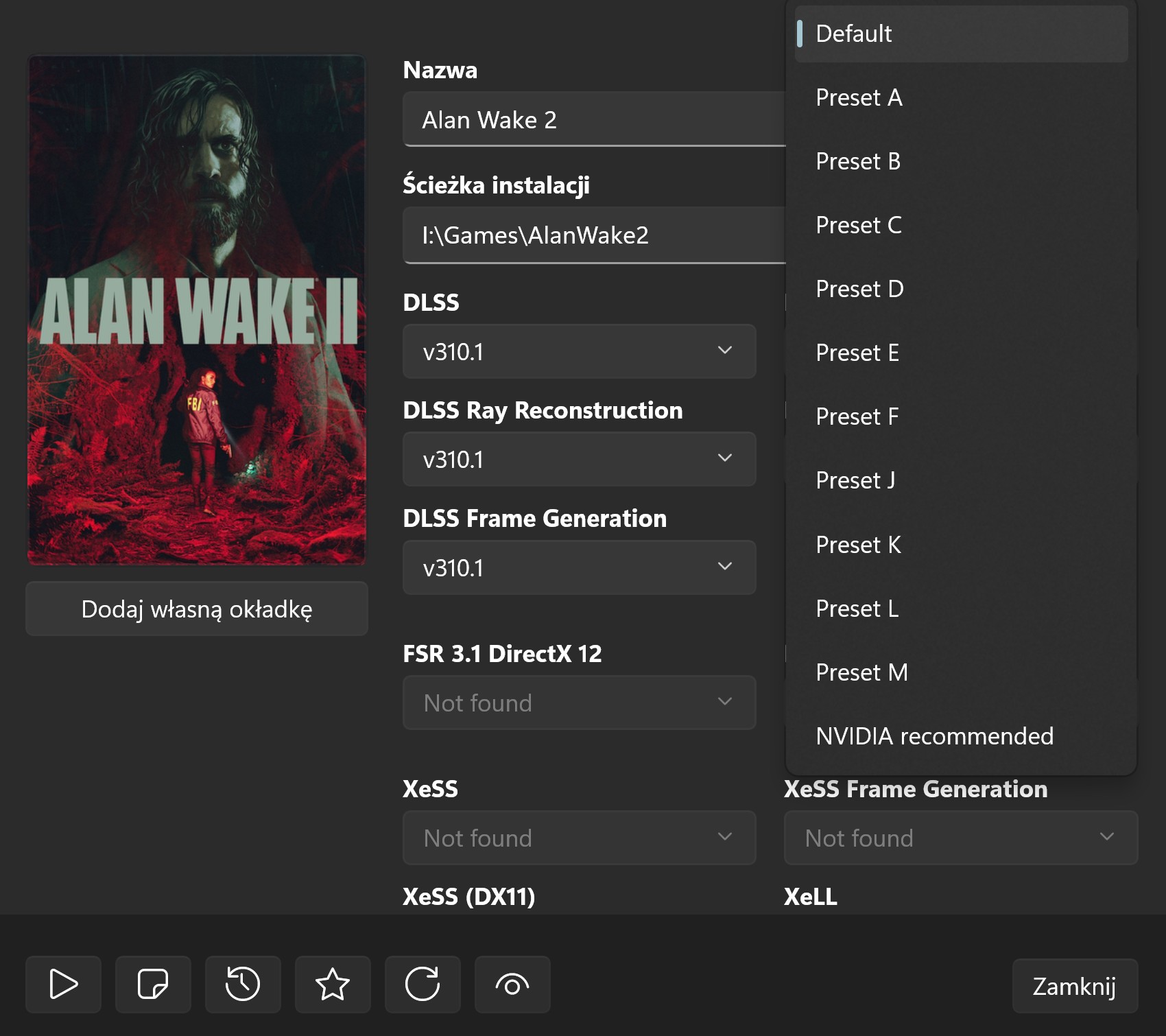

I don't plan on using DLSS 4.5 Quality mode in many games. The performance impact is too high at 4K, and I don't feel like I'm missing out on much with the older 4.0. However, 4.5 in Performance mode is usable and only runs 1-3% slower than 4.0 on my card's Tensor Cores. That's acceptable, so I plan to use this mode for the most demanding games.