TintoConCasera

I bought a sex doll, but I keep it inflated 100% of the time and use it like a regular wife

"the AI can distinguish between the person and the environmental impact on that person, such as lighting, rain, wind, and occlusion"

But we've already seen that it can't, no? People earlier ITT were complaining about how in the Oblivion remaster footage the shadows are all wrong because the source of the shadow (some building) is out of the camera view, so it's not taken into account.

I don't know man, maybe in the future they'll be able to improve on this, but imho right now this is just smoke and mirrors to appease the investors.

MazingerDUDE

Member

the occlusion artifact looks horrible

"I'm starting to think a huge number of people's brains have been literally broken by some sort of psyop to have a Pavlovian reaction to anything AI that literally stops their brains working and forces the tiresome 'ahhregh ai slop, slop slop' to just slop out their mouths."

"It's all very strange."

Sadly we are back in an era of pitchfork-toting witch hunters. It's like everything is orchestrated & minions are just out & about running loose, repeating whatever they're told to repeat, & some of them are too dumb to actually understand what's going on, but they make sure they rally the troops on every website by repeating whatever they have been programmed to say.

PeteBull

Member

"The real question. Can you run everything at low settings and then get this coat of paint? Would it be greatly diminished?"

If u could use the "lifehack" of low settings + DLSS 5 to beauty it all up, I'm sure Nvidia would scream about it from the rooftops. The more realistic scenario is that u need as high settings/internal res as possible, and only then does it give u somewhat acceptable but still AI-like results, and it requires at least 32 gigs of VRAM (aka 5090 exclusive + maybe 6070 Ti/80/90 too in 1.5 years or so).

It's the infant stage of future tech, kinda like DLSS/RT was back in mid 2018.

falconhoof

Member

I've pondered the idea of where something like DLSS might go in the future and I see this as both a step towards and away from it.

What I hoped for was for games to be developed using the highest assets possible made by the development team. This is somewhat already a reality as there are often very high detail assets generated for cutscenes or even renders.

The notion, though, is that these assets would be used to train a model which would then be used to upscale the end results. Essentially like a very advanced version of LODding with extra stuff like lighting on top.

So this DLSS5 does that but I presume the results are all based on generalized data instead of per game, hence why these characters have their faces shifted into the usual AI face gen stuff.

The hope I have is that the end result would be the absolute artistic vision of the team presented to the player. The stuff you see in cinematics, but for all visuals.

Guardian Monkey

Member

"If u could use the "lifehack" of lowsettings+ dlss5 to beauty it all up im sure nvidia would scream about it from the rooftops, more realistic scenario is- u need as high settings/internal res as possible and only then it gives u somewhat acceptable but still ai-alike results and requires at least 32gigs of vram(aka 5090 exclusive + maybe 6070ti/80/90 too in 1,5year or so)."

"Its infant stage of futuretech, kinda like dlss/rt was back in mid 2018."

That's where I'm thinking as well, we are back to the rtx 200X series @ 500x series with this.

ZehDon

Member

"... But we've already seen that it can't, no? People earlier ITT were complaining about how in the Oblivion remaster footage the shadows are all wrong because the source of the shadow (some building) is out of the camera view, so it's not taken into acount..."

Oblivion uses UE5, which employs Lumen as a software-based real-time ray tracing solution for its lighting. Lumen uses a LOT of shortcuts to achieve its visuals, resulting in a huge amount of noise visible in most games that employ it (often called light boiling). Lumen doesn't play nice with DLSS, period. I'd be surprised if UE5 out of the box had the necessary structure to feed the hooks the AI would need, resulting in a lot of screen-edge and occlusion issues. Put it this way: were the issues noted present in every frame of every game shown? No. So, we can deduce that it's not an issue with the tech fundamentally; rather, it's the nature of retrofitting new tech onto old games not built for it.

March Climber

Member

"And as predicted people are upset"

There's a very big difference between a completely original, customizable game made with AI tools, versus an AI-filter-esque alteration of an existing one.

fran.fernandez

Member

That's impressive!

The Assassin's Creed forest with the real lighting looks awesome. And it looks like the guy is rotating the camera to show consistency with the previous rendering.

mdkirby

Gold Member

"Sadly we are back in a era of pitchfork toting witch hunters , it's like everything is orchestrated & minions are just out & about running loose repeating whatever they're told to repeat & some of them be too dumb to actually understand what's going on but they just make sure they rally the troops on every website by repeating whatever they have been programmed to say"

Oh for sure, many of these people, methods and language are 100% identical to that of the virtue signalling narcissistic prigs that plagued the entertainment industry and social media over the last decade.

Their power waned within their prior systems and virtues following Trump's second election, and they've found fertile ground with AI. It's all the same, and just like many of them, or many of the bot farms that promoted them, they are and will be financed by the same shadow money from both domestic and foreign sources (the foreign ones being primarily Russia and China). People forget the enemies of the west use this as a tool of war, to cause division within our societies and weaken us, and as you may have noticed we're kinda in WW3 even if some don't like to use those words.

Grildon Tundy

Member

People hating on DLSS 5 are like people crapping on lab-grown diamonds.

ReyBrujo

Member

the artifact looks horrible

You don't mind the bag appearing out of thin air?

DavidGzz

Member

"I hope Sony and fsr4 will have this feature too, to fix and clean all the ugly characters made by sony california hq."

Sigh...

I don't think this beautifies characters at all. It just makes them look more realistic. Starfield is a special case because their models have always been goofy looking. Besides Sony will be able to see what the models look like with this tech and make the necessary downgrades lol

TintoConCasera

I bought a sex doll, but I keep it inflated 100% of the time and use it like a regular wife

"Lumen doesn't play nice with DLSS period."

Well then fucking bravo for them showcasing their new technology with a game that uses Lumen.

But Lumen's not the point. Lumen + DLSS 4 causes small artifacts sure, but in the showcase there weren't just small artifacts but the shadows in the world being completely wrong. I doubt that has anything at all to do with Lumen.

"Put it this way: were the issues noted present in every frame of every game shown? No."

Haven't watched it all, mind you, but isn't most if not all of what they've shown either still shots or the camera moving really slow? There's probably a reason for that.

"Oh for sure, many of these people, methods and language are 100% identical to that of the virtue signalling narcissistic prigs that plagued the entertainment industry and social media over the last decade."

"Their power waned within their prior systems and virtues following trumps second election, and they've found fertile ground with ai. It's all the same, and just as many of them or many of the bot farms that promoted them, they are and will be financed by the same shadow money from both domestic and foreign sources (the foreign ones being primarily Russia and China). People forget the enemies of the west use this as a tool of war, to cause division within our societies, and weaken us, and as you may have noticed we're kinda in ww3 even if some don't like to use those words."

All of this.

Come On Tars

Member

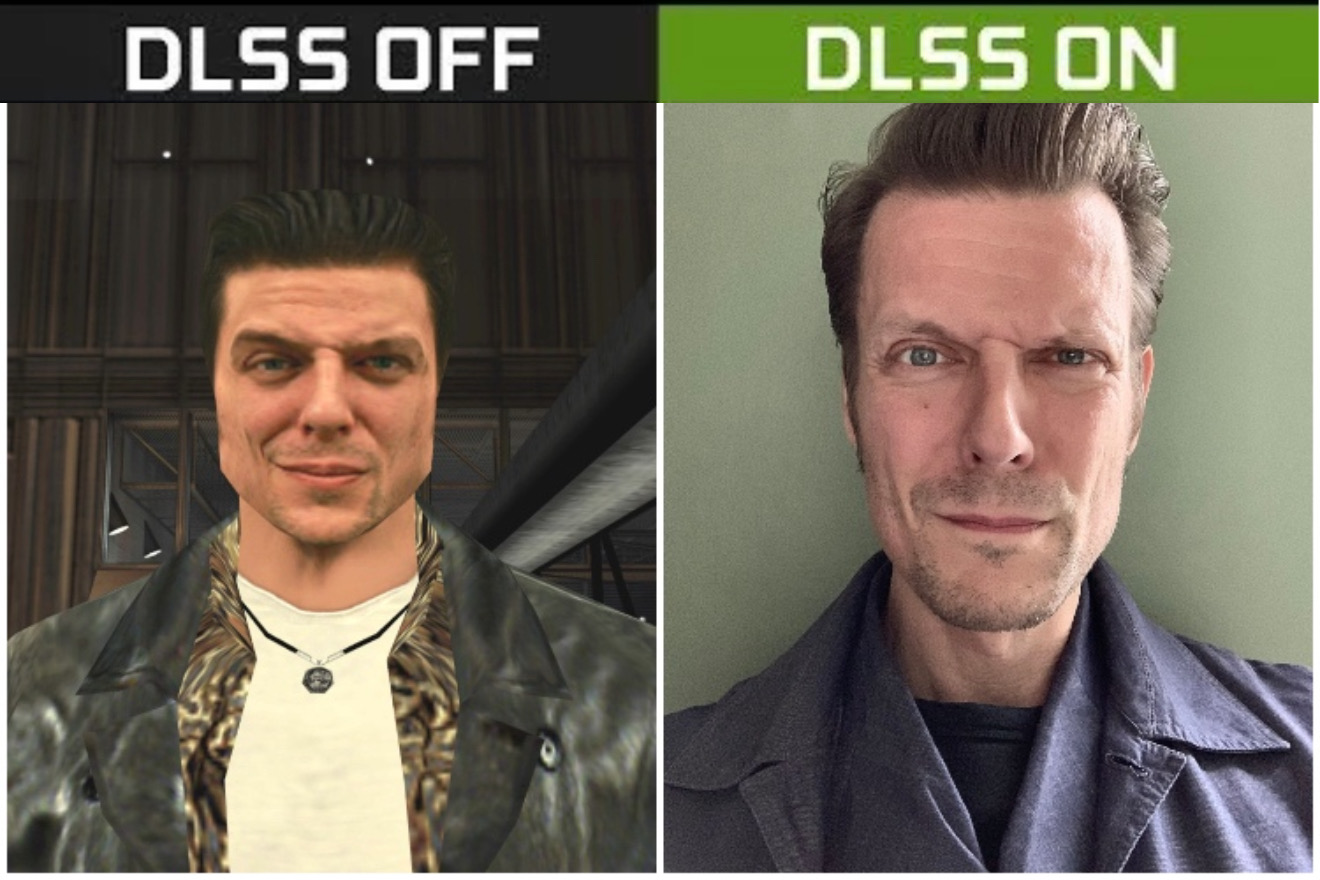

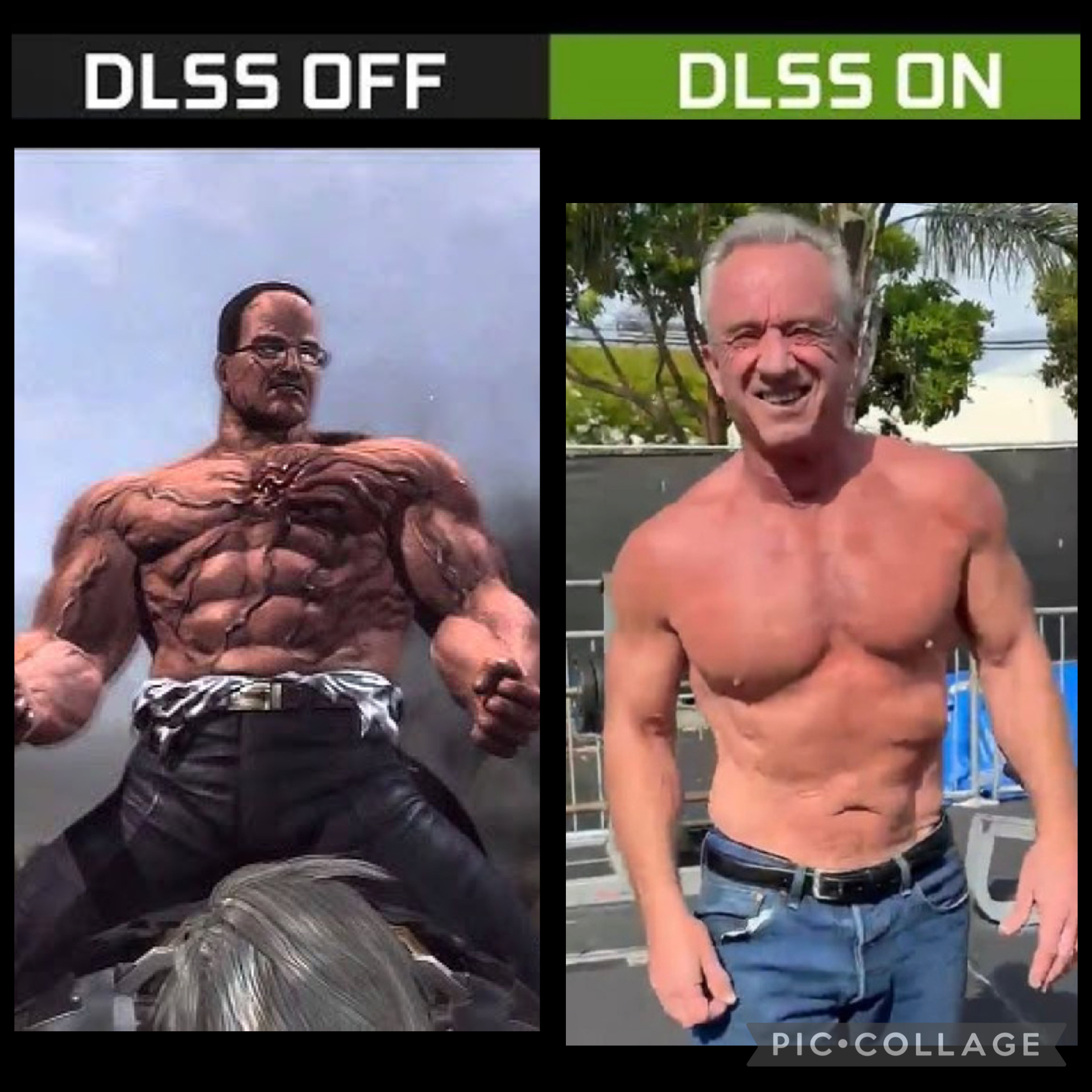

yall remember this?

I think this is rad. I don't want it for every game, but man, a replay of Ghost Recon Wildlands where it looks like a real movie would be insane.

The full face as a texture on blocky heads was a wild time in videogame history

ZehDon

Member

"... But Lumen's not the point. Lumen + DLSS 4 causes small artifacts sure, but in the showcase there weren't just small artifacts but the shadows in the world being completely wrong. I doubt that has anything at all to do with Lumen..."

Those heavy temporal instability issues were present in the only game using UE5. So, I suspect Lumen and UE5 in general to be a big part of that problem. As I said, Lumen doesn't play nice with DLSS at all.

"... Haven't watched it all..."

I'd probably watch the video before discussing it.

MagusMajul

Member

i think it looks incredible i love it

Mr.Phoenix

Member

"I am completely dumbfounded, how did anyone think this was a good idea? holy shit..."

I can only conclude that a lot of people do not understand this tech. And as usual, just like what happened with the OG DLSS, they are shitting on it and all trying to come up with the best hot takes.

Things to consider: developers can control how strong or soft the effect will be. You will not need ridiculously beefy hardware shooting an ungodly amount of rays into a scene for RT. It will actually let them use significantly fewer rays but end up with an overall better lighting effect.

I honestly would have thought everyone would have learned their lesson after DLSS 1.0 launch when it comes to AI anything.
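On the "fewer rays" point above, some general background may help (this is standard Monte Carlo behavior, not anything NVIDIA has detailed for DLSS 5): when a pixel's lighting is estimated by averaging random rays, the noise only falls off roughly with the square root of the ray count, so brute-forcing a clean image gets expensive fast. The toy sketch below shows that trend. All function names and the 50% visibility value are made up for illustration.

```python
import random
import statistics


def estimate_visibility(num_rays: int, true_visibility: float, rng: random.Random) -> float:
    """Estimate how lit a pixel is by averaging num_rays random hit/miss samples."""
    hits = sum(1 for _ in range(num_rays) if rng.random() < true_visibility)
    return hits / num_rays


def noise_for_ray_count(num_rays: int, trials: int = 2000, seed: int = 0) -> float:
    """Spread (standard deviation) of the estimate over many independent pixels."""
    rng = random.Random(seed)  # fixed seed so the experiment is repeatable
    estimates = [estimate_visibility(num_rays, 0.5, rng) for _ in range(trials)]
    return statistics.pstdev(estimates)


if __name__ == "__main__":
    # Each step uses 4x the rays of the previous one; the noise only roughly halves.
    for rays in (1, 4, 16, 64):
        print(f"{rays:3d} rays per pixel -> noise ~ {noise_for_ray_count(rays):.3f}")
```

That diminishing return is why denoisers and learned reconstruction are attractive in general: cleaning up a low-ray image can be cheaper than tracing 4x the rays for every halving of noise.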

Wolzard

Member

Doubt anyone will read that, but looks like Nvidia are just showing you how things could look. Devs have complete control.

Most people nowadays are incapable of reading anything longer than two lines. Even with clear explanations, they will continue to complain about things that are completely nonexistent.

//DEVIL//

Member

I gotta be honest, I like what they showed? I don't care if it's AI or not AI. I don't have hate for AI or frame generation. If it gets me a better image, I will fucking take it. Anyone who wants to cry about it, then go ahead and cry as much as you want.

It's optional for the devs, not mandatory in the suite.

The only people complaining really are the shitty artists, AMD fanboys, Nvidia haters, and the sad losers that are afraid to lose their job because of the AI.

TheDarkPhantom

Member

This has birthed legendary memes

TintoConCasera

I bought a sex doll, but I keep it inflated 100% of the time and use it like a regular wife

"Those heavy temporal instability issues were present in the only game using UE5. So, I suspect Lumen and UE5 in general to be a big part of that problem. As I said, Lumen doesn't play nice with DLSS at all."

So your take is that the whole shadow cast on some building being wrong was because of Lumen? Alright.

"I'd probably watch the video before discussing it."

Am I mistaken and they have shown some heavy action gameplay? Could you point me towards it?

So far I've seen RE, asscreed, Oblivion and Starfield and they were all like I described, still shots or the camera panning very slow.

LiquidMetal14

hide your water-based mammals

"That's a fundamentally different approach to how the final image gets assembled. And it's deterministic and consistent from frame to frame, which is a hard requirement for games."

J Required

Gold Member

nope it won't.

it already uses a shit load of memory, if you wanted to make it consistent you'd literally have to keep previous generation in memory and even save it into storage.

this will literally look different every time you start the game, or just open the menu and close it again.

Topher

Identifies as young

That's a good read. Copying the content here:

NVIDIA just dropped DLSS 5 at GTC 2026, and the internet already has opinions.

I was in the room and I went hands-on. Not watching a sizzle reel, not scrubbing through a carefully curated 30-second trailer, but sitting in front of multiple games with DLSS 5 toggling on and off in real time. Hogwarts Legacy. Starfield. Assassin's Creed Shadows. Oblivion Remastered. The Zorah tech demo. The visual improvements are significant. Not incremental. Significant.

But if you've been scrolling social media, you'd think NVIDIA just shipped an Instagram beauty filter for video games. And I get why that's the first reaction. But it misses the true picture by a wide margin.

Why Faces Get All the Attention

We've had photorealistic environments in games for a while now. Water reflections, volumetric lighting, incredibly detailed cityscapes and forests. The hardware and the rendering techniques have gotten us to a place where environments can look stunning under the right conditions.

But faces have been the holdout. Getting a human face to look truly photorealistic in real time has been one of the most expensive problems in computer graphics from a compute standpoint. Subsurface scattering on skin, the way light interacts with individual strands of hair, the micro-expressions that make a character feel alive rather than like a wax figure. All of that requires an enormous amount of rendering horsepower.

I've probably seen ten different "floating head" tech demos over the course of my career. That's not an exaggeration. They're always a single head with no hair, no body, no environment, because rendering a photorealistic face at that level of quality is so expensive that it can only be done in isolation. You never see it inside an actual game, because the performance budget won't allow it.

Note: these are photos taken of a screen, so expect some glare/lighting impact.

DLSS 5 closes that gap in a pretty dramatic way. And because that's the area where the delta between "before" and "after" is most visible, that's what everyone is reacting to. The NVIDIA team put it well during my demo. It's a psychological effect. You've seen environments rendered really well before. When you suddenly see a character rendered at that same photorealistic level, your brain flags it immediately. It stands out.

Fair enough. But focusing only on the faces is wrong.

It's Happening Everywhere, Not Just on Character Models

What I saw in the demos was a comprehensive improvement across the entire scene. And the moment that really drove this home wasn't a face. It was a coffee maker.

In Starfield, there's a countertop scene with a coffee machine, some paper towels, a cup, napkin holders. Standard environmental clutter. With DLSS 5 off, everything looks flat. The coffee maker fades into the background. Toggle it on, and suddenly the objects have shape. The lighting wraps around them naturally. The spatial relationships between the items and the surfaces they're sitting on become clear. It goes from "assets placed in a scene" to "objects that actually belong in a room."

The same thing played out across every title. In Oblivion Remastered, the water went from good video game water to something that could pass for real, with the kind of light interaction and shimmer you'd expect from an offline render. In Assassin's Creed Shadows, the trees and distant foliage gained dramatically better depth and separation in how light moved from the canopy down through the branches. In the Zorah tech demo, which is a 300 GB courtyard scene built by 20 full-time artists, the subsurface scattering on foliage was just as impressive as anything happening on character faces. Leaves picked up that translucent glow from backlighting that is incredibly difficult and expensive to model and render through traditional means.

The AI model powering DLSS 5 is a single unified model. Same model for every game. It's not trained per-title, per-face, or per-object type. It takes the raw color buffer and motion vectors as input, analyzes the scene semantics from that single frame, and enhances the lighting and material response while staying anchored to the original 3D content. It recognizes the difference between skin and metal and water and stone and foliage, and it processes each of those materials differently based on how light should interact with them.

That's not a filter. That's a fundamentally different approach to how the final image gets assembled. And it's deterministic and consistent from frame to frame, which is a hard requirement for games.

The Developer Angle Matters More Than People Realize

One of the things I came away most encouraged by is the developer control story. This is critical. If DLSS 5 were a black box that slapped a one-size-fits-all enhancement over every game, the artistic intent concerns would be completely valid. But that's not what this is.

During the demo, the DLSS research team talked through the level of granularity available. Developers don't just get an on/off switch. They get intensity controls that can be dialed anywhere, not just full strength. They get spatial masking, so they can set the water enhancement to 100%, wood to 30%, characters to 120%, all independently within the same scene. They get color grading controls for blending, contrast, saturation, and gamma. All of this runs through the existing SDK, which means studios already using DLSS and Reflex have a familiar pipeline to work with.
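To make the spatial masking idea concrete, here is a minimal sketch of how per-material strengths could be applied as a blend between the original and the enhanced frame. This is only an illustration under stated assumptions: the real SDK interface was not shown, and every name here (`MATERIAL_STRENGTH`, `blend_pixel`, `apply_masked_enhancement`) is hypothetical. Pixels are single brightness values in the 0 to 1 range to keep it short.

```python
from typing import Dict, List

# Hypothetical per-material strengths, mirroring the example numbers above.
MATERIAL_STRENGTH: Dict[str, float] = {"water": 1.0, "wood": 0.3, "character": 1.2}


def blend_pixel(original: float, enhanced: float, strength: float) -> float:
    """Move from the original value toward the enhanced one by `strength`.

    0.0 keeps the original, 1.0 takes the enhanced value, and anything above
    1.0 pushes past it. The result is clamped to the displayable 0..1 range.
    """
    value = original + strength * (enhanced - original)
    return min(1.0, max(0.0, value))


def apply_masked_enhancement(
    original: List[float],
    enhanced: List[float],
    material_mask: List[str],
    strengths: Dict[str, float],
    default_strength: float = 0.0,
) -> List[float]:
    """Blend each pixel using the strength of the material it belongs to.

    Materials missing from `strengths` fall back to `default_strength`,
    which here means they are left untouched.
    """
    return [
        blend_pixel(o, e, strengths.get(material, default_strength))
        for o, e, material in zip(original, enhanced, material_mask)
    ]


if __name__ == "__main__":
    original = [0.40, 0.40, 0.40, 0.40]
    enhanced = [0.60, 0.60, 0.60, 0.60]
    mask = ["water", "wood", "character", "stone"]
    print(apply_masked_enhancement(original, enhanced, mask, MATERIAL_STRENGTH))
```

In this toy version, "120%" simply means extrapolating past the enhanced value, which is one plausible reading of a strength above full. How the actual controls interpret it is not something the demo spelled out.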

The developer support list tells you something. Bethesda, CAPCOM, Ubisoft, Tencent, Warner Bros. Games, and others have already signed on. But what struck me more than the names was what the NVIDIA team shared about the reactions inside those studios. When developers previewed the technology, their technical artists were apparently co-advocating for it internally, because it gets them closer to what they actually intended their characters and environments to look like when they were designing them in their authoring tools. Then those assets get dropped into a real-time game engine with a finite performance budget, and compromises happen. DLSS 5 lets them claw back some of what gets lost in that translation.

I think that's the right framing. DLSS 5 isn't NVIDIA applying its stylistic choices on top of someone else's game. It's providing a tool that helps developers close the gap between what they can render in 16 milliseconds and what they actually want the player to see. That's a meaningful distinction, and it's a big reason why the developer response has been positive.
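For anyone wondering where the 16 milliseconds comes from: it is roughly the time available per frame at 60 frames per second (1000 ms / 60). The short sketch below works through that arithmetic and checks whether a render pass plus an enhancement pass would fit. The function names and the millisecond costs in the example are invented for illustration and are not measurements of DLSS 5.

```python
def frame_budget_ms(target_fps: float) -> float:
    """Time available to produce one frame, in milliseconds."""
    return 1000.0 / target_fps


def fits_budget(target_fps: float, render_ms: float, enhancement_ms: float) -> bool:
    """True if rendering plus the enhancement pass fits inside one frame."""
    return render_ms + enhancement_ms <= frame_budget_ms(target_fps)


if __name__ == "__main__":
    for fps in (30, 60, 120):
        print(f"{fps} fps -> {frame_budget_ms(fps):.2f} ms per frame")

    # Made-up costs: 12 ms of rendering plus a 3 ms or 6 ms enhancement pass.
    print(fits_budget(60, render_ms=12.0, enhancement_ms=3.0))
    print(fits_budget(60, render_ms=12.0, enhancement_ms=6.0))
```

This only models the case where both passes run back to back on one GPU. The dual-GPU demo setup described below would change the picture, since the two workloads could overlap.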

The Hardware Story Is Interesting Too

The demos I saw were running on a pair of RTX 5090 GPUs. One was handling the game rendering, the other was dedicated entirely to running the DLSS 5 AI model. NVIDIA was upfront that there's still significant optimization work to do, and the plan is to ship DLSS 5 running on a single GPU when it launches later this year.

But I think the dual-GPU setup itself is worth mentioning. For years, multi-GPU gaming has been effectively dead. SLI is gone. CrossFire is gone. The idea that you'd run two graphics cards for a better gaming experience felt like a relic of the mid-2000s. And yet here we are, with a legitimate use case where a second GPU running an AI workload alongside a primary rendering GPU produces a dramatically better visual result.

Is that where this ends up for enthusiasts? Probably not at launch. But the concept of dedicating GPU compute specifically to AI-driven visual enhancement, separate from the rendering pipeline, is an interesting architectural idea. It wouldn't surprise me if that becomes a real conversation again as neural rendering matures.

Where This Goes From Here

DLSS 5 is targeting a fall 2026 launch, which means we've got several months of optimization and refinement ahead. Developers are just getting their hands on it now, and they'll need time to work with the controls and dial in the right settings for their specific titles. First-wave games include Starfield, Assassin's Creed Shadows, Resident Evil Requiem, Hogwarts Legacy, Phantom Blade Zero, The Elder Scrolls IV: Oblivion Remastered, Delta Force, and more.

It's also worth noting that this works across rendering approaches. Rasterized games, ray-traced titles, and path-traced experiences all benefit. And the higher the fidelity of the input, the better the output. DLSS 5 isn't replacing good rendering. It's amplifying it.

The early social media reaction is predictable. New technology that changes how games look will always generate strong opinions, especially when AI is involved. But the knee-jerk "it's just a face filter" take doesn't hold up once you've actually seen the full scope of what DLSS 5 is doing across an entire scene, across multiple games, in real time. Go look at a coffee maker. Go look at stone textures. Go look at the way light passes through a leaf. That's where the real story is.

What do you think, is neural rendering the next big unlock for game visuals? I'd love to hear from people who have spent time with these games.

Esquie

Member

yall remember this?

I think this is rad. I don't want it for every game, but man, a replay of Ghost Recon Wildlands where it looks like a real movie would be insane.

Lol at this AI interpretation of a car reverse driving down the highway:

Guardian Monkey

Member

Well, that answers if that's gonna be in the next GEN consoles.

Hawk The Slayer

Member

Fuck this A.I shit!

I'm perfectly fine with Pathtracing DLAA Framegen X2 or Pathtracing DLSS Quality Frame gen off in the future when things start being more demanding than RE Requiem.

Basically I'm good with Pathtracing forever and have no interest in this ultra demanding A.I crap, but I'm also not a man of my word, cause I always said I was fine with 1080p and 60 fps forever with my previous PC but went 1440p, over 60 fps, and pathtracing now with my new PC. So I'll see if I'll still keep this "promise" when I buy my future PC 6 to 8 years from now.

Fuck this A.I shit but I'll keep an eye out for it over the years to see how it improves, still fuck this shit.
