
Nvidia at Live GTC : DLSS 5

- Thread starter peish

- Start date

ResurrectedContrarian

Suffers with mild autism

The environments look fantastic in most of the demos.


I find it oddly funny that color in UI changes at 7:24

maybe we should reconsider this tech when it can actually tell what is UI and what is not

The demo clearly wasn't as integrated into the games, but when they release the tooling, you'd easily be able to send to this effect prior to overlaying your UI etc.

This really shows that's a screen space effect.

They said it's essentially just the color values + motion vectors, but it feels absolutely trivial to keep training these models on additional inputs from the engine like distance etc. I think they went for "very universal to plug in" for their first demos and version here, but the direction this is going, tighter integration will produce some amazing things before long.
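To make the ordering point concrete (run the effect on the scene first, overlay the UI afterwards), here is a minimal sketch on a toy frame of RGB tuples. `enhance` is a hypothetical stand-in for whatever screen-space effect runs on the image (here just a contrast boost, not Nvidia's model), and none of these function names come from a real SDK.

```python
def enhance(pixel, gain=1.5):
    """Hypothetical stand-in for a screen-space effect: boost contrast around mid-gray."""
    return tuple(min(1.0, max(0.0, (c - 0.5) * gain + 0.5)) for c in pixel)

def composite_ui(scene_pixel, ui_pixel, ui_alpha):
    """Ordinary alpha blend of a UI pixel over a scene pixel."""
    return tuple(u * ui_alpha + s * (1.0 - ui_alpha)
                 for s, u in zip(scene_pixel, ui_pixel))

def render_effect_then_ui(scene, ui, alpha):
    # The effect only ever sees the 3D scene; the UI is drawn on top afterwards.
    return [composite_ui(enhance(s), u, a) for s, u, a in zip(scene, ui, alpha)]

def render_ui_then_effect(scene, ui, alpha):
    # The effect runs on the finished frame, so UI colors get altered too.
    return [enhance(composite_ui(s, u, a)) for s, u, a in zip(scene, ui, alpha)]

scene = [(0.2, 0.4, 0.6), (0.7, 0.7, 0.7)]
ui    = [(0.9, 0.1, 0.1), (0.0, 0.0, 0.0)]
alpha = [1.0, 0.0]  # first pixel fully covered by UI, second pixel has no UI

print(render_effect_then_ui(scene, ui, alpha))
print(render_ui_then_effect(scene, ui, alpha))
```

With the effect applied before compositing, the UI-covered pixel keeps its original UI color; with the effect applied to the final frame, that same pixel is shifted, which matches the UI color change people spotted in the demo footage.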

dotnotbot

Member

Current DLSS5 look is basically this:

exaggerated lighting, fucked up contrast, oversharpening

It could look a lot better with some tweaking but Nvidia desperately wanted that wow effect. Now looking at comparison shots closely it doesn't seem to reinvent things but exaggerates them way too fucking much.


Unknown Soldier

Banned

John Bilbo

Gold Member

Current DLSS5 look is basically this:

exaggerated lighting, fucked up contrast, oversharpening

It could look a lot better with some tweaking but Nvidia desperately wanted that wow effect. Now looking at comparison shots closely it doesn't seem to reinvent things but exaggerates them way too fucking much.

The toned down effect makes much more sense.

mileslongthe3rd

Member

The first thing I will play with DLSS5 on will be Mass Effect Andromeda


John Bilbo

Gold Member

The first thing I will play with DLSS5 will be Mass Effect Andromeda

Gonna need DLSS6 for that one

adamsapple

Or is it just one of Phil's balls in my throat?

Current DLSS5 look is basically this:

exaggerated lighting, fucked up contrast, oversharpening

It would be good if people knew what those words mean before posting.

What you don't like is actually rim lighting (which is an ARTIST DRIVEN standard across all media, like movies etc.)

The picture is heavily tonemapped because, again, ARTISTS aka colorists want it to look like that, which is again pretty much standard across media.

And finally, the same people also add contrast because people like contrasty pictures in media.

AI didn't come up with that look. AI learned it from movies/photos etc., and that look is literally what "professional" grading looks like.

And the only reason you talk about it is because you people hate AI, not because you can spot anything of value. That kind of look has been completely standard across all media for like 30 years now.

Also this is not artist intent:

this was at the time:

The PS1 version was a limitation of the hardware, and if the artists had had access to modern graphics they would have made her look as real-life as possible, not this anime look.

So something like DLSS5 providing proper lighting is a natural continuation of the rise in graphics.

Just a ton of angry people, threatened by AI or tired of it, venting that it's making major inroads into their hobby.

If any of those shots were shown as a native game, none of you would even say anything; instead you would claim this is a next gen game etc. without a hint of rejection.


StreetsofBeige

Gold Member

Current DLSS5 look is basically this:

exaggerated lighting, fucked up contrast, oversharpening

It could look a lot better with some tweaking but Nvidia desperately wanted that wow effect. Now looking at comparison shots closely it doesn't seem to reinvent things but exaggerates them way too fucking much.

That's the problem with graphics effects.

Remember bloom, way back? Everything was blinding bright in your face. 100% unrealistic.

Same goes for RT and DLSS. It can technically make it look cooler, but sometimes (as you said) it's overdone like the studio has to jack up the effects every second to make a point of "Hey look at me!"

RT promos were the worst. Everything is shining and reflecting like everything is a mirror. In real life, just because something is glass or metal doesn't mean every light source bouncing off it is a giant reflection. I'm looking out my back room window right now. There's zero reflection. All I see is my own and my neighbour's backyard in clear view. Not one thing is reflecting off it like a mirror image.

So the whole thing about "realism" is total BS. It might be there if done more modestly. But to promote the feature and Wow gamers, the new effects could be amped up to stupid levels.


dotnotbot

Member

It would be good if people knew what those words mean before posting.

What you don't like is actually rim lighting (which is an ARTIST DRIVEN standard across all media, like movies etc.)

The picture is heavily tonemapped because, again, ARTISTS aka colorists want it to look like that, which is again pretty much standard across media.

And finally, the same people also add contrast because people like contrasty pictures in media.

AI didn't come up with that look. AI learned it from movies/photos etc., and that look is literally what "professional" grading looks like.

And the only reason you talk about it is because you people hate AI, not because you can spot anything of value. That kind of look has been completely standard across all media for like 30 years now.

So it's exaggerated lighting and contrast, gotcha

And the only reason you talk about it is because you people hate AI, not because you can spot anything of value.

"I know something and therefore I'm better than you".

I can instantly see something looks way off, don't need to be photographer or colorist to see that for example TV in vivid mode looks garbage and the same goes for those "realistic" AI images. Maybe I can't name it well but I can see it.


I can instantly see something looks way off, don't need to be photographer or colorist to see that for example TV in vivid mode looks garbage and the same goes for those "realistic" AI images. Maybe I can't name it well but I can see it.

That's the point. The only reason you think it looks off is because it was an Nvidia presentation on their use of AI in the new DLSS5. If this had been presented by Capcom as an upgrade to their own graphics, you would hail them as the best studio ever, one that creates insane graphics.

Anti-AI is pretty much a circlejerk these days. DLSS2-3-4 is also AI, but now that people have gotten used to it no one has issues with it, despite the fact that it literally does the exact same thing DLSS5 does. It adds detail that was not originally in the picture, the same way DLSS5 does. The difference is that the DLSS5 model learned how to properly simulate lighting on various surfaces in the game instead of only resolving low resolution detail to high resolution.

If I did a Cyberpunk 2077 NPC head comparison with path tracing on and off and slapped DLSS5 onto it, people would say the path traced heads look worse, simply because of the anti-AI circlejerk.

Mr1999

Member

Honestly I'm more surprised they didn't flex it properly. I'm also not one of those guys clutching their pearls like this violates the sacred artistic vision. Does it look off? Yes, there's a creepy jitter there, but I'm sure they will get it sorted out. I watched some of the videos on this and the guy thought it was just weird to have Grace dolled up like that when her you-know-what just died, but that's just an accessory that you can change. I'm actually waiting for full AI gameplay like some of those YouTube videos.


vanillaFace

Member

when the model of the video card is also the price of the video card, Nvidia.

yamaci17

Member

The demo clearly wasn't as integrated into the games, but when they release the tooling, you'd easily be able to send to this effect prior to overlaying your UI etc.

of course I know that. but the way devs implement these features doesn't really inspire much confidence, no?

even something basic like this needs a new model for it to get fixed. you can actually fix it with a mod too (it happens with HDR)


roosnam1980

Gold Member

Deep-Learned Super Slop


Rentahamster

Rodent Whores

It looks like ass now, but this is the worst it's ever going to look, and it will only get better. I think that with the right references to train on, it might turn out well. For example, if the training data was the same graphics, but rendered at higher resolution with full lighting effects and bells and whistles enabled, then theoretically, DLSS5 would create a filter that looks more authentic, would it not?

analog_future

Banned

Also this is not artist intent:

this was at the time:

The PS1 version was a limitation of the hardware, and if the artists had had access to modern graphics they would have made her look as real-life as possible, not this anime look.

So something like DLSS5 providing proper lighting is a natural continuation of the rise in graphics.

Just a ton of angry people, threatened by AI or tired of it, venting that it's making major inroads into their hobby.

If any of those shots were shown as a native game, none of you would even say anything; instead you would claim this is a next gen game etc. without a hint of rejection.

You really think if the artists could've chosen they would've done this instead of the anime look?

Do you think artists only draw because they can't take a picture? Do you think Studio Ghibli only exists because they don't have the skills to make their films look more like real life?

Are you retarded?


ResilientBanana

Member

Starfield is legitimately one of the ugliest AAA games this gen. It's baffling how a company with the backing of Microsoft can make something that looks so awful. DLSS5 was such an obvious improvement.

I mean, this comparison alone sells me on the tech by itself. If Bethesda had brought this out as an RT or PT update, people would be coming all over themselves to praise them for finally making a good looking game. Anyone who calls this a "Yassify" filter or whatever is absolutely lost and should have all their opinions ignored.

I agree. How does one look at this and say DLSS5 OFF was the artist's intent? Very clearly, they were limited by the Creation Engine.

StreetsofBeige

Gold Member

I agree. How does one look at this and say DLSS5 OFF was the artist's intent? Very clearly, they were limited by the Creation Engine.

Bottom pic looks like last gen dogshit. I'm surprised some people think it looks better than DLSS On.

Only way I can see it is if someone is simply just an anti-AI gamer. They prefer worse looking visuals for sake of preferring a graphic artist handcrafting something like DLSS On themselves than having the algorithm do it for them.

Basketball

Member

Starfield is legitimately one of the ugliest AAA games this gen. It's baffling how a company with the backing of Microsoft can make something that looks so awful. DLSS5 was such an obvious improvement.

I mean, this comparison alone sells me on the tech by itself. If Bethesda had brought this out as an RT or PT update, people would be coming all over themselves to praise them for finally making a good looking game. Anyone who calls this a "Yassify" filter or whatever is absolutely lost and should have all their opinions ignored.

I feel like yesterday was complete Bizarro world. Everything was ragebait.

Almost every example they showed was better than the previous image.

It turned Grace from PS3 Quality to almost the real life face model. Everything from Starfield improved instantaneously.

I remember the days of the ICE Enhancer mods for GTA 4 and people trying to make that game look like real life

and now, the moment we get closer to it, it's apparently just slop. Like, do people's eyes not work anymore?

It feels like it's just a collective effort from the anti-AI crowd and the dying feminist in games movement (Which is every single fucking Game Dev on Bluesky) mad that Grace got hotter somehow + All the Laid off and soon to be laid off Game devs, and Furry $%$ Artists who lost to AI generators

but it's from all over

Even the anti woke side hates it because Indians, and Nigerians on Twitter abused AI so much, and it's just a big mess

just this image alone should shut everyone up. I don't give a fuck. This looks amazing.

I really hope Nvidia, Bethesda keep going and don't listen to these fucking losers and cancel this great work, or water it down due to crybabies online.

If they came out with a PS6 and debuted Starfield or RE9 without saying the word AI, they would all be screaming from the hills that we were in the future of games.


You really think if the artists could've chosen they would've done this instead of the anime look?

Do you think artists only draw because they can't take a picture? Do you think Studio Ghibli only exists because they don't have the skills to make their films look more like real life?

Are you retarded?

If you showed this artist in the 90s how good their character could look, they would shit their pants and cry from joy.

Also this is modern aeris from remake:

Which still is limited by graphics because they don't have access to pathtracing in that game and path tracing is still not complete lighting to give you real life graphics (that would be proper non rasterized raytracing).

Notice that this aeris wears makeup just like the ai one you presented.


An RT or PT update wouldn't place a random sun in front of the scene and blow out the contrast though. PT and RT often make scenes darker with highlights.

It'll improve but the lighting on the majority of the examples is awful. I'd like to see what it does on a game with RT lighting already embedded to get a better idea.

While I do agree that the tonemapping/color correction/exposure is off, and I would bet there's even some sharpening going on, I'm not necessarily seeing any actual new lights placed in the above shot. To be fair, I think Starfield is an incredibly ugly game and needs reshade to look good in stock form.

I'll try to markup the images. Please excuse my poor MS paint skills. I'll point out what stands out to me between the images.

- Enhanced reflections. It appears that the floor does have shiny material properties, but the DLSS5 pass is bringing them out further than the stock image.

- Significant enhancements to the specular highlights on her jacket, specifically how light is interacting with the materials. If you notice, the highlights are following the same direction and overall look as the original, but the jacket's texture now appears to interact with the light, rather than being smoothed over. You can actually see the texture in the original image in 2a, so it's not like DLSS5 is inventing anything here.

- Significantly improved specular highlights on her lips and inclusion of more details in those highlights. There's clearly light coming from in front of her, as you can see with the specular highlighting circled in 3a, but the original shot doesn't do much with it on her lips. There's also more occlusion of her lower teeth. They're more easily visible in the original shot, while the DLSS5 image has them occluded by her mouth. This could just be due to animation, though.

- Improved occlusion of her skin by her eyebrow hair. The eyebrow rendering looks very next gen, which gives them a somewhat straw-like appearance. The DLSS5 image adds more occlusion, which helps with this problem.

- Significantly improved occlusion around the eyes. This is, to me, the single biggest enhancement the DLSS5 image brings. The eye rendering in Starfield is horrific and behind a lot of last-gen games. RDR2, TLOU2, etc. look like they come from different planets. In the DLSS5 image her eye sockets and eyelids are able to add shadows and occlusion that give her eyes a dramatically more human-like appearance. They also deemphasize the specular highlights, which are incredibly bright in the original image.

- Hair highlights and lighting. This could be due to the aforementioned color/contrast issues that you pointed out, but it appears that light is able to capture the differences in her hair color more in the DLSS5 image. Again, could be due to color changes, but I do like the effect.

- Overall skin texture and light interaction. Her skin in the original image is very flat and doesn't interact with light. There appears to be little, if any, subsurface scattering, and a lot of the texture detail is washed away. Her facial features are more affected by light in the DLSS5 image, which gives her face a lot more depth and makes it look more realistic, IMO.


manlisten

Member

This shot really highlights the issue for me. What you have here is very realistic texturing and lighting via DLSS, on top of very unrealistic anatomy. It just looks weird! The lighting, texture and anatomy of the character don't match up! You can't just cobble tech together like this. I'll wait to see what devs can do with these tools in their own hands, but nvidia really did a poor job of showcasing this tech.


While I do agree that the tonemapping/color correction/exposure is off, and I would bet there's even some sharpening going on, I'm not necessarily seeing any actual new lights placed in the above shot. To be fair, I think Starfield is an incredibly ugly game and needs reshade to look good in stock form.

Unfortunately I'm a UK pauper so can't see your artistic skills!


I agree with most of your comments about highlights and I think that's where the future of the tech is. It's a screen space technology, so for adding highlights to hair, clothes etc it's potentially a bit of a gamechanger long term.

The negative is the lighting in general though, (as well as the contrast / tone mapping etc which I think they will work on). The Starfield example may not be the best one due to the poor lighting anyway, but there's definitely hints of shading on the left hand side of the face and background which indicate a light source on the right in the original. That's blown out with DLSS 5 and it becomes hero lighting for want of a better word.

It definitely does introduce light sources that aren't there, check the Hogwarts background in the street where it's a slightly murky subdued scene in the original and becomes vibrant and full of light in DLSS 5. That's definitely not the intention of the background, as they'd just have made it light if they wanted. Where is the light source in the original? It doesn't exist, and it's been added by DLSS.

The issue is the same though really - it's a screen space feature, so won't be able to accurately place lighting on an environment. The best use of it would be to use path tracing to create the lighting at a base level and use DLSS 5 for all the benefits that you've listed. On its own, I can't see how Nvidia can get it working, as there's a fundamental issue with where it gets the data from, unlike RT / PT.

Edit - the tone mapping and contrast can be improved, but Nvidia have still got the same issue where it's working from the pixels on the screen rather than when building the scene. They can tone it down and make it more accurate but I can't see how it can accurately represent it. They've gone full-on vivid to try and impress people, and that can change but it hasn't got the information it needs for real accuracy.


Stafford

Member

In some videos I really like the lighting such as in Hogwarts Legacy, but it doesn't really look all that great for Oblivion and AC to me. Photo realistic? Yes. Especially so AC. But do these games need to look photo realistic? Not for me, not at all.

Same with the faces, some games just don't need it at all, Starfield definitely does but even then it looks....off? I have a hard time adjusting to this.


Honey Bunny

Member

I have to wonder if this was a pre AI technology would it be getting half the backlash

Trilobit

Absolutely Cozy

YeulEmeralda

Linux User

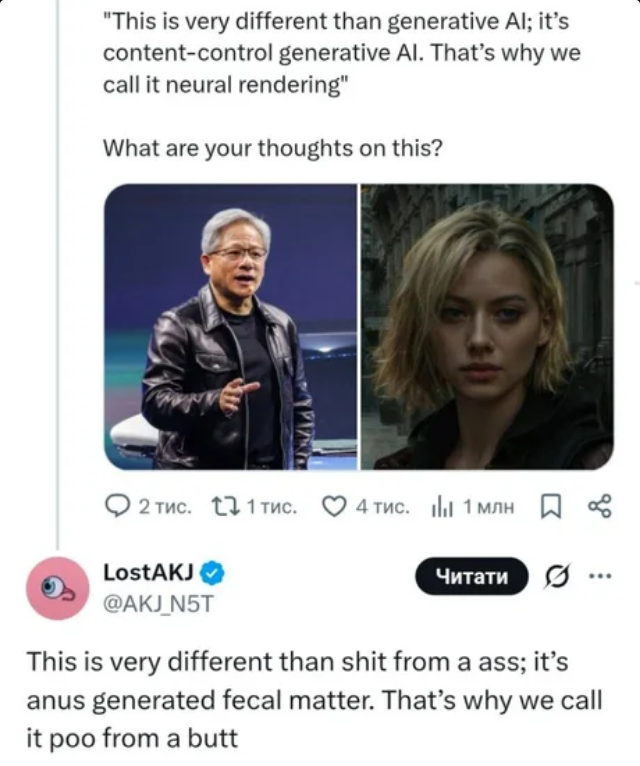

At least we got a lot of funny memes from Nvidia.

Wolzard

Member

I really hope Nvidia, Bethesda keep going and don't listen to these fucking losers and cancel this great work, or water it down due to crybabies online.

If they came out with a PS6 and debuted Starfield or RE9 without saying the word AI, they would all be screaming from the hills that we were in the future of games.

Nvidia and Bethesda are already used to the hate, but they observe that this is not reflected in reality, with sales and market share remaining high.

I think they'll continue talking about the technology, probably explaining it in more detail.

Nvidia's YouTube channel has featured several tutorials on neural rendering since last year. It's the future of graphics, and they're going to continue with it, especially since other companies are working on it too.

analog_future

Banned

I have to wonder if this was a pre AI technology would it be getting half the backlash

Most of the backlash it's receiving is because it looks bad.

bbeach123

Member

My reaction when I first saw Grace in the trailer was "WTF". At this point it's just pattern recognition: you look at it and immediately recognize the "AI slop". It doesn't look bad by any means, it's just the same boring AI gen face you've seen a million times before. The Leon comparison actually looked pretty good, I'm okay with that.

Then they hit me with that Hogwarts Legacy and Starfield shit and I gave up. The tech is kind of cool, but the showcase was horrible, a mistake. If they removed all the face comparisons and just did the background and environment stuff, then everyone would freaking love it.


IlGialloMondadori

Member

I have to wonder if this was a pre AI technology would it be getting half the backlash

Do you really have to wonder?

People reacted because it looked bad. They made Grace from Resident Evil yassified, and all the lighting was blown out.

It looked bad.

When nVidia's response is, "Yeah, well, we think it looked great and you're all wrong. And also, this amazing best thing ever? You can turn it off, okay?", that tells you everything you need to know.

SportsFan581

Member

I knew you guys would have fun with this one, and the thread doesn't disappoint.

Starfield is by far the best advertisement for it, since the models, particularly the faces, were visually weak in that one to start with. Hogwarts though, LOL, a bunch of old wizards in school.


Johnny Concrete

Member

That looks really bad.

You'll need a 3rd 5090 to let an AI fix the artefacts

Sethbacca

Member

The PS1 version was a limitation of the hardware, and if the artists had had access to modern graphics they would have made her look as real-life as possible, not this anime look.

Imagine looking at a game designed by Tetsuya Nomura and Yoshitaka Amano and calling it "this anime look". Not everything needs to look like real life, and frankly I'd prefer it doesn't always either. There's always room for style.

Absolute sacrilege. Might as well shit on Akira Toriyama and Dragon Quest while you're at it.


viveks86

Gold Member

While I do agree that the tonemapping/color correction/exposure is off, and I would bet there's even some sharpening going on, I'm not necessarily seeing any actual new lights placed in the above shot.

This is correct. There are no new lights placed in the above shot because there is no actual lighting or light physics. They are pixel predictions based on the original screen space. And clearly the model has been trained with too many images that have front facing lights. So yes, every face will get "lit" like they are in a studio, but the lights themselves are non-existent. You are not going to see any side effects from front lighting because it is not being simulated or even approximated. It is being guessed wherever it thinks it is pleasing to the viewer, like how a professional wedding photographer would set up a scene with reflectors, ring lights and light boxes. You will see that behavior with every model, indoors or outdoors, with this technique.

And that's a bit of a rubbish approach, because everyone's bullshit detector will go up in a 3D game where you would expect some logic to what should be lit depending on where they moved within the world. Most are seeing it already, but others will see it when they get their hands on it. Everyone will float around like FW Aloy with hero lighting, everywhere, all the time. Unless their movements were hand choreographed throughout the game with the intensity sliders built into the SDK.

What the model does well though is guess better hair strands and SSAO around extremely complex objects like hair and foliage, and so you'll consistently see benefits from that. But all AO is screen space here. So the moment the models change positions, the results will change and exhibit artifacts. That's simply how screen space techniques work.

Outside of the "photorealistic" facial features and hair, literally everything else can be matched with traditional screen space techniques. So if you remove the facial "lighting" enhancements that even proponents of this technique are questioning, the whole remainder of techniques can be written into a single reshade filter (SSAO + SSR + screen space shadows + tone mapping and contrast) without any use of AI. You don't need a 50 series graphics card for that. Or even 40 or 30. This thing lives or dies by how much people really want hero lighting and "AI-enhanced" facial features on all their characters.
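For reference, the tone mapping and contrast part of such a filter is plain per-pixel math with no AI involved. A minimal sketch, assuming linear HDR luminance values as input and using the well-known Reinhard operator x / (1 + x) followed by a simple contrast curve around mid-gray. The function names and the `gain` and `pivot` parameters are illustrative only and are not taken from ReShade or any Nvidia SDK. SSAO, SSR and screen space shadows need depth and normal buffers and are not shown here.

```python
def reinhard(x):
    """Reinhard tone mapping: compresses HDR values in [0, inf) into [0, 1)."""
    return x / (1.0 + x)

def contrast(x, gain=1.3, pivot=0.5):
    """Scale the distance from the pivot, then clamp to the displayable range."""
    return min(1.0, max(0.0, (x - pivot) * gain + pivot))

def grade(hdr_pixels, gain=1.3):
    """Tone map, then add contrast, for a list of HDR luminance values."""
    return [contrast(reinhard(v), gain) for v in hdr_pixels]

# Dark, mid, bright and very bright input values.
print(grade([0.0, 1.0, 4.0, 100.0]))
```

Raising `gain` pushes shadows darker and highlights brighter, which is roughly the "vivid mode" look being complained about in this thread; a value of 1.0 leaves the tone mapped image untouched.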


adamsapple

Or is it just one of Phil's balls in my throat?

Esquie

Member

People reacted because it looked bad. They made Grace from Resident Evil yassified

Jensen's biggest mistake was leading with the Grace comparison. Every "AI slop" spammer on the internet is reee'ing about Grace. They should've started with environment examples or even Starfield. Just never should've done the Grace one.

Honey Bunny

Member

Do you really have to wonder?

People reacted because it looked bad. They made Grace from Resident Evil yassified, and all the lighting was blown out.

It looked bad.

When nVidia's response is, "Yeah, well, we think it looked great and you're all wrong. And also, this amazing best thing ever? You can turn it off, okay?", that tells you everything you need to know.

Are there any pictures in your view where it looks good? Do you think it might improve over time?

The first thing I will play with DLSS5 on will be Mass Effect Andromeda

I don't think humanity has developed the technology required to fix Andromeda. There are some things even Leather Jacket Jensen cannot fix.

Jensen's biggest mistake was leading with the Grace comparison. Every "AI slop" spammer on the internet is reee'ing about Grace. They should've started with environment examples or even Starfield. Just never should've done the Grace one.

The environments, especially the exterior ones, have the same fundamental issue though: there's no light source on screen, so it's deriving one instead and thinks there's a bright sun in these sorts of scenes.