What is bad? The tech? Or how it's being used? Because those are two totally different things.

Well, the biggest problem for now is how it's being used, for sure. It's like a free 'get out of jail' card, and Starfield is a great example of that (another would be Mass Effect Andromeda). For the tech itself - for now I'm not sure, I would need to get more details and see how it works and if it can be used in a good way. Still, it is a bit like cheating (the whole lighting part) and this is something that needs to be examined. Maybe it can be used in a good way (but then it would be less flashy for marketing).

Becomes older... lol. That's not because the lighting changed, that's because for whatever reason devs suck at making good pre-teen or teen models. This has been going on for decades.

I know something about that stuff. I have some experience working on games, and especially on faces. Making a good teen or child is hard on many levels, and skill is usually the lesser problem. Sometimes it's just that a young human in game looks bad (especially to management) and you need to change that. Sometimes it is more about the tech and tweaking it (but the question is whether you have time for that, because children usually aren't main or even secondary characters). It can even be an artistic decision.

This new tech doesn't make them look older, it makes certain things obvious. Like take that Hogwarts screenshot going around of the guy. Be honest, look at the DLSS off image... does that really look like a 15yr old to you?

It makes those subtle things (like wrinkles or skin imperfections) exaggerated, and that's the problem. In the original shots it is fine and consistent. I can't say much about the age (like USA teens look more adult than in my country), but it looks somewhat believable/acceptable even if it's not actually a 15 yr old boy. It looks like a high pass filter that is being used for making something look more detailed. There were lots of pictures on the web that looked like that and they were tagged as 'HDR'.
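To show what I mean by a high pass boost, here is a minimal sketch in plain Python. It is only an illustration of the generic sharpening trick (blur the signal, then add the difference back), not how DLSS actually works, which I don't know. The function names, the 1D "skin brightness" values and the `amount` parameter are all made up for the example.

```python
def box_blur(signal, radius=1):
    """Low-pass step: average each sample with its neighbours (edges clamped)."""
    n = len(signal)
    out = []
    for i in range(n):
        lo = max(0, i - radius)
        hi = min(n, i + radius + 1)
        window = signal[lo:hi]
        out.append(sum(window) / len(window))
    return out


def high_pass_boost(signal, amount=1.0, radius=1):
    """Add the high-frequency part (signal minus blur) back on top of the signal."""
    blurred = box_blur(signal, radius)
    return [s + amount * (s - b) for s, b in zip(signal, blurred)]


# Hypothetical brightness values across a patch of skin with one subtle dip
# (think of a small pore or wrinkle).
skin = [0.50, 0.50, 0.45, 0.50, 0.50]
boosted = high_pass_boost(skin, amount=2.0)

print("original:", skin)
print("boosted: ", [round(v, 3) for v in boosted])
```

The dip gets darker and the pixels next to it get brighter, so a detail that was barely visible becomes the thing you notice first. Flat areas stay the same, which is why the overall image still looks "correct" while the face reads differently.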

What I can say for sure is that it changed the character of the face. Shadows are deeper, and this is what defines shapes. It even looks more glossy. The thing is that you could try to tweak many things to match this - make roughness/gloss smaller/higher, bump up the specular a bit, maybe make the normal map for pores more aggressive or use a cavity mask to make them more noticeable (or that also might be subsurface scattering being too aggressive for normal maps). Still, I bet it would look different, because it changed some characteristics of the face. I can't find any high poly screens for this face to be 100% sure. That information isn't on the mesh; maybe it is on the normal map, but I doubt it.

However, like I said for DLSS back in 2019, and for PSSR... what I will say is whatever shortcomings there are... will get better in time. That's kinda how AI works.

That is true.

Ok now this is bullshit. First off, RT (or more specifically, lighting) is the absolute holy grail or most important thing in graphics rendering. It's the single most valuable asset to how a game actually looks. And any new tech... has a cost.

Yes, it is very important and makes everything look more real. And RT has a big cost to it, PT even bigger. Sure, thanks to Nvidia it is possible in real time to some extent, but you still need to make some exceptions, simplifications, sometimes degradations. I do like RT and PT, and I would like to be in a time when every single GPU can handle them with good results so that we don't need a fallback to the old methods (that was also one of the proclaimed pros of RT: that lighting a game would be easier and less time consuming - for now it is the opposite). But we aren't in that time yet. This is only my opinion - RT was pushed out too early, but this is what Nvidia needed at that time to grow bigger and stronger on the market. Now it's the same with AI, although they don't need gaming any more for that (maybe for making people feel better about AI when it works). Sure, it works, but maybe the cost is actually too big. But it was already decided, and people get used to it/expect it even though it has some negative effects (like Game Pass).

Do you think when we went from sprites to 3D it didn't come at a cost? Do you think when we went from 480p to 4K it didn't come at a cost? When we went from forward rendering to deferred it didn't come with a cost? There has always been a cost associated with innovation, and then that is always followed with innovative ways to alleviate that cost.

Yes, I'm not saying you are wrong with this thinking, but then the question is: when is the cost too much? Maybe you should slow down (I know the corporations are afraid of those words). For sure the AI market should stop (but it won't) and rethink the whole approach - even some of the people who helped develop AI are saying that. It is driven by corporate greed and not by common sense. And this is what has a big influence on our world. I feel like the tech upgrades (like GPU power) have become small and now AI has become this easy solution for growth. Maybe I am wrong and this is the right way, but it feels wrong in a way. But as I said, this is my opinion and I can't force anyone to have the same.

And your takes are actually questionable. Gaming has ALWAYS been about smoke and mirrors. It's like using sprites to look like smoke or grass rather than actually rendering grass or volumetric fog. Or culling polygons or using LODs... reconstruction is no different. It's a better way to utilize hardware, why spend 100% of your resources vs 30% for a less than 5% visual gain?

I understand what you mean. Yes, we have been using tricks from the start, even normal maps are just that. But every trick has its weaknesses. I thought about reconstruction as this magic thing that can turn a 1080p game into 4K. When I was reading opinions or watching videos about the subject, it looked that way. Then I started to play those games (well, even work in engine showed me that DLSS can damage simple things like navigation and UI) and I noticed that it's not that great. RE9 or FFVII Rebirth are good examples of games that are touted as great on that front, but I see many downsides. And sure, with RE9 there is a problem with the denoiser (so RT), but even without it there are issues. And I'm not looking for them on purpose, they are just there saying hello and waving. So I'm not sure if it's really a better way if it makes the image less stable. But to be fair, there were many screen space effects before that, and they also made the image less enjoyable, especially in motion.

I love AI... I do not like how it's used sometimes. But that's the issue here. There is a difference in that.

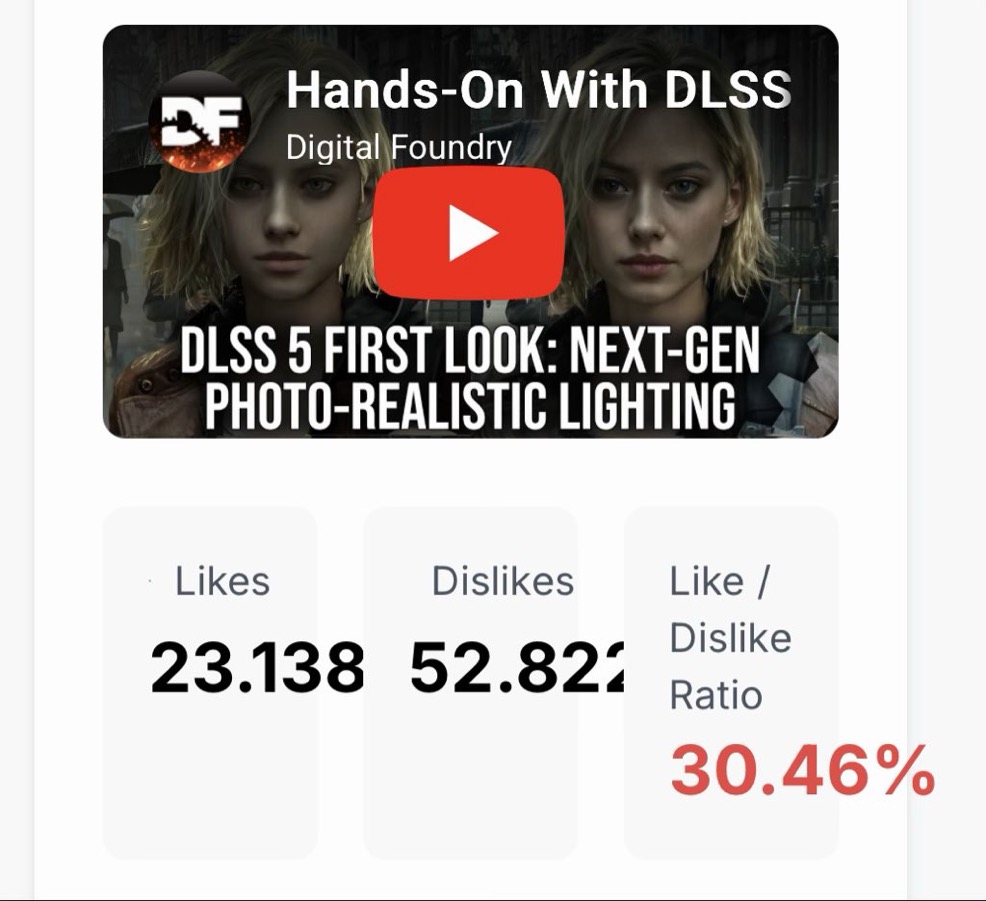

Agreed. Maybe not about loving AI, but I know there is potential in it. I just don't think that what DLSS5 shows is what I want from it. Anyway, the future will tell. I only hope that enough people keep talking about the problems that it forces the developers/engineers at Nvidia to make it good. For now, what they showed looked bad.