daninthemix

Member

Also, this Grace would appear to have been authored true to what the developers wanted anyway

The author's intent is irrelevant, it's what the pitchfork wielding masses want that matters.

Arachnid

Member

I'm with you tbh. I like it.

Yeah this explains why your takes are so bad on this. You see it and it reminds you of AI generated porno girls and that's that: urggh gross no good take it away.

You just refuse to engage your brain and think about it rationally.

It radically enhances lighting quality (or the appearance of it in a digital image, whatever). This is good, but has certain implications because it's going to radically change how some assets present. So the effect might be more or less pleasing depending on preference. But the tech is still fantastic.

Also, this Grace would appear to have been authored true to what the developers wanted anyway

In other words, social media keyboard warrior gamers overreacted, as usual.

Every picture I've seen so far has been an improvement.

jasondefaoite

Member

Not really - no. DLSS is reprojecting samples from past into current frames and using AI to drive the heuristics of the algorithm instead of being analytical.

I think my inference is basically accurate: this is to lighting detail as DLSS 2-4 is to resolution. Right?

While some data gaps do get filled in - the ground truth (if we were to have fully rendered samples without realtime constraints) exists, and is always possible to compare with.

If you say source image is not it - then there's no defined 'accurate' / ground truth to begin with. Nor - as apparent by some of the examples - is there ground truth for detail on screen - as that gets made-up on the spot too.

It's using inputs to predict a more accurately lit image.

Calling it 'prediction' let alone 'higher accuracy' is circular reasoning. It's simply an artist re-interpretation of the source image. "Artist" in this case being the AI model (or maybe NVidia scientists that trained it - if we need human to call it art).

Not from an RGB buffer alone. Also specific design intents will be more murky yet. If you're trying to argue that human creative outputs are fundamentally algorithmic and should be ignored because prediction by 'best guess' is good enough - be my guest, but now we're squarely in the realm of 'who needs humans in product creation loop at all' as an argument.

Surely this is possible from inference right?

And I'm not having that debate on a forum.
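The ground-truth point above can be made concrete with a small sketch. It assumes an offline reference render of the same frame exists (which is the condition the post says holds for DLSS upscaling); the function names and the list-of-RGB-tuples image layout are illustrative only, not anything from Nvidia's tooling.

```python
import math


def mse(image, reference):
    """Mean squared error over all channels of two same-sized images.

    Images are rows of (r, g, b) tuples with values in 0-255.
    """
    a = [c for row in image for px in row for c in px]
    b = [c for row in reference for px in row for c in px]
    if len(a) != len(b) or not a:
        raise ValueError("images must be non-empty and the same size")
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def psnr(image, reference, peak=255.0):
    """Peak signal-to-noise ratio in dB; infinite when the images match."""
    err = mse(image, reference)
    return math.inf if err == 0 else 10 * math.log10(peak ** 2 / err)
```

Without a reference image to pass as `reference`, neither number can be computed, which is the objection being raised about calling the output "more accurate".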

CuteFaceJay

Member

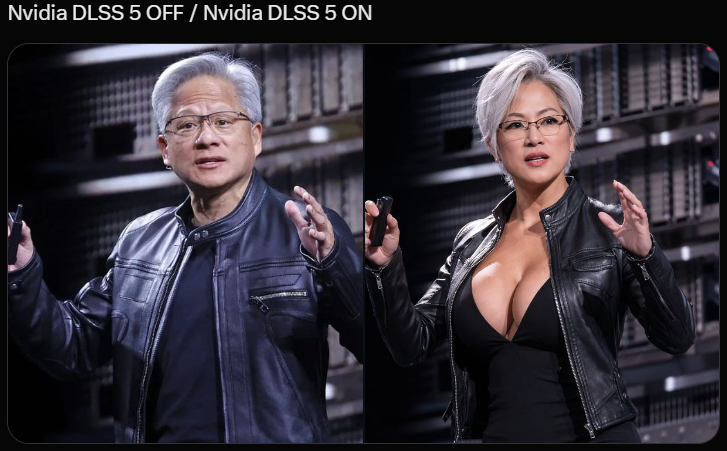

Our Lord with DLSS5 Enabled!

Tomorrow

Member

did you use AI to make that?

DLSS5 already old. DLSS6 is coming

funking giblet

Member

You could get lighting direction by generating millions of highly detailed models, with lighting of different quality, direction, intensity etc, and labelling each one as such. So imagine you took Leon and generated an image by putting a soft light into the scene at a certain direction and distance. Then capture an image, move the light and repeat in all directions until you get thousands of images, then change the lighting style, colour, poses etc. While doing this, you also label what your settings were and feed this into your AI, repeated for thousands and thousands of models, scenes, backgrounds etc, until your training corpus is an automatically generated encyclopedia of millions of images, all labelled with the lighting information, colour, vectors etc. Then when you generate your new image, you provide this data (vectors might be all you need) along with the source image, and watch it go!
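A rough sketch of the labelling loop described above, just to show the shape of the data. Everything here is hypothetical: `LightLabel`, `build_labels` and `capture` are made-up names, and `capture` only returns a record where a real pipeline would call an offline renderer. Nothing is known about how Nvidia actually builds its training set.

```python
import itertools
import math
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class LightLabel:
    model: str         # e.g. "leon"
    style: str         # e.g. "soft" or "hard"
    distance: float    # light distance from the subject
    intensity: float
    direction: tuple   # unit vector from subject towards the light


def light_directions(n_azimuth, n_elevation):
    """Yield unit vectors spread over the upper hemisphere."""
    for i in range(n_azimuth):
        azimuth = 2 * math.pi * i / n_azimuth
        for j in range(n_elevation):
            elevation = (math.pi / 2) * (j + 0.5) / n_elevation
            yield (
                math.cos(elevation) * math.cos(azimuth),
                math.sin(elevation),
                math.cos(elevation) * math.sin(azimuth),
            )


def build_labels(models, styles, distances, intensities,
                 n_azimuth=8, n_elevation=3):
    """Enumerate every combination of subject, style and light placement."""
    labels = []
    for model, style, distance, intensity in itertools.product(
            models, styles, distances, intensities):
        for direction in light_directions(n_azimuth, n_elevation):
            labels.append(
                LightLabel(model, style, distance, intensity, direction))
    return labels


def capture(label, index):
    """Hypothetical stand-in for rendering: pair an image name with its label."""
    record = asdict(label)
    record["image"] = f"{label.model}_{index:06d}.png"
    return record


labels = build_labels(["leon"], ["soft", "hard"], [1.0, 2.0], [0.5])
corpus = [capture(label, i) for i, label in enumerate(labels)]
```

Each record in `corpus` carries the light vector alongside the image name, which is the "vectors might be all you need" input mentioned at the end of the post.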

Stafford

Member

She turned into Amber Heard!

Reizo Ryuu

Member

I think this is very important to state, because people are already running with "well capcom devs worked on the demo so this is what they wanted", even though that's impossible.

And they manually achieved this on a Console at 60fps.

Capcom has thousands of employees, there's absolutely no way they gathered the dozens of artists that all worked on the dozens of aspects that are required to make the final scene all come together, and then asked them all to go over the DLSS 5 controls to make sure it's what they intended.

And then have the art director and cutscene director sign off on the final result.

I mean look at the image of Leon, he's much more sharply lit than the original image that, as you said, capcom manually achieved; they have all the controls in-engine to adjust cutscene lighting to their choosing, so if the cutscene director actually wanted that sharper/brighter lighting, he obviously would've done so, manually.

So no, "capcom" didn't work on this, the more likely scenario here is that nvidia contacted PR at capcom, who dropped it at the producers, who then dropped it at their engineers because this relates to "tech", who then in turn just implemented it and went

And then sent it back to nvidia.

The result in lighting, especially in the leon image, is too drastically different from what the cutscene director manually set for the light intensity/brightness

Not really - no. DLSS is reprojecting samples from past into current frames and using AI to drive the heuristics of the algorithm instead of being analytical.

While some data gaps do get filled in - the ground truth (if we were to have fully rendered samples without realtime constraints) exists, and is always possible to compare with.

If you say source image is not it - then there's no defined 'accurate' / ground truth to begin with. Nor - as apparent by some of the examples - is there ground truth for detail on screen - as that gets made-up on the spot too.

Calling it 'prediction' let alone 'higher accuracy' is circular reasoning. It's simply an artist re-interpretation of the source image. "Artist" in this case being the AI model (or maybe NVidia scientists that trained it - if we need human to call it art).

Not from an RGB buffer alone. Also specific design intents will be more murky yet. If you're trying to argue that human creative outputs are fundamentally algorithmic and should be ignored because prediction by 'best guess' is good enough - be my guest, but now we're squarely in the realm of 'who needs humans in product creation loop at all' as an argument.

And I'm not having that debate on a forum.

I really feel like you're just trying to blind me with science. I don't think the underlying logic of this tech is as subversive as you're making out. I think it's using some huge set of training data (or whatever the precise term would be) to take a somewhat accurately lit image and add more lighting detail to come to an overall more accurate version, ie closer to what an advanced path tracer would produce.

That has to be something close to what this does. All the comparisons show no adjustments to anything in the image that couldn't in some way be described as "lighting" - shadows, ao, reflections, gi etc. And indeed that is exactly what Nvidia says it does!

So I dunno really. Not sure what else I have to say.

deeptech

Member

There is something quite queer going on with the way some people reacted to this. Do they really hate it, or are they jealous about not having a good PC or w/e? I mean this tech is literally on the level of reshade mods (only a bit more highbrow), you don't have to use it, it won't be pushed on to you, and even if it was, how would you know? The amount of forced/fake/impotent cynicism and hate is crazy.

daninthemix

Member

Bullying someone because you don't agree with their opinion. Yes, that's definitely the higher ground here.

MiguelItUp

Member

When I first saw images of all this, I thought it was completely fake. I mean, in some cases of materials, lighting, and the overall environment it looked pretty damn good. But the character models look so bizarre to me, especially in motion. It creates this bizarre uncanny valley feeling, especially when you notice some of the character models appear to be reconstructed in a way that just looks or feels bizarre. In other cases it really does just look like an Instagram / Snapchat filter that just covers everything in that particular AI look that you can immediately recognize, and I think that looks absolutely awful. I'm happy for those that are excited about this, but for me, I'm much happier sticking to the look of the game that was initially intended, not some layer that can "improve it" while completely changing the look of certain characters and models. I still can't believe how Grace looks in that one image, lmaooooo.

adamsapple

Or is it just one of Phil's balls in my throat?

One more for the road.

BennyBlanco

aka IMurRIVAL69

adamsapple

Or is it just one of Phil's balls in my throat?

TheAmazingSpiderMan

Member

There is something quite queer going on with the way some people reacted to this. Do they really hate it, or are they jealous about not having a good PC or w/e? I mean this tech is literally on the level of reshade mods (only a bit more highbrow), you don't have to use it, it won't be pushed on to you, and even if it was, how would you know? The amount of forced/fake/impotent cynicism and hate is crazy.

lol, I didn't realise GAF had this many PC gamers, it's either A- their rigs won't be able to achieve this as most steam users are still on older cards, or B - console fanboys are upset they won't be able to access this tech any time soon, but don't worry when AMD and Sony do it, it will be amazing to them lol.

And yeah for an 'option' this is getting a lot of hate for no reason. I for one cannot wait to see this on games in the future.

Guilty_AI

Member

This literally needed two rtx 5090s to run, so i think it's safe to say no pc or console users are getting this any time soon

A- their rigs won't be able to achieve this as most steam users are still on older cards, or B - console fanboys are upset they won't be able to access this tech any time soon, but don't worry when AMD and Sony do it, it will be amazing to them lol.

s_mirage

Member

lol, I didn't realise GAF had this many PC gamers, it's either A- their rigs won't be able to achieve this as most steam users are still on older cards, or B - console fanboys are upset they won't be able to access this tech any time soon, but don't worry when AMD and Sony do it, it will be amazing to them lol.

And C, AI, and AI art in particular, is a current cause célèbre for the terminally online to performatively whine about.

Reckheim

Member

heh, that's good.

I didn't see it before but thanks to nVidia...

ChorizoPicozo

Member

The hate towards DLSS 5 Needs to be studied

Throttle

Member

What is going on here?

TheAmazingSpiderMan

Member

I believe by the time they release it, it will run on 1 card, and they must be making big strides for the 60 series cards if they want this to be adopted.

This literally needed two rtx 5090s to run, so i think its safe to say no pc or console users are getting this any time soon

Vick

Member

Such as?

And sure with RE9 there is a problem with the denoiser (so RT) but even without it there are issues. And I'm not looking for them on purpose, they are just there saying hello and waving. So I'm not sure if it's really a better way if it makes an image less stable

Could you give examples, because aside from the RT noise there's not a single "issue" as you call it that I've seen in 4 consecutive playthroughs. Aliasing on DOF edges is not due to ML upscaling. I found it virtually flawless all the time, and for damn sure much more stable and clean than any alternative there has ever been, including native resolution that needs to be temporally stable. All I've seen is perfect motion clarity, perfect hair strand rendering, no ghosting, no trails, no hallucinations, razor sharp detail.

This is the extent AI should be used for. Nvidia paved the way for this incredible achievement, before deciding to taint the legacy entirely by attaching this GenAI to the DLSS name.

DerivedDataCache

Member

The memes oh my lord lmao.

DF thought they had the scoop of the century and it turned into a tidal wave of hate.

roosnam1980

Member

Kokoloko85

Member

Wolzard

Member

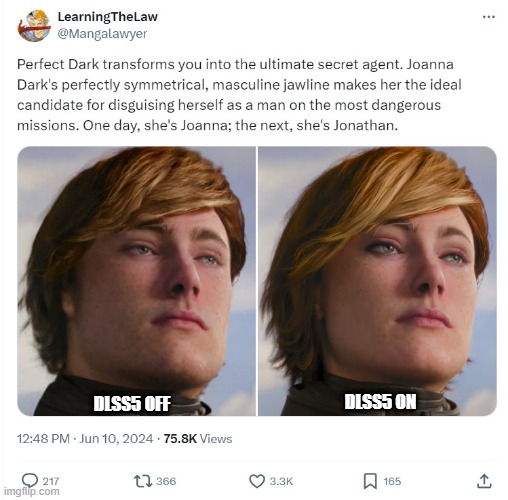

Same people who crap on DLSS5 , were praising this basic, trash ass update, Pissr 2, just hours ago.

How can you say this is amazing

And this is terrible?

We are done here. Some folks need new eyes. Or are just trolling.

Large amount of "gamers" didn't like dlss or frame generation.

Now same large amount of "gamers" use those two almost constantly and rage if game doesn't support them.

When AMD added FSR it was "a total win fucking nvidia." and whole artificial frames/lolresolution completely died off.

It's basically mix of people who don't have nvidia or won't be able to use it (console gamers) feeling threatened. Once it arrives to them they will change 180.

Sony is working precisely on that. When it's revealed on the PS6, we'll see many people saying that Mark Cerny is a genius.

Visual Computing Group: An Exciting New Chapter

Sony Interactive Entertainment’s Visual Computing Group is advancing game rendering and streaming to power the next generation of play.

Fair enough, the way you say it now it's more nuanced. I'm calling out the youtubers who say looks like shit. If the Fifa or AC shots look like shit, what does that make the non DLSS ones?

In the end we're all just nerds and it's fun to argue about this.

Just for the heck of it asked my 9 year old daughter which shots she thought looked better and she consistently chose the DLSS ones.

I guess we need more footage before judging and I wanna see how it looks in motion, hope they release a lengthy video soon.

But seriously, you think Nvidia would be invested in this tech if the face changed every fucking minute or at the different angles? C'mon now.

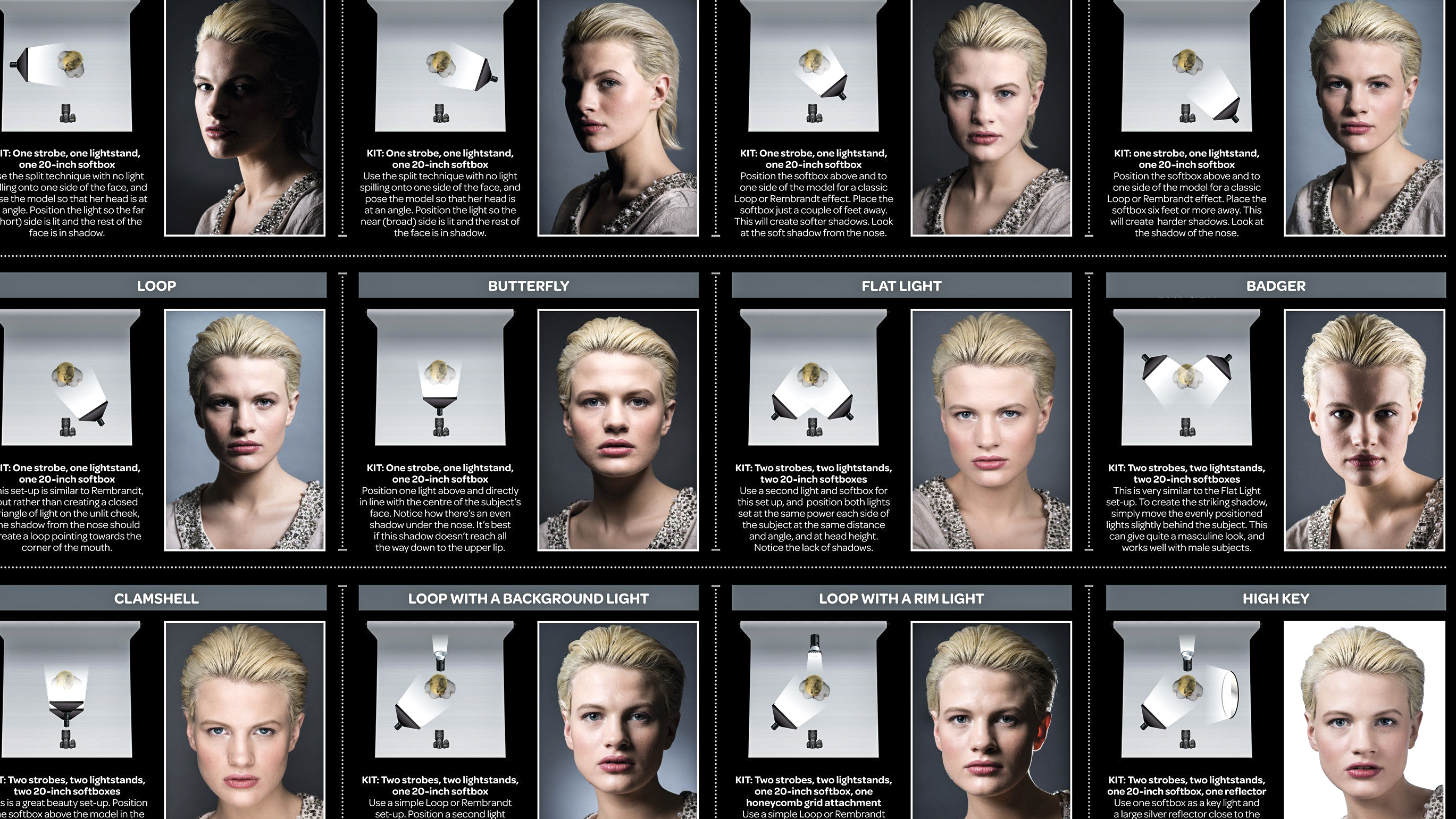

Just different lighting to me :

Most people have no idea how much of a difference lighting makes in a scene. Many of those who complain are simply going along with the crowd, they don't understand what they're talking about.

Gamer_By_Proxy

About to beat off

I'm starting to think this only seems somewhat good cause Bethesda won't change engines. Makes their character models not look like complete ass for once.

LQX

Member

Reminds me of when HL2 mods became a thing. Some made the environments look amazing and more hyper-realistic but then made Alyx look like Adriana Lima which threw shit off. I still think it is impressive though and I wonder what it will do for games like Forza, Flight Simulator and Ace Combat which are already close to photorealistic.

Larxia

Member

I saw a post on reddit where they explain that the tonemapping difference is the biggest issue.

They made some comparison images where they kept the DLSS5 but with original tonemapping:

Image comparisons

I still hate it, but it does look less AI mobile / Fake game Ad / Grok-ish.

Wolzard

Member

Epic Games developer:

All you guys roasting DLSS 5 like it doesn't look better/is detracting from art direction are absolutely insane. The lighting and shading improvements are bonkers. If that was shown as a next-gen hardware reveal and not "AI" you guys would be going nuts like the Watch Dogs demo.

I get that some very vocal people don't like AI. But guess what. Technology doesn't care if you like it. It is a tool. AI isn't coming. It is here. Just this morning my oncologist was telling me all the ways it is helping cancer treatment and research.

We aren't far from the day when it will be easier than ever to make the dreams in your head a reality. Are you so ignorant as to not recognize what that will enable for marginalized creators? Or any number of industries!? Yes I get it. The transition that it will cause in the economy will be painful for some people, just like it was painful when the Internet destroyed the mail order business. Or just like it was painful when cell phones eliminated most of the landline business in the country. Or just like it was painful when candlemakers were put out of business by Edison.

For instance, what do you think is a more likely outcome: the entire industrialized world suddenly puts aside its own self interest to stop climate change, or AI models working alongside scientists figure out novel ways of removing carbon from the atmosphere? Spoiler alert, it's the latter. Anyways, to end my rant, maybe it's a better idea to quit being chronically online and complaining short sightedly about incredible technological advances that you don't fully understand.

Vick

Member

I saw a post on reddit where they explain that the tonemapping difference is the biggest issue.

They made some comparison images where they kept the DLSS5 but with original tonemapping:

Image comparisons

I still hate it, but it does look less AI mobile / Fake game Ad / Grok-ish.

At first it felt like "Yeah, this is it", but the result we see is actually not just tonemapping. The before and after have been simply merged, like 50% transparency, very noticeable in the Starfield one by looking at the person on the back.

What this does prove however is that the current DLSS5 would look substantially better with 50% intensity, and maybe no change in tonemapping.. which would make no sense however, as this is apparently born as a lighting enhancer (and screw real lighting progress such as ray tracing/path tracing).
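For anyone wanting to try the "50% intensity" idea on their own screenshots, here is a minimal sketch of the straight linear mix described above. It assumes two same-sized frames (DLSS off and DLSS on) already loaded as rows of (r, g, b) tuples; `blend` and `strength` are names made up for the example, not an Nvidia setting.

```python
def blend(before, after, strength=0.5):
    """Linear mix of two same-sized images.

    strength=0.0 returns the original frame, 1.0 the processed one,
    0.5 the half-transparency merge seen in the reddit comparisons.
    Pixels are (r, g, b) tuples with values in 0-255.
    """
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be between 0 and 1")
    return [
        [
            tuple(round((1 - strength) * b + strength * a)
                  for b, a in zip(px_before, px_after))
            for px_before, px_after in zip(row_before, row_after)
        ]
        for row_before, row_after in zip(before, after)
    ]
```

Note this mixes the final images only, so it dials down every change at once (faces, tonemapping, lighting) rather than isolating any one of them.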

Kokoloko85

Member

i wonder how many people crying about this are console owners or don't have a 5000 series gpu.

i'll be enabling this as soon as it's available. what an improvement. i'd hate to be a console only gamer next gen.

It's not just console owners though. And I'm sure the tech will be improved and used properly, but for now, it's funny to see how they thought this looks good.

Console gamers will be fine with their graphics and art styles.

Stafford

Member

It really isn't all bad.

Obviously Grace on the street is just weird, but the later part isn't bad at all. For some reason it really isn't too bad at all for Leon either.

And then when you see Oblivion and also Hogwarts with the better lighting ....I honestly can't hate. Locations and lighting do look better. The old lady and student from Hogwarts on the other hand....nah!

Larxia

Member

Yeah I thought about that on the starfield screenshot too, the faces too are much less disturbing, so it's just a lowered strength. And you're right, it looks better if it's less strong, more subtle, but then at that point it's less noticeable, so it's just pointless and proves that it looks bad and the original is better

At first it felt like "Yeah, this is it", but the result we see is actually not just tonemapping. The before and after have been simply merged, like 50% transparency, very noticeable in the Starfield one by looking at the person on the back.

What this does prove however is that the current DLSS5 would look substantially better with 50% intensity, and maybe no change in tonemapping.. which would make no sense however, as this is apparently born as a lighting enhancer (and screw real lighting progress such as ray tracing/path tracing).

Isn't it about time we get 5090 SLI back*? On one card? Those were all the rage back in the day.

This literally needed two rtx 5090s to run, so i think its safe to say no pc or console users are getting this any time soon

That $5000 MSRP is just there - ripe for the picking.

*RAM still sold separately.

If you think about it, we really shouldn't even have settings in games, as it could ruin the artistic intent.

I saw a comment about how this ruins artists intentions, I agree, we should stop optimizing games because that is ruining the artist intentions. Any downgrade from a reveal trailer should be illegal too

Uh, mommy?

I like when auntie from nvidia comes for a visit