Rocinante618

Member

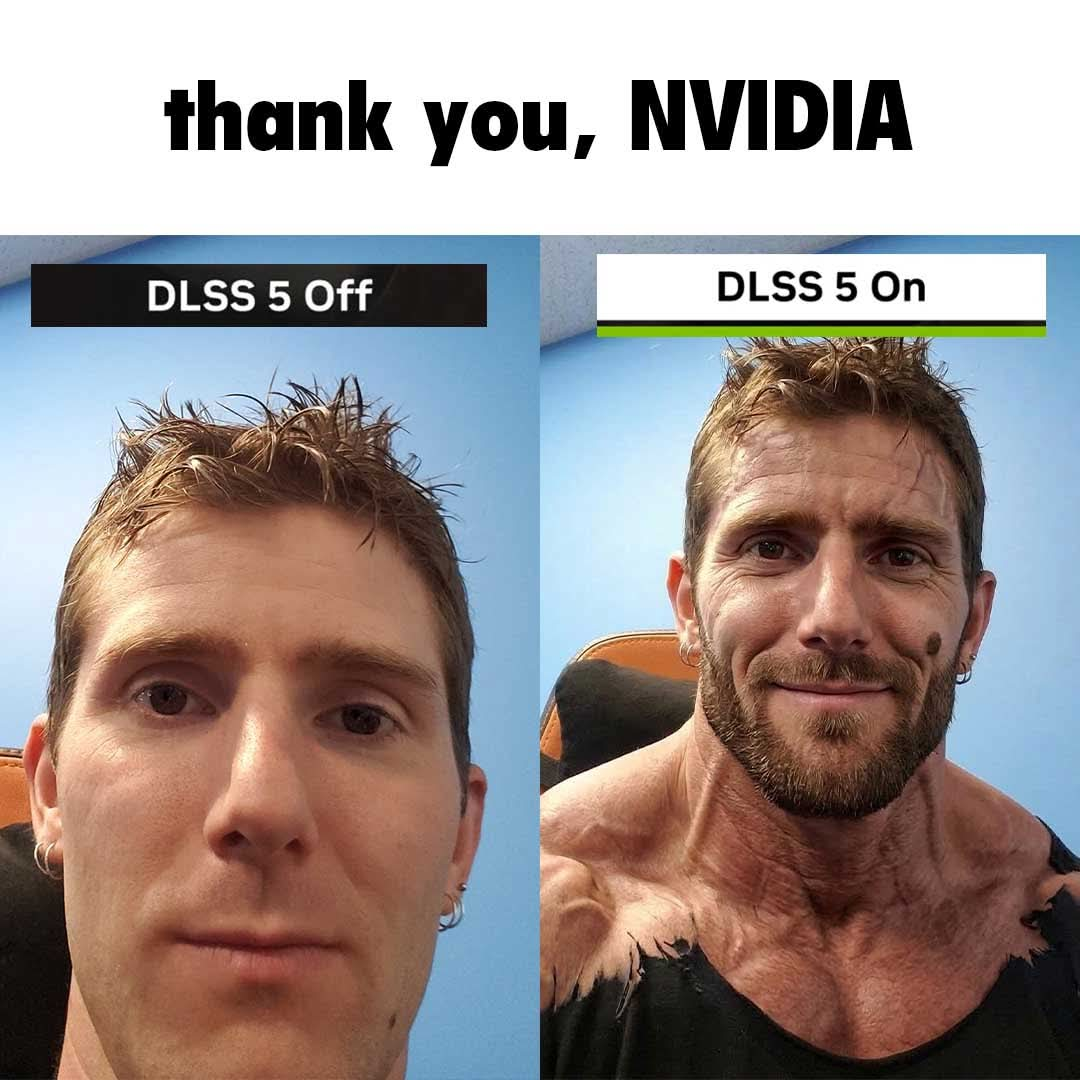

Wait till DLSS 6 or 7, it'll be what most will prefer.

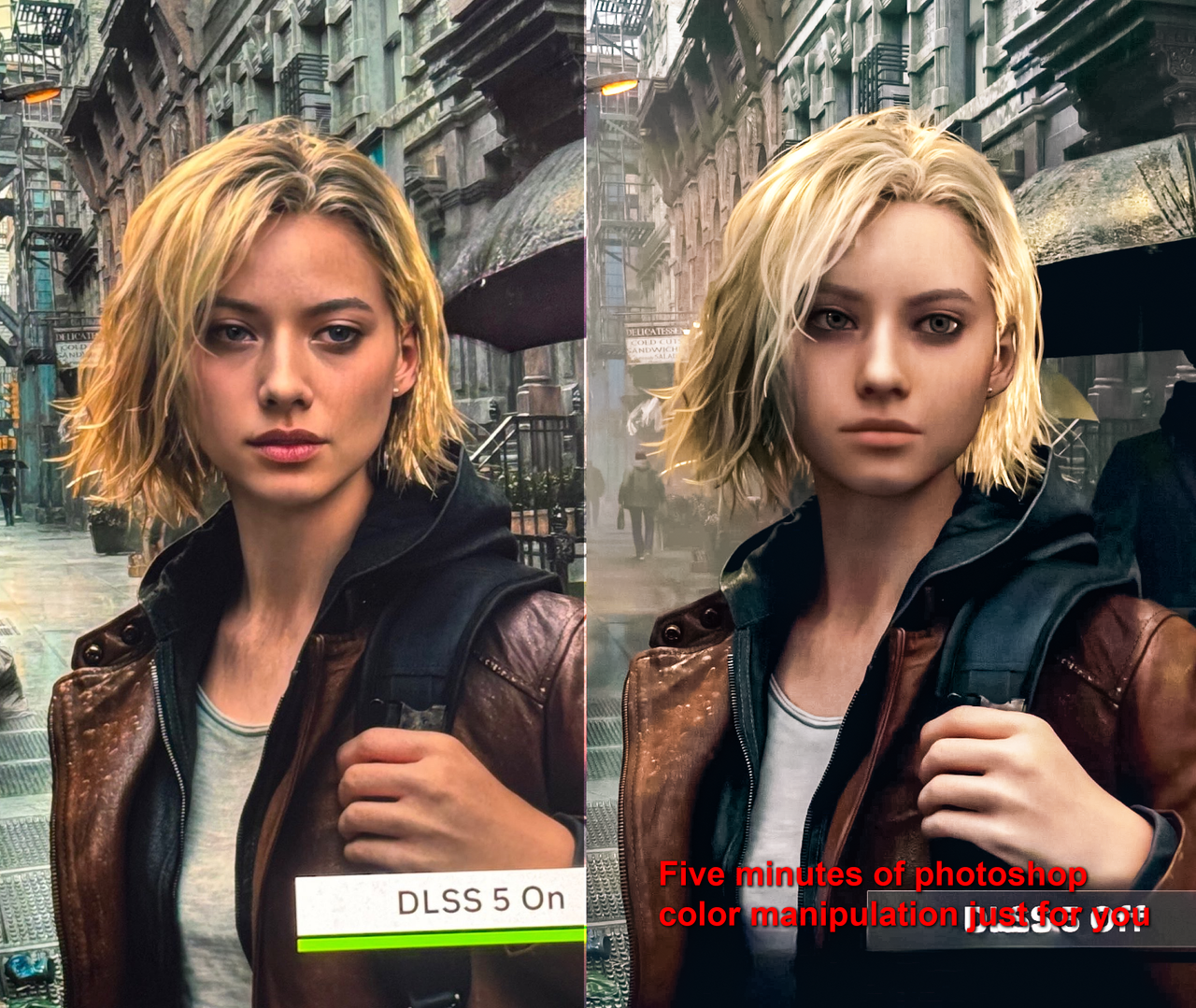

DLSS 5 is super early right now. DLSS 1 sucked, DLSS 2 was also kinda meh, DLSS 3 was a huge improvement, and DLSS 4 is almost perfect now. This has a long, long way to go, but I'm glad the technology exists and is being actively developed. Anyway, I'm positive there will be sliders to choose whether you want the GenAI filter, just upscaling, or both. Right now it's just melodrama; let it release, then get optimized at scale and finally tuned by game devs and Nvidia.

I'm waiting and will use this in 2030.
