Not a worry, this is already confirmed.

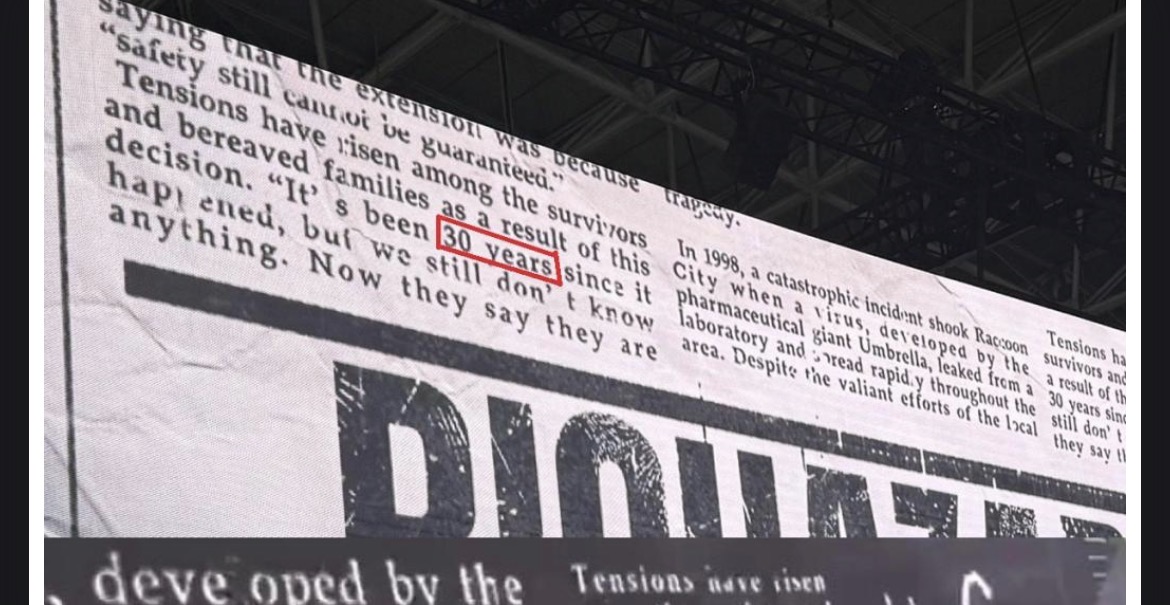

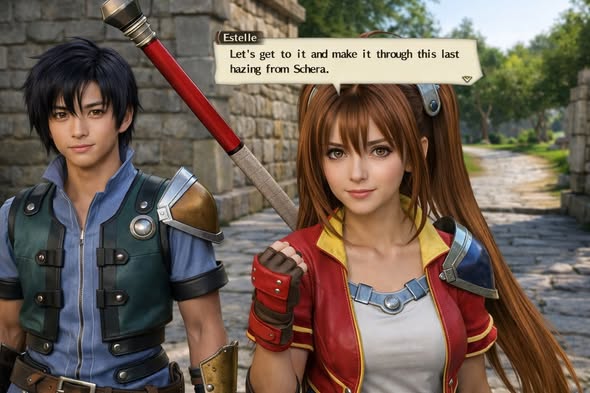

Games using the DLSS5 model are indeed about to look the same. A Bethesda game, a Rockstar one, a Capcom, a Naughty Dog, a Ubisoft, a CDPR, a From.

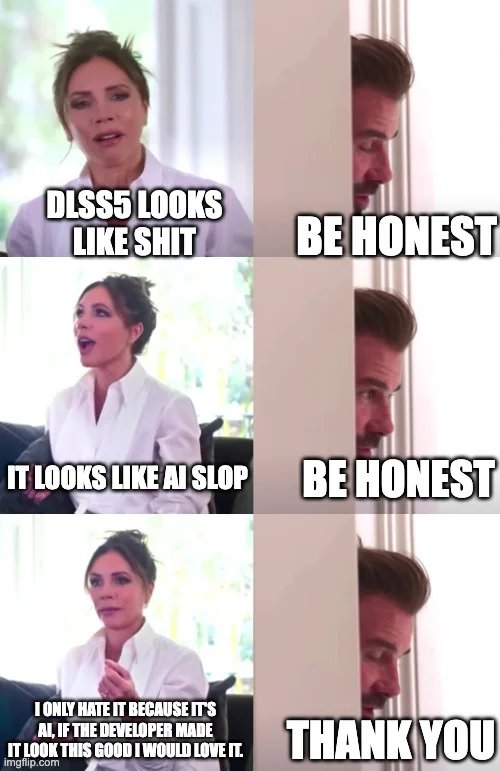

Can't actually believe so many people here are okay with this. It's a whole different story outside of these virtual walls, but I'm still very surprised.

It's not that we don't see the potential for good. It's just too hard to appreciate when it's a tiny little fraction of the catastrophic potential there is for the entire industry.