SmokedMeat

Gamer™

Anyone saying anything under $1800 for decent pc that won't be obsolete in a week is a liar.

You have no idea what you're talking about. Zero.

i'm not chasing ultra. i just want ultra textures and optimized settings. look at ps4, it runs rdr2 with ultra textures but all other settings are jank. yet you can't fit ultra textures into the buffer of a 2 gb gtx 770 or a 3 gb 1060 despite both cards being more capable than ps4, no?

> PS4 in no way shape or form runs RDR2 with "ultra" textures. Lmfao!

It does, at least according to DF.

> we will see how 10 gb cards perform at native 1440p. i'd stay away from them. yes i'm overreacting because i don't want others to make the same mistake I did. nextgen will see 16 gb 4070, 12 gb 4060 as baseline. when i skim through old forums, i see people reassuring others that "2 gb 770 would be fine, it destroys ps4". fast forward to 2017, it can't even run ac unity properly without using medium textures, which is a far cry from ps4

We'll see. I don't think it'll be much of an issue (especially considering where i see gaming as a whole going) but i do hold the philosophy of upgrading my pc during console mid-gen, so i guess i can't argue much.

> PS4 in no way shape or form runs RDR2 with "ultra" textures. Lmfao!

It actually does, RDR2 settings are all over the place.

> It does, at least according to DF.

there are no doubts, ps4 uses ultra textures. go set the game to high textures, it will look marginally worse than ps4 even if you have everything cranked up to the maximum

Now if you decide to believe them... I have my doubts, although I remember the game looking great when I played it back then on my Pro.

Hi all, in my long "career" as a videogamer I've been a PC gamer, but since the x360/PS3 era I've played only on consoles. Months ago I ordered a PS5, with the intention of buying a Series X sometime in the future too to have the full package (I already have a Switch). Anyway, the PS5 still has not arrived, and since Sony has started porting their games to PC and MS already does, I was wondering if it would be a good idea to just buy a decent gaming PC.

I haven't followed PC hardware for a very long time, so I'd like to know: how much would I have to spend for a PC where I can play "next gen" games? I'd like something to play at 1440p/medium-high details.

It actually does, RDR2 settings are all over the place.

Ultra textures are console settings, while other settings on consoles are lower than the lowest on PC.

(think how long ago Horizon ZD, Uncharted etc came out and then when they came to PC).

> Do you have a source, because I'm curious to see for myself. I used to have it on the Pro, and just find it very out of the ordinary.

DF has a video on it, and unless you think they're straight up making stuff up, the video evidence they show makes it fairly obvious.

> Anyone saying anything under $1800 for decent pc that won't be obsolete in a week is a liar.

That's not "decent" but pretty high end.

DF has a video on it, and unless you think they're straight up making stuff up, the video evidence they show makes it fairly obvious.

> I was just watching some of it and the checkerboard rendering on the Pro gave textures more of a blurred look. Digital Foundry had some still shot comparisons. The Xbox X had better looking textures because it was native 4K.
>
> Still pretty astounding, because ultra textures on consoles are something you just didn't see.

"Ultra" is just a name in the end. If the dev wants to call standard settings "ultra", that's what it'll be. RDR2 settings are particularly bad for these reasons, since ultra settings like these are the "normal", while other ultra settings are horribly optimized ones that improve almost nothing.

I won't backtrack on anything.

Including that the 10GB VRAM fearmongering is nothing but VRAM fearmongering.

Not really. A moderately good PC with mid/low end specs can play PS5/Series X games no problem.

A PC with a modern CPU, 16GB of ram and a RX6600 could easily last you this (console) generation.

ps5 will run maximum possible textures in all nextgen games. if i already experience this weird problem on a crossgen ray tracing game, I just shudder to think what will happen in future. It's not like I'm talking without owning a card. I myself own an 8 GB card. if this card cannot let me have ultra textures with ps5 equivalent settings, might as well let it burn.

> This quote ~~will age~~ has aged very poorly and we haven't even seen PC ports of true next gen games.

i've always said it, i'm neutral when it comes to pc vs consoles. i will always call a spade a spade.

Nope.

Nope. Hell, PC gamers with current gen cards better pray cross gen GoW Ragnarok doesn't implement RT, otherwise they will need to upgrade to cards with larger VRAM (not system memory) in order to have a chance of maintaining specs-to-performance parity. Even without RT it's questionable because the dev has already confirmed the PS5 version is especially dependent on texture streaming.

Whoa, sounds like you agree with what I was saying before in the Spiderman thread!

> You have no idea what you're talking about. Zero.

Lol no I only have 4 PCs, a 10gb home network, with 2 nas ( work from home as a software developer ) and have had to buy pc parts for the past 2 years.

> Lol no I only have 4 PCs, a 10gb home network, with 2 nas ( work from home as a software developer ) and have had to buy pc parts for the past 2 years.
>
> Pc parts are still expensive and with new cpus, gpus, ddr5 coming out … building a ( whole ) pc that's going to last 4 years just isn't feasible for under $1800.

If you're a software developer, no shit you'll need to keep buying pc parts all the time.

> Lol no I only have 4 PCs, a 10gb home network, with 2 nas ( work from home as a software developer ) and have had to buy pc parts for the past 2 years.
>
> Pc parts are still expensive and with new cpus, gpus, ddr5 coming out … building a ( whole ) pc that's going to last 4 years just isn't feasible for under $1800.

But none of those new gpus and cpus or ddr5 are needed to match consoles? If you want a PC that is gonna still be playing with 'ultra' settings in 4 years then sure you are going to need top of the range stuff, but in 4 years medium/high on PC will be console settings.

Nope. Hell, PC gamers with current gen cards better pray cross gen GoW Ragnarok doesn't implement RT, otherwise they will need to upgrade to cards with larger VRAM (not system memory) in order to have a chance of maintaining specs-to-performance parity. Even without RT it's questionable because the dev has already confirmed the PS5 version is especially dependent on texture streaming.

> I said playable. The PS5/Series consoles are only limited to 16GB GDDR6 shared VRAM. The CPU is fairly mediocre compared to current generation PC CPUs, with a 3.5-3.7GHz Zen 2 processor. You don't need RT for a game to be playable. Just like I wouldn't expect to enable RT on the Series S. Of course you'd be looking at the higher end of the GPU stack for RT, and PS5/Series X performance and settings.
>
> I was replying to the guy who thinks that $1800 is the bare minimum needed to run PS5/Series games, which is simply untrue. Especially now with the GPU crash and the availability of high performance lower end CPUs like the 5600, the 11400, 12400 etc that you get nowadays without breaking the bank.

Plus the ray tracing on consoles isn't even close to the level of that on PC already, going from the Spiderman thread.

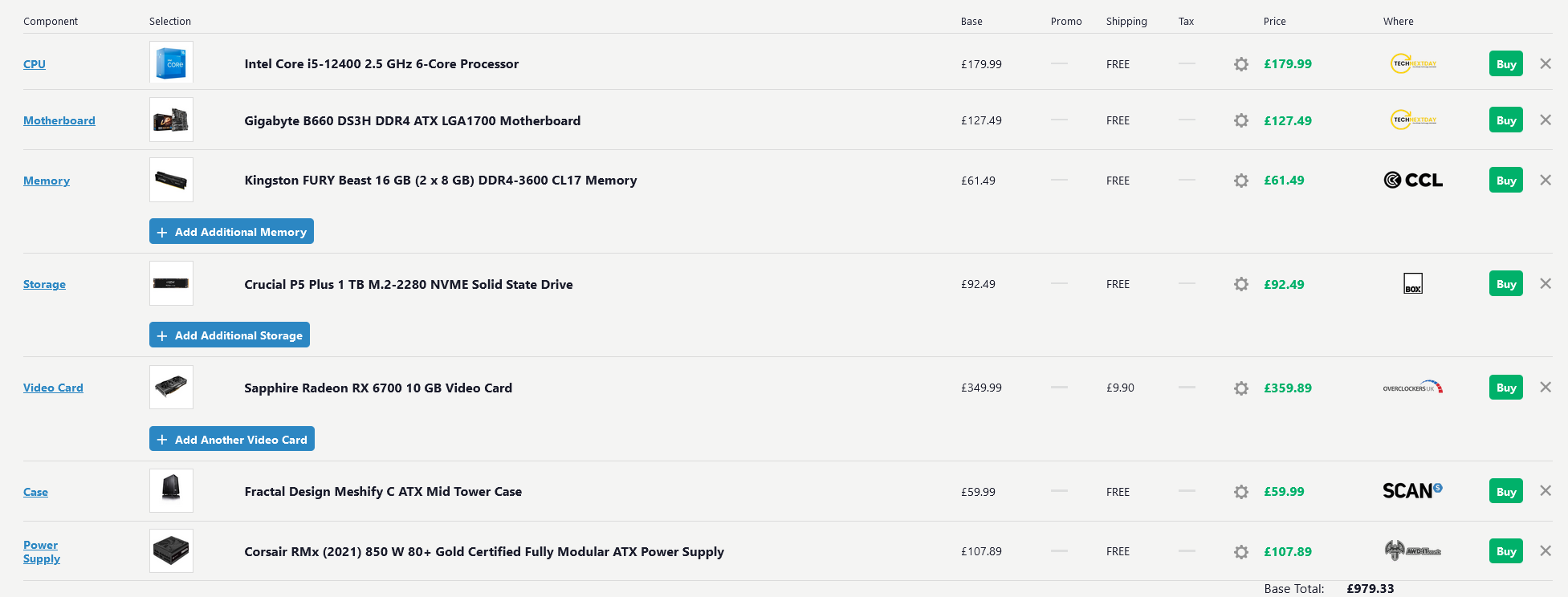

> Hi all, in my long "career" as a videogamer I've been a PC gamer, but since the x360/PS3 era I've played only on consoles. Months ago I ordered a PS5, with the intention of buying a Series X sometime in the future too to have the full package (I already have a Switch). Anyway, the PS5 still has not arrived, and since Sony has started porting their games to PC and MS already does, I was wondering if it would be a good idea to just buy a decent gaming PC.
>
> I haven't followed PC hardware for a very long time, so I'd like to know: how much would I have to spend for a PC where I can play "next gen" games? I'd like something to play at 1440p/medium-high details.

Well for a decent PC right now you would be spending roughly $1800 - $2000. While GPUs have come down in price, other components still seem to be inflated in my experience.

> Sure, prove it to me.
>
> I've seen enough benchmarks of 3080 10Gs doing 4K RT as best the chip can.

time to settle our scores. are you ready?

> Plus the ray tracing on consoles isn't even close to the level of that on PC already, going from the Spiderman thread.

Yeah, RDNA 2 Raytracing performance leaves a lot to be desired. It's behind even Turing (core for core) which is kinda bad.

> Anyone saying anything under $1800 for decent pc that won't be obsolete in a week is a liar.

This isn't the 1990s anymore. So not true.

The PS5/Series consoles are only limited to 16GB GDDR6 shared VRAM.

> ..... in addition to I/O that allows the SSD to perform as extended memory. You forgot that important detail. And before you say "SSD isn't a substitute for VRAM", VRAM to GPU bandwidth isn't what we're talking about. The data transfer we're comparing is storage to VRAM. If you can't move data in and out of storage/VRAM quickly then you need large VRAM to compensate. With Spider-Man Remastered, we are seeing how easy it is to saturate VRAM quickly as next gen features are piled up, such as RT and Ultra quality textures in this case. Next gen geometry and asset diversity will further compound this issue.

Not really relevant as most games are designed to still run on PC in all sorts of configurations, including on a hard drive.

There's hardly any games out there that actually require the I/O of an SSD, never mind a NVMe.

There isn't a magical GPU hidden underneath those SSDs

it isn't going to work the way you envisioned it.

For I/O needs there's DirectStorage for DirectX, and RTX IO

AMD probably has an equivalent in the works.

only in short bursts of play time. once you swing around a bit, 8 gb becomes a limiting factor and causes huge performance slowdowns. naturally alex won't have the guts to expose this. as you can see, even here people are in heavy denial even against solid evidence

watch 44:14. see how the gpu is vram constrained with ps5 equivalent ray tracing settings. once he sets textures to high (worse than ps4 in terms of texturing detail) it locks to a rock solid 60. this is solid evidence that the 3060ti has the grunt to run native 1440p 60 fps. it can't do so with very high textures because it runs into the VRAM limit. simple as that.

if high textures did not look hideous, I could cut it some slack. but they're hideous. they're beyond how the game looks on a ps4

> In any case.. going way off topic. This isn't a PC vs console thread.. it's how much a PC would cost that could last a generation of games. Not system wars or whatever this is.

It's also worth mentioning OP made up his mind long ago already...

> Would a 12gb 3060 solve the problem?

it does

> It's also worth mentioning OP made up his mind long ago already...

That's true lol. Must've missed it before. But yeah settling for a console rn is probably a wise bet considering that next generation hardware is right around the corner.

Acting like the SSD i/o is the be-all and end-all is just silly.

Once we get optimisations from future engines and APIs and better handling of DirectStorage we should see much better i/o on PC. Atm, it's just dealing with a few streaming issues and hitches.