analog_future

Resident Crybaby

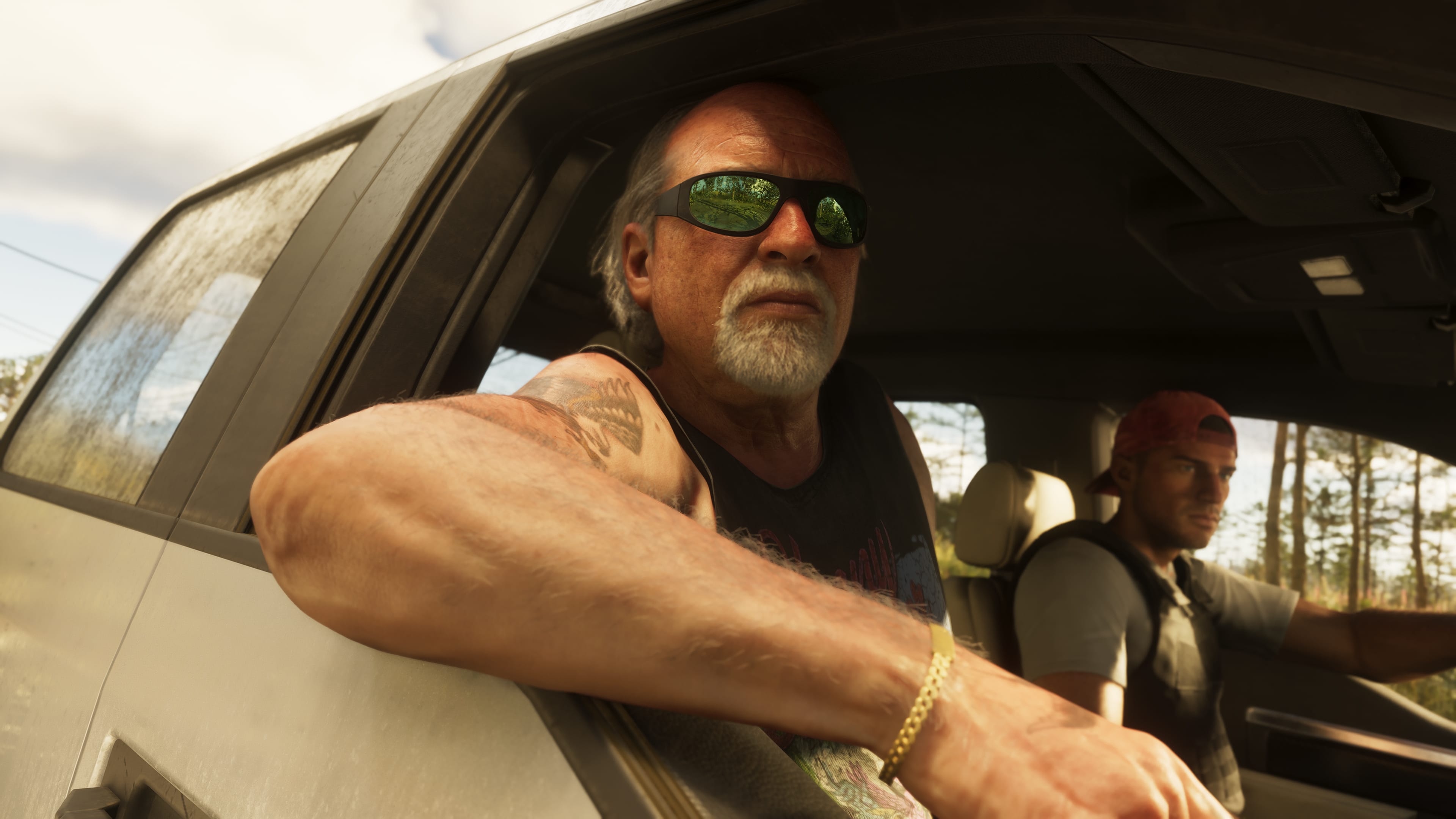

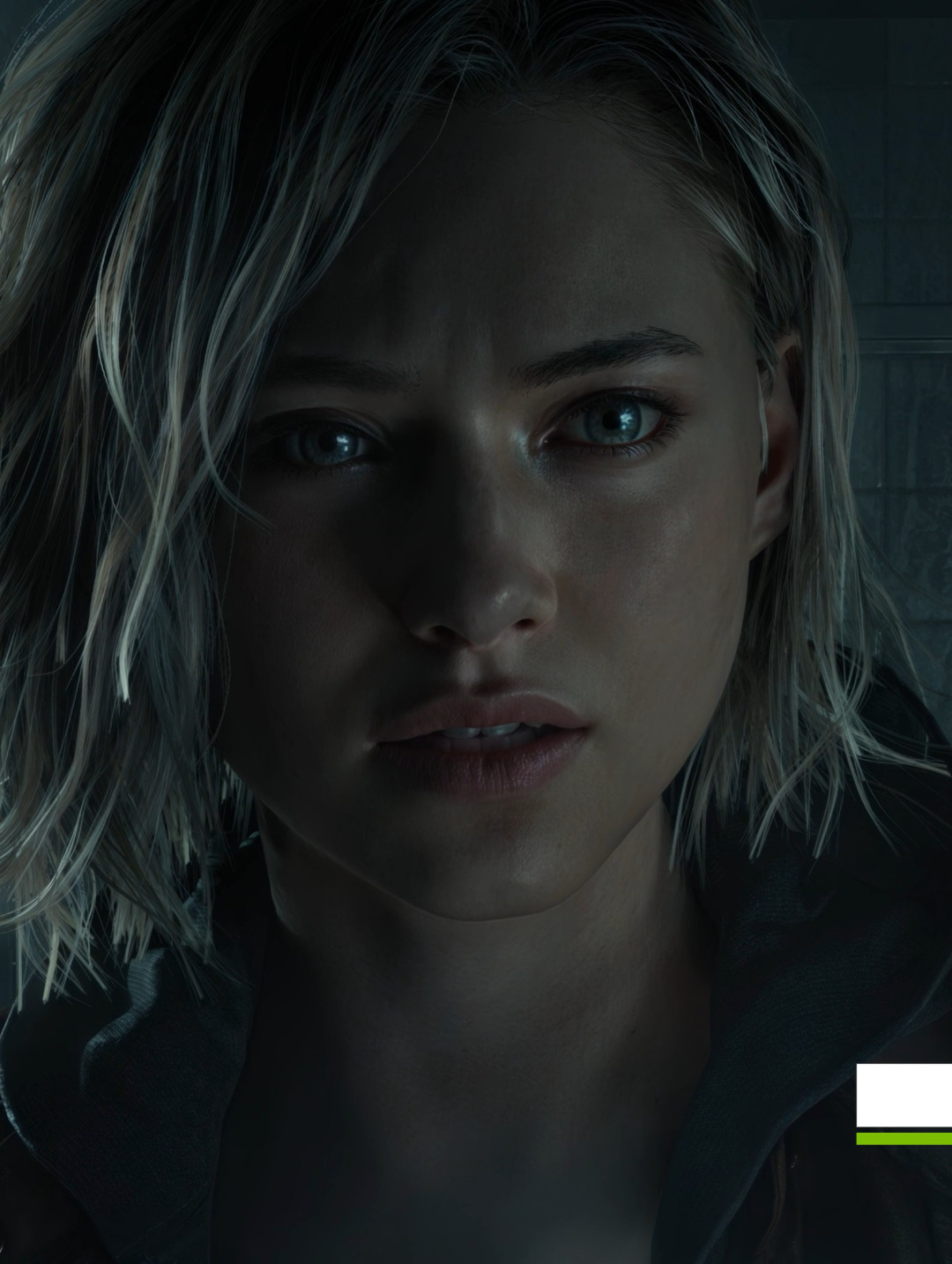

That top pic is from Love, Death & Robots, right? So it was rendered offline. It does look more convincing than Grace to me, but Grace was done in real time. And today is the first time we've seen the DLSS 5 tech, which is only going to improve.

In film, yes. In games, no.

Also, in movies you have artists making sure the CG facial animations are accurate. There is a reason why these CG movies cost $200 million. A lot of work goes into it.

Starfield NPCs have zero work put into their facial animations. AI is going in there with the photorealism filter, but it cannot change the facial animations, or rather add them. Hence the uncanny valley effect, which creates a disconnect that makes our brain simply reject it as fake.

The few cutscenes they showed of Resident Evil looked ok. No issues whatsoever, because Capcom animators mocapped and likely hand-keyed the facial expressions.

The point I'm making is that the Twitter dude is trying to claim these faces look "bad" to so many people because we're not used to seeing faces in such high fidelity and our brains don't know how to process it. That is patently false.