BloodFree

Member

> Lol you can't be serious.

Elaborate. What is not matching in my edit other than the face filter and the rim light?

You don't even need my bootleg edit to judge, here it is done better:

> They're more comparable than before when the values match up. I never said they were the same.

They aren't even close.

> Yes, it doesn't look like AI deepfake slop.

Yeah, it's just normal good old talentless video game artist slop.

Thanks.

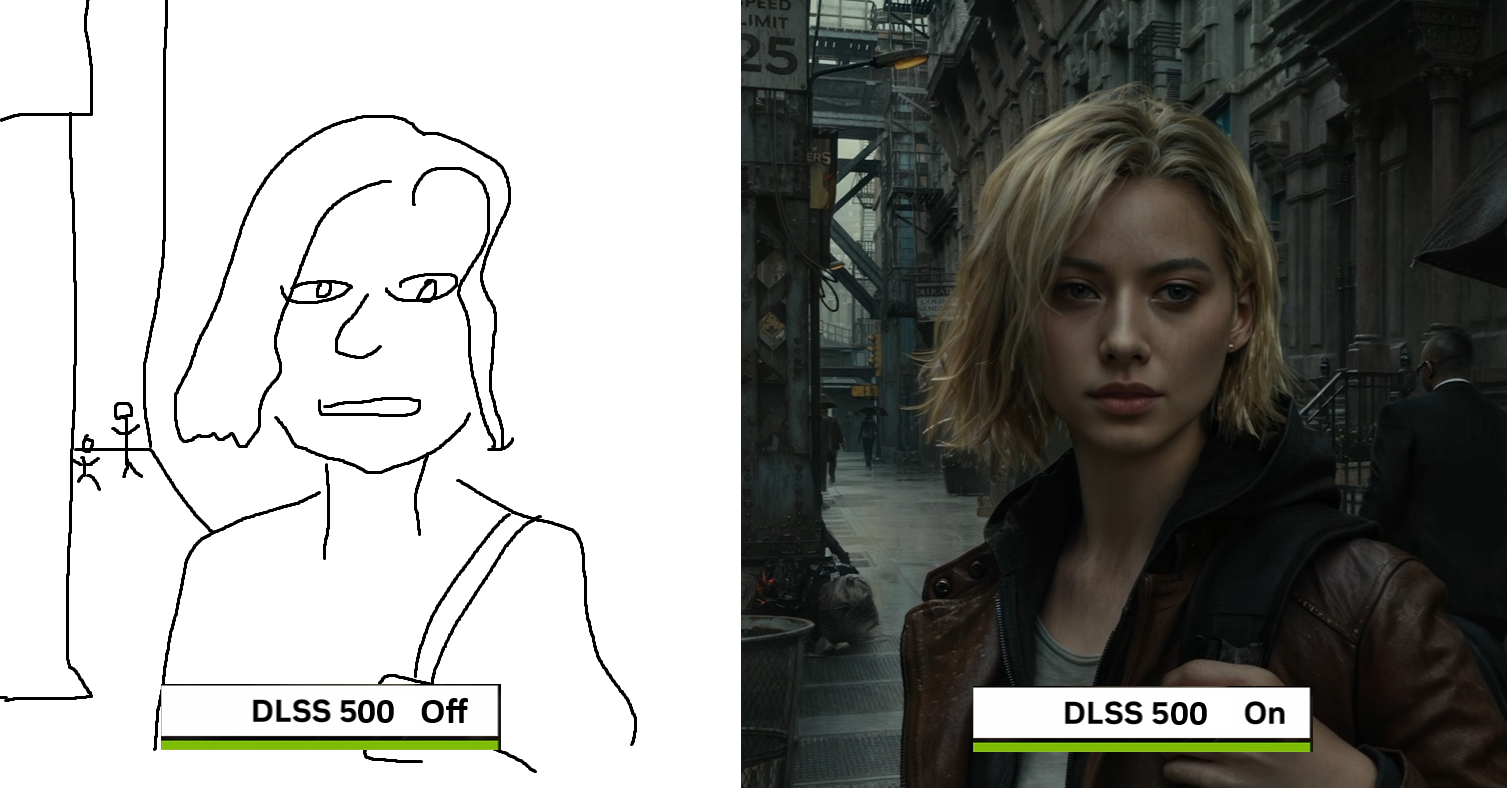

> I like how all the DLSS Off pics are all super dark. lol

It's fucking disingenuous as shit lol. But it's not even the DLSS off pics being the issue, it's the DLSS on pics that are "wrong". Nvidia just pumped up the contrast and that makes a HUGE difference in getting something to "pop". Sure there's other differences, but if you eliminate the color adjustments, it's a lot closer than it seems.

I absolutely hate DLSS 5. I will never use it, nor will I ever buy any piece of hardware that supports it - and I will tell all my friends to do the same.

This is such bullshit, really.

What if they just ignore you and buy the hardware anyway?

Reminder of what the "limitations of the current technology" and the "last extra mile" can look like right now in gaming, the culmination of decades of progress, without the assist of GenAI rape.

But yeah, let's endorse the one thing that would objectively kill gaming as we know it, while standardizing the output of every single studio out there regardless of skills, sensibilities, talent and artistic merits.

> They aren't even close.

What's different other than what I already acknowledged in my original post? Yes, the colors don't match exactly; I'm not a professional color grader, and getting a 100% match is a pointless effort anyway in proving the point.

> The one on the left looks 2 generations better.
>
> Not even remotely close.

If Capcom wanted her model to look like that AI Girlfriend ad, they would have made it look like that.

What's different other than what I already acknowledged in my original post? Yes, the colors don't match exactly; I'm not a professional color grader, and getting a 100% match is a pointless effort anyway in proving the point.

Are you saying my edit is FURTHER apart than the original DLSS OFF image that I quoted? If the answer is no, it's closer, then I have proved my point. The goal is to eliminate the difference in image levels.
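For anyone who wants to try the "eliminate the difference in image levels" idea without eyeballing it in an editor, here is a rough sketch. It is not what was done for the edit above; it assumes each screenshot has been reduced to a flat list of 0-255 luminance values, and `match_levels`, `mean_abs_diff` and the sample numbers are all made up for illustration.

```python
from statistics import mean, pstdev


def match_levels(source, reference):
    """Linearly remap `source` so its mean and spread match `reference`.

    Both arguments are flat lists of 0-255 luminance values, a simplified
    (hypothetical) stand-in for real image data.
    """
    src_mean, ref_mean = mean(source), mean(reference)
    src_std, ref_std = pstdev(source), pstdev(reference)
    # Gain acts like a contrast adjustment; guard against a flat source.
    gain = ref_std / src_std if src_std else 1.0
    matched = []
    for value in source:
        # Re-centre on the reference mean (brightness offset).
        remapped = (value - src_mean) * gain + ref_mean
        # Clamp to the valid 0-255 range.
        matched.append(min(255.0, max(0.0, remapped)))
    return matched


def mean_abs_diff(a, b):
    """Average per-pixel distance between two equally sized frames."""
    return mean(abs(x - y) for x, y in zip(a, b))


# Made-up sample values: a darker, flatter frame and a brighter,
# higher-contrast frame.
dlss_off = [20, 40, 60, 80, 100]
dlss_on = [40, 85, 130, 175, 220]

matched = match_levels(dlss_off, dlss_on)
before = mean_abs_diff(dlss_off, dlss_on)
after = mean_abs_diff(matched, dlss_on)
```

In this toy data the "on" frame is an exact linear remap of the "off" frame, so matching levels removes the whole gap. With real screenshots, whatever distance is left in `after` is the part that brightness and contrast alone do not explain.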

> Yeah, path tracing is the true future of visual fidelity. Most exciting breakthrough in years, and makes games genuinely appear a generation ahead.

Indeed. We were this close..

> If one person is 1000 miles away from their destination and the other person is 999 miles away from their destination, relatively speaking one is not much closer than the other to their destination. Comparable in the same realm to the original non-DLSS5 version vs your edited one: they aren't very different when compared to the DLSS5 version.

999 (lol at that value comparison, are these still 999: https://slow.pics/s/vatet6Fp , but I'll grant it) is still closer to the destination than 1000 by basic math. We're done here. My edit is closer per your own admission.

> 999 (lol at that value comparison, are these still 999: https://slow.pics/s/vatet6Fp , but I'll grant it) is still closer to the destination than 1000 by basic math. We're done here. My edit is closer per your own admission.

Yes everyone use reshade because it is .1% closer. It's basic math. lol

> Indeed. We are this close..

FTFY. These are but minor detours

> Yes everyone use reshade because it is .1% closer. It's basic math. lol.

Well I can use made up values too. I think my edit is 400 miles from the destination instead of 999. Point is and was that one is closer than the other. You admitted it is so.

> what? I dont understand now....what is a filter? what is a post processing here?
>
> I thought it still have wireframe with its own animation routines computed by the cuda cores. The NTC is supposed to provide better than conventional texture quality on these skeletal meshes, why need another layer of filter?

You need to stop using ChatGPT. All textures are sampled during rasterization / modified via shading. Those textures are compressed with NTC. (Textures can feature albedo, normal maps, roughness, whatever else.) This allows a nice high quality base image to be composited, but there is only so much high resolution textures will give you; there are additional lighting passes, post processing etc. done on the composited image. However, this image will still have game-like lighting and other compromises that a texture alone cannot fix.

Then the entire image as a whole is fed into the upscaler, which samples the image and generates new pixels, throwing away all of that texture detail from the rasterization. They are different stages of the image generation, and the highest res textures you can imagine are not enough to give the scene more quality than the lighting and art direction provide.

This is mixed AI rendering, best of all worlds

Can a game ever be majorly rendered by the tensor cores?

> Dynamic mode

Hate, hate, HATE dynamic tonemapping. It ruins so much content, and is part of the reason why HDR implementation is so inconsistent across the industry. I posted these on AVSForum last year to showcase why dynamic tonemapping sucks balls to folks who wouldn't listen, may as well share them here too. Below are two pictures I snapped of a night scene in The Division 2 on a Bravia 9, with exposure locked to be consistent for both photos. First photo is with dynamic tonemapping kept off:

For real. Like the laziness with raster now for those who rely on RT. Flat blank textures, no SSR or cube maps, or even crafted light source placements.

I'm looking at you, Remedy.

At least Insomniac provides good raster still even without RT. For now.

If Capcom wanted her model to look like that AI Girlfriend ad, they would have made it look like that.

This is the amount of skin detail in the game..

You're supposed to believe they would have had trouble giving her eye bags and fuller, redder lips if they wanted to?

But especially what you see in that shameful comparison is the Path Tracing character rendering completely smoothing out all detail originally there and removing all the subtle lighting nuances and texture detail present in the other rendering modes.

It's a laughably flawed comparison to begin with.

And speaking of laughs, LOL at all of those preferring that puke-inducing altered image version. I remember reading Represent. unironically using Dynamic mode on their panels, it all makes sense now.

> Hate, hate, HATE dynamic tonemapping. It ruins so much content, and is part of the reason why HDR implementation is so inconsistent across the industry. I posted these on AVSForum last year to showcase why dynamic tonemapping sucks balls to folks who wouldn't listen, may as well share them here too. Below are two pictures I snapped of a night scene in The Division 2 on a Bravia 9, with exposure locked to be consistent for both photos. First photo is with dynamic tonemapping kept off:
>
> And here's the same scene with dynamic tonemapping on (Sony calls it Brightness Preferred):
>
> Everything in the scene "pops" but it looks like dogshit to anyone not suffering from moon brain. Dynamic tonemapping has zero context for how scenes are established by talented artists and will over-brighten everything.

Yep, dynamic tone mapping on TVs is doo doo for gaming. HGiG is the most accurate with what the developers intended and is processed internally by the console/GPU itself.

I had a similar thought earlier today:

Purposely playing most games in Vivid mode, 30fps on my OLED.

Not giving a shit about performance at all

I play everything on Vivid. Shit looks way better and is perfectly smooth.

This game needs to be seen on Vivid mode.

This is how the game looks on my TV, in vivid mode.

Bruh. Stop listening to guys like DF and TV manufacturers and just try it for yourself

Do this test right now.

Go turn on Horizon burning shores in 30fps mode. First try it in game mode. Then, switch to vivid mode.

Tell me it doesn't look SIGNIFICANTLY better in vivid mode.

This goes for LG OLED tvs. I have no say on other tvs

> <snip>

Oh lord, I see now that I was trying to argue with an insane person. That checks out I guess.

> For real. Like the laziness with raster now for those who rely on RT. Flat blank textures, no SSR or cube maps, or even crafted light source placements.
>
> I'm looking at you, Remedy.
>
> At least Insomniac provides good raster still even without RT. For now.

In the same week we heard more about people talking about how studios/developers are going to have to get better with optimization and performance due to the nonsense with PC components. Which is a great thing, especially after the kind of performance we've seen over the last couple of years. Then we get this. You can best believe some studios are going to take advantage of this so that it does a lot of the "heavy lifting." I actually find it pretty comical that Bethesda and Ubisoft are talking it up so hard, because of course they are.

> I don't understand the meltdowns. This is technology in its infancy that will obviously get better as DLSS did. It's going to be optional as DLSS is. It's going to be niche and super expensive. What am I supposed to be mad about?

You're supposed to say the line: "AI Slop". All the cool kids are saying it.

> You're supposed to say the line: "AI Slop". All the cool kids are saying it.
>
> You want to be cool, don't you?

Stop gargling Huang's AI balls, he's already a billionaire. The demo looked like shit.

> And it will run like ass.

well at least nothing changed.

This is the best one yet.

I just have this worry that games are all going to start looking the same. It's like looking at a wall of AI-generated images on Pinterest. They were each generated by a different user who applied a different set of prompts, but because they're all being fed through the same pipeline they all start to take on a similar look.

Even with the examples nvidia used yesterday, RE9, Hogwarts and Starfield all start to kind of look like they're using the same game engine, even though they aren't.

Look at these characters' faces, they look like they could have been taken from the same game, because they have the same post processing applied by DLSS. There's no identity.

Just generic, homogenous slop. Just not a fan of it.

> I just have this worry that games are all going to start looking the same. It's like looking at a wall of AI-generated images on Pinterest. They were each generated by a different user who applied a different set of prompts, but because they're all being fed through the same pipeline they all start to take on a similar look.
>
> Even with the examples nvidia used yesterday, RE9, Hogwarts and Starfield all start to kind of look like they're using the same game engine, even though they aren't.

Not a worry, this is already confirmed.

> It's fucking disingenuous as shit lol. But it's not even the DLSS off pics being the issue, it's the DLSS on pics that are "wrong". Nvidia just pumped up the contrast and that makes a HUGE difference in getting something to "pop". Sure there's other differences, but if you eliminate the color adjustments, it's a lot closer than it seems.

I also cannot stand this trend of not hating bullshots anymore (sorry, but this trend of images turning into soup or having plenty of visual artefacts when the camera moves can be best summarised by calling them bullshots… most of these examples have a still camera or have artefacts at the edges of the screen).