
Jensen Huang says gamers are 'completely wrong' about DLSS 5 — Nvidia CEO responds to DLSS 5 backlash

- Thread starter IbizaPocholo


- Opinion Business Platform

The CEO says artistic control remains with developers. (www.tomshardware.com)

rip

Yeah, this is exactly what I said.

It's mind-blowing tech and it's pretty lame to pretend not to see the potential.


General Lee

Member

People are misguided in thinking the tech is coming up with a new face when it's just changing the lighting. The photorealistic look is off-putting to people who aren't used to it and who expect video game characters to look stylized and cartoony. And that's the only valid criticism I see with the tech: photorealism in a stylized game pushes it into uncanny valley territory, and people react to that with instinctive disgust.

Nvidia could've avoided all this backlash without changing anything, just by being a bit more selective and not picking the most AI-sloppy-looking result as the headline showcase. Despite Grace looking like your average AI-gen 1girl, the tech isn't actually doing the typical image-to-image diffusion that genAI image generators do.

Considering this won't come out until fall, and will likely be limited to Blackwell cards, most people can't experience it on their own and will just keep repeating the AI slop line. There's a lot of hatred out there toward AI and Nvidia currently, and some have pretty much made it their life's purpose to spread the message on social media. No matter what form it takes, AI = evil to these people. Facts won't matter over their feels. AI has come to destroy their jobs and their entertainment, so they must attempt to destroy it first. They're suffering from AI derangement syndrome.


majestix1988

Member

Well, he pushed AI things, so the sloppy thing to do is counter its AI-powered stupidity.

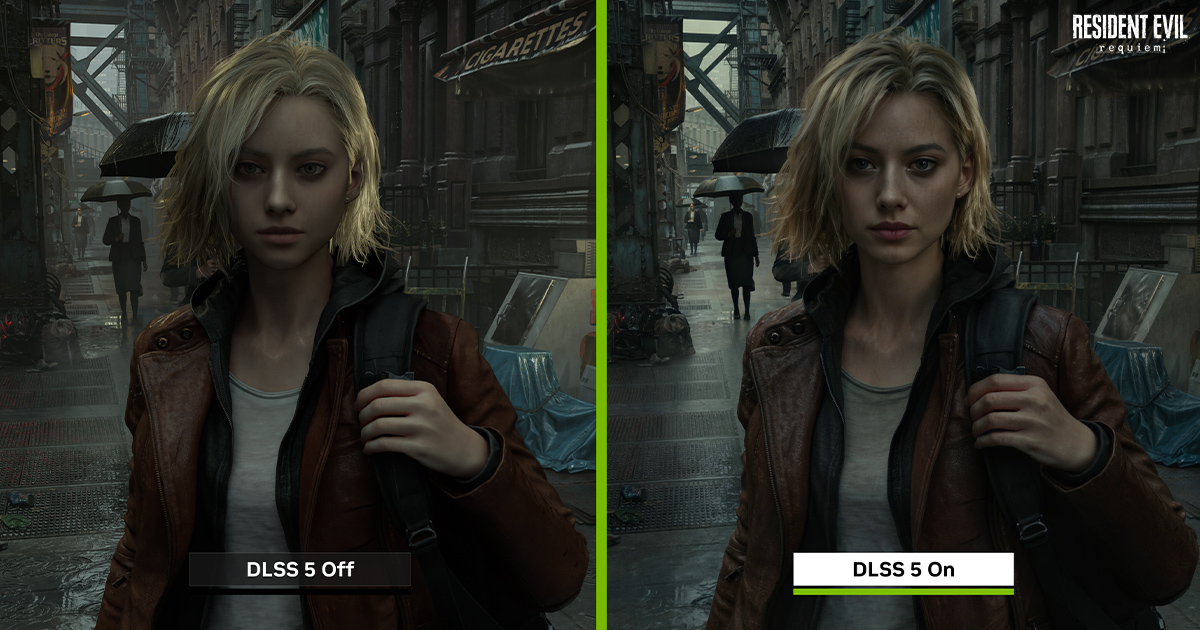

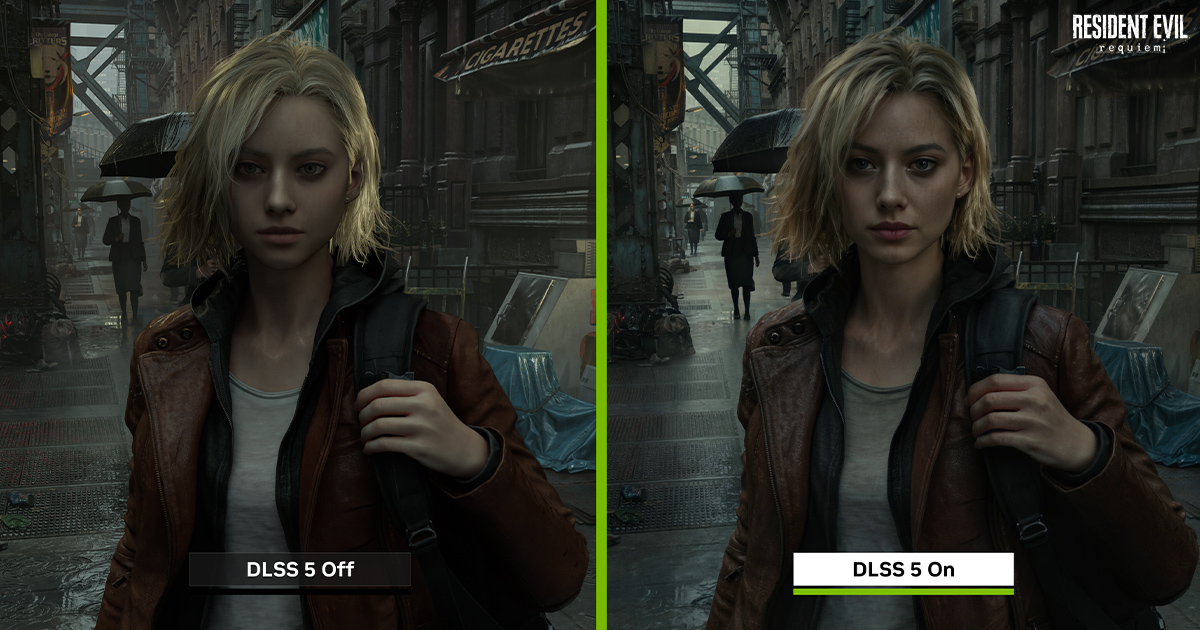

I disagree with that, and the thing that proves you're wrong there is that this didn't get a similar reaction:

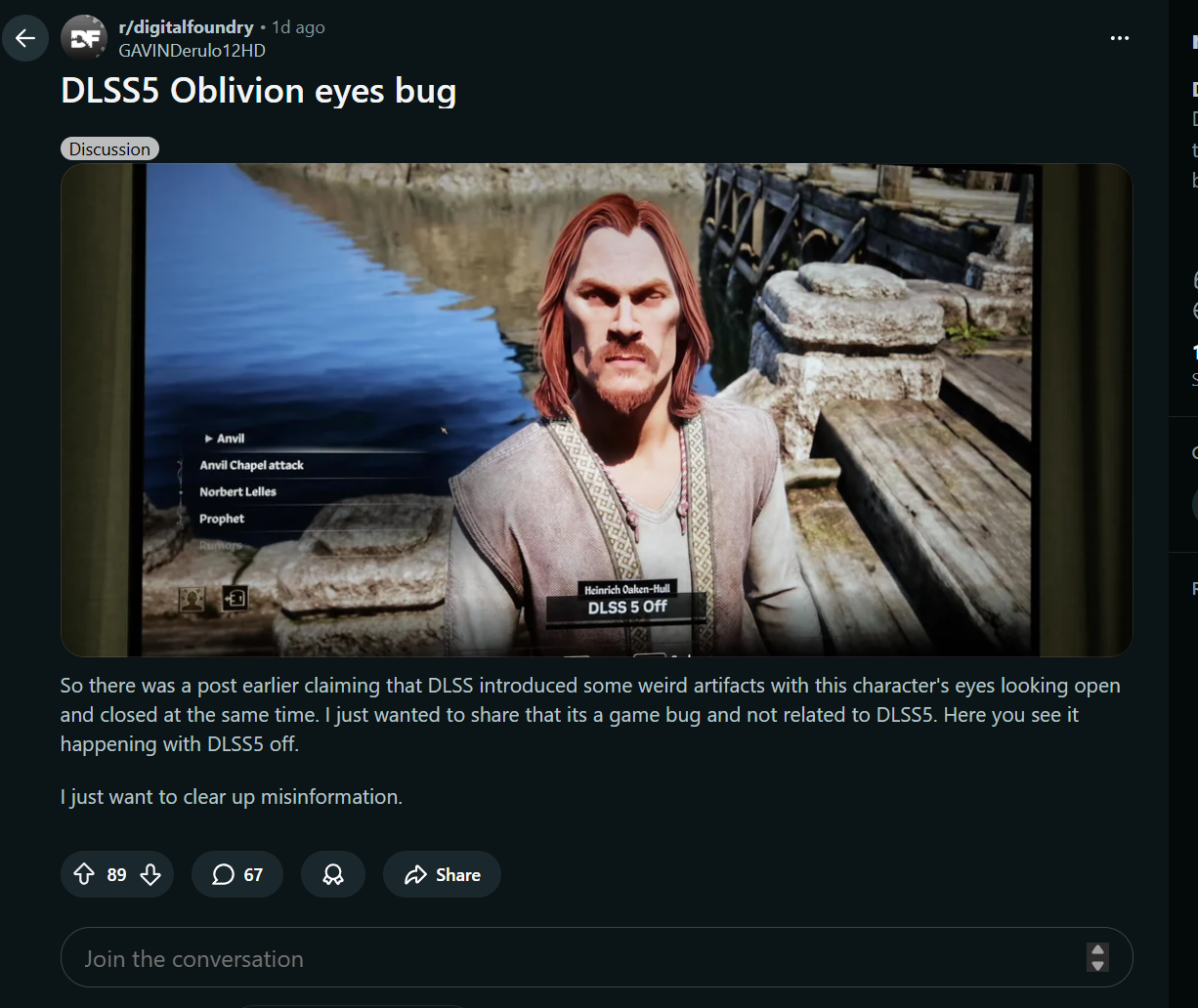

It's not just a lighting change. You can very obviously see that's not true from the demo where the guy's eyes are completely wrong.

How can you claim that people are "misguided in thinking the tech is just coming up with a new face when it's just changing the lighting" with this as evidence?


General Lee

Member


The eyelids clipping through the model is an issue that's visible in the non-DLSS 5 video too. It's not the proof you're looking for. The added lighting simply makes it more visible.

There's also one other shot from the FC game with the football breaking up in front of the guy. That's an issue with the frame gen not keeping up with the fast movement.

People on average have zero technical insight into any of this, and are making claims they aren't able to back up.

That's absolutely horseshit. This is the side-by-side of it replacing the face:

This is not just replacing lighting. You have not been able to back up your claims at all. There is a literal side-by-side showing you that it affects more than lighting.

General Lee

Member


What is it you think it's changing besides the lighting? Lighting has a deep effect on how a human face ends up looking. Basic photographic lighting principles apply, and light behaves according to the laws of physics. Artistry comes from how you light the scene, and that is within the developers' artistic control.

Take, for example, this Grace shot with her cutscene model.

NVIDIA DLSS 5: Resident Evil™ Requiem GeForce RTX Comparison: NVIDIA DLSS 5 On vs. NVIDIA DLSS 5 #002


www.nvidia.com

What is changing there besides the lighting? There are no lip fillers, no bigger eyes, no nose job, etc., which is what some people claim is happening. The ML model changes the lighting, and that affects the subsurface scattering of the skin, making it more photorealistic.


Xtib81

Member

He's right though. People jump the gun because they hear the word AI.

The downfall of a company starts when they think their customers are wrong.

cormack12

Gold Member

I think the ginger Jesus eyes have been proven to be a still from when the character was blinking. Obviously blinking isn't time-synced across every game, but someone captured the same behaviour using DLSS X.x as well. Plus, this is all off-screen footage, so you're likely to capture any TV artifacting as well.

I think we need to wait for raw footage.


SmokSmog

Member


You can apply this to most discussions about modern gaming tech. People are unrelentingly stupid.

You're using a lot of big words and asking someone to actually take a second to process information, think critically, and evaluate the evidence in front of them. You can't expect that from people.

Kokoloko85

Member

Serves you farts right.

You gave this overpriced company money for graphics, and now he tells you what's right and wrong.

There is little difference in that image because the source is pretty clear. When things aren't very clear, though, generative AI can see things a little differently. It's very subtle, but look at the guy's chin change when things aren't so clear.

General Lee

Member


Nothing is changing about the geometry of the face. In the non-DLSS shot you're simply not perceiving the normal map differences, so the chin ends up looking flatter. The lighting accentuates the shape of the chin. I think it should be obvious at this level of minute detail that the model does not change the shapes of the face in any way; the lighting simply changes how the viewer perceives it.

I'm not going to repeat myself on the matter any further; anyone bothering to look into it can do so on their own. I don't have a horse in the race, I'm just pointing out what I think are the correct, factual reasons why things look the way they do, instead of deriving them from AI = slop logic.

YeulEmeralda

Linux User

Our customers are wrong!

Branded

Member

Nvidia no longer caters to gamers, DONTCHAKNOW?

cripterion

Member


Dunno what's up with the Oblivion eyes; could be a bug due to DLSS or another one of Bethesda's. Either way, it's something to iron out.

People have also been critical of the FC football footage, but analyse the non-DLSS bit frame by frame and you'll find the same faults, so something else is at play there.

What do you expect him to say?

"This age of AI is the age of abundance, so free GPUs in 2026 for all!"

It looks daft in the original when he blinks too, but you can obviously tell this is not "just lighting":

You can actually see it replacing the face. That's not what clipping would look like either.

intheinbetween

Member

Can't wait for AMD and Sony to jump on this with their own approaches, which will most likely be inferior. How many people will change their stance on this then, lol.

I'm clearly seeing his chin become asymmetrical because the AI isn't perceiving it properly. You can clearly see the right side of the chin change shape.

OK, put your money where your mouth is. How do you think this works to "change lighting"? Do you think it operates on the rendered output image or somehow on the 3D scene?

uncreativename

Member

That's the thing, though: AFAIK, no one outside of NVIDIA really knows how it works.

When I first saw the news about DLSS 5 I did some googling, hoping to find an in-depth explanation filled with programmer jargon, like what the Khronos Group publishes when they make a big update.

I didn't find anything. Now, I might be wrong, but that makes me really skeptical of all their claims that developers will have tons of control over how DLSS 5 works. If that were the case, why not prove it? Even Jensen is being extremely vague about how it works. Until I see otherwise, I'm going to believe that this is mostly a closed technology, and that the depth of choice compared to what developers were doing before will be like an artist using MS Paint (DLSS 5 in this metaphor) vs. GIMP or Photoshop.

deeptech

Member

People are mad this thing exists for absolutely no reason. There's no need to use it if you hate it, and hardware-wise you probably won't even be able to. Like, what are you doing here? Nobody wants your trash opinion; DLSS 5 isn't stealing your candy, brainlets. You look like impossible losers trying to convince others it's just slop. I'll see for myself when it comes out.

JerryinSoCal

Member

He's way over the top on the artistic stuff. These are consumer products being made to sell to people for entertainment and enjoyment; they aren't paintings or sculptures where only one gets made. This is a tool to help developers, and they get to dictate how it's used, if it's used at all. We'll see how it all shakes out in the end, but I'm getting a bit annoyed by the whole "art" thing when talking about games.

If it's so tuned into the geometry of these game engines, then I'd like them to show an example where the character turns away from the screen and back without the face morphing or changing like an Instagram filter.

Even graphical features that use the camera/player perspective to fake geometric complexity (e.g. parallax occlusion mapping), and that are tied to the core of game engines, have artifacts when they get challenged by other parts of the engine or by acute angles.
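For anyone wondering why acute angles are the weak spot, here is a minimal Python sketch of simple (single-step) parallax mapping, a simpler relative of parallax occlusion mapping. It is a textbook-style illustration, not any engine's shader; the function name, the tangent-space convention, and the `scale` value are assumptions. The key term is the division by the view vector's normal component, which heads toward zero as the view approaches the surface plane.

```python
import math


def parallax_offset(view_dir, height, scale=0.05):
    """Return the UV shift used by simple parallax mapping.

    view_dir: tangent-space (x, y, z) vector from the surface toward the eye,
              with z along the surface normal (assumed convention).
    height:   height-map sample in [0, 1].
    scale:    hypothetical artist-tuned depth scale.
    """
    x, y, z = view_dir
    length = math.sqrt(x * x + y * y + z * z)
    x, y, z = x / length, y / length, z / length
    # Dividing by z is what lets a flat texture fake depth, and it is also
    # why the shift explodes as the view approaches the surface plane (z -> 0).
    return (x / z * height * scale, y / z * height * scale)


head_on = parallax_offset((0.0, 0.0, 1.0), height=1.0)
grazing = parallax_offset((1.0, 0.0, 0.01), height=1.0)
print(head_on)  # no shift when looking straight at the surface
print(grazing)  # roughly a 5-unit shift, far outside a 0-1 UV tile
```

Practical variants (offset limiting, ray-marched occlusion mapping) exist largely to tame this term, but silhouettes and very shallow viewing angles remain a known weak spot for these screen-facing tricks.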

"I deeply emphasise with people..."

Anyway, the problem is most people don't care about the journey, they care about the end result. The average person loves ultra-high-contrast ReShade filters, Vivid/Dynamic picture modes, and maximum motion smoothing on TVs.

You have most people now just taking Google Search AI answers as truth without even registering that there are massive contradictions in the answer. They just read it and go: that's the answer.

I asked Google yesterday when it thought the "Switch 2 successor" would come out, and no matter how many times I rephrased the question, it kept talking about the Switch 2, saying things like "It will come out in late 2025, midway through the PS6 cycle", because that PS6 bit was part of my question.

Even if this is going to have the mad artifacts of video filters and the general AI-sloppy look, people will love it. They want to see "perfect" overtuned bullshit that makes no sense when you consider it for a second, because they don't want to think at all.

I hate this in its current form.

Bingo.

DenchDeckard

Moderated wildly

Idon'tbelieveyou.gif

But show us. If it looks better and the artists are doing it, we will come around.

Disciple of MSSP

Gold Member

Hear you.

What does an AI company know about gaming?

I suspect it is like Project Genie, but instead of using one reference image and then generating subsequent frames based on past ones, it uses the underlying render as the reference between frames. It seems to interpret the eyelid clipping as painted eyelids, so it's probably doing it over several frames too. That's the same reason you get occlusion artifacts; it wouldn't do that if it were operating on 3D geometry. I very much doubt it even has 3D information in the model beyond the same motion vectors used for DLSS.

Look at how Project Genie works with reference images:

This is Gemini doing a single frame as well (from the source game image where the eyes look funny):

I suspect given multiple frames it too would think it's painted eyelids.
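To make the "motion vectors between frames" part of this speculation concrete, here is a minimal 1D Python sketch of how temporal techniques in general (TAA, earlier DLSS upscaling) use motion vectors: fetch the previous frame's result at the reprojected position, then blend it with the current frame. DLSS 5's internals are not public, so this is not its algorithm; the function names, integer motion vectors, and the `alpha` blend weight are assumptions. In this toy, pixels that just entered the frame have no history to reuse, which mirrors how disoccluded pixels are a classic source of artifacts in temporal methods.

```python
def reproject(prev_frame, motion):
    """For each current pixel, look up where it was in the previous frame.

    motion[x] is an integer pixel displacement since the last frame
    (assumed convention: current position = previous position + motion).
    Returns None where the previous position falls off screen (no history).
    """
    history = []
    for x, mv in enumerate(motion):
        src = x - mv
        history.append(prev_frame[src] if 0 <= src < len(prev_frame) else None)
    return history


def accumulate(current, history, alpha=0.1):
    """Exponential blend toward the current frame; reuse history when it exists."""
    out = []
    for c, h in zip(current, history):
        out.append(c if h is None else h + alpha * (c - h))
    return out


prev = [0.0, 1.0, 2.0]
hist = reproject(prev, motion=[1, 1, 1])  # everything moved one pixel right
print(hist)                                # [None, 0.0, 1.0]
print(accumulate([1.0, 1.0, 1.0], hist, alpha=0.5))  # [1.0, 0.5, 1.0]
```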

IlGialloMondadori

Member

"content-control generative AI...it's generative control at the geometry level"

"Yeah, no fucking shit," said everyone who has eyes.

We're not wrong about it, we knew that's what was going on because it's obvious.

And it looks like shit.


General Lee

Member


This has been officially explained by Nvidia:

DLSS 5 takes a game's color and motion vectors for each frame as input, and uses an AI model to infuse the scene with photoreal lighting and materials that are anchored to source 3D content and consistent from frame to frame. DLSS 5 runs in real time at up to 4K resolution for smooth, interactive gameplay.

DLSS 5 takes a frame's color and motion vectors as input to deliver photoreal lighting and materials that are deterministic, temporally stable and anchored to the game's content

The AI model is trained end to end to understand complex scene semantics such as characters, hair, fabric and translucent skin, along with environmental lighting conditions like front-lit, back-lit or overcast — all by analyzing a single frame. DLSS 5 then uses its deep understanding to generate visually precise images that handle complex elements such as subsurface scattering on skin, the delicate sheen of fabric and light-material interactions on hair, all while retaining the structure and semantics of the original scene.

DLSS 5 provides game developers with detailed controls for intensity, color grading and masking, so artists can determine where and how enhancements are applied to maintain each game's unique aesthetic. Integration is seamless, using the same NVIDIA Streamline framework used by existing DLSS and NVIDIA Reflex technologies.

NVIDIA DLSS 5 Delivers AI-Powered Breakthrough In Visual Fidelity For Games

NVIDIA DLSS 5 infuses pixels with photorealistic lighting and materials to bridge the gap between rendering and reality.

www.nvidia.com
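Nvidia's description mentions developer controls for intensity, color grading and masking, but the quoted material gives no API details. Purely as an illustration of what such controls mean in compositing terms, here is a Python sketch of a per-pixel mask and a global intensity gating an effect over the original frame. `composite`, its parameters, and the per-channel gain used as a crude stand-in for color grading are all hypothetical; they are not Streamline or DLSS calls.

```python
def composite(original, enhanced, mask, intensity=1.0, grade=(1.0, 1.0, 1.0)):
    """Blend an enhanced frame over the original, pixel by pixel.

    original, enhanced: lists of (r, g, b) tuples with channels in [0, 1]
    mask:      per-pixel weight in [0, 1]; 0 leaves the original untouched
    intensity: global strength of the effect in [0, 1]
    grade:     per-channel gain applied to the enhanced colour (a crude,
               hypothetical stand-in for colour grading)
    """
    out = []
    for o, e, m in zip(original, enhanced, mask):
        w = max(0.0, min(1.0, m * intensity))
        graded = tuple(min(1.0, ec * g) for ec, g in zip(e, grade))
        out.append(tuple(oc + w * (gc - oc) for oc, gc in zip(o, graded)))
    return out


frame = [(0.2, 0.2, 0.2), (0.2, 0.2, 0.2)]
effect = [(0.6, 0.6, 0.6), (0.6, 0.6, 0.6)]
# Mask the effect off for the first pixel (say, a stylised element the
# artists want left alone) and apply it at half strength elsewhere.
print(composite(frame, effect, mask=[0.0, 1.0], intensity=0.5))
```

The design point is simply that masking and intensity are a linear blend between "before" and "after", so an artist can keep any region exactly as authored.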

I have no reason to disbelieve it. Why would I? I could try to put it in my own words, but that'll be just my interpretation. In short, it's an ML model that does a lighting pass, just like path tracing does a lighting pass on the scene. Of course, the methods they use to achieve it are different. PT computes light rays in the engine; DLSS 5 uses a trained ML model that takes its input from the engine and applies its weights to the end result, and the weights have been trained to achieve a photorealistic lighting look. It should be pretty close to what you'd expect from an offline ray-traced version you could make in any of the professional software packages that do that sort of thing. Light in the real world behaves only according to the laws of physics, so comments like "all games will look alike due to this" are some of the dumbest shit I have ever heard, as if all movies look the same.

This model isn't intended for cartoon graphics, but just like with the stylized look of that Harry Potter grandma scene, it can still be applied to something more stylized, and the results are what they are. Doing it in post like this, you can absolutely argue that the original artistic intent can be lost, since the content was initially tuned for a vastly different lighting scenario. For example, the excessive amount of wrinkles on a character may start to look bad, whereas originally it wasn't a problem because the lighting did not pick up as much of the detail. That's where the artists will come in; in the future the model will be made with that in mind, and the results will follow artist intent.

One major technical caveat: I think this operates in screen space only, so it's probably not picking up on light sources outside the screen. For example, see the yellowish lamp sheen that's visible on Grace's hair with path tracing in this example location: https://www.nvidia.com/en-us/geforc...equiem-geforce-rtx-comparison-screenshot-001/
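The screen-space caveat is the poster's guess rather than a confirmed detail, but a toy Python sketch shows why any purely screen-space pass has this blind spot: if the only input is what was rendered into the frame, a lamp outside the frame contributes nothing, whereas a world-space method such as path tracing can still reach it. The 1D setup, function names, and the `1 / (1 + distance)` falloff are arbitrary assumptions for illustration.

```python
def screen_space_light(pixel_x, emitters, width):
    """Light reaching pixel_x, using only emitters visible in the frame.

    emitters: list of (x, strength) in screen columns; x may fall outside
              [0, width) when the light source is off screen.
    """
    total = 0.0
    for x, strength in emitters:
        if 0 <= x < width:  # a screen-space pass only 'sees' on-screen pixels
            total += strength / (1.0 + abs(x - pixel_x))
    return total


def world_space_light(pixel_x, emitters):
    """Same toy falloff, but every emitter in the scene is considered."""
    return sum(s / (1.0 + abs(x - pixel_x)) for x, s in emitters)


lamps = [(-4, 5.0), (6, 2.0)]  # the first lamp is just off the left edge
print(screen_space_light(0, lamps, width=10))  # only the on-screen lamp counts
print(world_space_light(0, lamps))             # the off-screen lamp adds light
```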

While this technique obviously has its limitations, for something approaching a ground-truth, fully ray-traced scene I'm pretty sure it'll be the main way we achieve photorealism in real-time graphics. Techniques like these will be used by the rest of the industry in the coming years; of that I'm fairly sure.

Unknown Soldier

Member

I have no dog in the DLSS fight, but you are categorically wrong here.

A quote by Steve Jobs


www.goodreads.com

Some people say, "Give the customers what they want." But that's not my approach. Our job is to figure out what they're going to want before they do. I think Henry Ford once said, "If I'd asked customers what they wanted, they would have told me, 'A faster horse!'" People don't know what they want until you show it to them. That's why I never rely on market research. Our task is to read things that are not yet on the page.

Jensen is simply doing what Steve did, and what he has consistently done since he founded Nvidia.

inb4 people who hate Apple and Steve Jobs show up to attack him and his company


This actually proves exactly what I was saying. This isn't based on 3D geometry. Path tracing absolutely is. DLSS 5 isn't merely "changing lighting". It's using a 2D image to create generative AI images of what it sees. It can and does change the face based on what those frames show. Reference and inference don't always match.

I can show you the best example of this with this face:

I hope you understand what has happened there.

Same ol G

Member

He's not wrong. Gamers always think they know best, understand how everything works, and can program better than any dev out there.

I believe after everything Nvidia has given us with upscaling techniques, they probably know better than any gamer or YouTuber about the tech.

In terms of technical background, they absolutely know infinitely more than gamers. A cook knows a lot more about cooking too, but you can't tell people they're "wrong" when they don't like the taste of your dish. That's silly.

fallingdove

Member

Agreed.

If game companies listened to gamerrrrrz every time, we'd still be playing Super Mario World.

If we hadn't endured an endless stream of AI-generated videos over the last year, minds would have been melted by any one of the DLSS 5 videos.

"Looks like AI slop" is a lazy response to the tech and completely ignores that the art style of the games demoed thus far were meant to incorporate photo-realistic characters not plastic-y mannequins that stumble throughout the world. You can bet your ass that the devs would have incorporated the tech if it had been available on the devices they were shipping these games on.

General Lee

Member

It creates a "lighting layer" on top of the scene. That layer is formed on top of the 3D model and texture. It's not simply inpainting an entire face in 2D on top of the end result. It respects the 3D model, normal maps and textures, even though its result will be a 2D layer, just as it is when you're doing path tracing. Most of these various layers are just that: 2D layers on top of each other that make up the final image. SSAO is just another 2D layer that takes its inputs from the depth buffer.
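To illustrate what "just another 2D layer that takes its inputs from the depth buffer" means, here is a deliberately simplified 1D Python sketch in the spirit of SSAO: it never touches meshes, only per-pixel depth, and the resulting layer is multiplied over the colour. Real SSAO samples a view-space hemisphere with noise and a blur pass; the function names, `radius`, and `bias` here are assumptions for illustration.

```python
def ssao_1d(depth, radius=2, bias=0.01):
    """Toy 1D ambient-occlusion layer computed only from a depth buffer.

    depth: per-pixel view-space depth (smaller = closer to the camera).
    Returns one factor per pixel in [0, 1], where 1.0 means unoccluded.
    """
    n = len(depth)
    ao = []
    for i, d in enumerate(depth):
        occluders = samples = 0
        for j in range(max(0, i - radius), min(n, i + radius + 1)):
            if j == i:
                continue
            samples += 1
            if depth[j] < d - bias:  # neighbour sits in front of this pixel
                occluders += 1
        ao.append(1.0 - occluders / samples if samples else 1.0)
    return ao


def apply_layer(color, ao):
    """Composite the AO layer by multiplying it over the colour buffer."""
    return [c * a for c, a in zip(color, ao)]


depth = [1.0, 1.0, 2.0, 1.0, 1.0]  # a one-pixel pit in a flat wall
ao = ssao_1d(depth)
print(ao)                          # [1.0, 1.0, 0.0, 1.0, 1.0]
print(apply_layer([0.8] * 5, ao))  # [0.8, 0.8, 0.0, 0.8, 0.8]
```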


I'm guessing you're implying that the AI is doing some sort of img2img diffusion with something like a Canny ControlNet to make sure it mostly, but not entirely, adheres to the original model? That would not work, since every image would be slightly different, making it completely unstable for a real-time video game.
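The stability argument can be illustrated with a toy Python comparison; it is not a model of DLSS 5 or of any diffusion model. A per-frame process that draws fresh random noise gives a different answer every frame even on a completely static scene, which shows up as flicker, while a deterministic function of the same input produces identical output frame to frame, in line with Nvidia's own wording that the output is "deterministic, temporally stable". The function names, the `jitter` amplitude, and the 1.2 gain are arbitrary assumptions.

```python
import random


def stochastic_enhance(pixel, rng, jitter=0.1):
    """Stand-in for a sampler that draws fresh noise every frame."""
    return pixel + rng.uniform(-jitter, jitter)


def deterministic_enhance(pixel):
    """Same input always yields the same output."""
    return min(1.0, pixel * 1.2)


def max_flicker(frames):
    """Largest frame-to-frame change of one pixel."""
    return max(abs(a - b) for a, b in zip(frames, frames[1:]))


rng = random.Random(0)
static_pixel = 0.5  # the scene does not change at all
noisy = [stochastic_enhance(static_pixel, rng) for _ in range(30)]
stable = [deterministic_enhance(static_pixel) for _ in range(30)]
print(max_flicker(noisy))   # non-zero: the pixel shimmers on a static scene
print(max_flicker(stable))  # 0.0
```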

Hyet

Member

The reason the market is fucked is data center construction. Developing this played a minuscule part in it, if any.

The real question is: was this "improvement" in lighting on a face worth destroying an entire hardware market and depleting primary resources?

Reading people's takes on the tech, their lack of understanding of it and its potential, and seeing how emotionally overblown the response has been, I tend to agree with Jensen here; y'all retarded.

The Cockatrice

I'm retarded?

No shit, Sherlock.

People are just seeing the tech in its infancy and starting to panic.

If you really hate it, turn it off like you turn off DLSS or frame gen, easy peasy.

I disabled DLSS5, why are my graphics so weird?

cripterion

Member


I think Jensen can eat a bag of dirt, but maybe that's me

The market situation we're in is not because the end goal is DLSS 5.0...

Gaming is an afterthought for Nvidia and Jensen at this point. Just take a look at their website.

IlGialloMondadori

Member

, I tend to agree with Jensen here;