Just tried a few UE5, Anvil and Snowdrop games to see where they land performance-wise when running at native resolution. My card is an RTX 5080, which is around 13% faster than the 7900 XTX used in Crimson Desert's native 4K 60 fps benchmark.

- MGS3 - 45 fps. Can't use FSR at native resolution, so I had to use DLAA, which has its own performance cost. I also had hardware ray tracing enabled in the config files.

- Mafia - 43 fps native 4K, FSR

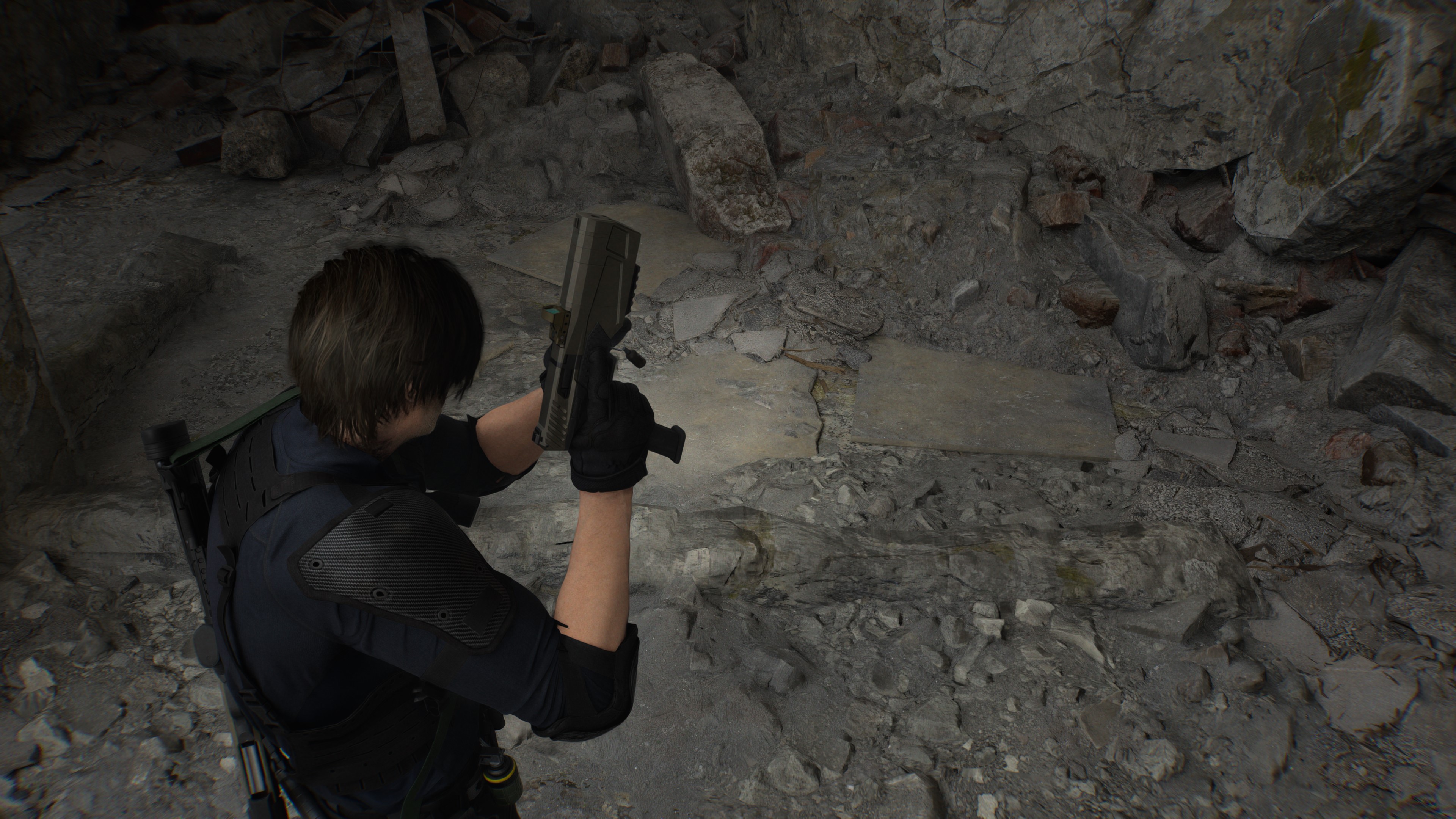

- Robocop - An easy native 4K 60 fps with DLAA, with around 20% of the GPU to spare. Didn't bother uncapping. Probably because it's an older game that wasn't pushing GPUs very hard. Still looks fantastic, though. Some new GIFs below.

- Star Wars Outlaws - 37 fps native 4K, DLAA. Didn't bother enabling path tracing.

- Avatar - 41 fps native 4K, DLAA

- AC Shadows - 40 fps native 4K, DLAA

- Cyberpunk - 30 fps native 4K, DLAA (no path tracing)

- Kingdom Come 2 - 68 fps native 4K, DLAA

So basically, Crimson Desert's engine is around 50% more performant than UE5, REDengine, Anvil and Snowdrop. It shares the same performance profile as Kingdom Come 2, a very handsome-looking game that uses software-based RTGI. It could be that they are using a slightly less intensive form of ray tracing and skipping virtualized geometry to gain some extra performance.
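For anyone who wants to check the math, here's a quick sketch using the numbers above. Assumptions: only the games with an actual fps figure go into the average (Robocop was capped, and Kingdom Come 2 is the comparison point, so both are left out), and the 5080's "around 13%" advantage is treated as a flat 1.13x multiplier, which is a rough simplification.

```python
# fps numbers from the list above (native 4K, RTX 5080).
# Robocop (capped at 60) and Kingdom Come 2 (the comparison point) are excluded.
RESULTS = {
    "MGS3": 45,
    "Mafia": 43,
    "Star Wars Outlaws": 37,
    "Avatar": 41,
    "AC Shadows": 40,
    "Cyberpunk": 30,
}

CD_FPS_7900XTX = 60   # Crimson Desert's native 4K benchmark figure
SPEEDUP_5080 = 1.13   # assumption: a flat ~13% uplift for the 5080


def average_fps(results):
    """Plain mean of the measured frame rates."""
    return sum(results.values()) / len(results)


def relative_gap(cd_fps, other_fps):
    """How much faster cd_fps is than other_fps, as a fraction (0.5 == 50%)."""
    return cd_fps / other_fps - 1


avg = average_fps(RESULTS)
raw = relative_gap(CD_FPS_7900XTX, avg)                      # no hardware adjustment
cd_on_5080 = CD_FPS_7900XTX * SPEEDUP_5080                   # estimated 5080 fps
normalized = relative_gap(cd_on_5080, avg)                   # same-card comparison

print(f"other engines average: {avg:.1f} fps")
print(f"raw gap: {raw:.0%}")
print(f"Crimson Desert estimated on a 5080: {cd_on_5080:.1f} fps")
print(f"normalized gap: {normalized:.0%}")
```

The raw comparison (60 fps vs a ~39 fps average) gives roughly 53%, which is where the "around 50%" comes from. Scaling Crimson Desert up to a 5080 puts it at roughly 68 fps, right where Kingdom Come 2 lands, and pushes the gap closer to 70%, so 50% is the conservative reading.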

It IS kinda funny that all these high-end games have virtually the same performance profile. Almost as if next-gen graphics demand next-gen specs.