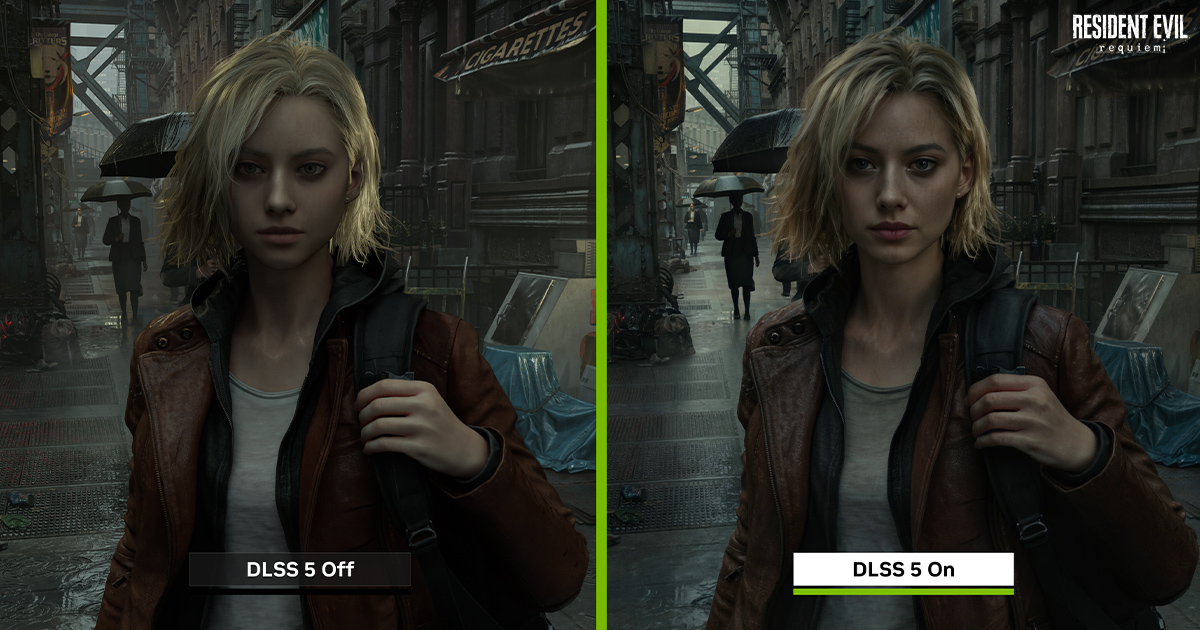

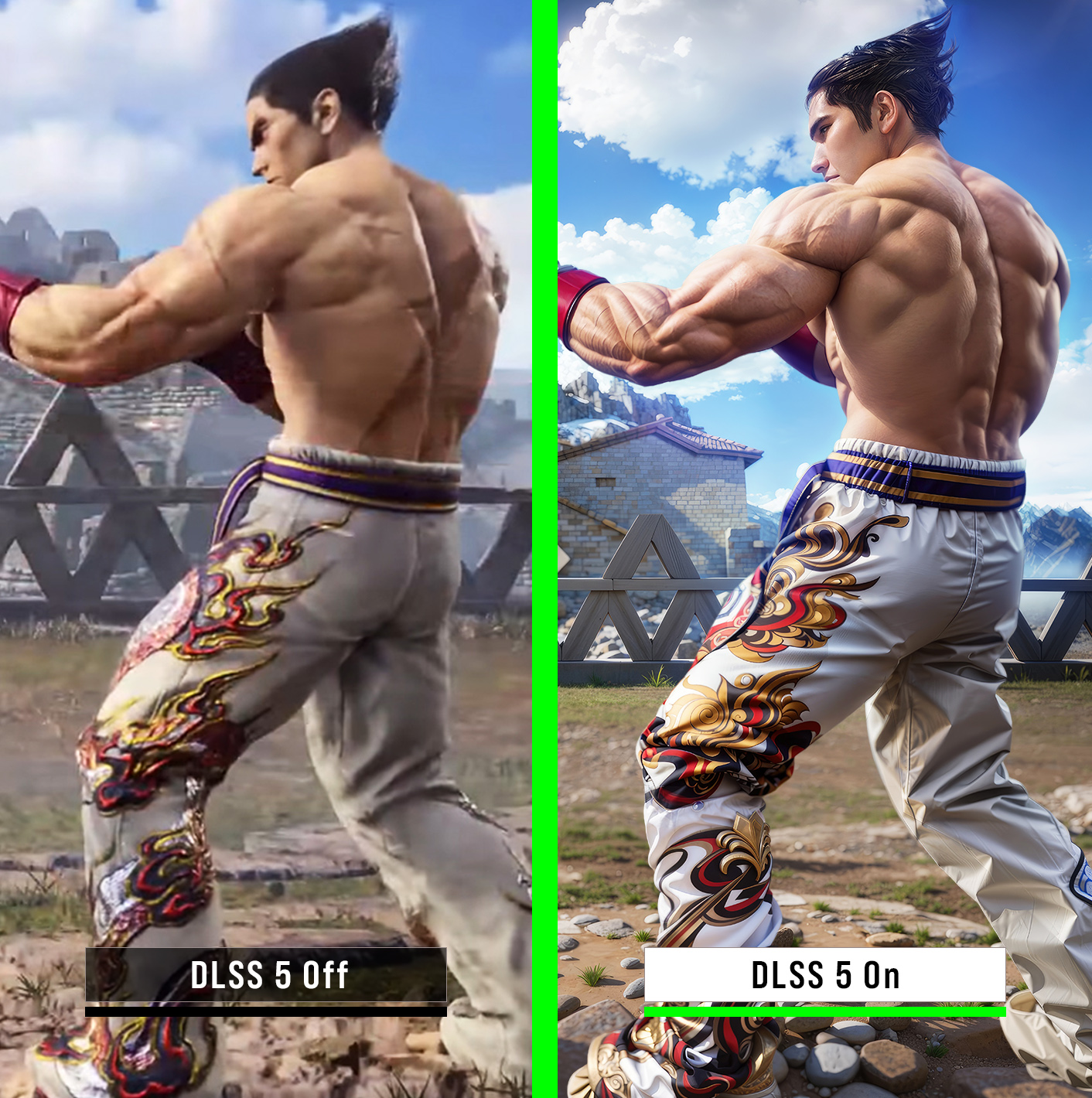

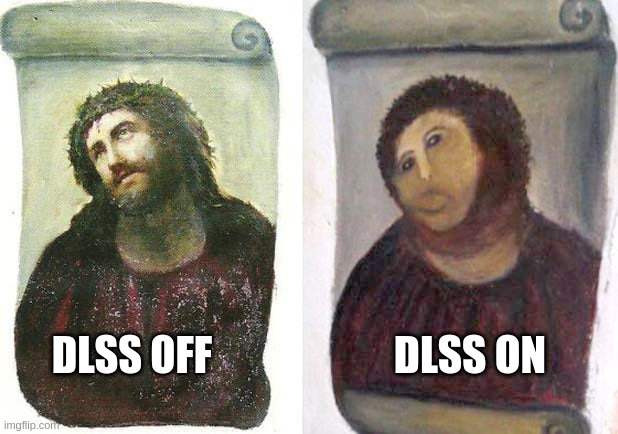

This is so bad. It literally looks like all those thumbnails for "Consoles vs PC" videos, where the PC image is generated to exaggerate the contrast and is pure clickbait. Now we can have this in games, great!

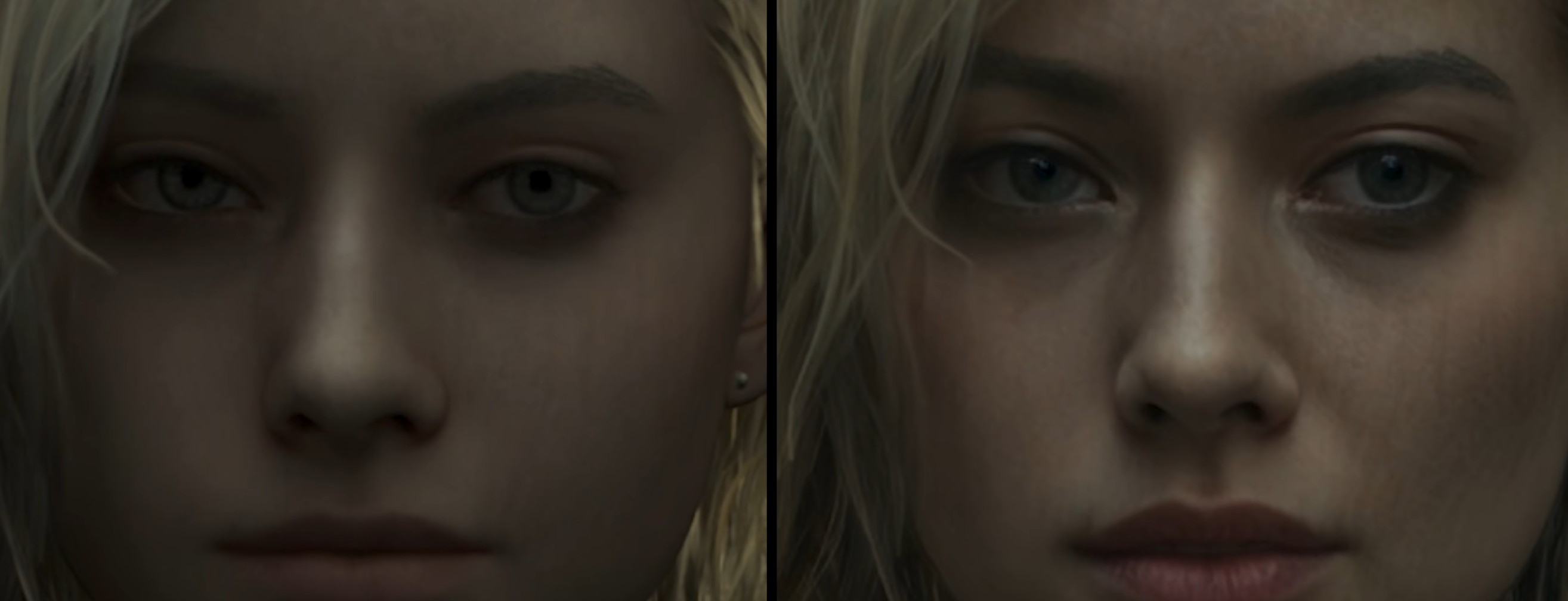

It looks bad, it makes everything look the same (I mean from game to game), and it changes the look because it exaggerates everything (everyone becomes older). And it breaks the photorealistic feel when the animation starts, because it is still an in-game animation. But they will probably 'fix' even this soon (though that will be harder to sell as 'game assets').

It was ok-ish when it was only for those funny pictures, but now…

And you are really cheering for that?

I don't like the direction in which corporations are pushing mankind. Nvidia is forcing everything in order to sell its AI dominance. They forced RT (it was one of the holy grails of graphics, but it was, and actually still is, too early for it). They are forcing PT (which is even more problematic for hardware). One of the reasons we need upscaling/reconstruction from lower resolutions is that they are pushing too much. So now we need AI to do that. And even though DLSS and FSR (and now even PSSR) are becoming good at it, it still degrades the image. I was playing RE9 on PS5 Pro and the image had many instabilities, noise, and strange behavior. Pragmata's demo on PC (5090) also had issues. Now Nvidia will be forcing more 'realistic' characters.

I see that at least some influencers are seeing the problems (at least for now). On the other hand, seeing other people cheering for this, it is already over, like the whole AI market. I don't think that betting everything on AI (like hardware and rendering development) will end well. Just like Game Pass wasn't good for Xbox, but some people were thrilled about it.

So yeah, no to this deepfake, soft generative slop. And if characters in Starfield look bad, blame the developers and don't give them an easy/lazy/cheap 'fix'.