Lux R7

Member

diffusionx

Gold Member

ok now I'm convinced

Fbh

Member

Also, this Grace would appear to have been authored true to what the developers wanted anyway.

It would be interesting for them to eventually show this detailed artistic control that devs have in more depth.

Using "but devs worked on it" as a way to deflect criticism isn't entirely valid IMO until they can show what level of control devs really have over the final output.

Otherwise it's like taking this:

And when people say it looks like AI slop you are like "nope, Darren Aronofsky had full creative control. This is EXACTLY what he wanted it to look like. If he had made this with real actors and traditional VFX it would look exactly like this because this is his vision"

deeptech

Member

Yea, over a damn option nobody even needs to use. Ironically the hatred seems more uncanny, more AI generated than what it hates.

What a shitstorm.

I hate the general everyday "AI" look and feel to characters, the ultra-clean, spotless look etc. But this still seems to me a cool thing to try out, there's just so many possibilities with it.

leizzra

Member

Look at the post above. That is all the control devs have. The rest is AI interpretation.

It would be interesting for them to eventually show this detailed artistic control that devs have in more depth.

cormack12

Gold Member

It would be interesting for them to eventually show this detailed artistic control that devs have in more depth.

Using "but devs worked on it" as a way to deflect criticism isn't entirely valid IMO until they can show what level of control devs really have over the final output.

Otherwise it's like taking this:

And when people say it looks like AI slop you are like "nope, Darren Aronofsky had full creative control. This is EXACTLY what he wanted it to look like. If he had made this with real actors and traditional VFX it would look exactly like this because this is his vision"

I think the central issue is that most people are not seeing the in game presentation as being 'compromised' to begin with.

If the devs/artists say actually this is exactly the level of fidelity and responsive lighting we were going for but couldn't achieve, would the discussion change?

I mean look at Sid in 1995. He looks like he fell out of a Bethesda game. And this was offline rendering. No way this was artistic vision. It was a limitation of the tech.

CamHostage

Member

lol although AI related I don't know what this has got to do with DLSS 5.

Oh, is that Tekken image user-gen? Yeah, looks like it's not in the sample set, so sorry to you DLSS 5... still don't like you much, but that was my bad.

diffusionx

Gold Member

Can't deny that NVIDIA is shrewd. For about 20 years they took advantage of the rapid advancement in chip manufacturing to deliver better and better hardware and as soon as that started to hit a wall they immediately pivoted to software algorithms that they are using to take more and more control over how a game looks and runs. So if their hardware runs the game and their software determines how the game looks and how well it performs, they are in total control.

This is so bad. It literally looks like all those thumbnails for "Consoles vs PC" videos, where the PC image is generated for bigger contrast and is clickbait. Now we can have this in games, great!

It looks bad, it makes everything look the same (I mean game by game), it changes the look because it's exaggerating everything (everyone becomes older). And it breaks the photorealistic feel when the animation starts because it still is an in-game animation. But probably soon they will 'fix' even this (but this will be harder to sell as 'game assets').

It was ok-ish when it was only for those funny pictures, but now…

And you are really cheering for that?

I don't like the direction in which corporations are pushing mankind. Nvidia is forcing everything to sell its AI dominance. They forced RT (it was one of the holy grails of graphics, but it was, and actually still is, too early for that). They are forcing PT (which is even more problematic for hardware). One of the reasons we need upscaling/reconstruction from lower resolutions is that they are pushing too much. So now we need AI to do that. And even though DLSS and FSR (now even PSSR) are becoming good at it, it still degrades the image. I was playing RE9 on PS5 Pro and the image has many instabilities, noise, and strange behaviors. Pragmata's demo on PC (5090) also had issues. Now Nvidia will be forcing more 'realistic' characters.

I see that at least some influencers are seeing the problems (at least for now). On the other hand seeing other people cheering for that, it is already over, like the whole AI market. I don't think that betting everything on AI (like hardware and rendering development) will end well. Just like Game Pass wasn't good for Xbox but some people were thrilled about it.

So yeah, no to this deepfake, soft generative slop. And if characters in Starfield look bad, blame the developers and don't give them an easy/lazy/cheap 'fix'.

MisterXDTV

Member

Can't deny that NVIDIA is shrewd. For about 20 years they took advantage of the rapid advancement in chip manufacturing to deliver better and better hardware and as soon as that started to hit a wall they immediately pivoted to software algorithms that they are using to take more and more control over how a game looks and runs. So if their hardware runs the game and their software determines how the game looks and how well it performs, they are in total control.

This is the problem here

Tech should serve games....

NVIDIA wants games to serve tech now, otherwise they can't charge you thousands of dollars for GPUs anymore and get sales

John Bilbo

Member

Technical limitations breed artistic vision.

I think the central issue is that most people are not seeing the in game presentation as being 'compromised' to begin with.

If the devs/artists say actually this is exactly the level of fidelity and responsive lighting we were going for but couldn't achieve, would the discussion change?

I mean look at Sid in 1995. He looks like he fell out of a Bethesda game. And this was offline rendering. No way this was artistic vision. It was a limitation of the tech.

And yes the same can be said for AI / DLSS5 created stuff.

AI has its own limitations that breed into the artistic vision of the stuff created with AI.

BennyBlanco

aka IMurRIVAL69

viveks86

Member

Well said. It's crazy that the gaming community is even split on this. It seems the ground truth doesn't matter anymore. As long as it's gratifying, nothing matters. It doesn't look right most of the time, but there are so many red flags even before we can dispassionately analyze the output quality for academic reasons.

Not really - no. DLSS is reprojecting samples from past into current frames and using AI to drive the heuristics of the algorithm instead of being analytical.

While some data gaps do get filled in - the ground truth (if we were to have fully rendered samples without realtime constraints) exists, and is always possible to compare with.
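To make the "reprojecting samples from past into current frames" part concrete, here is a minimal sketch of plain temporal accumulation. It is not NVIDIA's implementation: the function names, the per-pixel integer motion vectors, and the fixed blend weight `alpha` are all assumptions for illustration. The fixed `alpha` is exactly the kind of hand-written (analytical) heuristic that, per the post above, a trained network would drive instead.

```python
def reproject(history, motion):
    """For each current pixel, fetch the history value from where that pixel was last frame.

    motion[y][x] is a hypothetical (dx, dy) in whole pixels: how far the surface
    at (x, y) moved since the previous frame. Returns None where history is missing.
    """
    height, width = len(history), len(history[0])
    out = [[None] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            dx, dy = motion[y][x]
            px, py = x - dx, y - dy  # position of this pixel in the previous frame
            if 0 <= px < width and 0 <= py < height:
                out[y][x] = history[py][px]
    return out


def accumulate(current, history, motion, alpha=0.1):
    """Blend the new sample with reprojected history using a fixed weight."""
    reprojected = reproject(history, motion)
    result = []
    for y, row in enumerate(current):
        new_row = []
        for x, sample in enumerate(row):
            prev = reprojected[y][x]
            if prev is None:
                new_row.append(sample)  # no valid history (e.g. disocclusion): use the new sample
            else:
                new_row.append(alpha * sample + (1 - alpha) * prev)
        result.append(new_row)
    return result


# One-row example: everything moved one pixel to the right since last frame.
history = [[0.0, 1.0, 2.0]]
current = [[10.0, 10.0, 10.0]]
motion = [[(1, 0), (1, 0), (1, 0)]]
blended = accumulate(current, history, motion, alpha=0.5)
```

In this toy case the leftmost pixel has no history and takes the new sample as-is, while the other two are averaged with the values that moved into them. Every output value is traceable to rendered samples, which is the distinction the post is drawing against detail that is generated rather than reprojected.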

If you say source image is not it - then there's no defined 'accurate' / ground truth to begin with. Nor - as apparent by some of the examples - is there ground truth for detail on screen - as that gets made-up on the spot too.

Calling it 'prediction' let alone 'higher accuracy' is circular reasoning. It's simply an artist re-interpretation of the source image. "Artist" in this case being the AI model (or maybe NVidia scientists that trained it - if we need human to call it art).

Not from an RGB buffer alone. Also specific design intents will be more murky yet. If you're trying to argue that human creative outputs are fundamentally algorithmic and should be ignored because prediction by 'best guess' is good enough - be my guest, but now we're squarely in the realm of 'who needs humans in product creation loop at all' as an argument.

And I'm not having that debate on a forum.

And the sheer amount of gaslighting going on....

"It's the future of gaming, but also no one needs to turn it on. Ummm... but it's the future. So YOU are the problem. Just because you can't afford a 5090, you can't stop progress, angry woke communist hive mind!"

cripterion

Member

What games are these though? Google image search says it's the PoP Sands of Time Remake, heh, funny Google.

I believe it's from an Nvidia tech demo.

viveks86

Member

Yep. Zorah. Though I think they've added a character in it for the new demo.

I believe it's from an Nvidia tech demo.

cormack12

Gold Member

But then the question is which is closer to the original vision?

Technical limitations breed artistic vision.

And yes the same can be said for AI / DLSS5 created stuff.

AI has its own limitations that breed into the artistic vision of the stuff created with AI.

adamsapple

Or is it just one of Phil's balls in my throat?

peish

Member

This is nothing to do with "skinning". It's a post processing step performed on the generated output. The input frame would already have all the NTC data encoded in it. i.e.: The "Before" image. While a GBuffer will have material data and other data encoded in it, "Textures" are just colours, and can be sampled arbitrarily. The Neural Net doesn't know where it came from.

What? I don't understand now.... What is a filter? What is post processing here?

I thought it still has a wireframe with its own animation routines computed by the CUDA cores. The NTC is supposed to provide better than conventional texture quality on these skeletal meshes, so why does it need another layer of filtering?

This is mixed AI rendering, best of all worlds

Can a game ever be majorly rendered by the tensor cores?

Darius87

Member

Yes, but training and running an app like DLSS are different: the LLM is already trained and it won't need to change its tokens, so running already-trained apps doesn't require as much RAM. But I guess as models get more advanced, so do their requirements.

Correct me if I'm wrong but doesn't the entire LLM model need to be loaded into RAM/VRAM to generate images? This is gonna require an absurd amount of money to function as things exist today. It's literally just gonna be OverHeat using this for the foreseeable future.

John Bilbo

Member

The vision is different in different mediums and between different artists.

But then the question is which is closer to the original vision?

The tools the artists use are the building blocks of the vision. The vision can only be found within the artist: the final art piece is a simulacrum of the vision of the artist. There is no final art piece only simulacrums: this is the nature of human creativity.

Audience has their own vision about what the artist is going for with the art piece. Sometimes the vision of the audience and the artist never meet.

BennyBlanco

aka IMurRIVAL69

Yes, but training and running an app like DLSS are different: the LLM is already trained and it won't need to change its tokens, so running already-trained apps doesn't require as much RAM. But I guess as models get more advanced, so do their requirements.

I think this is generating the image on the fly though. Why else did they need a separate 5090 to run DLSS5?

BennyBlanco

aka IMurRIVAL69

Hoddi

Member

Yes, but if this is just for lighting then they can likely get away with much smaller models than you would need for text-to-image generation. It might not even need a language model for that matter.

Correct me if I'm wrong but doesn't the entire LLM model need to be loaded into RAM/VRAM to generate images? This is gonna require an absurd amount of money to function as things exist today. It's literally just gonna be OverHeat using this for the foreseeable future.
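For a rough feel of the memory question, here is a back-of-the-envelope sketch: the weights of a model take roughly parameter count times bytes per parameter. The parameter counts below are made-up placeholders, not figures for DLSS 5 or any real model, and the estimate ignores activations and any other buffers needed at run time.

```python
# Bytes used to store one weight at common precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weight_memory_gib(num_params, precision="fp16"):
    """Approximate memory needed just to hold the weights, in GiB."""
    return num_params * BYTES_PER_PARAM[precision] / 2**30


# Hypothetical sizes, purely for comparison.
examples = [
    ("small image-to-image model (assumed 50M params)", 50_000_000),
    ("large generative model (assumed 10B params)", 10_000_000_000),
]
for name, params in examples:
    print(f"{name}: {weight_memory_gib(params):.2f} GiB at fp16")
```

The point of the comparison is only that the answer swings by orders of magnitude depending on model size, which is why a model that only relights an already-rendered frame could plausibly be far cheaper to hold in VRAM than a prompt-driven generator.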

IlGialloMondadori

Member

It looks like shit.

Ignore the bashers, especially the money-grabbing content creators. This tech is good stuff.

IlGialloMondadori

Member

There have been a few of us on #TeamNative since the beginning and while it's nice to see more users glom onto the movement.

Some of these are impressive. Still not buying anything.

Represent.

Represent(ative) of bad opinions

IlGialloMondadori

Member

This is moronic.

And I'll take that over DLSS5 without a moment of hesitation.

Darius87

Member

Yes, but most of the image is already generated by raster + RT, and DLSS takes some parameters as input, so it's definitely much lighter than generating video from a prompt, but overall it's very expensive.

I think this is generating the image on the fly though. Why else did they need a separate 5090 to run DLSS5?

cormack12

Gold Member

Hmmm, it's a fascinating topic but we're probably going down an interesting but non-related topic with this line of thinking - and how far back does this apply? Do we go back as far as paintings when less expensive shades of blue were used, affecting the vibrancy of the sky (and therefore the intention of the artist) or the strength of evoking an emotion.....from the viewer.

The vision is different in different mediums and between different artists.

The tools the artists use are the building blocks of the vision. The vision can only be found within the artist: the final art piece is a simulacrum of the vision of the artist. There is no final art piece only simulacrums: this is the nature of human creativity.

Audience has their own vision about what the artist is going for with the art piece. Sometimes the vision of the audience and the artist never meet.

We can continue this in my room of brandy, cigars and prostitutes.

I would be interested for DLSS5 to be applied to that scene in the Witcher 3 specifically as I feel it would draw out some level of comparison to better frame the debate - given the lighting uplift which has similar traits to the scenes in Oblivion.

Represent.

Represent(ative) of bad opinions

No it isn't.

This is moronic.

And I'll take that over DLSS5 without a moment of hesitation.

Good for you,

These are the best graphics I've seen in my life. I'll happily enjoy them while you complain about nonsense.

Mr.Phoenix

Member

This comes down to how it's used... and unfortunately, devs will jump at anything that makes their jobs easier. I only hope that, like any tool at their disposal, we actually start having AI lighting artists that can use such tech properly and maintain the artistic intent.

Well, the biggest problem for now is how it's being used for sure. It's like a free 'get out of jail' card and Starfield is a great example of that (another would be Mass Effect Andromeda). As for the tech itself, for now I'm not sure; I would need to get more details and see how it works and if it can be used in a good way. Still, it is a bit like cheating (the whole lighting part) and this is something that needs to be examined. Maybe it can be used in a good way (but then it would be less flashy for marketing).

One can say every new graphics tech is pushed out too early... e.g., we started the whole raster thing as far back as 1994 with the PS1/N64 gen, and it took all the way to the PS4 gen, almost 20 years later, before the hardware was actually strong enough to give proper no-limits rasterization. Same with the whole HD thing... so yes, we can say RT was pushed out early, but to me it's just the natural way all gaming tech comes to market.

Yes, it is very important and makes everything look more real. And RT has a big cost to it, PT even bigger. Sure, thanks to Nvidia it is possible in real time to some extent, but you still need to make some exceptions, simplifications, sometimes degradations. I do like RT and PT, and I would like to be at a time when every single GPU can handle it with good results so that we don't need a fallback to old methods (one of the proclaimed pros of RT was also that lighting in games would be easier and less time consuming; for now it is the opposite). But we aren't at that time yet. This is only my opinion: RT was pushed out too early, but this is what Nvidia needed at that time to grow bigger and stronger on the market. Now it's the same with AI, although they don't need gaming any more for that (maybe for making people feel better about AI when it works). Sure, it works, but maybe the cost is actually too big. But it was already decided, and people got used to it/expect it even though it has some negative effects (like Game Pass).

The only difference between now and then is that the hardware to do RT actual justice, to allow games to have a full suite of RT features while running at 60fps... will likely never be mainstream.

Which brings me to why I am happy we have AI. Because in the same way we got to a point where it became impractical to simultaneously push for native 4K while maintaining framerates over 60fps with all the bells and whistles modern game engines have, it's the same way it's impractical to be pushing for native RT or PT and expecting high framerates and high resolutions.

AI can solve all this. We have seen what AI can do for resolutions, and it's been so effective there that it's almost stupid to try and run a game at native resolution when AI can give you results even better than native while hitting a higher framerate. With this new tech, AI can solve the single biggest thing and cost in game engines. And that's RT. If done right, this tech means you need only shoot a quarter or so of the amount of rays into a scene as you would have otherwise done, and the AI will take that data in addition to the data of the rest of the scene and give you lighting as if you shot 100% of the rays you actually needed to make that scene. And while it may take some time yet, this is how we get there.

I see AI as the inevitable next step. Yes, some devs can attempt to use it as a cheat code, can attempt to use it as a way to not put in as much work... and those will always be there. Even without AI we still had bad or untalented devs. But there will be those who use it right. It became apparent in the PS4/XB1 gen that we cannot just keep throwing more cores, RAM and higher clocks at GPUs and expect generational leaps. There had to be a massive shift in how we render games, in how we build our GPUs, a way to make up for the fact that GPU hardware was not scaling up in step with the demands of modern game engines.

Yes, I'm not saying you are wrong with this thinking, but then the question is, when is the cost too much? Maybe you should slow down (I know the corporations are afraid of those words). For sure the AI market should stop (but it won't) and rethink the whole approach - even some of the people who helped the development of AI are saying that. It is driven by corporate greed and not by common sense. And this is what has a big influence on our world. I feel like the tech upgrades (like GPU power) have become small and now AI has become this easy solution for growth. Maybe I am wrong and this is the right way, but it feels wrong in a way. But as I said, this is my opinion and I can't force anyone to have the same.

And while everyone points the finger at AI, devs have been trying to hack this issue long before DLSS, e.g. checkerboard rendering. When Sony made the PS4 Pro with actual CBR hardware acceleration, everyone should have known the writing was on the wall. And mind you, the PS4 Pro launched roughly 2 years before the first DLSS-powered GPU. AI is just a much better way to do the very things devs have been trying to do for ages.
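For anyone unfamiliar with checkerboard rendering, here is a toy sketch of the core idea: shade only half the pixels each frame in an alternating checkerboard pattern and carry the other half over from the previous frame. This is a simplification under stated assumptions (a static scene, no motion vectors, no ID buffer), and the names `render_checkerboard` and `scene` are made up for illustration; it is not how any console implements it in hardware.

```python
def render_checkerboard(shade, previous, frame_index):
    """Shade half of the pixels this frame; reuse the rest from the previous output.

    shade(x, y) stands in for the expensive per-pixel rendering work.
    Returns the new frame and how many pixels were actually shaded.
    """
    height, width = len(previous), len(previous[0])
    parity = frame_index % 2           # which half of the checkerboard to shade
    output = [row[:] for row in previous]
    shaded = 0
    for y in range(height):
        for x in range(width):
            if (x + y) % 2 == parity:
                output[y][x] = shade(x, y)
                shaded += 1
    return output, shaded


def scene(x, y):
    """Hypothetical static scene: any deterministic per-pixel value will do."""
    return x + 10 * y


W, H = 4, 4
frame = [[0] * W for _ in range(H)]
frame, shaded_first = render_checkerboard(scene, frame, 0)
frame, shaded_second = render_checkerboard(scene, frame, 1)
full = [[scene(x, y) for x in range(W)] for y in range(H)]
```

With a static scene, two half-cost frames add up to the full image. The hard part, which this sketch skips, is what to do with the reused half once things move, and that is the gap both hand-tuned reconstruction and the AI approaches discussed in this thread try to fill.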

Made an image less stable... they found ways around that. Right now, the UI is a separate pass in the render pipeline for that very reason. But I get what you mean, I am just saying there will ALWAYS be a give and take when it comes to graphical rendering, unless you have the hardware to brute force everything... which is impossible to do.... affordably. So it's really a question of picking the lesser of evils.

I understand what you mean. Yes, we have been using tricks from the start, even normal maps are just that. But every trick has its weaknesses. I thought of reconstruction as this magic thing that can turn a 1080p game into 4K. When I was reading opinions or watching videos about the subject it looked that way. Then I started to play those games (well, even working in an engine showed me that DLSS can damage simple things like navigation and UI) and noticed that it's not that great. RE9 or FFVII Rebirth are good examples of games that are touted as great on that front, but I see many downsides. And sure, with RE9 there is a problem with the denoiser (so RT), but even without it there are issues. And I'm not looking for them on purpose, they are just there saying hello and waving. So I'm not sure if it's really a better way if it makes an image less stable. But to be fair, there were many screen space effects before that, and they also made the image less enjoyable, especially in motion.

People are so dense, it's really hard to participate.

- Will make game development cheaper

- Will make game development faster

- Will make games look better

- In 1 generation will likely be accessible to all cards in the stack

- It's not a beauty AI filter, it's a tool that developers have full artistic control over

Is it perfect right away? Probably not! Guess that's just how everything new has ever worked ever. What is the issue?

John Bilbo

Member

Dude we go back to monke

Hmmm, it's a fascinating topic but we're probably going down an interesting but non-related topic with this line of thinking - and how far back does this apply? Do we go back as far as paintings when less expensive shades of blue were used, affecting the vibrancy of the sky (and therefore the intention of the artist) or the strength of evoking an emotion.....from the viewer.

We can continue this in my room of brandy, cigars and prostitutes.

I would be interested for DLSS5 to be applied to that scene in the Witcher 3 specifically as I feel it would draw out some level of comparison to better frame the debate - given the lighting uplift which has similar traits to the scenes in Oblivion.

neocycle

Member

Not until Switch 4.

Can't wait to see what Nintendo does with this.

March Climber

Member

It is, because for some reason the ones using that Bethesda bulging eyes image to defend it don't realize that turning on DLSS5 with that image would just create nightmare fuel.

No it isn't.

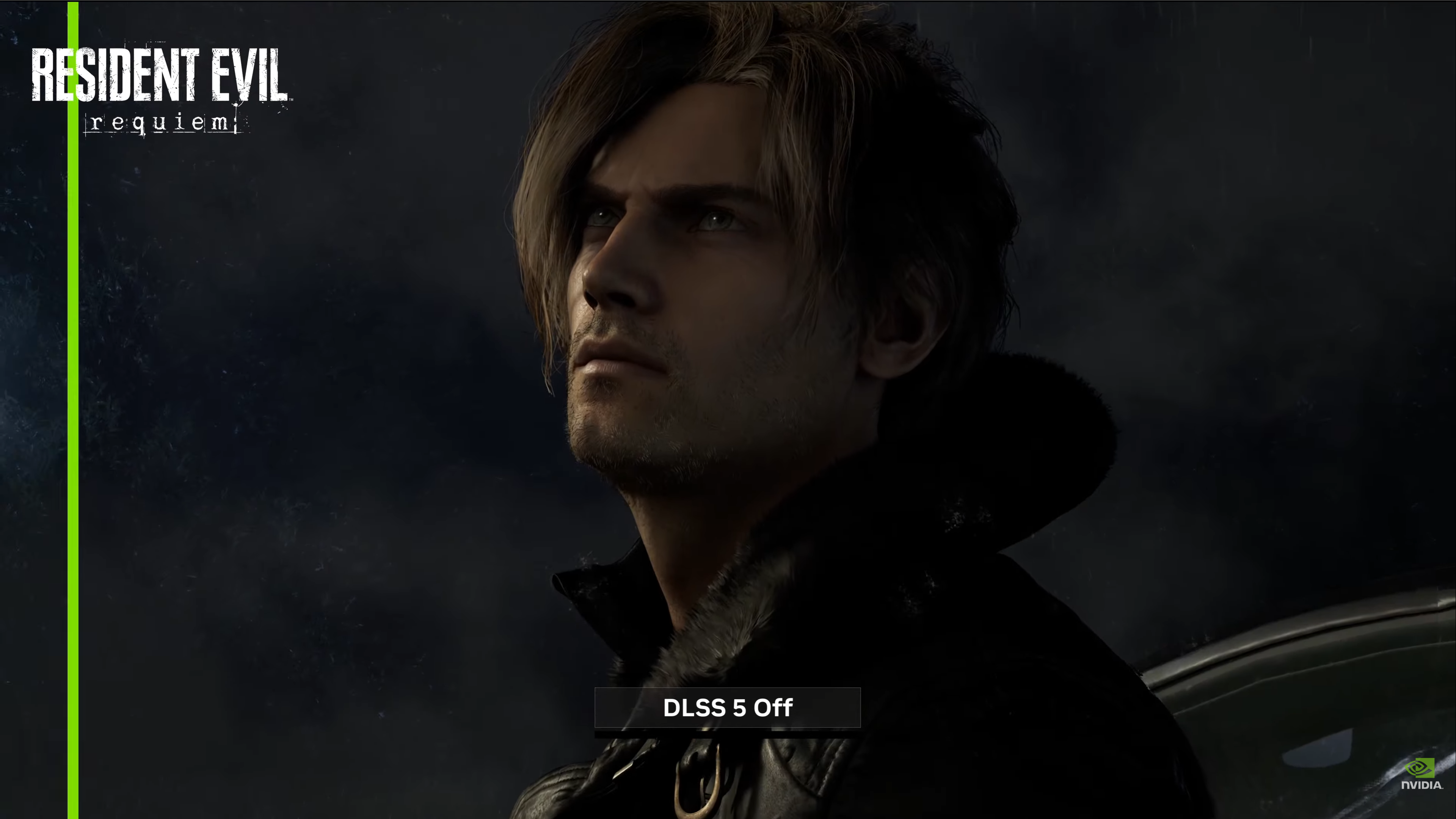

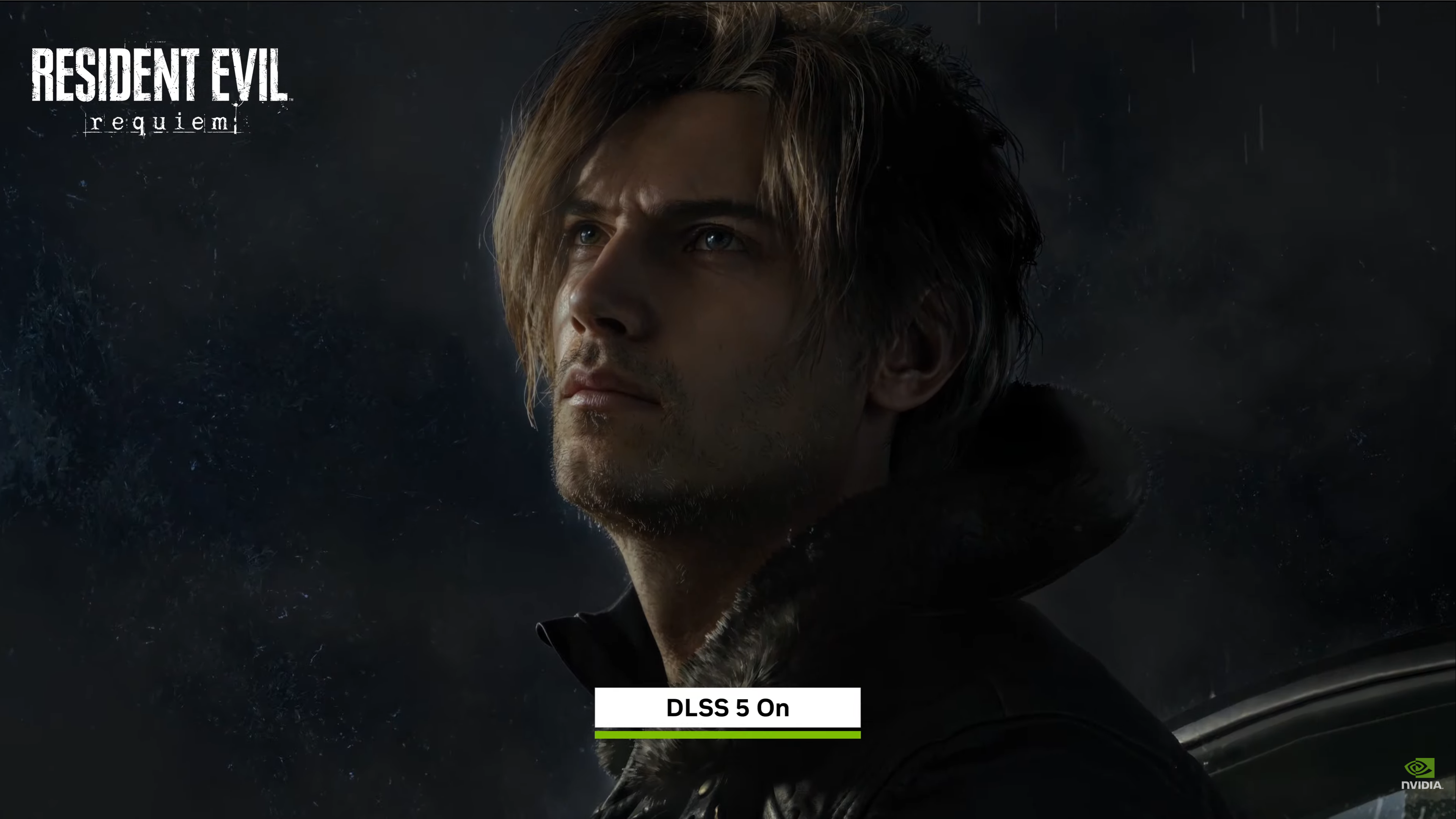

And the second one looks like a wax figure.

There's no way you can prefer the first image from this comparison

The first image is so flat and pale it's unreal - she looks like a porcelain doll.

IlGialloMondadori

Member

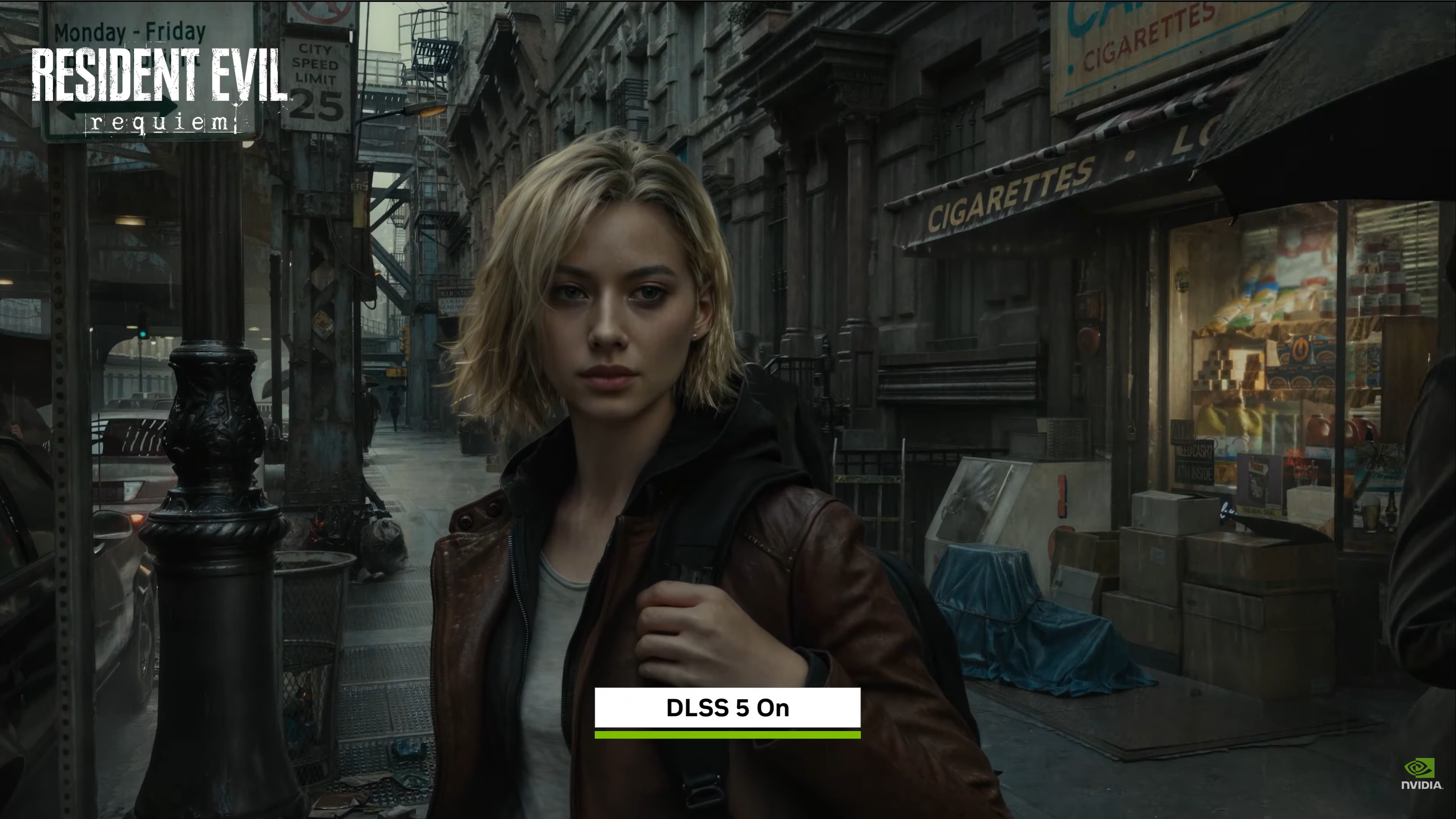

It just looks like someone put the sharpness on the TV to 100, and then overlit everything.

No it isn't.

Good for you,

These are the best graphics I've seen in my life. I'll happily enjoy them while you complain about nonsense.

All these images look like the shitty reshade mods combined with an instagram filter on the faces.

TheChumpNation

Member

Impressive, even if it looks overdone right now. Scale it back like 25% and this is a pretty hands down winner.

It'll run on a 4070 right? Right???

Stafford

Member

That's The Red Dwagon for you.

This is moronic.

And I'll take that over DLSS5 without a moment of hesitation.