Represent.

Represent(ative) of bad opinions

Full PC next gen

This could be really good depending on how devs implement

I'd let Nvidia impregnate me

fucking never been happier as a graphics WHORE

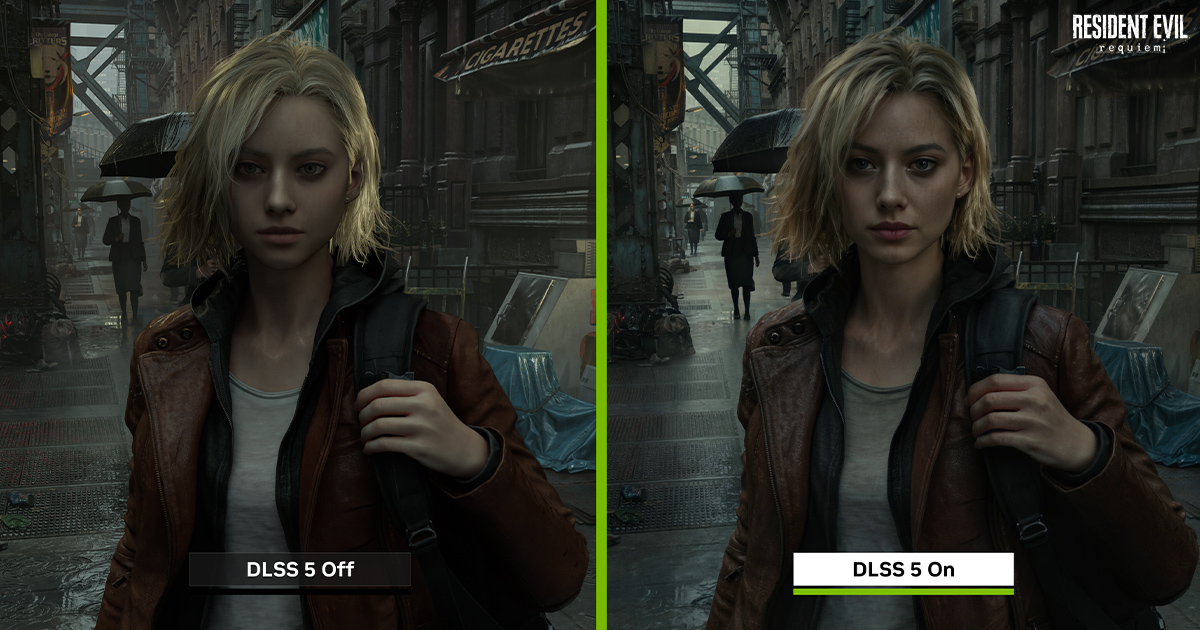

It doesn't explain the eye shape itself and the jawline. Your argument would be better off if you simply said 'this tech is still early in development so some things might appear altered'.

just so you know you're going to be taking out a mortgage on that PC just to subsidize the sloppification machine

Wtf are you talking about?

I'd sell my testicles to play Black Myth Wukong 2 with this tech

I'm starting to think a huge number of people's brains have been literally broken by some sort of psyop into having a Pavlovian reaction to anything AI that literally stops their brains working and forces the tiresome "ahhregh ai slop, slop slop" to just slop out of their mouths.

It's all very strange.

I'm not sure what to tell you, it's the same model. The only differences are in the lighting and the texture.

It's wild. You see it everywhere, in unison, on Reddit, X, forums, YouTube comments, you name it. It's like some sort of instantaneous scripted response. Any mention of AI and they'll swarm to it like bees on honey while screaming slop slop slop slop slop until they pass out.

it's so funny how people here ACTUALLY think that Starfield looked better with DLSS5 on.

fuck you

The undefeated champion of having the best posts on gaf. Lmao

Of course I have a post for that

Post in thread 'Watching people pretend like they're being deprived of hot women in good video games nowadays is kinda weird to me' https://www.neogaf.com/threads/watc...s-is-kinda-weird-to-me.1691812/post-271139145

You jest but if this is the way forward then maybe I won't be needing it. Tons of cool games already in existence to spend my time playing AI slop.

but maybe I don't need a new gaming PC

PC Gamer is already complaining it makes women more attractive. They call it "AI beauty standards". Hahaha

In the old days they could do this with something called Brightness.

This is disingenuous. Zoom in.

It's not just her mouth, her eyes are also larger and shaped differently and her jawline has changed as well.

This is the most amazing tech we've seen in god knows how long. Just look at the differences with Starfield, FIFA, and AC Shadows. It's unbelievable. I'm hoping devs and Nvidia as well don't shelve this based on the robotic slop spam response from the crowd.

a bit annoying how proprietary it is now, but I could see this gradually going into open source tooling for devs to compile their own models, allowing a lot of dev-side customization into your model, tuning on your specific art styles, etc.

And Nvidia will be the gatekeeper and overlord that everyone will have to conform to.

And as predicted people are upset

NVIDIA DLSS 5 Delivers AI-Powered Breakthrough In Visual Fidelity For Games

NVIDIA DLSS 5 infuses pixels with photorealistic lighting and materials to bridge the gap between rendering and reality.
www.nvidia.com

Damn Mr Huang did it again!

Yah it looks great particularly in motion. Is it without issue? No, but this is an early instance of this tech, you'd have to be blind to not see its potential.

tinfoil

Not quite. You're including environment information in the fundamentals of a character. For context purposes, the AI can distinguish between the person and the environmental impact on that person, such as lighting, rain, wind, and occlusion. The model and its textures and materials don't change; effects are simply applied to those models and textures. The AI contextualises all of this information when creating its output. It might be worth highlighting that DLSS5 isn't a blind filter being applied to a static frame. The AI filter requires "hooks", such as model and texture info, motion vectors, lighting structures, and so on, to actually work. Inside, the AI knows who Grace is, what the lamp post is, what the NPC is, and so on. It's why they showed it off on mostly modern games: games that have those hooks in place for them to tap into. You can't run DLSS5 on a flat image of DOOM (1993) and get DOOM (2016) because DOOM (1993) doesn't have those hooks to feed the AI.

I don't know man. You can give an AI a prompt multiple times and the result won't always be the same. Could imagine being the same case here, especially since "as the underlying information for a given object doesn't change" is just impossible. Characters move around, the lighting changes, the weather changes, fog, shadows, particles near them and what-not. Keeping those AI reconstructions stable must be an engineering nightmare.

The real question: can you run everything at low settings and then get this coat of paint? Would it be greatly diminished?

Imagine being ok with the company that made that now being promoted to same exact level as the studios that have cultivated the industry's best artists for decades, all thanks to a toggle. Except the output of both studios now looks like AI porn.

the retard dragon with his wonderful takes once again)))