xool

Member

Splitting the CPU and GPU seems OK, but you are still left with a massive GPU die area. [The GPU is something like 75% of APU die space anyway, and the point of splitting is to reduce die area to increase yields, so the GPU itself would need to be split further.]

How come chiplets are an advantage when it's about CPUs, but suddenly not the right thing when it's about APUs?
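To put rough numbers on the die-area/yield point, here's a minimal sketch using the simple Poisson yield model, Y = exp(-A * D0). The defect density and the 400 mm² APU size are made-up illustrative assumptions, not real figures for any product; only the ~75% GPU share comes from the estimate above.

```python
import math

def poisson_yield(area_mm2, defects_per_cm2):
    """Fraction of good dies under the simple Poisson model Y = exp(-A * D0)."""
    area_cm2 = area_mm2 / 100.0
    return math.exp(-area_cm2 * defects_per_cm2)

# Illustrative assumptions only (hypothetical values).
D0 = 0.2            # defects per cm^2
APU_AREA = 400.0    # mm^2, monolithic APU
GPU_SHARE = 0.75    # GPU taken as ~75% of APU die space

gpu_area = APU_AREA * GPU_SHARE
cpu_area = APU_AREA - gpu_area

candidates = {
    "monolithic APU": APU_AREA,
    "CPU chiplet": cpu_area,
    "GPU chiplet (unsplit)": gpu_area,
    "GPUlet (GPU split in 2)": gpu_area / 2,
}

for name, area in candidates.items():
    print(f"{name:26s} {area:6.1f} mm^2  yield ~ {poisson_yield(area, D0):.2f}")
```

Under this model, moving the CPU off-die helps only a little, because the unsplit GPU die is still most of the area; the bigger yield gain comes from cutting the GPU itself into smaller pieces.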

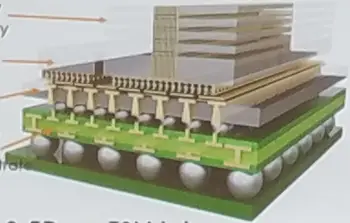

The issue, I think, is splitting the GPU into smaller GPUlets: rather than sharing a large local (on-chip) memory pool and a huge register set, the GPUlets become mostly separate devices. Cons:

- How will they divide the rendering task? E.g. like Crossfire?

- Split the screen into areas per GPUlet?

- Increased bandwidth: textures/geometry will often need to be loaded twice when they overlap the GPUlets' screen areas
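A rough way to see the bandwidth con: split the screen into vertical strips, one per GPUlet, and count how many strips each draw's screen-space bounding box touches. This is only a toy sketch; `Rect`, `split_screen` and `duplication_factor` are hypothetical names, the draw list is invented, and a real GPU bins work in a far more involved way.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Rect:
    # Half-open screen-space box: [x0, x1) x [y0, y1)
    x0: int
    y0: int
    x1: int
    y1: int

    def overlaps(self, other):
        return (self.x0 < other.x1 and other.x0 < self.x1 and
                self.y0 < other.y1 and other.y0 < self.y1)

def split_screen(width, height, n_gpulets):
    """Divide the screen into vertical strips, one per GPUlet."""
    edges = [width * i // n_gpulets for i in range(n_gpulets + 1)]
    return [Rect(edges[i], 0, edges[i + 1], height) for i in range(n_gpulets)]

def duplication_factor(regions, draws):
    """draws: list of (bounding Rect, asset size).
    Returns total data loaded across all GPUlets divided by the data a
    single GPU would load (1.0 means no duplication)."""
    loaded = 0
    unique = 0
    for bounds, size in draws:
        touched = sum(1 for region in regions if bounds.overlaps(region))
        if touched:
            unique += size
            loaded += size * touched  # each touched GPUlet loads its own copy
    return loaded / unique if unique else 1.0

# Invented example: 1920x1080 screen, two GPUlets, three draws.
regions = split_screen(1920, 1080, 2)
draws = [
    (Rect(100, 100, 300, 300), 10),    # entirely in the left strip
    (Rect(900, 200, 1100, 400), 20),   # straddles the split
    (Rect(1500, 0, 1700, 200), 10),    # entirely in the right strip
]
print(duplication_factor(regions, draws))
```

In this toy case only the draw straddling the split gets loaded twice; the more GPUlets (and the more large objects crossing region boundaries), the higher the factor goes.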

[Edit: maybe they could make the split at the Shader Engine level. That would only require duplicating the Global Data Share and the L2 caches, which seems OK?]
