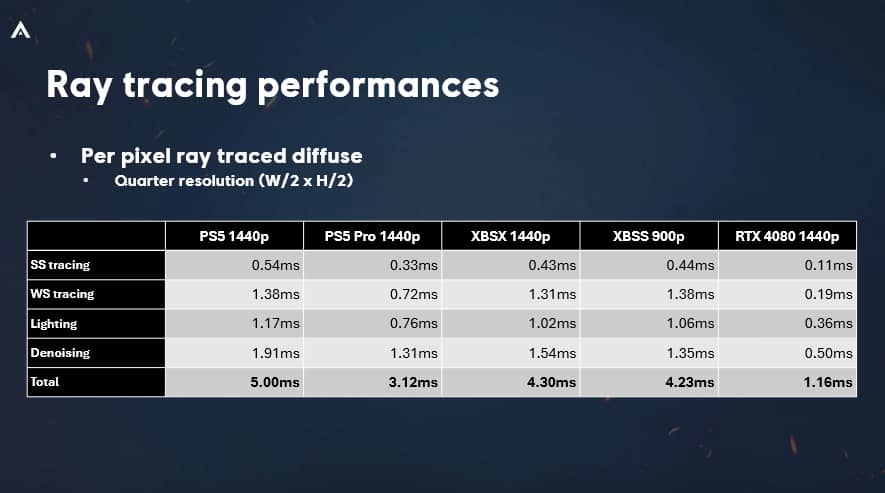

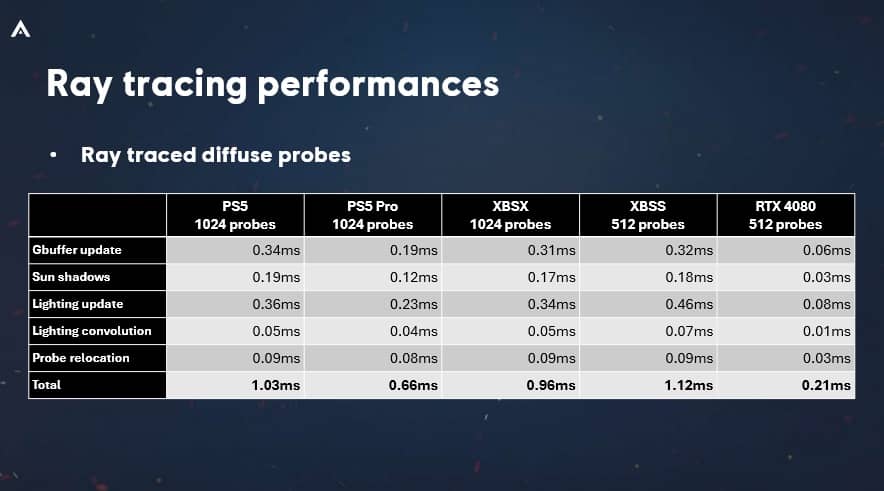

I still don't understand how they managed to design such an unbalanced console that they somehow crippled the main strength of the new architecture, to the point where it's a marginal .5/.6x improvement, when we know RDNA 4 is AMD's most significant leap in real-time RT. I mean, you have to actually put in effort to gimp things this badly. I would legit like to know from a tech expert like KeplerL2: what is the main bottleneck?

Even going by Sony's own, extremely conservative marketing material, where RT was the only real wow point at 3-4x RT performance, it isn't that. The only way I can rationalize it is that they neutered their premium console so they could keep RDNA 2 as the base, taking the easy route to avoid any compatibility headaches. Even the PS4 Pro, which was bandwidth-starved, retained all of the benefits of its architectural leaps and even punched above its weight thanks to its loaded ROPs.

I remember being disappointed when Heisenberg told us it was around a 4070 [yes, I know he was later told it was CoD], and I was disappointed looking back at the Xbox One X (now that was a real pro console), but damn, Cerny, wtf happened? I mean, I can hold out unsubstantiated hope that this particular workload just performed below expectations on their engine, but let's be real...