Trogdor1123

Gold Member

surely people don't expect more than 32 gigs of ram in the next unit, right?

So you want them to fuck up next gen with 24GB of RAM?

Same for Nvidia. In fact they are first.

The first desktop GPUs with 3GB modules launch in Q4 2025. Do you really expect modules twice that size in 2027? I expect 4GB to be the latest and greatest in 2027.
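For a rough sense of how module size maps to total capacity, here is a minimal sketch. It assumes one memory chip per 32-bit slice of the bus and a hypothetical 256-bit bus; neither figure comes from the leak or this thread, and `total_capacity_gb` is just an illustrative helper.

```python
# Assumption (not from the thread): each GDDR chip occupies a 32-bit slice of the bus.
CHIP_INTERFACE_BITS = 32


def total_capacity_gb(bus_width_bits: int, module_gb: int, clamshell: bool = False) -> int:
    """Total memory in GB for a given bus width and per-chip capacity.

    clamshell=True models two chips sharing each 32-bit slice,
    which doubles the chip count without widening the bus.
    """
    if bus_width_bits % CHIP_INTERFACE_BITS != 0:
        raise ValueError("bus width must be a multiple of 32 bits")
    chips = bus_width_bits // CHIP_INTERFACE_BITS
    if clamshell:
        chips *= 2
    return chips * module_gb


# Hypothetical 256-bit bus, i.e. 8 chips
for module_gb in (2, 3, 4):
    print(f"{module_gb}GB modules: {total_capacity_gb(256, module_gb)}GB total")
```

Under those assumptions, 2GB, 3GB and 4GB modules give 16GB, 24GB and 32GB, which is why the 24GB vs 32GB argument mostly comes down to which module size is affordable at launch.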

Yeah, they won't split RAM like that.

So you want them to fuck up next gen with 24GB of RAM?

We are already going to be held back by the handheld anyway, so I guess 24GB would work.

> surely people don't expect more than 32 gigs of ram in the next unit, right?

I don't expect more than 18GB myself. 32 seems a pipe dream for a dedicated console, especially with a dedicated APU sharing that load.

> I don't expect more than 18GB myself. 32 seems a pipe dream for a dedicated console, especially with a dedicated APU sharing that load.

You guys are ignoring parts of the leak.

> You guys are ignoring parts of the leak.

Maybe you're right, but in the context of video games I equate AI to frame generation, upscaling, and image processing, not logic and workflow. I could be wrong, but the problem is going to be cost. Adding all this RAM and AI is costly, and they can't make a $1000 console and expect to sell millions of units a year.

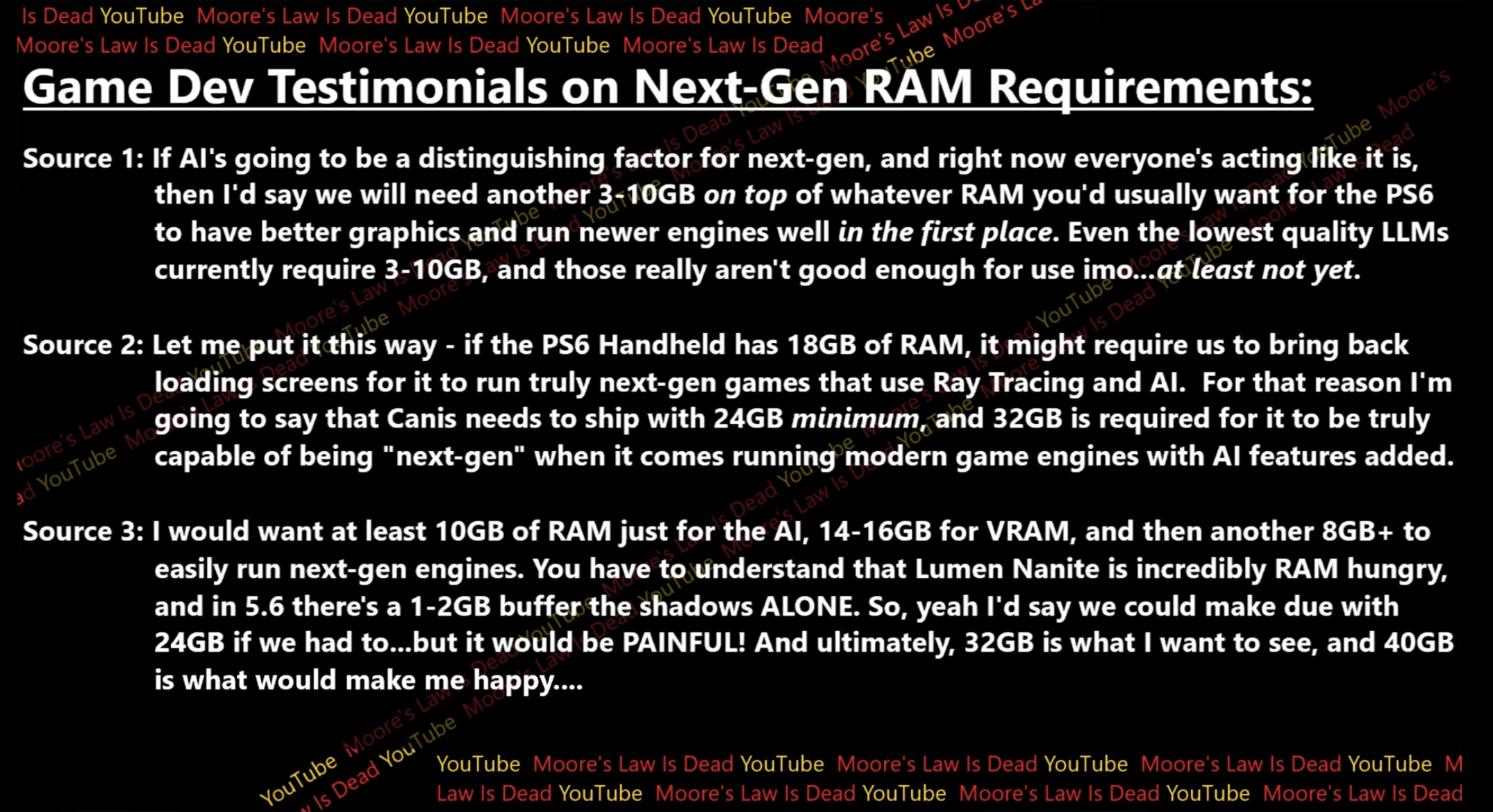

If the PS6 is A.I. heavy (not the AI upscaling portion), it needs more RAM than normal.

It's not like Sony hasn't been exploring and pushing A.I. in games beyond just upscaling.

40GB for PS6 and 32GB for a 1080p handheld is a laughable assumption. There's zero chance they will come with that, nor will they need that much. Very few games (if any at all) need more than 16GB at native 4K. The PS6 won't even be a native 4K console and will be pushing the latest FSR heavily.

Regarding LLMs, I think this will be a next-next-gen thing. There's no need to push AI this heavily. Nvidia will probably lead the charge in the coming years, but it will be super janky and uncanny and won't hit full stride until the end of the PS6 generation. AMD can play catch-up when the time comes.

I'm betting the PS6 will have 24GB, with 18-20GB reserved for games (more than enough imo).

> Maybe you're right, but in the context of video games I equate AI to frame generation, upscaling, and image processing, not logic and workflow. I could be wrong, but the problem is going to be cost. Adding all this RAM and AI is costly, and they can't make a $1000 console and expect to sell millions of units a year.

Did you not see MLiD's cost breakdown?

Also, RAM has always been an issue for developers on consoles. There is never enough.

> You guys are ignoring parts of the leak.
>
> If the PS6 is A.I. heavy (not the AI upscaling portion), it needs more RAM than normal.

I call it bullshit, as there is no point in running an LLM locally on a console. The practical tasks a gaming console could run require something like 1-3% of that space. The DLSS4 transformer model (i.e. the same base architecture as an LLM, but tailored to a specific task) is 300MB, not 3-10GB.

It's a HUGE waste of resources and could and should be put into the cloud portion.
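The size argument above is easy to sanity-check with the numbers quoted in the post (~300MB for the DLSS4 transformer model, 3-10GB for the leaked AI budget). The 24GB and 32GB totals are the figures being debated in this thread, not confirmed specs, and `share_percent` is just an illustrative helper.

```python
def share_percent(model_gb: float, pool_gb: float) -> float:
    """Return a model's size as a percentage of a memory pool."""
    if pool_gb <= 0:
        raise ValueError("pool_gb must be positive")
    return 100.0 * model_gb / pool_gb


DLSS4_MODEL_GB = 0.3  # ~300MB, as quoted in the post

# Pools to compare against: the leaked AI budget range and the rumored totals
pools = [
    ("3GB AI budget", 3),
    ("10GB AI budget", 10),
    ("24GB total", 24),
    ("32GB total", 32),
]

for label, pool_gb in pools:
    print(f"{label}: {share_percent(DLSS4_MODEL_GB, pool_gb):.1f}%")
```

So a 300MB model is roughly 1% of a 24-32GB pool and 3-10% of the 3-10GB budget; which reading of "1-3%" applies depends on which pool the poster had in mind.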

> It's not like Sony hasn't been exploring and pushing A.I. in games beyond just upscaling.

GT Sophy is a horrible example: it's a model that has already been trained, not some massive LLM running locally with large VRAM requirements. Additionally, the same Sophy AI runs fine on the base PS5.

Gran Turismo Sophy is a good example.

I suspect Sony would push this even further with the PS6 generation.

But what many fail to understand is that next gen isn't just about PS5 fidelity at higher resolutions and frame rates. Devs would go for higher fidelity regardless.

Even without LLMs, 24GB isn't enough. The PS5 Pro for example had to increase to 18GB.

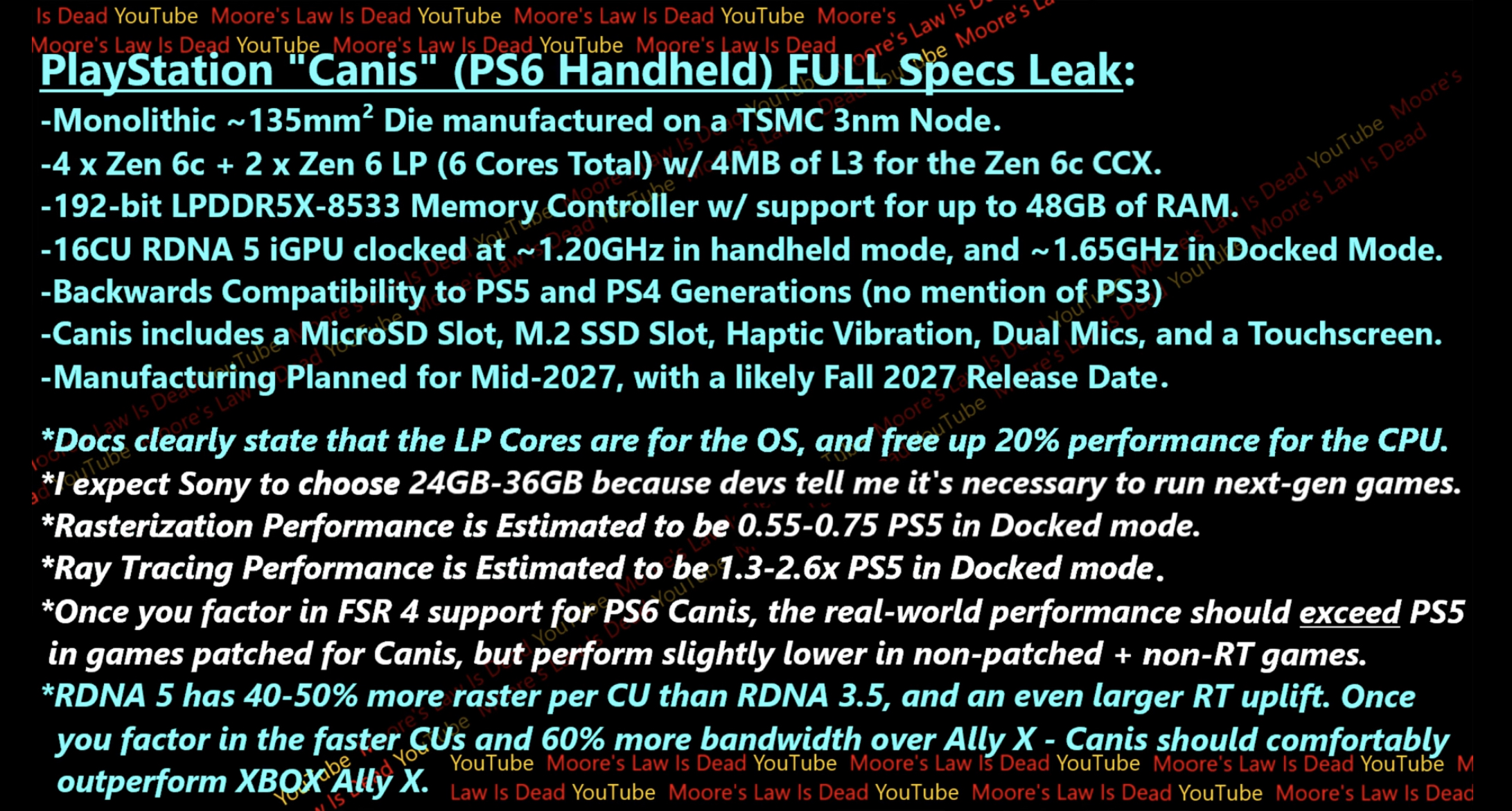

That will be Medusa Premium with 24 CU RDNA5, though it will have the same issue as current Z1E/Z2E handhelds: it just uses an off-the-shelf laptop APU, which means running at 28W (or higher) to get optimal performance. Canis is a proper handheld APU that runs optimally at 15W.

WTF is this 3-10GB AI requirement? It sounds like invented bullshit. Why would a console need to run full-scale, general-purpose, shit-quality LLMs and not just tailored models like DLSS4, which takes ~300MB at 4K?

> GT Sophy is a horrible example: it's a model that has already been trained, not some massive LLM running locally with large VRAM requirements. Additionally, the same Sophy AI runs fine on the base PS5.

I used GT Sophy to say Sony was already very interested in AI in games back then, so now they'll go further than that and use LLMs in the PS6 generation.

When I think about game design and how LLMs will be integrated in future use cases (e.g. NPC conversation), I see much smaller, more specific models that can be loaded in and out of a limited pool of VRAM at will, depending on the context or scene. Having a large VRAM/unified RAM pool in a consumer-grade device meant for mass consumption is not feasible from a BOM standpoint.

Predicting:

PS6 Portable 16-24 GB unified

PS6 24-32 GB unified

It's going to be crazy when Nvidia enters the ring around this time next year. Probably just laptops to start with... but what will it look like in 3 to 5 years?

> I call it bullshit, as there is no point in running an LLM locally on a console. The practical tasks a gaming console could run require something like 1-3% of that space. The DLSS4 transformer model (i.e. the same base architecture as an LLM, but tailored to a specific task) is 300MB, not 3-10GB.
>
> It's a HUGE waste of resources and could and should be put into the cloud portion.

Putting it in the cloud would introduce latency issues.

Specialized LLMs (transformers) are small, and there is no point in putting a general-purpose "jack of all trades, master of none" LLM on a console. You don't need a game to chat about Amazonian butterflies; it's a completely stupid waste of resources.

edit - btw, running a single query on a 10GB LLM will take at least 5-10 sec at full load, making it inapplicable to ~any~ gaming purpose

> With ps5 sales dropping a little aggressively half way ish into the gen, I wonder if they are dropping in 2026 instead or early 2027.

We're already in the last third of the generation, not sure if I'd call that 'half way ish'.

> With ps5 sales dropping a little aggressively half way ish into the gen, I wonder if they are dropping in 2026 instead or early 2027.

Can they easily just force the assembly line for "cutting edge tech" to happen one year earlier just because they want to? Sounds like a recipe for failure rate disasters.

> With ps5 sales dropping a little aggressively half way ish into the gen, I wonder if they are dropping in 2026 instead or early 2027.

That's a rare combination of words

> That's a rare combination of words

Maybe he meant "dropping modestly."

> The same delusions from the Windows Phone era, that Microsoft's magical and mysterious software would make everything run on Windows Phone, PC, and Xbox without needing a port.

You really like this Windows Phone comparison (I've seen you bring it up in every conversation surrounding Xbox's next platform). I don't think it's as good an analogy as you do, but of course, you are allowed to have opinions.

Account created in 2018 that only became active in 2025.

Don't you Xbox users ever get tired? Don't you have anything to do with your life?

> Putting it in the cloud would introduce latency issues.

As if running it locally would not introduce them. The amount of computation required for a 10B LLM puts it way out of the console hardware tier.

> For me, next gen should be more than just running PS5 games at a high resolution and frame rate.

It should be first and foremost resolution, fps, effects and other **practical** stuff.

> LLMs enhance NPC intelligence: dynamic dialogue, emergent behaviors, contextual awareness, and story generation.

Nobody cares about this shit.

> Memory & compute implications: NPC-driven LLMs consume context buffers, inference buffers, and weight memory, feeding the AI pipelines for real-time behavior.

Even for this shit you don't fucking need an LLM. You need a transformer trained for a specific task in a specific environment. Why the fuck should your LLM be able to talk about Amazonian butterflies, the Fourier transform, or RNA replication when the game is a dark fantasy about a dying world? It doesn't need 99% of those topics or the weights related to them. Get it distilled and trimmed and it'll be DLSS4-sized, fit for its specific task and knowledge.

This makes AI-heavy games feel alive and reactive, rather than static or scripted. But I get it if you would rather continue playing static and scripted games just because you want the console to be $50 - $100 cheaper.

I swear I'm the only one that wants a proper next gen leap on this forum.

> I used GT Sophy to say Sony was already very interested in AI in games back then, so now they'll go further than that and use LLMs in the PS6 generation.

Did you not read my post?

So why are you still predicting up to 32GB?

> Does this mean PS6 is mid or is Sony going to have two kinda-close spec targets for the next 10 years? Because I'd bet a lot of third-party PS6 games could run on either PSP3 or PS6, but you don't want to limit that first party development to having to make their games run on the PSP3.

Possibly a scaling technology that doesn't obligate developers to create two different versions or downgrade a specific game. That obligation is one of the main culprits for bad optimization this generation, especially in multiplatform games (in the case of the Xbox Series S), along with developers not maximizing the full potential of specific hardware.

> Sony always following Nintendo, no shit... The good thing is that probably many people will eat crow since it won't be much more powerful than Switch 2... Hardware being this powerful at most for a while is good imo, it gives devs time to polish their tools and start over.

The Nintendo brainrot is so strong. Open a window and get some fresh air, your brain has gone stale.

> What if it's 12-16GB of cheap, slower DDR5 and 16-20GB of faster GDDR7 simply for cost reduction?

I really hope not. That will just complicate development, potentially make shrinking the system tougher, and make the whole thing more clunky.

> You guys are ignoring parts of the leak.
>
> If the PS6 is A.I. heavy (not the AI upscaling portion), it needs more RAM than normal.

Nothing has enough performance to run next-gen games and an LLM at the same time so this argument is irrelevant.

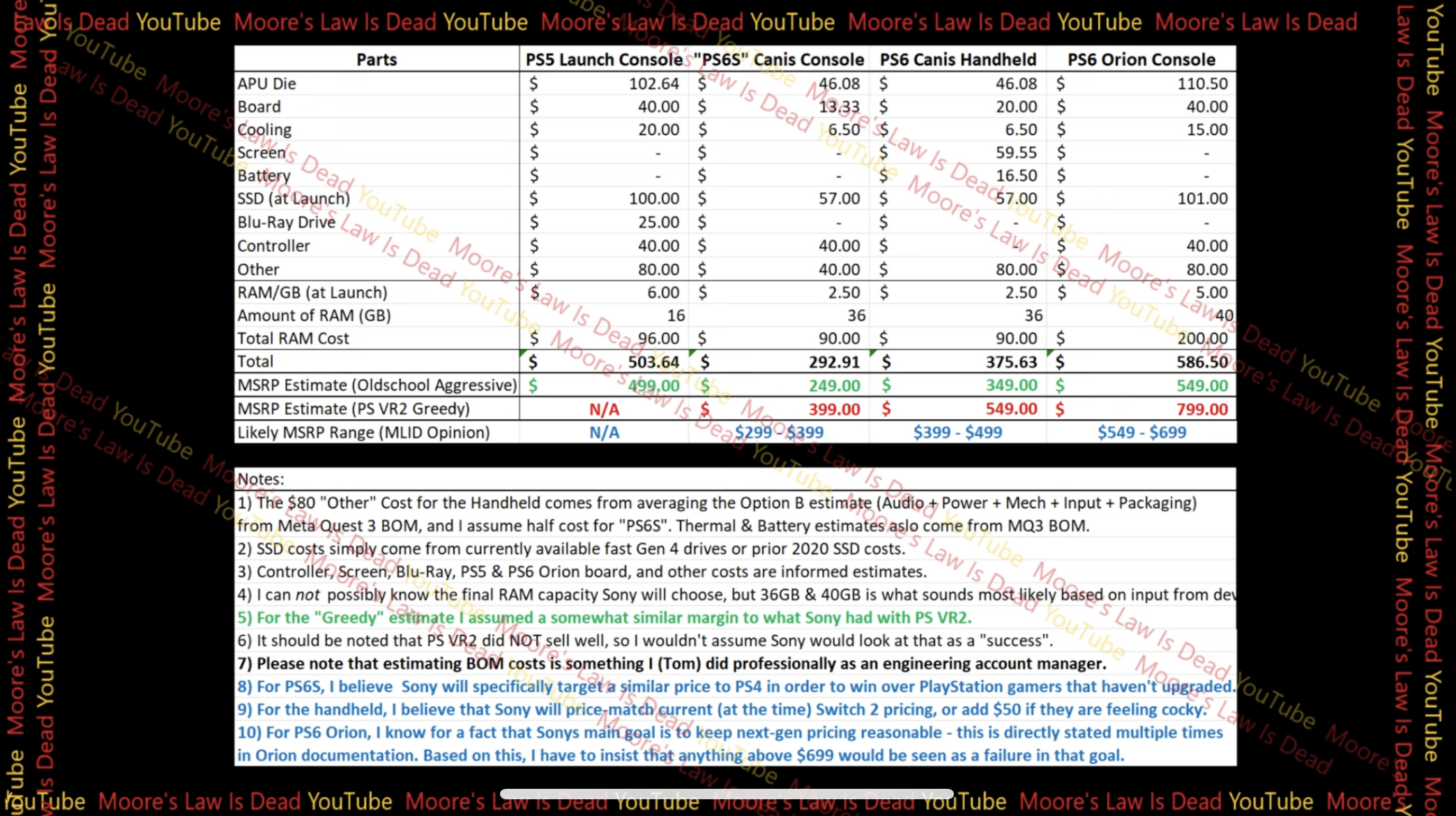

Pictures, specs, and the estimated cost of the powerful APU inside PlayStation 6 "S"!

Chapters:

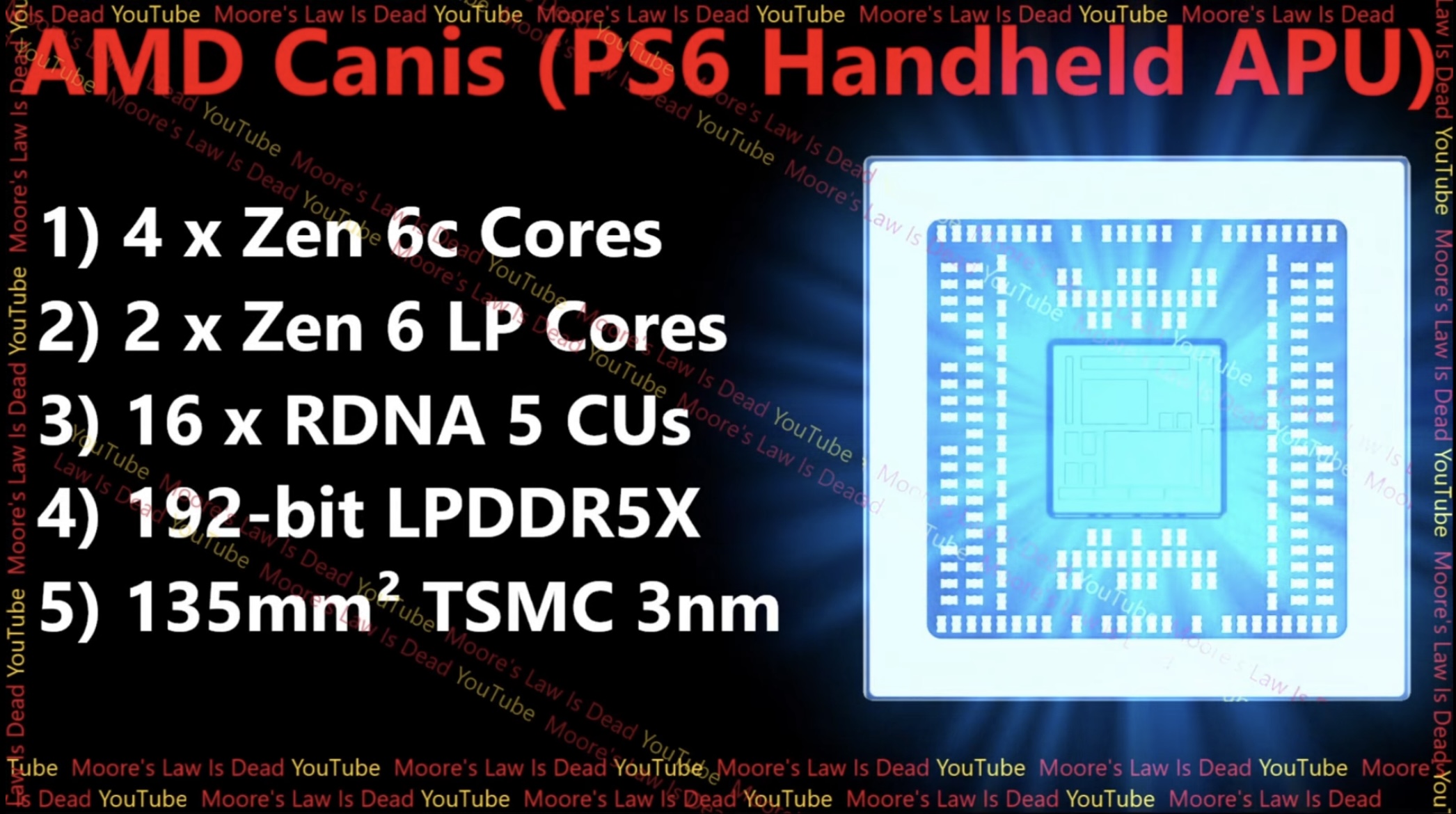

0:00 Pictures of AMD Canis based on Dimensions & Specs!

1:02 PS6 Handheld Specs Overview

2:51 BEWARE of FAKE Rumors

4:37 Sony MASSIVELY upgraded the Specs!

5:29 PS6 Canis & PS6 Orion -Cost Estimate

12:30 This wasn't Easy! Subscribe & Join Patreon!!!

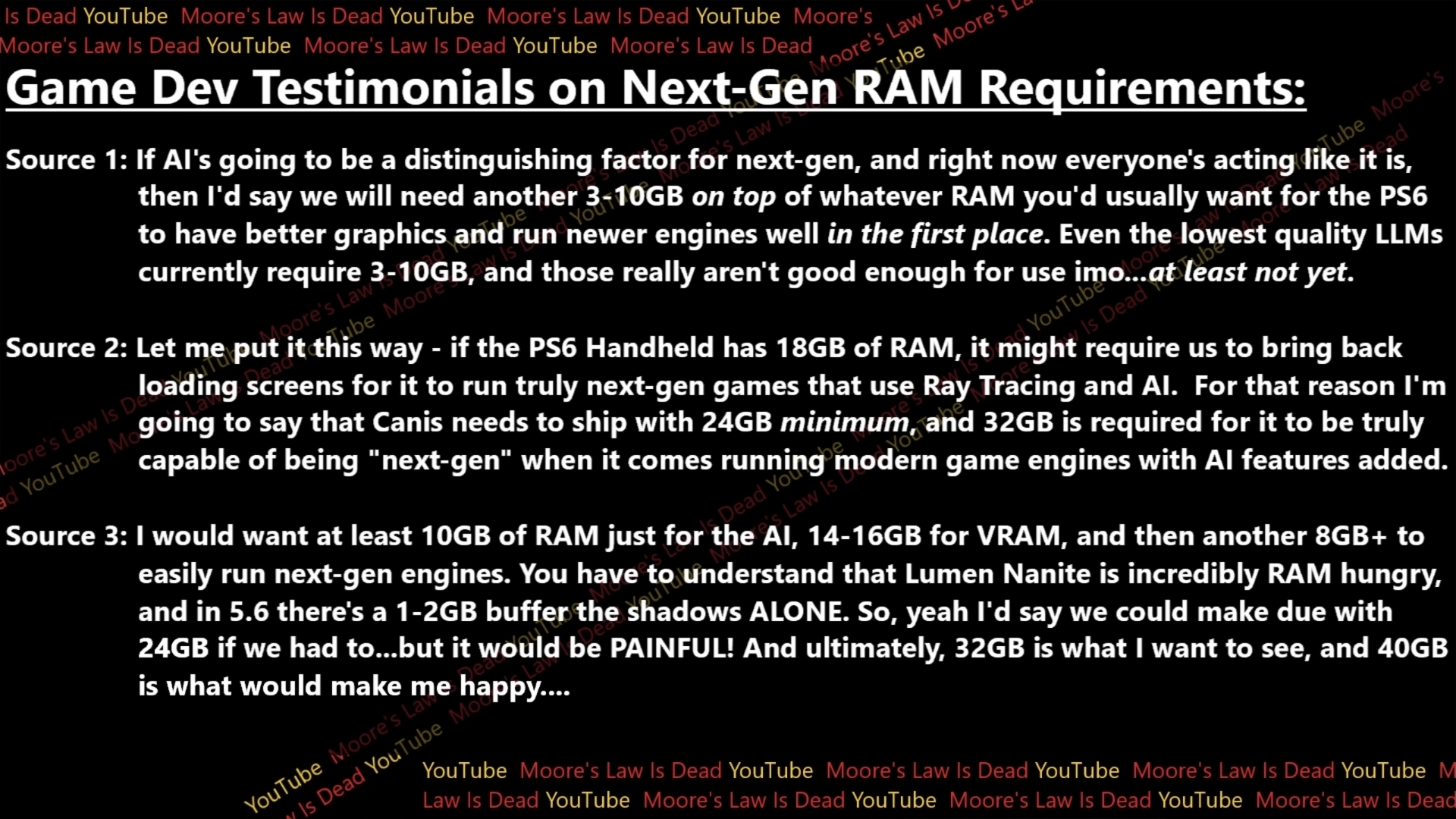

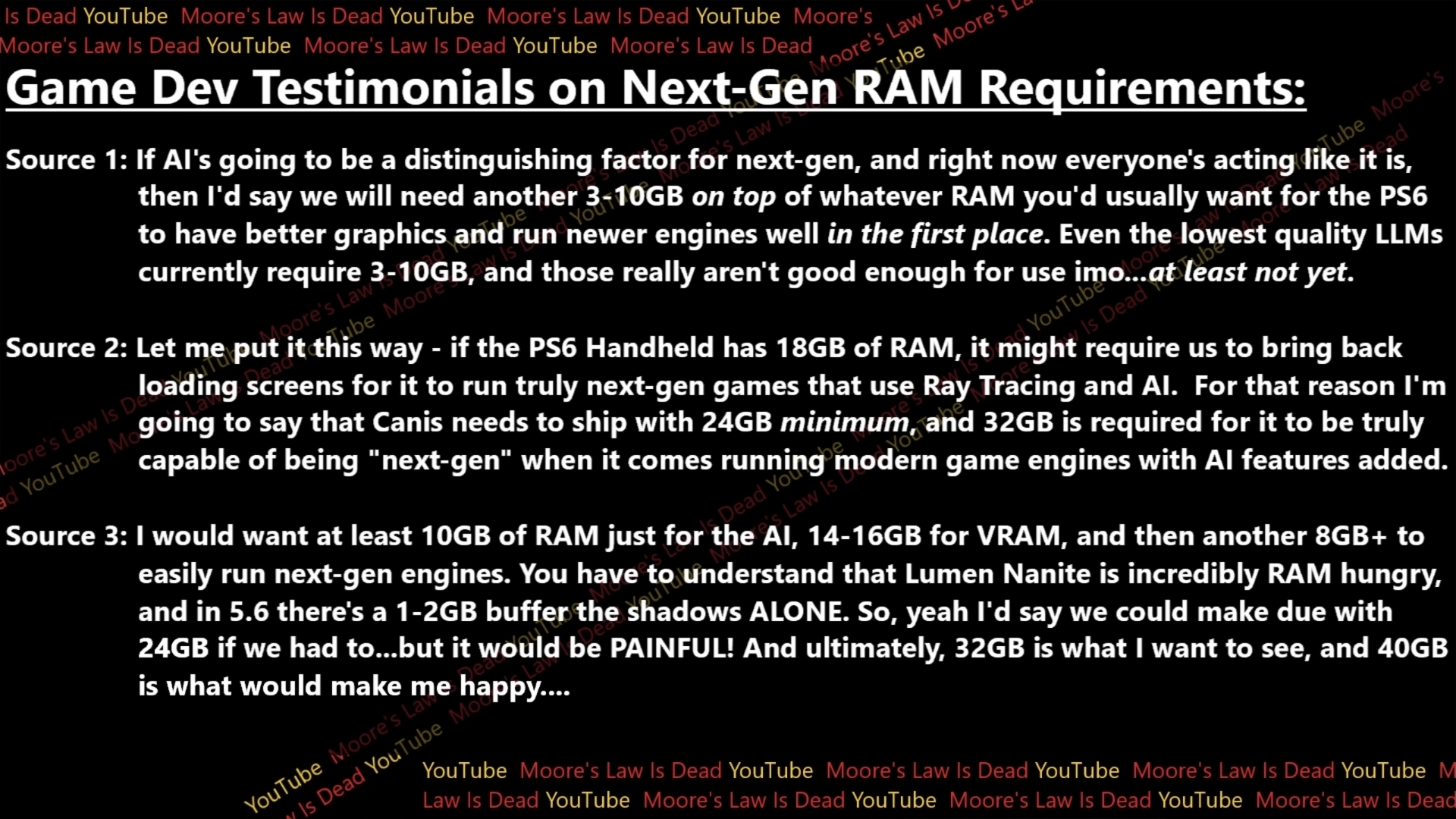

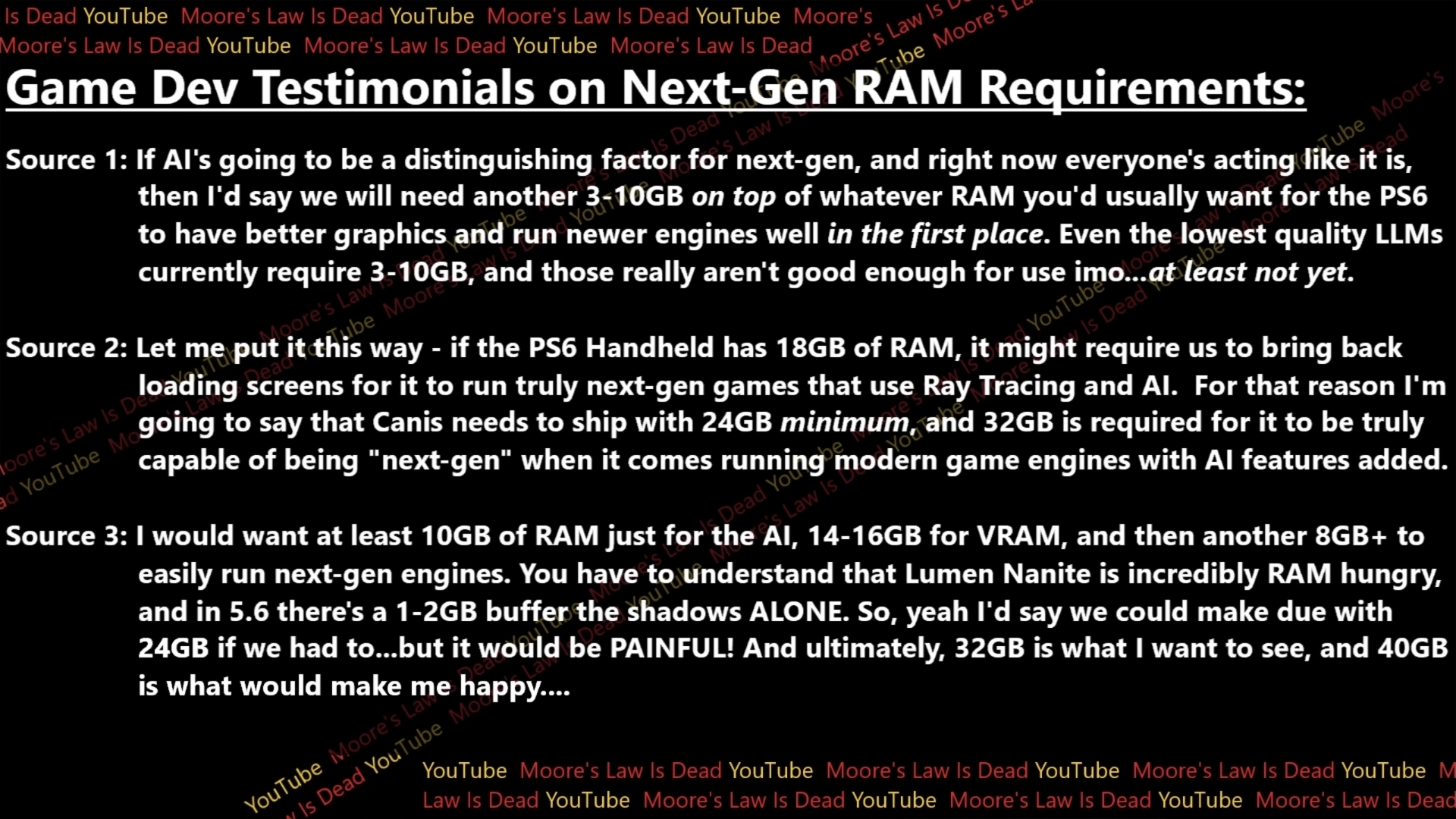

12:30 Game Dev Testimonials on Required RAM

17:57 PS6S Performance & Full Specs Leak

25:30 Sony wants PS4 Gamers to UPGRADE

PS6 "Consoles" estimated cost:-

Anonymous Game Dev Testimonials on Next-Gen RAM Requirements:-

> What would be the rough price estimate for this thing?

$500 minimum, so slightly more expensive than Switch 2.

> "Renowned leakers KeplerL2 and HeisenbergFX4 have confirmed that Lisa Su and Sarah Bond are full of shit and just piling all products and features together to create a utopian impression of the platform's future and their future stocks"

To be fair MS says a lot of things and then goes back on them.

> Nothing has enough performance to run next-gen games and an LLM at the same time so this argument is irrelevant.

I'm just going by what he's leaking.

> Nothing has enough performance to run next-gen games and an LLM at the same time so this argument is irrelevant.

I brought up this patent a few weeks ago as an example of ML potentially being used to do more than just upscaling on hardware.

tech4gamers.com

At what price?

> Possibly a scaling technology that doesn't obligate developers to create two different versions or downgrade a specific game. That obligation is one of the main culprits for bad optimization this generation, especially in multiplatform games (in the case of the Xbox Series S), along with developers not maximizing the full potential of specific hardware.

Secret sauce strikes again

> The way I see it, first there were pixels, then vectors, and now AI vectors (GAN texture model). Pixels, vectors, latents: each step is a higher-order abstraction, pointing toward generative scene reconstruction. I mean, the way we are headed you could see AI reconstructing entire scenes based on a design language matched to a trained model, but to begin with you have texture reconstruction as covered in the patent. LLMs are just a single possible use case of on-board ML capability. Anyway, don't get hung up on this coming generation having an LLM focus. I suspect that's either going to be cloud based for now and/or on board in the following generation.

LLMs are big because they are general purpose and try to store all of humanity's data. There is no need for that in tailored AI designed for specific tasks, which makes those models much smaller and faster.

> Secret sauce strikes again
>
> Tools (tm) might help but there will be no automatic scaling down technology (and devs prefer to scale down and not scale up), so testing, profiling, and adjustment will still be required.

Yes there is. A wrapper. Same thing they do with backwards compatibility and why you can play a 360 game at 4K. In this case it'll be the opposite: divide the original resolution.